HDFS HA HADOOP集群部署

1.集群环境节点分布

JournalNode: bigdatasvr01 ,

bigdatasvr02

,bigdatasvr03

namenode: bigdatasvr02(active),bigdatasvr03(standby)

datanode: bigdatasvr01, bigdatasvr03

namenode: bigdatasvr02(active),bigdatasvr03(standby)

datanode: bigdatasvr01, bigdatasvr03

nodemanager: bigdatasvr01, bigdatasvr03

ResourceManager: bigdatasvr02

2.修改主机名

3.设置免密码登录

每台机器上都执行命令:

ssh-keygen -t rsa -P ''

将

bigdatasvr02

的公钥拷贝到

bigdatasvr01 ,

bigdatasvr03上

ssh-copy-id hadoop@bigdatasvr01

ssh-copy-id hadoop@bigdatasvr03

至少要保证bigdatasvr02免密码登录到bigdatasvr01 ,bigdatasvr03上

4.设置环境变量

1.设置JDK环境变量

2.设置hadoop环境变量,在/etc/profile.d下新建一个hadoop.sh:

export HADOOP_HOME=/home/hadoop/hadoop

export HADOOP_COMMON_LIB_NATIVE_DIR=$HADOOP_HOME/lib/native

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

export HADOOP_OPTS="-Djava.library.path=$HADOOP_HOME/lib"

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

使其生效:

source hadoop.sh

5.搭建hadoop集群

用的hadoop是apache原生包hadoop-2.7.1.tar.gz

5.1 修改配置文件

把下面6个文件修改好,然后拷贝到所有节点。

hadoop-env.sh,core-stie.xml,hdfs-site.xml,yarn-site.xml,mapred-site.xml,slaves

5.1.1修改core-stie.xml

<property>

<name>fs.defaultFS</name>

<value>hdfs://bigdatasvr02:9000</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>131072</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>file:/home/hadoop/hadoop/tmp</value>

<description>Abasefor other temporary directories.</description>

</property>5.1.2修改hdfs-site.xml

<property>

<name>dfs.nameservices</name>

<value>hadoopcluster</value>

</property>

<property>

<name>dfs.ha.namenodes.hadoopcluster</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.hadoopcluster.nn1</name>

<value>bigdatasvr02:9000</value>

</property>

<property>

<name>dfs.namenode.rpc-address.hadoopcluster.nn2</name>

<value>bigdatasvr03:9000</value>

</property>

<property>

<name>dfs.namenode.http-address.hadoopcluster.nn1</name>

<value>bigdatasvr02:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.hadoopcluster.nn2</name>

<value>bigdatasvr03:50070</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/home/hadoop/hadoop/ha/hdfs/name</value>

<description>allow multiple directory split by ,</description>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://bigdatasvr01:8485;bigdatasvr02:8485;bigdatasvr03:8485/hadoopcluster</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/home/hadoop/hadoop/ha/hdfs/data</value>

<description>allow multiple directory split by ,</description>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>false</value>

<description>Whether automatic failover is enabled. See the HDFS High

Availability documentation for details on automatic HA configuration.</description>

</property>

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/home/hadoop/hadoop/ha/hdfs/journal</value>

</property>

<property>

<name>dfs.replication</name>

<value>2</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>5.1.3修改mapred-site.xml

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>bigdatasvr03:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>bigdatasvr03:19888</value>

</property>

5.1.4修改yarn-site.xml

<property>

<description>The hostname of the RM.</description>

<name>yarn.resourcemanager.hostname</name>

<value>bigdatasvr02</value>

</property>

<property>

<description>The address of the applications manager interface in the RM.</description>

<name>yarn.resourcemanager.address</name>

<value>${yarn.resourcemanager.hostname}:8032</value>

</property>

<property>

<description>The http address of the RM web application.</description>

<name>yarn.resourcemanager.webapp.address</name>

<value>${yarn.resourcemanager.hostname}:8088</value>

</property>

<property>

<description>The https adddress of the RM web application.</description>

<name>yarn.resourcemanager.webapp.https.address</name>

<value>${yarn.resourcemanager.hostname}:8090</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>${yarn.resourcemanager.hostname}:8031</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>${yarn.resourcemanager.hostname}:8030</value>

</property>

<property>

<description>The address of the RM admin interface.</description>

<name>yarn.resourcemanager.admin.address</name>

<value>${yarn.resourcemanager.hostname}:8033</value>

</property>

<property>

<description>List of directories to store localized files in. An application's localized file directory will be found in:

${yarn.nodemanager.local-dirs}/usercache/${user}/appcache/application_${appid}.

Individual containers' work directories, called container_${contid}, will

be subdirectories of this.</description>

<name>yarn.nodemanager.local-dirs</name>

<value>/home/hadoop/hadoop/ha/yarn/local</value>

</property>

<property>

<description>Whether to enable log aggregation</description>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<description>Where to aggregate logs to.</description>

<name>yarn.nodemanager.remote-app-log-dir</name>

<value>/home/hadoop/logs</value>

</property>

<property>

<description>Number of CPU cores that can be allocated for containers.</description>

<name>yarn.nodemanager.resource.cpu-vcores</name>

<value>4</value>

</property>

<property>

<description>the valid service name should only contain a-zA-Z0-9_ and can not start with numbers</description>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

5.1.5修改slaves

bigdatasvr01

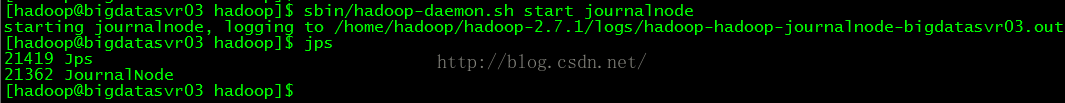

bigdatasvr035.2 启动journalnode(每个节点上都运行)

运行命令:

sbin/hadoop-daemon.sh start

journalnode

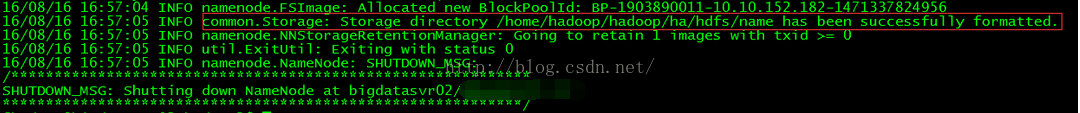

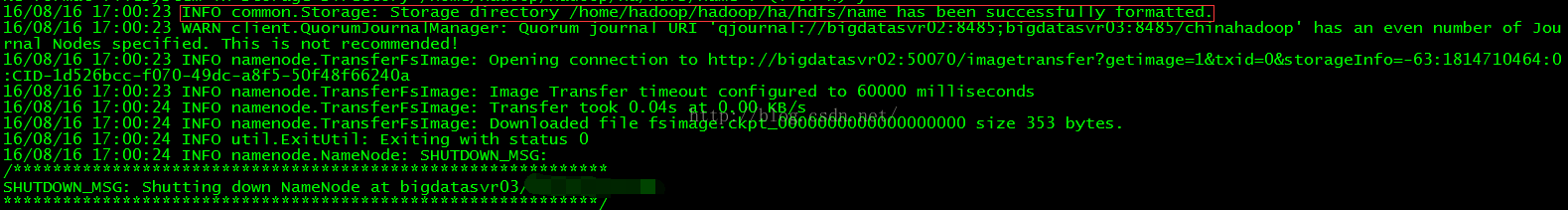

5.3 格式化namenode(nn1)

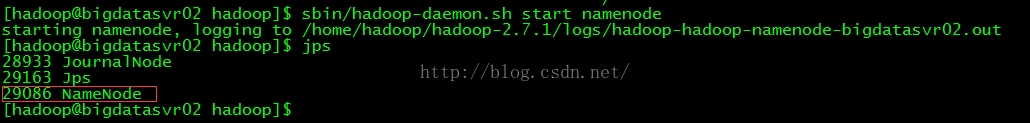

5.4 启动namenode (nn1)

只有当namenode格式化成功之后才能正常启动namenode

在

bigdatasvr02 上运行命令:

sbin/hadoop-daemon.sh start namenode

5.5格式化namenode(nn2)

在

bigdatasvr03 上运行命令:

bin/hdfs namenode -bootstrapStandby

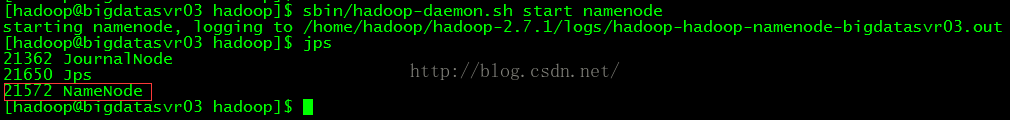

5.6启动namenode (nn2)

在

bigdatasvr03 上运行命令:

sbin/hadoop-daemon.sh start namenode

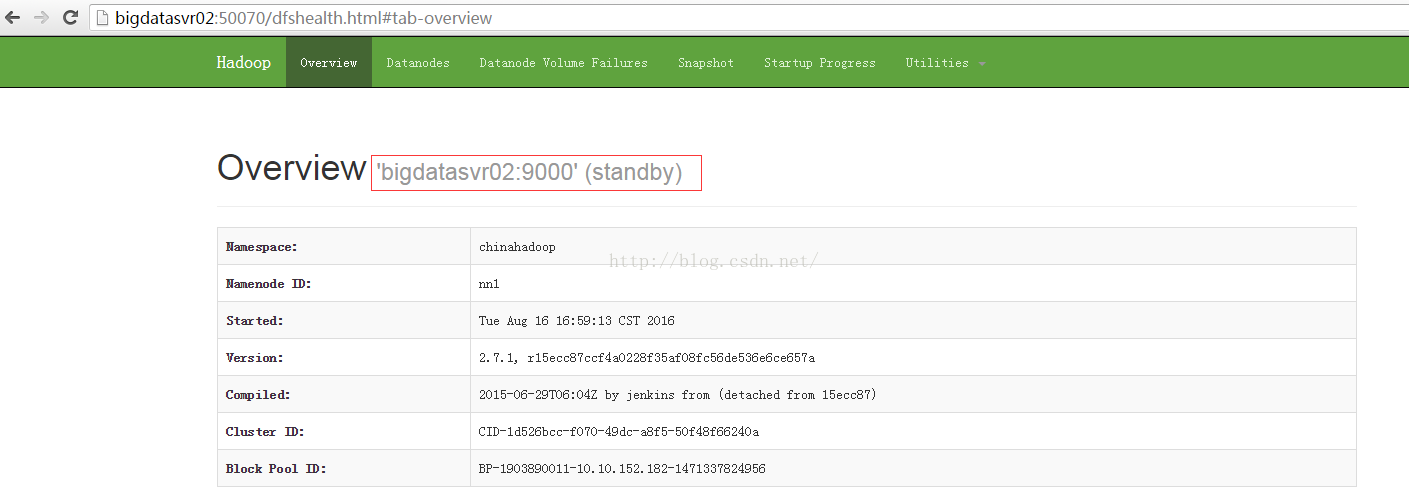

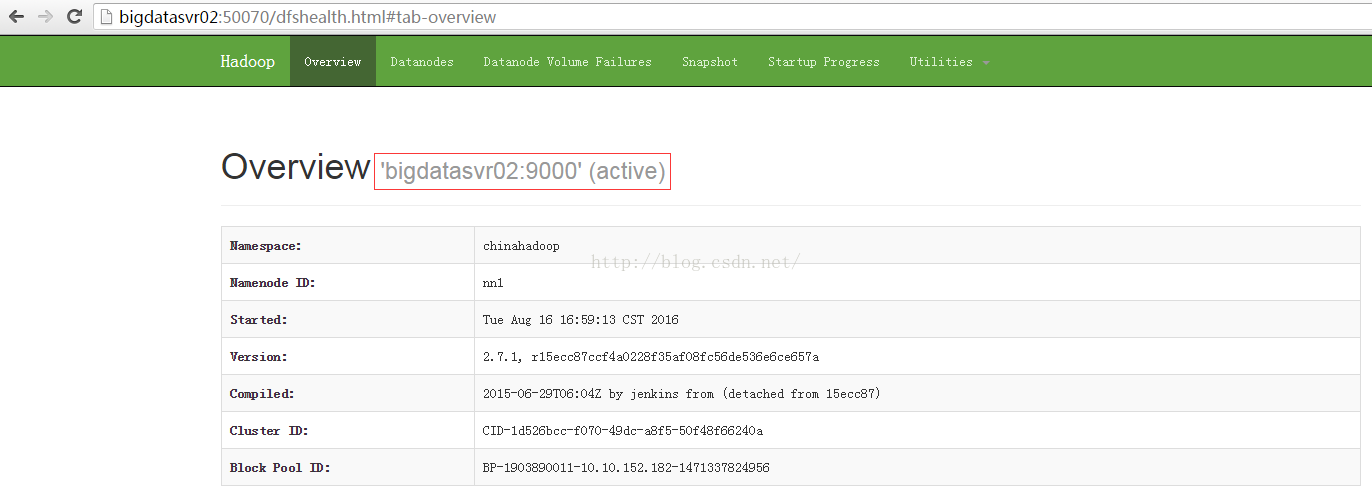

在浏览器上访问http://bigdatasvr02:50070/ 当前是 standby 状态

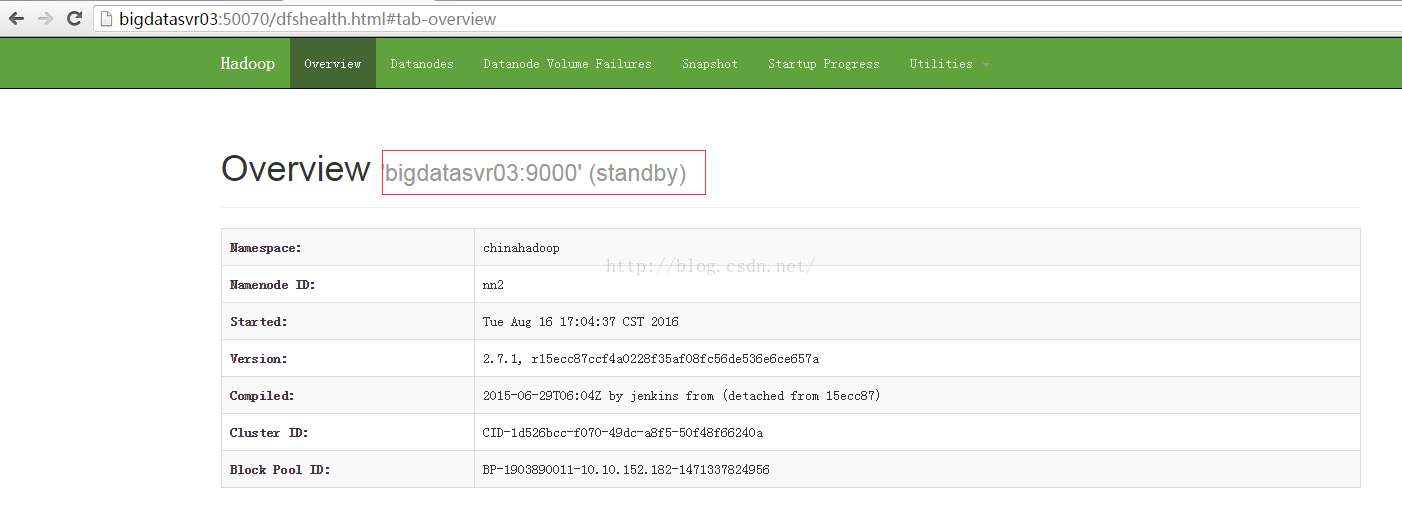

在浏览器上访问

http://bigdatasvr03:50070/

当前是

standby

状态

5.7激活namenode

在

bigdatasvr02 上运行命令:

bin/hdfs haadmin -transitionToActive nn1

在浏览器上访问http://bigdatasvr02:50070

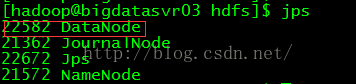

5.8启动datanode

在

bigdatasvr02 上运行命令:

sbin/hadoop-daemons.sh start datanode

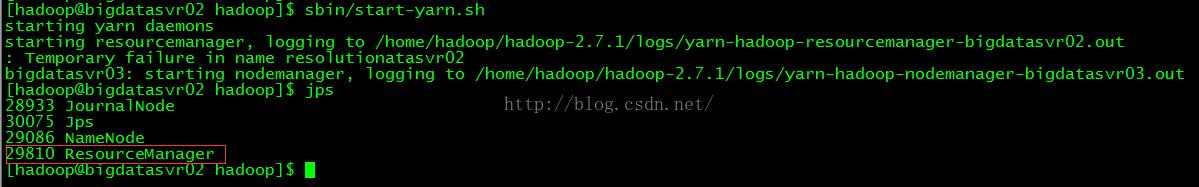

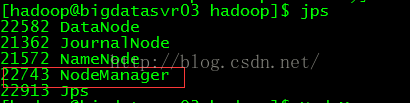

5.9启动yarn

在

bigdatasvr02 上运行命令:

sbin/start-yarn.sh

在

bigdatasvr02生成

ResourceManager进程

在datanode节点上生成

NodeManager进程

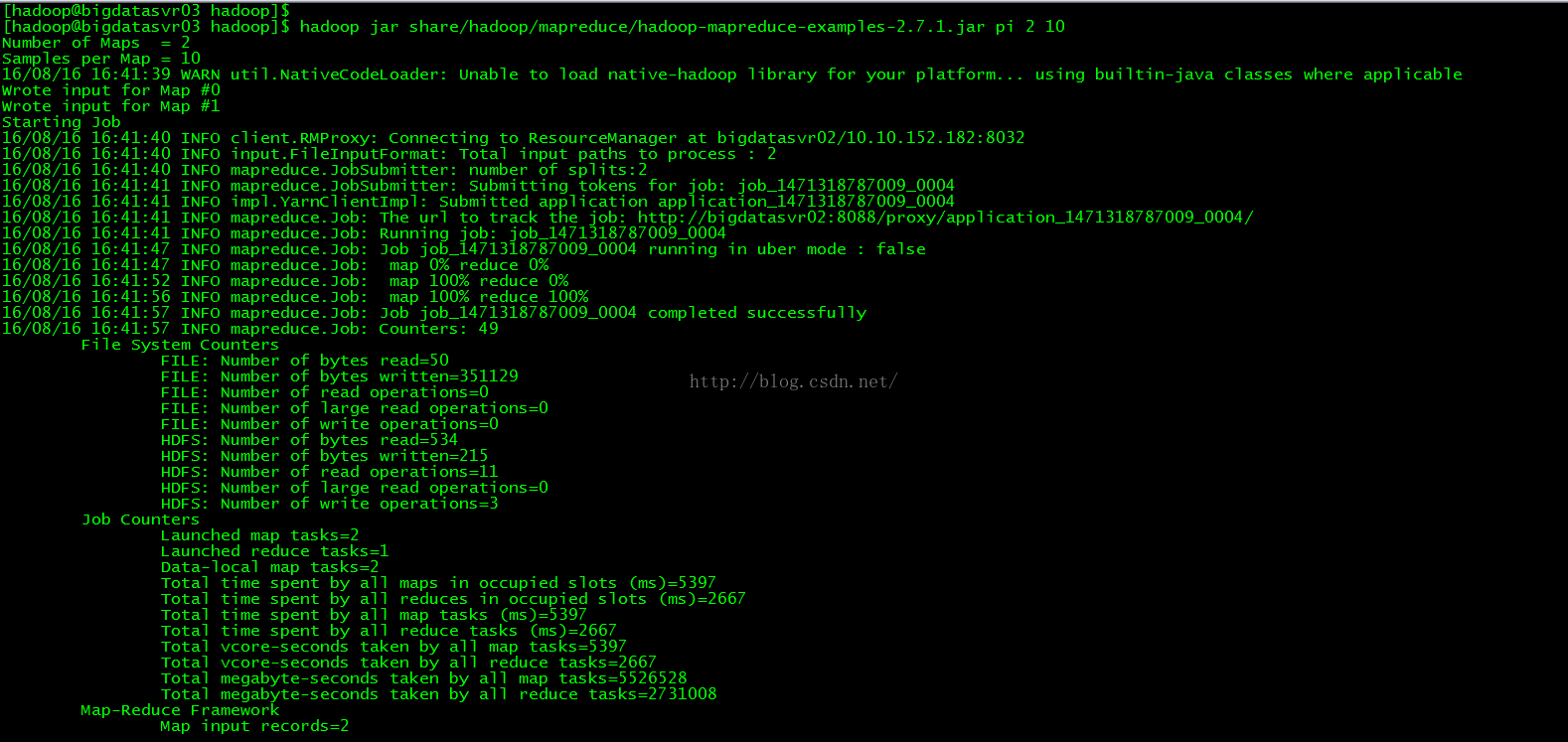

5.10执行一个MapReduce

hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.1.jar pi 2 10

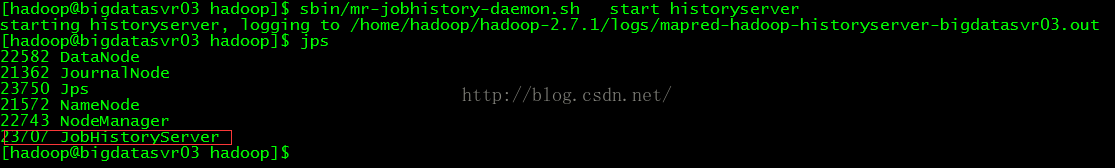

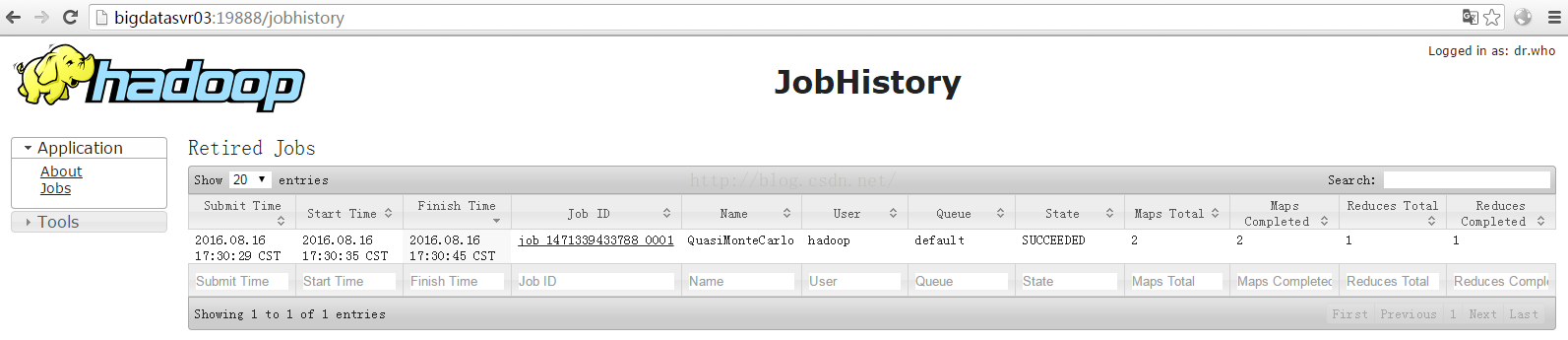

5.11启动日志记录服务

在

bigdatasvr03 上运行命令:

sbin/mr-jobhistory-daemon.sh start historyserver

在浏览器中输入:http://bigdatasvr03:19888/

6.停止hadoop集群

sbin/stop-all.sh

查看集群状态:

bin/hdfs dfsadmin -report

7.Hive部署安装

7.1安装mysql

7.2创建hive数据库和用户

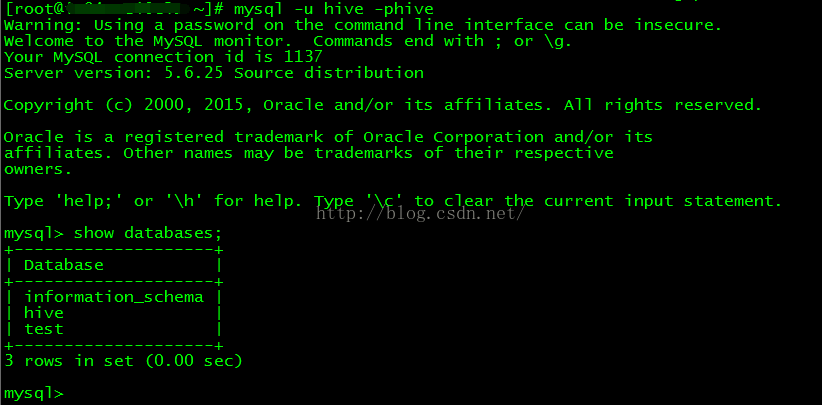

1.登录mysql 以root用户身份登录

mysql -uroot -p123123

2.创建hive用户,数据库

insert into user(Host,User,Password,ssl_cipher,x509_issuer,x509_subject) values("localhost","hive",password("hive"),"","","");

create database hive;

grant all on hive.* to hive@'%' identified by 'hive';

grant all on hive.* to hive@'localhost' identified by 'hive';

flush privileges;

7.3验证hive用户

7.4安装Hive

使用安装包为:hive-1.2.1-bin.tar.gz

1.下载解压安装包

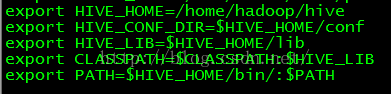

2.配置hive环境变量:vi /etc/profile.d/hadoop.sh

使其生效:source /etc/profile.d/hadoop.sh

3.修改hive-site.xml

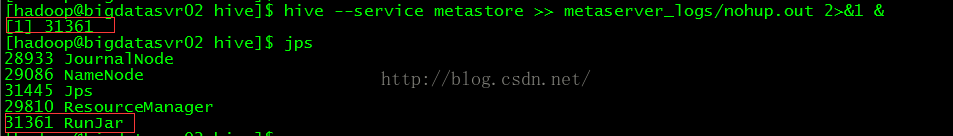

4.启动hive的metastore

nohup hive --service metastore >> metaserver_logs/nohup.out 2>&1 &

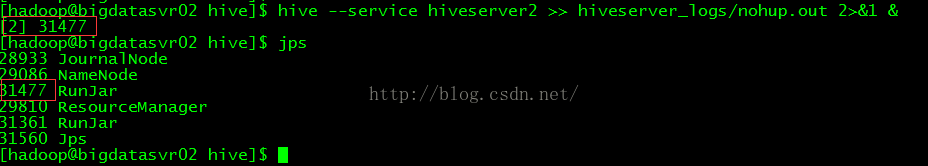

5.启动hive的jdbc等服务程序,提供jdbc、beeline远端连接服务

hive --service hiveserver2 >> hiveserver_logs/nohup.out 2>&1 &

6.启动测试hive

执行hive命令:hive

7.创建hive表

create table

inter_table

(id int,

name string,

age int,

tele string)

ROW FORMAT DELIMITED

FIELDS TERMINATED BY 't'

STORED AS TEXTFILE;

如果在创建表的时候卡很久一段时间并报错则需要设置mysql中hive数据库的编码为latin1.

最后

以上就是沉默煎饼最近收集整理的关于HA HADOOP集群和HIVE部署的全部内容,更多相关HA内容请搜索靠谱客的其他文章。

本图文内容来源于网友提供,作为学习参考使用,或来自网络收集整理,版权属于原作者所有。

发表评论 取消回复