Table of Contents

- Overview

- KNN Algorithm Principle

- KNN 2D Classifier Model

- DSIFT

- Gesture Recognition Application

- Gesture Recognition Workflow

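Overview

This article introduces the basic principle of the KNN algorithm and combines it with dsift (dense SIFT) to build a gesture recognition application.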

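KNN Algorithm Principle

The KNN algorithm (K-Nearest Neighbor classification) may sound mysterious and sophisticated, but it is actually quite simple: a new sample is assigned the label that is most common among the k labeled samples closest to it. As a simple analogy, a dictionary contains many different words that can share the same Chinese meaning; for example, the Chinese word 安静 may appear in the dictionary as "quiet", "silence", and so on. Visual features work the same way: several pieces of information describing visual features may belong to the same class, so we can label them and store them in the computer's "dictionary". Python happens to have a data structure that is itself called a dictionary, and with its features we can implement the KNN algorithm. If you are not familiar with dictionaries, the following article by another blogger in the CSDN community is a useful reference.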

https://blog.csdn.net/qq_40678222/article/details/83448740
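To make the principle concrete, here is a minimal sketch of k-nearest-neighbor classification in plain NumPy. It is not the PCV implementation used later in this article; the function name knn_classify and the toy data are made up for illustration. A Python dictionary tallies the votes of the k closest training samples.

```python
import numpy as np

def knn_classify(train_points, train_labels, query, k=3):
    """Return the majority label among the k training points nearest to query."""
    train_points = np.asarray(train_points, dtype=float)
    query = np.asarray(query, dtype=float)
    # Euclidean distance from the query to every training point
    dists = np.sqrt(((train_points - query) ** 2).sum(axis=1))
    # indices of the k smallest distances
    nearest = np.argsort(dists)[:k]
    # tally the votes in a dictionary: label -> count
    votes = {}
    for idx in nearest:
        label = train_labels[idx]
        votes[label] = votes.get(label, 0) + 1
    # the label with the most votes wins
    return max(votes, key=votes.get)

# toy data: two small clusters (made-up values)
points = [[0.0, 0.0], [0.2, 0.1], [0.1, 0.3],
          [5.0, 5.0], [5.2, 4.9], [4.8, 5.1]]
labels = ['A', 'A', 'A', 'B', 'B', 'B']

print(knn_classify(points, labels, [0.1, 0.1], k=3))
print(knn_classify(points, labels, [5.1, 5.0], k=3))
```

With k=1 this reduces to copying the label of the single closest sample, which is the setting used in the gesture experiment later on.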

KNN 2D Classifier Model

# -*- coding: utf-8 -*-
from numpy.random import randn
import pickle
from pylab import *

# create sample data of 2D points
n = 200

# two normal distributions
class_1 = 0.6 * randn(n,2)
class_2 = 1.2 * randn(n,2) + array([5,1])
labels = hstack((ones(n),-ones(n)))

# save with Pickle (binary mode, so the pickle stream is written unchanged)
with open('points_normal.pkl', 'wb') as f:
    pickle.dump(class_1,f)
    pickle.dump(class_2,f)
    pickle.dump(labels,f)
print("save OK!")

# normal distribution and ring around it
class_1 = 0.6 * randn(n,2)
r = 0.8 * randn(n,1) + 5
angle = 2*pi * randn(n,1)
class_2 = hstack((r*cos(angle),r*sin(angle)))
labels = hstack((ones(n),-ones(n)))

# save with Pickle
with open('points_ring.pkl', 'wb') as f:
    pickle.dump(class_1,f)
    pickle.dump(class_2,f)
    pickle.dump(labels,f)
print("save OK!")

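The script is run twice. For the second run, change the file names in the code (to points_normal_test.pkl and points_ring_test.pkl, the names that the visualization script loads), so that we end up with a training set and a test set.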
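Instead of editing the file names by hand, the two runs could be wrapped in a small helper. The sketch below is only one possible way to do it: the names save_point_set and load_point_set are hypothetical, the files go to a temporary directory, and a fixed seed per file is an added assumption for reproducibility. It also shows that objects pickled one after another into the same file are read back in the same order.

```python
import os
import pickle
import tempfile
import numpy as np

def save_point_set(path, n=200, seed=None):
    """Generate the two-Gaussian point set and pickle it to path."""
    rng = np.random.RandomState(seed)
    class_1 = 0.6 * rng.randn(n, 2)
    class_2 = 1.2 * rng.randn(n, 2) + np.array([5, 1])
    labels = np.hstack((np.ones(n), -np.ones(n)))
    # three consecutive dumps into one binary file
    with open(path, 'wb') as f:
        for obj in (class_1, class_2, labels):
            pickle.dump(obj, f)

def load_point_set(path):
    """Read the three objects back in the order they were dumped."""
    with open(path, 'rb') as f:
        class_1 = pickle.load(f)
        class_2 = pickle.load(f)
        labels = pickle.load(f)
    return class_1, class_2, labels

out_dir = tempfile.mkdtemp()
train_path = os.path.join(out_dir, 'points_normal.pkl')
test_path = os.path.join(out_dir, 'points_normal_test.pkl')

# different seeds so that the training and test sets are different samples
save_point_set(train_path, seed=0)
save_point_set(test_path, seed=1)

class_1, class_2, labels = load_point_set(test_path)
print(class_1.shape, class_2.shape, labels.shape)
```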

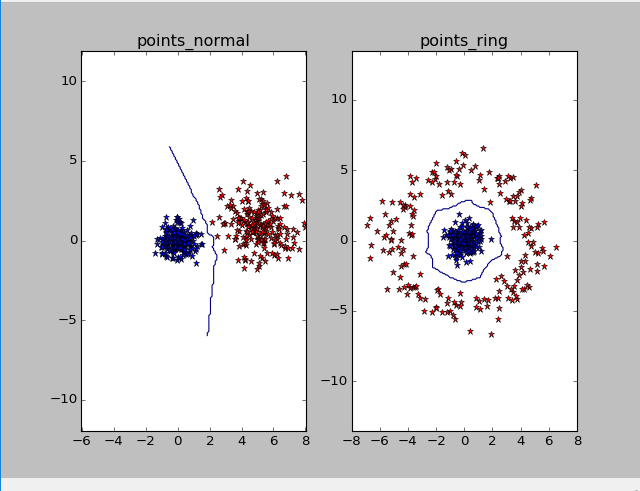

We can use the following code to visualize how well the KNN algorithm classifies the points.

# -*- coding: utf-8 -*-
import pickle
from pylab import *
from PCV.classifiers import knn
from PCV.tools import imtools

pklist=['points_normal.pkl','points_ring.pkl']

figure()

for i, pklfile in enumerate(pklist):
    # load the 2D training points using Pickle
    with open(pklfile, 'rb') as f:
        class_1 = pickle.load(f)
        class_2 = pickle.load(f)
        labels = pickle.load(f)

    # build the kNN model from the training data before the variables are reused below
    model = knn.KnnClassifier(labels,vstack((class_1,class_2)))

    # load test data using Pickle
    with open(pklfile[:-4]+'_test.pkl', 'rb') as f:
        class_1 = pickle.load(f)
        class_2 = pickle.load(f)
        labels = pickle.load(f)

    # test on the first point
    print(model.classify(class_1[0]))

    # define function for plotting
    def classify(x,y,model=model):
        return array([model.classify([xx,yy]) for (xx,yy) in zip(x,y)])

    # plot the classification boundary
    subplot(1,2,i+1)
    imtools.plot_2D_boundary([-6,6,-6,6],[class_1,class_2],classify,[1,-1])
    titlename=pklfile[:-4]
    title(titlename)

show()
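The idea behind drawing a decision boundary can be sketched without PCV: classify every node of a regular grid that covers the plotting area and look at where the predicted label changes. The code below is a simplified stand-in, not the code of imtools.plot_2D_boundary; the helper names nearest_label and decision_grid are hypothetical, and it uses a plain 1-nearest-neighbor rule on freshly generated points.

```python
import numpy as np

def nearest_label(train_points, train_labels, queries):
    """Return the 1-nearest-neighbor label for each row of queries."""
    # pairwise squared distances, shape (num_queries, num_train)
    diff = queries[:, None, :] - train_points[None, :, :]
    d2 = (diff ** 2).sum(axis=2)
    return train_labels[d2.argmin(axis=1)]

def decision_grid(train_points, train_labels, extent, resolution=50):
    """Classify every node of a resolution x resolution grid over extent."""
    x_min, x_max, y_min, y_max = extent
    xs = np.linspace(x_min, x_max, resolution)
    ys = np.linspace(y_min, y_max, resolution)
    xx, yy = np.meshgrid(xs, ys)
    queries = np.column_stack((xx.ravel(), yy.ravel()))
    predicted = nearest_label(train_points, train_labels, queries)
    return predicted.reshape(xx.shape)

# the same kind of two-Gaussian data as in the first script
rng = np.random.RandomState(0)
class_1 = 0.6 * rng.randn(200, 2)
class_2 = 1.2 * rng.randn(200, 2) + np.array([5, 1])
points = np.vstack((class_1, class_2))
labels = np.hstack((np.ones(200), -np.ones(200)))

grid = decision_grid(points, labels, [-6, 6, -6, 6])
print(grid.shape)
```

Each entry of grid is either 1 or -1; the boundary runs wherever neighboring entries differ, and passing the grid to a contour plot would draw it.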

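DSIFT

DSIFT (dense SIFT) is, as the name suggests, a SIFT descriptor computed on a dense sampling grid over the image rather than only at detected interest points. For the detailed procedure, see the following blog post.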

https://blog.csdn.net/langb2014/article/details/48738669
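As a rough illustration of what "dense sampling" means, the sketch below lays out a regular grid of descriptor locations over an image of a given size. The function name dense_grid_frames is hypothetical, and the details (starting one step in from the border, a scale of size/3, zero orientation) are assumptions made for this sketch, not a statement of how PCV's dsift module behaves.

```python
import numpy as np

def dense_grid_frames(width, height, size=20, steps=10):
    """Return one (x, y, scale, orientation) row per grid location."""
    # assumed convention: the patch scale is a third of the patch size
    scale = size / 3.0
    # assumed convention: the grid starts one step in from the image border
    x, y = np.meshgrid(np.arange(steps, width, steps),
                       np.arange(steps, height, steps))
    xx, yy = x.flatten(), y.flatten()
    frames = np.array([xx, yy,
                       scale * np.ones(xx.shape[0]),
                       np.zeros(xx.shape[0])])
    return frames.T

frames = dense_grid_frames(50, 50, size=10, steps=5)
print(frames.shape)
```

Under these assumptions a 50 * 50 image sampled every 5 pixels gives a 9 * 9 grid. Since a standard SIFT descriptor has 128 values, flattening all descriptors of such an image produces one long feature vector of 81 * 128 values, which is the kind of vector the classifier compares later.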

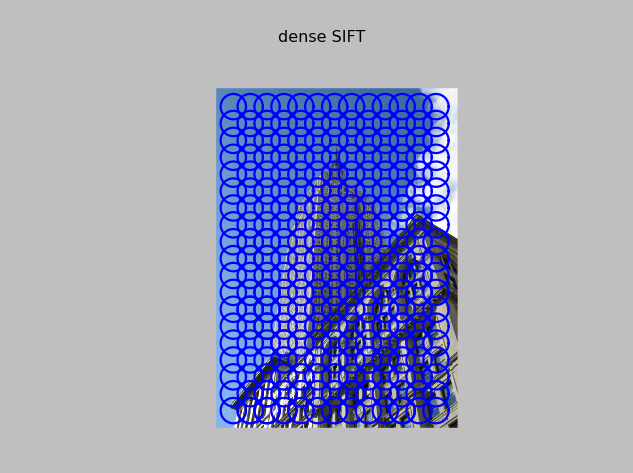

Here we extract DSIFT features from an image and visualize the result.

# -*- coding: utf-8 -*-
from PCV.localdescriptors import sift, dsift
from pylab import *
from PIL import Image

dsift.process_image_dsift('gesture/empire.jpg','empire.dsift',90,40,True)
l,d = sift.read_features_from_file('empire.dsift')
im = array(Image.open('gesture/empire.jpg'))
sift.plot_features(im,l,True)
title('dense SIFT')
show()

Visualization result:

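Gesture Recognition Application

We will use the KNN algorithm and DSIFT described above to implement gesture recognition.

Gesture Recognition Workflow

- Extract the dsift features

- Build the "dictionary" with the KNN algorithm

- Evaluate the model on the test set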

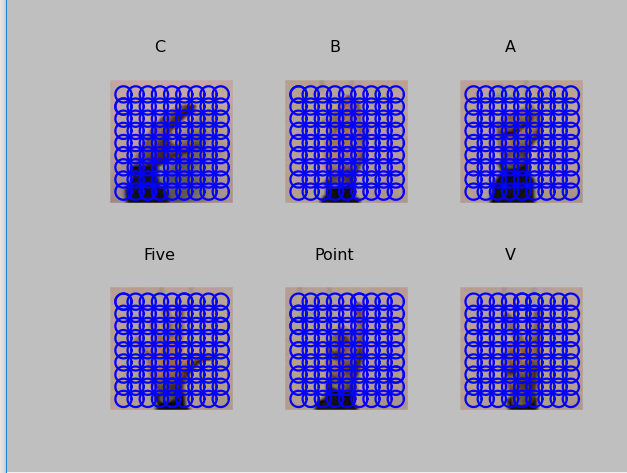

The following code visualizes the meaning of each gesture together with its dense SIFT descriptors.

# -*- coding: utf-8 -*-
import os
from PCV.localdescriptors import sift, dsift
from pylab import *
from PIL import Image

imlist=['gesture/train/C-uniform02.ppm','gesture/train/B-uniform01.ppm',
        'gesture/train/A-uniform01.ppm','gesture/train/Five-uniform01.ppm',
        'gesture/train/Point-uniform01.ppm','gesture/train/V-uniform01.ppm']

figure()
for i, im in enumerate(imlist):
    print(im)
    dsift.process_image_dsift(im,im[:-3]+'dsift',10,5,True)
    l,d = sift.read_features_from_file(im[:-3]+'dsift')
    dirpath, filename=os.path.split(im)
    im = array(Image.open(im))
    # show the gesture meaning as the title
    titlename=filename[:-14]
    subplot(2,3,i+1)
    sift.plot_features(im,l,True)
    title(titlename)
show()

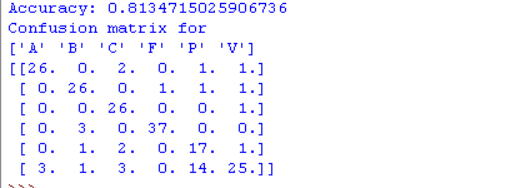

Below is the code that builds the classifier from our training set and reports the result on the test set.

# -*- coding: utf-8 -*-
import os
from PCV.localdescriptors import sift, dsift
from pylab import *
from PCV.classifiers import knn

def get_imagelist(path):
    """ Returns a list of filenames for
        all ppm images in a directory. """
    return [os.path.join(path,f) for f in os.listdir(path) if f.endswith('.ppm')]

def read_gesture_features_labels(path):
    # create list of all files ending in .dsift
    featlist = [os.path.join(path,f) for f in os.listdir(path) if f.endswith('.dsift')]
    # read the features
    features = []
    for featfile in featlist:
        l,d = sift.read_features_from_file(featfile)
        features.append(d.flatten())
    features = array(features)
    # create labels: the first character of each file name
    labels = [os.path.basename(featfile)[0] for featfile in featlist]
    return features,array(labels)

def print_confusion(res,labels,classnames):
    n = len(classnames)
    # confusion matrix: rows are predicted classes, columns are true classes
    class_ind = dict([(classnames[i],i) for i in range(n)])
    confuse = zeros((n,n))
    # use the labels argument rather than the global test_labels
    for i in range(len(labels)):
        confuse[class_ind[res[i]],class_ind[labels[i]]] += 1
    print('Confusion matrix for')
    print(classnames)
    print(confuse)

filelist_train = get_imagelist('gesture/train')
filelist_test = get_imagelist('gesture/test')
imlist=filelist_train+filelist_test

# process images at fixed size (50,50)
for filename in imlist:
    featfile = filename[:-3]+'dsift'
    dsift.process_image_dsift(filename,featfile,10,5,resize=(50,50))

features,labels = read_gesture_features_labels('gesture/train/')
test_features,test_labels = read_gesture_features_labels('gesture/test/')
classnames = unique(labels)

# test kNN
k = 1
knn_classifier = knn.KnnClassifier(labels,features)
res = array([knn_classifier.classify(test_features[i],k) for i in
             range(len(test_labels))])

# accuracy
acc = sum(1.0*(res==test_labels)) / len(test_labels)
print('Accuracy: %s' % acc)
print_confusion(res,test_labels,classnames)
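To see how the accuracy and the confusion matrix relate, here is a small standalone sketch on hand-made labels. The label values are invented for illustration and are not results from the gesture data; the function name confusion_matrix is hypothetical. It follows the same convention as the script above: rows are predicted classes, columns are true classes.

```python
import numpy as np

def confusion_matrix(predicted, actual, classnames):
    """Count (predicted, actual) pairs; rows = predicted, columns = actual."""
    class_ind = {name: i for i, name in enumerate(classnames)}
    confuse = np.zeros((len(classnames), len(classnames)), dtype=int)
    for p, a in zip(predicted, actual):
        confuse[class_ind[p], class_ind[a]] += 1
    return confuse

# invented example: one "P" is mistaken for "V" and one "V" for "P"
actual = np.array(['A', 'A', 'B', 'B', 'P', 'P', 'V', 'V'])
predicted = np.array(['A', 'A', 'B', 'B', 'P', 'V', 'V', 'P'])

classnames = np.unique(actual)
confuse = confusion_matrix(predicted, actual, classnames)
accuracy = np.mean(predicted == actual)

print(classnames)
print(confuse)
print('Accuracy: %s' % accuracy)
```

Correct predictions land on the diagonal, so the accuracy equals the sum of the diagonal divided by the total number of test samples; off-diagonal entries show which pairs of gestures get confused.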

Now modify one line of code:

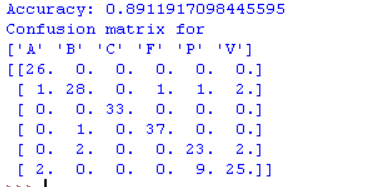

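Change the 50 * 50 of the first run to 100 * 100, that is, dsift.process_image_dsift(filename,featfile,10,5,resize=(100,100)), and we get the following result:

After switching to 100 * 100, the accuracy improved by about 8 percentage points, and the relatively high confusion between "P" and "V" at the original size was also reduced.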

