Rich feature hierarchies for accurate object detection and semantic segmentation——Tech report (v5)用于精确物体定位和语义分割的丰富特征层次结构——技术报告(第5版)Ross Girshick Jeff Donahue Trevor Darrell Jitendra MalikUC Berkeley加州大学伯克利分校{frbg,jdonahue,trevor,malikg}@eecs.berkeley.edu |

Abstract

Object detection performance, as measured on the canonical PASCAL VOC dataset, has plateaued in the last few years. The best-performing methods are complex ensemble systems that typically combine multiple low-level image features with high-level context. In this paper, we propose a simple and scalable detection algorithm that improves mean average precision (mAP) by more than 30% relative to the previous best result on VOC 2012—achieving a mAP of 53.3%. Our approach combines two key insights: (1) one can apply high-capacity convolutional neural networks

(CNNs) to bottom-up region proposals in order to localize and segment objects and (2) when labeled training data is scarce, supervised pre-training for an auxiliary task, followed by domain-specific fine-tuning, yields a significant performance boost. Since we combine region proposals with CNNs, we call our method R-CNN: Regions with CNN features. We also compare R-CNN to OverFeat, a recently proposed sliding-window detector based on a similar CNN architecture. We find that R-CNN outperforms OverFeat by a large margin on the 200-class ILSVRC2013 detection dataset. Source code for the complete system is available at http://www.cs.berkeley.edu/˜rbg/rcnn.

摘要

过去几年,在经典数据集PASCAL上,物体检测的效果已经达到一个稳定水平。效果最好的方法是融合了多种低维图像特征和高维上下文环境的复杂集成系统。在这篇论文里,我们提出了一种简单并且可扩展的检测算法,可以在VOC2012最好结果的基础上将mAP值提高30%以上——达到了53.3%。我们的方法结合了两个关键的思想:(1)在候选区域上自下而上使用大型卷积神经网络(CNNs),用以定位和分割物体。(2)当带标签的训练数据不足时,先针对辅助任务进行有监督预训练,再进行特定任务的调优,就可以产生明显的性能提升。

因为我们把region proposal和CNNs结合起来,所以该方法被称为R-CNN:Regions with CNN features。我们也把R-CNN效果跟OverFeat比较了下,OverFeat是最近提出的基于类似的CNN特征并采用滑动窗口进行目标检测的一种方法,结果发现R-CNN在200个类别的ILSVRC2013检测数据集上的性能明显优于OVerFeat。系统完整的源代码见:http://www.cs.berkeley.edu/˜rbg/rcnn。(译者注:网址已失效,github新地址:https://github.com/rbgirshick/rcnn)

1. Introduction

Features matter. The last decade of progress on various visual recognition tasks has been based considerably on the use of SIFT [29] and HOG [7]. But if we look at performance on the canonical visual recognition task, PASCAL VOC object detection [15], it is generally acknowledged that progress has been slow during 2010-2012, with small gains obtained by building ensemble systems and employing minor variants of successful methods.

1. 前言

特征的重要性。在过去十年,各类视觉识别任务基本都建立在对SIFT[29]和HOG[7]特征的使用。但如果我们关注一下PASCAL VOC对象检测[15]这个经典的视觉识别任务,就会发现2010-2012年进展缓慢,取得的微小进步都是通过构建一些集成系统和采用一些成功方法的变种才达到的。

SIFT and HOG are blockwise orientation histograms, a representation we could associate roughly with complex cells in V1, the first cortical area in the primate visual pathway. But we also know that recognition occurs several stages downstream, which suggests that there might be hierarchical, multi-stage processes for computing features that are even more informative for visual recognition.

SIFT和HOG是块方向直方图(blockwise orientation histograms),一种类似大脑初级皮层V1层复杂细胞的表示方法。但我们知道识别发生在多个下游阶段,(我们是先看到了一些特征,然后才意识到这是什么东西)也就是说对于视觉识别来说,更有价值的信息,是层次化的,多个阶段的特征。

Fukushima’s “neocognitron” [19], a biologically-inspired hierarchical and shift-invariant model for pattern recognition, was an early attempt at just such a process. The neocognitron, however, lacked a supervised training algorithm. Building on Rumelhart et al. [33], LeCun et al. [26] showed that stochastic gradient descent via backpropagation was effective for training convolutional neural networks (CNNs), a class of models that extend the neocognitron.

Fukushima的“neocognitron,一种受生物学启发用于模式识别的层次化、移动不变性模型,算是这方面最早的尝试。然而,neocognitron缺乏监督训练算法。基于Rumelhart等人的研究,Lecun等人提出反向传播的随机梯度下降(SGD)对训练卷积神经网络(CNNs)非常有效,CNNs被认为是neocognitron的一种扩展。

CNNs saw heavy use in the 1990s (e.g., [27]), but then fell out of fashion with the rise of support vector machines. In 2012, Krizhevsky et al. [25] rekindled interest in CNNs by showing substantially higher image classification accuracy on the ImageNet Large Scale Visual Recognition Challenge (ILSVRC) [9, 10]. Their success resulted from training a large CNN on 1.2 million labeled images, together with a few twists on LeCun’s CNN (e.g., max(x; 0) rectifying non-linearities and “dropout” regularization).

CNNs在1990年代被广泛使用,但之后随着SVM的崛起便淡出研究主流。2012年,Krizhevsky等人在ImageNet大规模视觉识别挑战赛(ILSVRC)上的出色表现重新燃起了对CNNs的兴趣。他们的成功在于在120万标注的图像上使用了一个大型的CNN,并且对LeCUN的CNN进行了一些改造(比如ReLU和Dropout正则化)。

The significance of the ImageNet result was vigorously debated during the ILSVRC 2012 workshop. The central issue can be distilled to the following: To what extent do the CNN classification results on ImageNet generalize to object detection results on the PASCAL VOC Challenge?

这个ImangeNet的结果的重要性在ILSVRC2012 workshop上得到了热烈的讨论。提炼出来的核心问题是:ImageNet上的CNN分类结果在何种程度上能够应用到PASCAL VOC挑战的物体检测任务上?

We answer this question by bridging the gap between image classification and object detection. This paper is the first to show that a CNN can lead to dramatically higher object detection performance on PASCAL VOC as compared to systems based on simpler HOG-like features. To achieve this result, we focused on two problems: localizing objects with a deep network and training a high-capacity model with only a small quantity of annotated detection data.

我们通过弥合图像分类和目标检测差别,回答了这个问题。本论文是第一个说明在PASCAL VOC的物体检测任务上CNN比基于简单的类似HOG特征的系统有大幅的性能提升。我们主要关注了两个问题:使用深度网络定位目标和在小规模的标注数据集上进行大型网络模型的训练。

Unlike image classification, detection requires localizing (likely many) objects within an image. One approach frames localization as a regression problem. However, work from Szegedy et al. [38], concurrent with our own, indicates that this strategy may not fare well in practice (they report a mAP of 30.5% on VOC 2007 compared to the 58.5% achieved by our method). An alternative is to build a sliding-window detector. CNNs have been used in this way for at least two decades, typically on constrained object categories, such as faces [32, 40] and pedestrians [35]. In order to maintain high spatial resolution, these CNNs typically only have two convolutional and pooling layers. We also considered adopting a sliding-window approach. However, units high up in our network, which has five convolutional layers, have very large receptive fields (195*195 pixels) and strides (32*32 pixels) in the input image, which makes precise localization within the sliding-window paradigm an open technical challenge.

与图像分类不同,目标检测需要定位图像中的目标(可能有多个)。一个方法是将框定位看做是回归问题。但Szegedy等人的研究以及我们自己的研究表明这种策略在实际应用中并不可行(在VOC2007上他们的mAP是30.5%,而我们的达到了58.5%)。另一种方法是使用滑动窗口检测器。通过这种方法使用CNNs至少已经有20年的时间了,通常用于一些特定目标种类的检测,例如人脸检测、行人检测等。为了获得较高的空间分辨率,这些CNNs普遍采用了两个卷积层和两个池化层。我们本来也考虑过使用滑动窗口的方法。但是由于我们网络有5个卷积层,具有更深的层和更多的神经元,使得输入图片有非常大的感受野(195×195)和步长(32×32),这使得采用滑动窗口的精确定位方法充满挑战。

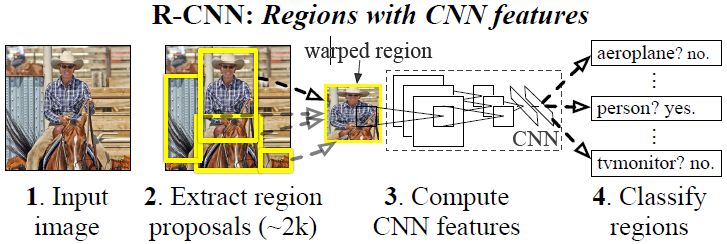

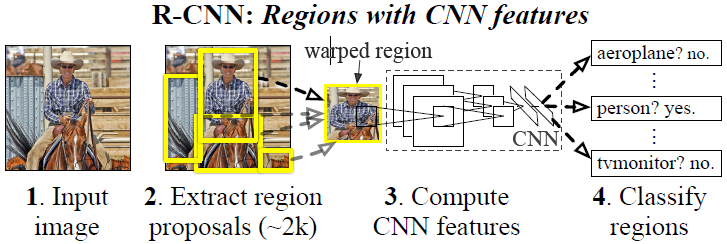

Instead, we solve the CNN localization problem by operating within the “recognition using regions” paradigm [21], which has been successful for both object detection [39] and semantic segmentation [5]. At test time, our method generates around 2000 category-independent region proposals for the input image, extracts a fixed-length feature vector from each proposal using a CNN, and then classifies each region with category-specific linear SVMs. We use a simple technique (affine image warping) to compute a fixed-size CNN input from each region proposal, regardless of the region’s shape. Figure 1 presents an overview of our method and highlights some of our results. Since our system combines region proposals with CNNs, we dub the method R-CNN: Regions with CNN features.

为了解决CNN的定位,我们是通过操作”recognition using regions”范式,这种方法已经成功用于目标检测和语义分隔。测试时,每张图片产生了接近2000个与类别无关的region proposal,然后分别通过CNN提取了一个固定长度的特征向量,最后使用特定类别的线性SVM对每个region进行分类。不论region的形状,我们使用一种简单的方法(仿射图像变形)将每个region proposal转换成固定尺寸的大小作为CNN的输入。图1展示了我们方法的全貌并突出展示了一些实验结果。由于我们的模型结合了Region proposals和CNNs,所以把这种方法称为R-CNN,即Regions with CNN features。

Figure 1: Object detection system overview. Our system (1) takes an input image, (2) extracts around 2000 bottom-up region proposals, (3) computes features for each proposal using a large convolutional neural network (CNN), and then (4) classifies each region using class-specific linear SVMs. R-CNN achieves a mean average precision (mAP) of 53.7% on PASCAL VOC 2010. For comparison, [39] reports 35.1% mAP using the same region proposals, but with a spatial pyramid and bag-of-visual-words approach. The popular deformable part models perform at 33.4%. On the 200-class ILSVRC2013 detection dataset, R-CNN’s mAP is 31.4%, a large improvement over OverFeat [34], which had the previous best result at 24.3%.

图1:物体检测系统概述。我们的系统(1)输入一张图像、(2)提取大约2000个自下而上的region proposals、(3)使用大型卷积神经网络(CNN)计算每个region proposals的特征向量、(4)使用特定类别的线性SVM对每个region进行分类。R-CNN在PASCAL VOC 2010上的mAP为53.7%。对比[39]文献中使用相同region proposals方法,并用使用采用空间金字塔和bag-of-visual-words方法的模型,其mAP只有35.1%。流行的可变部件的模型(DPM)的性能也只有33.4%。在200个类别的ILSVRC2013检测数据集上,R-CNN的mAP为31.4%,比最佳结果24.3%OverFeat有了很大改进。

In this updated version of this paper, we provide a head-to-head comparison of R-CNN and the recently proposed OverFeat [34] detection system by running R-CNN on the 200-class ILSVRC2013 detection dataset. OverFeat uses a sliding-window CNN for detection and until now was the best performing method on ILSVRC2013 detection. We show that R-CNN significantly outperforms OverFeat, with a mAP of 31.4% versus 24.3%.

本篇论文的更新版本中,我们提供了R-CNN和最近提出的OverFeat检测系统在ILSVRC2013的200分类检测数据集上对比结果。OverFeat使用了滑动窗口CNN做检测,目前为止是ILSVRC2013检测集上表现最好的方法。我们的结果显示,R-CNN的mAP达到31.4%,显著超越了OverFeat的24.3%的结果。

A second challenge faced in detection is that labeled data is scarce and the amount currently available is insufficient for training a large CNN. The conventional solution to this problem is to use unsupervised pre-training, followed by supervised fine-tuning (e.g., [35]). The second principle contribution of this paper is to show that supervised pre-training on a large auxiliary dataset (ILSVRC), followed by domain-specific fine-tuning on a small dataset (PASCAL), is an effective paradigm for learning high-capacity CNNs when data is scarce. In our experiments, fine-tuning for detection improves mAP performance by 8 percentage points. After fine-tuning, our system achieves a mAP of 54% on VOC 2010 compared to 33% for the highly-tuned, HOG-based deformable part model (DPM) [17, 20]. We also point readers to contemporaneous work by Donahue et al. [12], who show that Krizhevsky’s CNN can be used (without finetuning) as a blackbox feature extractor, yielding excellent performance on several recognition tasks including scene classification, fine-grained sub-categorization, and domain adaptation.

检测任务第二个挑战是标签数据太少,手头可用于训练一个大型卷积网络的数据往往很不充足。传统解决方法通常采用无监督预训练,再进行有监督调优的方法(如[35])。本文的第二个核心贡献是在辅助数据集(ILSVRC)上进行有监督预训练,再在小数据集(PASCAL)上针对特定问题进行调优,这种方法在训练数据稀少的情况下训练大型卷积神经网络是非常有效的。我们的实验中,针对检测的调优将mAP提高了8个百分点。调优后,我们的系统在VOC2010上达到了54%的mAP,远远超过高度优化的基于HOG的可变性部件模型(deformable part model,DPM)[17, 20]。另外提醒读者朋友们关注Donahue等人同时期的工作,他们的研究表明Krizhevsky的CNN(译者注:Krizhevsky的CNN指AlexNet网络)可以用来作为一个黑盒特征提取器,(没有调优的情况下)在多个识别任务上包括场景分类、细粒度的子分类和领域自适应(domain adaptation)方面均表现很出色。

Our system is also quite efficient. The only class-specific computations are a reasonably small matrix-vector product and greedy non-maximum suppression. This computational property follows from features that are shared across all categories and that are also two orders of magnitude lower-dimensional than previously used region features (cf. [39]).

同时,我们的模型系统也很高效。都是相当小型的矩阵向量相乘和贪婪的非极大值抑制这些特定类别的计算。这个计算特性源自于所有类别都共享的特征,同时这些特征比之前使用的区域特征([39])少了两个数量级的维度。

Understanding the failure modes of our approach is also critical for improving it, and so we report results from the detection analysis tool of Hoiem et al. [23]. As an immediate consequence of this analysis, we demonstrate that a simple bounding-box regression method significantly reduces mislocalizations, which are the dominant error mode.

分析我们方法的失败案例对进一步改进和提升很有帮助,所以我们借助Hoiem等人的定位分析工具[23]做实验结果的报告和分析。作为本次分析的直接结果,我们发现一个简单的边界框回归的方法会明显地降低错误定位问题,而错误定位是我们的模型系统的主要误差。

Before developing technical details, we note that because R-CNN operates on regions it is natural to extend it to the task of semantic segmentation. With minor modifications, we also achieve competitive results on the PASCAL VOC segmentation task, with an average segmentation accuracy of 47.9% on the VOC 2011 test set.

开发技术细节之前,我们注意到由于R-CNN是在推荐区域上进行操作,所以可以很自然地扩展到语义分割任务上。经过微小的改动,我们就在PASCAL VOC语义分割任务上达到了很有竞争力的结果,在VOC 2011测试集上平均语义分割精度达到了47.9%。

2. Object detection with R-CNN

Our object detection system consists of three modules. The first generates category-independent region proposals. These proposals define the set of candidate detections available to our detector. The second module is a large convolutional neural network that extracts a fixed-length feature vector from each region. The third module is a set of classs-pecific linear SVMs. In this section, we present our design decisions for each module, describe their test-time usage, detail how their parameters are learned, and show detection results on PASCAL VOC 2010-12 and on ILSVRC2013.

2. 使用R-CNN做物体检测

我们的物体检测系统有三个模块构成。第一个模块产生类别无关的region proposals。这些proposals组成了一个模型可用的候选检测区域的集合。第二个模块是一个大型卷积神经网络,从每个region提取固定长度的特征向量。第三个模块是特定类别线性SVM的集合。这一节将展示每个模块的设计,并介绍它们的测试阶段的用法,以及一些参数学习的细节,并得出在PASCAL VOC 2010-12和ILSVRC2013上的检测结果。

2.1. Module design

Region proposals. A variety of recent papers offer methods for generating category-independent region proposals. Examples include: objectness [1], selective search [39], category-independent object proposals [14], constrained parametric min-cuts (CPMC) [5], multi-scale combinatorial grouping [3], and Cires¸an et al. [6], who detect mitotic cells by applying a CNN to regularly-spaced square crops, which are a special case of region proposals. While R-CNN is agnostic to the particular region proposal method, we use selective search to enable a controlled comparison with prior detection work (e.g., [39, 41]).

2.1 模块设计

区域推荐(Region Proposals)。近来有很多研究都提出了生成类别无关的区域推荐的方法。比如objectness [1]、selective search [39]、category-independent object proposals [14]、constrained parametric min-cuts (CPMC) [5]、multi-scale combinatorial grouping [3],以及Ciresan等人提出的将CNN用在规律空间块裁剪上以检测有丝分裂细胞的方法,也算是一种特殊的区域推荐类型。由于R-CNN对特定区域算法是不关心的,所以我们采用了selective search以方便和之前的工作[39, 41]进行可控的比较。

Feature extraction. We extract a 4096-dimensional feature vector from each region proposal using the Caffe [24] implementation of the CNN described by Krizhevsky et al. [25]. Features are computed by forward propagating a mean-subtracted 227*227 RGB image through five convolutional layers and two fully connected layers. We refer readers to [24, 25] for more network architecture details.

特征提取。我们使用Krizhevsky等人[25]所描述的CNN(译者注:AlexNet)的一个Caffe[24]实现版本对每个推荐区域提取一个4096维度的特征向量。减去像素均值的277×277大小的RGB输入图像通过五个卷积层和两个全连接层,最终计算得到特征向量。读者可以参考[24, 25]获得更多的网络架构细节。

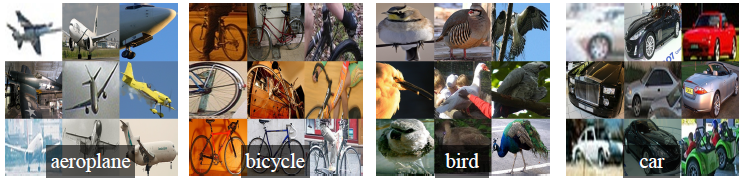

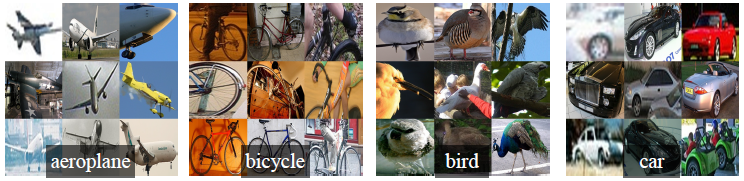

In order to compute features for a region proposal, we must first convert the image data in that region into a form that is compatible with the CNN (its architecture requires inputs of a fixed 227*227 pixel size). Of the many possible transformations of our arbitrary-shaped regions, we opt for the simplest. Regardless of the size or aspect ratio of the candidate region, we warp all pixels in a tight bounding box around it to the required size. Prior to warping, we dilate the tight bounding box so that at the warped size there are exactly p pixels of warped image context around the original box (we use p = 16). Figure 2 shows a random sampling of warped training regions. Alternatives to warping are discussed in Appendix A.

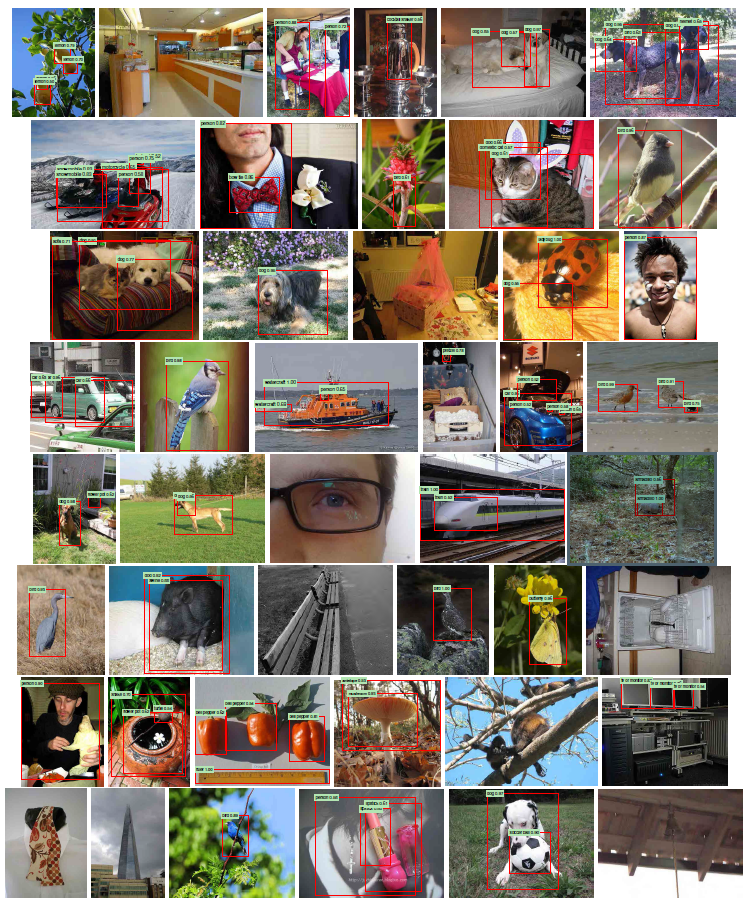

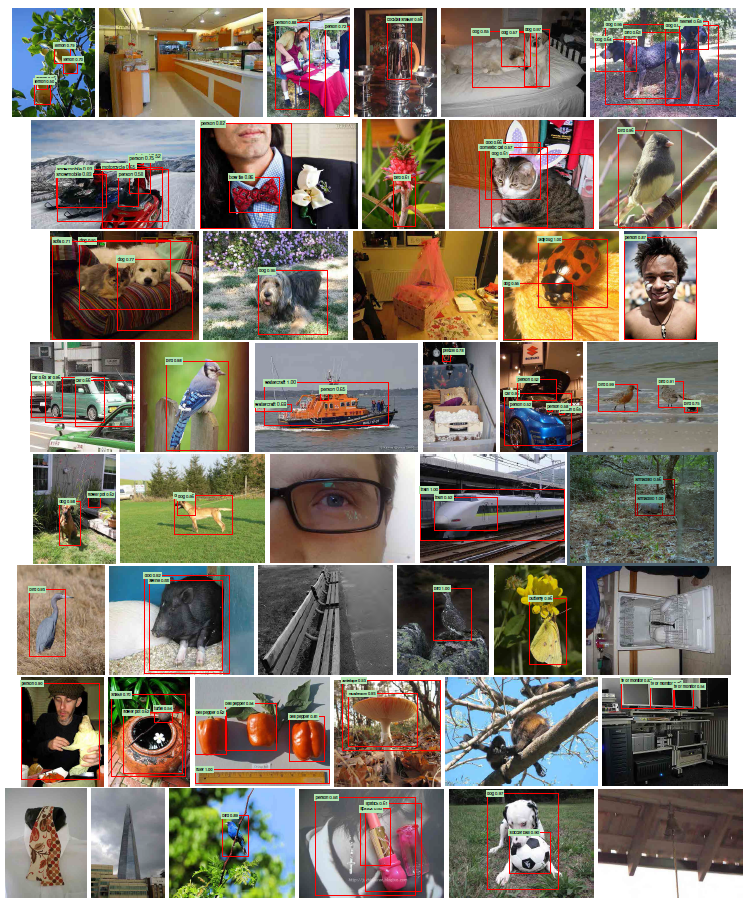

Figure 2: Warped training samples from VOC 2007 train.

为了计算推荐区域的特征,首先需要将输入的图像数据进行转变,使得推荐的区域变成CNN可以接受的方式(我们架构中的CNN只能接受像素宽高比为227*227固定大小的图像)。有很多种方法可以对我们任意形状的区域进行变换,我们选择了最简单的一种。无论候选区域是什么尺寸或者任意长宽比,我们将区域放入无缝的边框内变形到希望的尺寸。变形之前,先放大紧边框以便在新的变形后的尺寸上保证变形图像上下文的p的像素都围绕在原始框上(我们使用p=16)。图2展示了一些变形训练图像的例子。其它的变形方法可以参考附录A。

图2:VOC 2007训练集部分图像变形成训练样本。

2.2. Test-time detection

At test time, we run selective search on the test image to extract around 2000 region proposals (we use selective search’s “fast mode” in all experiments). We warp each proposal and forward propagate it through the CNN in order to compute features. Then, for each class, we score each extracted feature vector using the SVM trained for that class. Given all scored regions in an image, we apply a greedy non-maximum suppression (for each class independently) that rejects a region if it has an intersection-overunion (IoU) overlap with a higher scoring selected region larger than a learned threshold.

2.2 测试阶段的物体检测

在测试阶段,在测试图像上使用selective search提取2000个推荐区域(所有实验中我们使用了selective search的加速版本)。对每一个推荐区域变形后通过CNN前向传播计算出特征。然后我们使用训练过特定类别的SVM给特征向量中的每个类别单独打分。然后给出一张图像中所有的打分区域,然后使用贪婪非最大值抑制算法(每个类别是独立进行的)舍弃那些与大于学习阈值更高得分的推荐区域有重叠(intersection-overunion (IoU))的区域。

Run-time analysis. Two properties make detection efficient. First, all CNN parameters are shared across all categories. Second, the feature vectors computed by the CNN are low-dimensional when compared to other common approaches, such as spatial pyramids with bag-of-visual-word encodings. The features used in the UVA detection system [39], for example, are two orders of magnitude larger than ours (360k vs. 4k-dimensional).

运行时间分析。两个特性让检测变得很高效。首先,所有的CNN参数都是跨类别共享的。其次,通过CNN计算的特征向量维度相比其他常见方法(比如spatial pyramids with bag-of-visual-word encodings)计算特征的维度是很低的。例如,UVA检测系统[39]中使用的特征比我们的要多两个数量级(360k维相比于4k维)。

The result of such sharing is that the time spent computing region proposals and features (13s/image on a GPU or 53s/image on a CPU) is amortized over all classes. The only class-specific computations are dot products between features and SVM weights and non-maximum suppression. In practice, all dot products for an image are batched into a single matrix-matrix product. The feature matrix is typically 2000*4096 and the SVM weight matrix is 4096*N, where N is the number of classes.

这种共享的结果就是计算推荐区域和特征的耗时可以分摊到所有类别上(GPU:每张图13s,CPU:每张图53s)。唯一和类别有关的计算都是特征和SVM权重以及最大化抑制之间的点积。实际应用中,所有的点积都可以批量化成一个单独矩阵间运算。特征矩阵的通常是2000×4096(译者注:通常产生2000个左右的推荐区域,每个推荐区域经过CNN产生4096维的向量),SVM权重的矩阵是4096*N,其中N是类别数目。

This analysis shows that R-CNN can scale to thousands of object classes without resorting to approximate techniques, such as hashing. Even if there were 100k classes, the resulting matrix multiplication takes only 10 seconds on a modern multi-core CPU. This efficiency is not merely the result of using region proposals and shared features. The UVA system, due to its high-dimensional features, would be two orders of magnitude slower while requiring 134GB of memory just to store 100k linear predictors, compared to just 1.5GB for our lower-dimensional features.

分析表明R-CNN可以扩展到上千个类别,而不需要诉诸近似技术,例如hashing。即使有10万个类别,计算矩阵乘法在现代多核CPU上只需要10s而已。这种高效不仅仅是因为使用了区域推荐和共享的特征。UVA系统由于其高维特征需要134GB的内存来存10万个线性预测器,而我们只要1.5GB,这使得UVA系统比我们慢了两个数量级。

It is also interesting to contrast R-CNN with the recent work from Dean et al. on scalable detection using DPMs and hashing [8]. They report a mAP of around 16% on VOC 2007 at a run-time of 5 minutes per image when introducing 10k distractor classes. With our approach, 10k detectors can run in about a minute on a CPU, and because no approximations are made mAP would remain at 59% (Section 3.2).

更有趣的是R-CCN和最近Dean等使用了DPMs和hashing[8]进行大规模检测任务对比。当他们用了1万个干扰类时每五分钟可以处理一张图片,在VOC2007上的mAP能达到16%。我们的方法1万个检测器由于没有做近似,可以在CPU上一分钟跑完,达到59%的mAP(3.2节)。

2.3. Training

Supervised pre-training. We discriminatively pre-trained the CNN on a large auxiliary dataset (ILSVRC2012 classification) using image-level annotations only (bounding-box labels are not available for this data). Pre-training was performed using the open source Caffe CNN library [24]. In brief, our CNN nearly matches the performance of Krizhevsky et al. [25], obtaining a top-1 error rate 2.2 percentage points higher on the ILSVRC2012 classification validation set. This discrepancy is due to simplifications in the training process.

2.3 训练

有监督预训练。我们仅使用图像级注释的大型辅助数据集(ILSVRC2012分类任务)上有区别地预训练了CNN(该数据集没有边界框标签)。预训练采用了开源的Caffe CNN库[24]。简单地说,我们的CNN十分接近krizhevsky等人网络的性能,在ILSVRC2012分类验证集上top-1错误率比他们高2.2%。差异主要来自于训练过程的简化。

Domain-specific fine-tuning. To adapt our CNN to the new task (detection) and the new domain (warped proposal windows), we continue stochastic gradient descent (SGD) training of the CNN parameters using only warped region proposals. Aside from replacing the CNN’s ImageNet-specific 1000-way classification layer with a randomly initialized (N+1)-way classification layer (where N is the number of object classes, plus 1 for background), the CNN architecture is unchanged. For VOC, N = 20 and for ILSVRC2013, N = 200. We treat all region proposals with >= 0.5 IoU overlap with a ground-truth box as positives for that box’s class and the rest as negatives. We start SGD at a learning rate of 0.001 (1/10th of the initial pre-training rate), which allows fine-tuning to make progress while not clobbering the initialization. In each SGD iteration, we uniformly sample 32 positive windows (over all classes) and 96 background windows to construct a mini-batch of size 128. We bias the sampling towards positive windows because they are extremely rare compared to background.

特定领域的参数fine-tune。为了让我们的CNN适应新的任务(即检测任务)和新的领域(变形后的推荐窗口),我们只使用变形后的推荐区域对CNN参数进行SGD训练。我们替掉了ImageNet特定的1000类分类层,换成了一个随机初始化的(N+1)类的分类层(其中N是目标类别数目,1代表背景),而卷积层架构没有改变。对于VOC,N=20,对于ILSVRC2013,N=200。对于所有的推荐区域,如果与真实标注框的IoU重叠大于等于0.5就认为该推荐区域代表的类是正例,否则就是负例。SGD初始学习率为0.001(初始化预训练时的十分之一),这使得调优得以有效进行而不会破坏初始化的成果。每轮SGD迭代,我们统一使用32个正例窗口(跨所有类别)和96个背景窗口组成大小为128的mini-batch。另外我们倾向于采样正例窗口,因为和背景相比他们很稀少。

Object category classifiers. Consider training a binary classifier to detect cars. It’s clear that an image region tightly enclosing a car should be a positive example. Similarly, it’s clear that a background region, which has nothing to do with cars, should be a negative example. Less clear is how to label a region that partially overlaps a car. We resolve this issue with an IoU overlap threshold, below which regions are defined as negatives. The overlap threshold, 0:3, was selected by a grid search over f0; 0:1; : : : ; 0:5g on a validation set. We found that selecting this threshold carefully is important. Setting it to 0:5, as in [39], decreased mAP by 5 points. Similarly, setting it to 0 decreased mAP by 4 points. Positive examples are defined simply to be the ground-truth bounding boxes for each class.

目标类别分类器。思考一下检测汽车的二分类器。很显然,一个图像区域紧紧包裹着一辆汽车应该就是正例。相似的,背景区域应该看不到任何汽车,就是负例。较为不明晰的是怎样标注哪些只和汽车部分重叠的区域。我们使用IoU重叠阈值来解决这个问题,低于这个阈值的就是负例。这个阈值我们选择了0.3,是在验证集上基于{0, 0.1, … 0.5}通过网格搜索得到的。我们发现认真选择这个阈值很重要。如果设置为0.5,如[39],可以提升mAP5个点,设置为0,就会降低4个点。正例就严格的是标注的框。

Once features are extracted and training labels are applied, we optimize one linear SVM per class. Since the training data is too large to fit in memory, we adopt the standard hard negative mining method [17, 37]. Hard negative mining converges quickly and in practice mAP stops increasing after only a single pass over all images.

一旦特征提取出来,就应用标签数据,然后优化每个类的线性SVM。由于训练数据太大,难以装进内存,我们选择了标准的hard negative mining method(高难负例挖掘算法?用途就是正负例数量不均衡,而负例分散代表性又不够的问题)[17, 37]。 高难负例挖掘算法收敛很快,实践中只要经过一轮mAP就可以基本停止增加了。

In Appendix B we discuss why the positive and negative examples are defined differently in fine-tuning versus SVM training. We also discuss the trade-offs involved in training detection SVMs rather than simply using the outputs from the final softmax layer of the fine-tuned CNN.

附录B中,我们讨论了,正例和负例在调优和SVM训练两个阶段的为什么定义得如此不同。我们也会讨论训练检测SVM的平衡问题,而不只是简单地使用来自调优后的CNN的最终softmax层的输出。

2.4. Results on PASCAL VOC 2010-12

Following the PASCAL VOC best practices [15], we validated all design decisions and hyperparameters on the VOC 2007 dataset (Section 3.2). For final results on the VOC 2010-12 datasets, we fine-tuned the CNN on VOC 2012 train and optimized our detection SVMs on VOC 2012 trainval. We submitted test results to the evaluation server only once for each of the two major algorithm variants (with and without bounding-box regression).

2.4. 在PASCAL VOC 2010-12上的结果

按照PASCAL VOC的最佳实践步骤,我们在VOC2007的数据集上验证了我们所有的设计思想和参数处理,对于在2010-2012数据库中,我们在VOC2012上训练和优化了我们的支持向量机检测器,我们一种方法(带BBox和不带BBox)只提交了一次评估服务器。

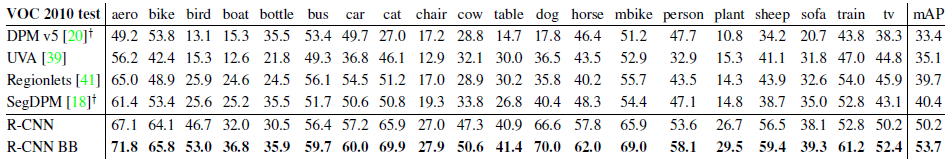

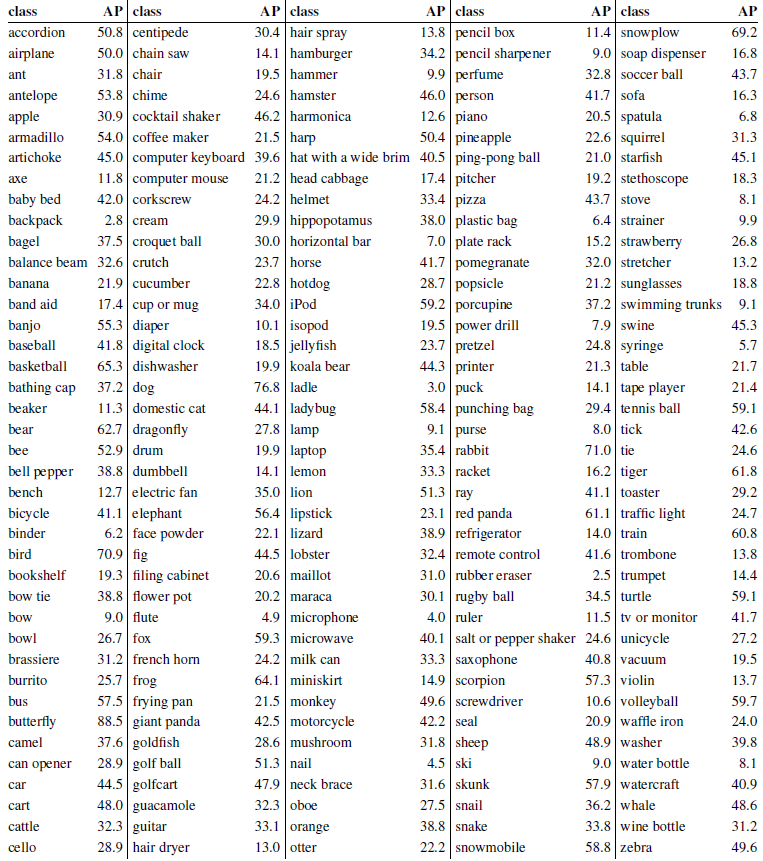

Table 1 shows complete results on VOC 2010. We compare our method against four strong baselines, including SegDPM [18], which combines DPM detectors with the output of a semantic segmentation system [4] and uses additional inter-detector context and image-classifier rescoring. The most germane comparison is to the UVA system from Uijlings et al. [39], since our systems use the same region proposal algorithm. To classify regions, their method builds a four-level spatial pyramid and populates it with densely sampled SIFT, Extended OpponentSIFT, and RGBSIFT descriptors, each vector quantized with 4000-word codebooks. Classification is performed with a histogram intersection kernel SVM. Compared to their multi-feature, non-linear kernel SVM approach, we achieve a large improvement in mAP, from 35.1% to 53.7% mAP, while also being much faster (Section 2.2). Our method achieves similar performance (53.3% mAP) on VOC 2011/12 test.

Table 1: Detection average precision (%) on VOC 2010 test. R-CNN is most directly comparable to UVA and Regionlets since all methods use selective search region proposals. Bounding-box regression (BB) is described in Section C. At publication time, SegDPM was the top-performer on the PASCAL VOC leaderboard. yDPM and SegDPM use context rescoring not used by the other methods.

表1展示了(本方法)在VOC2010的结果,我们将自己的方法同四种先进基准方法作对比,其中包括SegDPM,这种方法将DPM检测子与语义分割系统相结合并且使用附加的内核的环境和图片检测器打分。更加恰当的比较是同Uijling的UVA系统比较,因为我们的方法同样基于候选框算法。对于候选区域的分类,他们通过构建一个四层的金字塔,并且将之与SIFT模板结合,SIFT为扩展的OpponentSIFT和RGB-SIFT描述子,每一个向量被量化为4000词的codebook。分类任务由一个交叉核的支持向量机承担,对比这种方法的多特征方法,非线性内核的SVM方法,我们在mAP达到一个更大的提升,从35.1%提升至53.7%,而且速度更快。我们的方法在VOC2011/2012数据达到了相似的检测效果mAP53.3%。

表1:VOC 2010测试集上的检测平均精度(%)。R-CNN与UVA和Regionlet直接比较,因为所有方法都使用selective search的region proposals方法。Bounding-box回归(BB)在C节中进行了描述。本文发布时,SegDPM是PASCAL VOC排行榜上性能最优的算法。DPM和SegDPM使用其他方法未使用的context rescoring。

2.5. Results on ILSVRC2013 detection

We ran R-CNN on the 200-class ILSVRC2013 detection dataset using the same system hyperparameters that we used for PASCAL VOC. We followed the same protocol of submitting test results to the ILSVRC2013 evaluation server only twice, once with and once without bounding-box regression.

2.5. 在ILSVRC2013上检测任务结果

我们使用与用于PASCAL VOC相同的系统超参数在200类ILSVRC2013检测数据集上运行R-CNN。我们遵循相同的协议,仅将测试结果提交给ILSVRC2013评估服务器两次,一次带有边界框回归,一次带没有边界框回归。

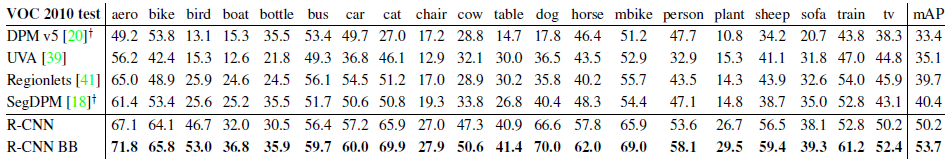

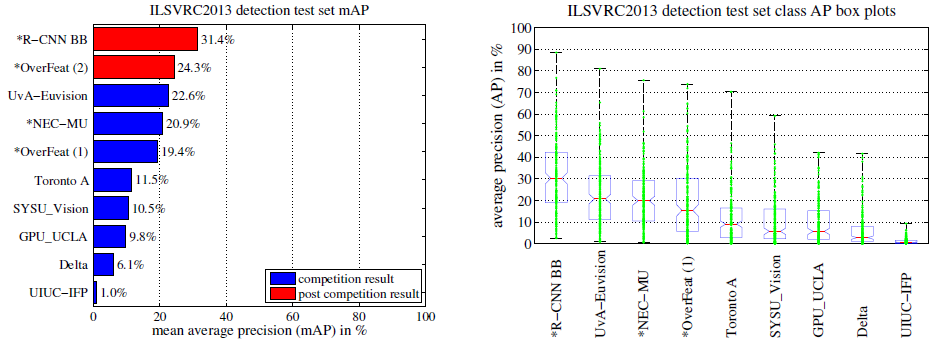

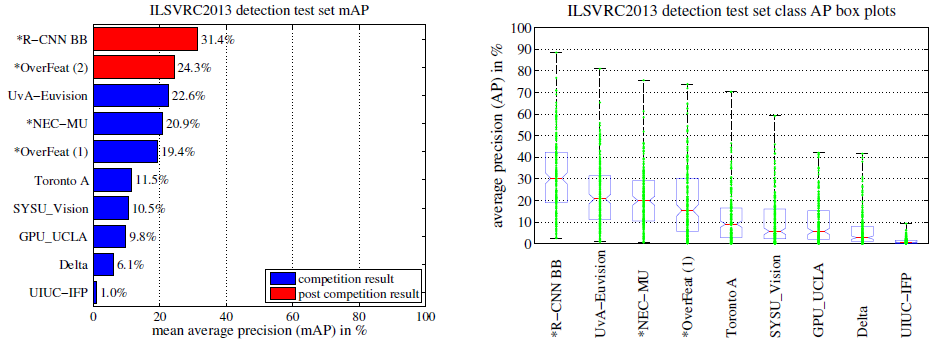

Figure 3 compares R-CNN to the entries in the ILSVRC 2013 competition and to the post-competition OverFeat result [34]. R-CNN achieves a mAP of 31.4%, which is significantly ahead of the second-best result of 24.3% from OverFeat. To give a sense of the AP distribution over classes, box plots are also presented and a table of perclass APs follows at the end of the paper in Table 8. Most of the competing submissions (OverFeat, NEC-MU, UvAEuvision, Toronto A, and UIUC-IFP) used convolutional neural networks, indicating that there is significant nuance in how CNNs can be applied to object detection, leading to greatly varying outcomes. In Section 4, we give an overview of the ILSVRC2013 detection dataset and provide details about choices that we made when running R-CNN on it.

Figure 3: (Left) Mean average precision on the ILSVRC2013 detection test set. Methods preceeded by * use outside training data (images and labels from the ILSVRC classification dataset in all cases). (Right) Box plots for the 200 average precision values per method. A box plot for the post-competition OverFeat result is not shown because per-class APs are not yet available (per-class APs for R-CNN are in Table 8 and also included in the tech report source uploaded to arXiv.org; see R-CNN-ILSVRC2013-APs.txt). The red line marks the median AP, the box bottom and top are the 25th and 75th percentiles. The whiskers extend to the min and max AP of each method. Each AP is plotted as a green dot over the whiskers (best viewed digitally with zoom).

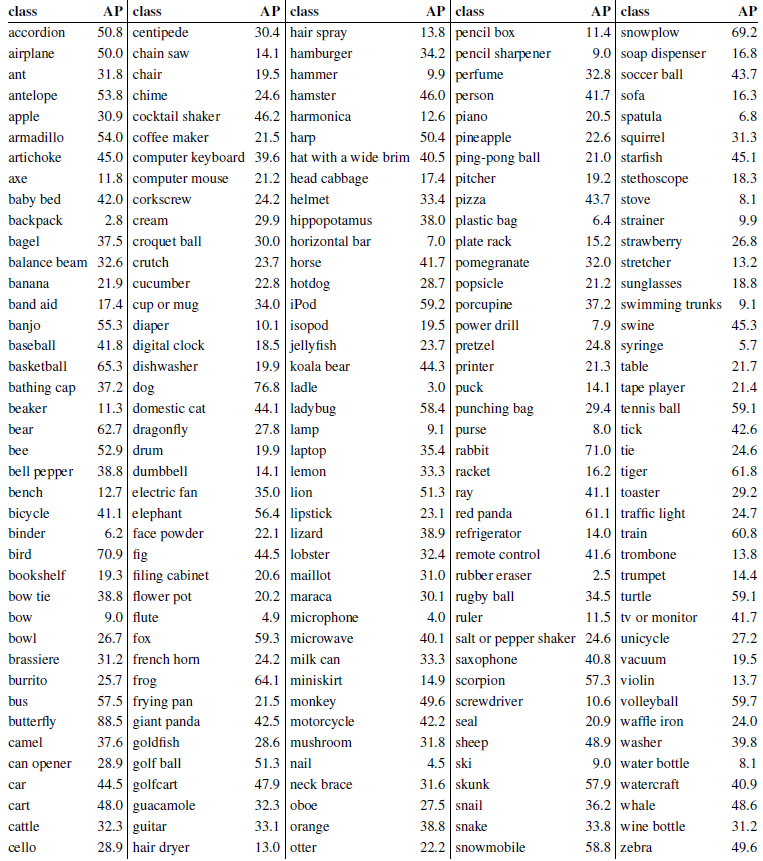

Table 8: Per-class average precision (%) on the ILSVRC2013 detection test set.

图3将R-CNN与ILSVRC 2013竞赛中的参赛作品以及竞赛后的OverFeat结果进行了比较[34]。 R-CNN的mAP达到31.4%,大大超过了OverFeat的第二佳结果24.3%。 为了让您了解AP在各个类别中的分布情况,还提供了箱形图,并在表8的末尾列出了每个类别的AP。大多数竞争者(OverFeat,NEC-MU,UvAEuvision,Toronto A, 和UIUC-IFP)使用了卷积神经网络,这表明CNN如何应用于目标检测有很大的细微差别,导致结果差异很大。 在第4节中,我们概述了ILSVRC2013检测数据集,并提供了有关在其上运行R-CNN时所做选择的详细信息。

图3 :(左图)ILSVRC2013检测测试集的mAP。*开头的方法使用外部训练数据(所有方法都使用ILSVRC分类数据集中的图像和标签)。(右图)每种方法的200个平均精度值的箱形图。竞赛后的OverFeat结果的箱形图未显示,因为无法获得按类别的AP(R-CNN按类别的AP在表8中,并且也包含在上传到arXiv.org的技术报告资源中;详细见:R-CNN-ILSVRC2013-APs.txt)。红线标记AP的中位数,方框的底部和顶部分别是第25个和第75个百分点。whiskers扩展到每种方法的AP最小值和最大值。将每个AP绘制为whiskers上的绿点(最好通过电子版缩放进行查看)(译者注:需要将右图放大看,否则可能看不清楚)。

表8:ILSVRC 2013检测测试集上的每类平均精度(%)。

3. Visualization, ablation, and modes of error

3.1. Visualizing learned features

First-layer filters can be visualized directly and are easy to understand [25]. They capture oriented edges and opponent colors. Understanding the subsequent layers is more challenging. Zeiler and Fergus present a visually attractive deconvolutional approach in [42]. We propose a simple (and complementary) non-parametric method that directly shows what the network learned.

3. 可视化、消融研究和模型误差

3.1. 可视化学习到的特征

直接可视化第一层特征过滤器非常容易理解[25],它们主要捕获方向性边缘和对比色。难以理解的是后面的层。Zeiler and Fergus提出了一种可视化的很棒的反卷积方法[42]。我们则使用了一种简单的非参数化方法,直接展示网络学到的东西。这个想法是单一输出网络中一个特定单元(特征),然后把它当做一个正确类别的物体检测器来使用。

The idea is to single out a particular unit (feature) in the network and use it as if it were an object detector in its own right. That is, we compute the unit’s activations on a large set of held-out region proposals (about 10 million), sort the proposals from highest to lowest activation, perform nonmaximum suppression, and then display the top-scoring regions. Our method lets the selected unit “speak for itself” by showing exactly which inputs it fires on. We avoid averaging in order to see different visual modes and gain insight into the invariances computed by the unit.

方法是这样的,先计算所有抽取出来的推荐区域(大约1000万),计算每个区域所导致的对应单元的激活值,然后按激活值对这些区域进行排序,然后进行最大值抑制,最后展示分值最高的若干个区域。这个方法让被选中的单元在遇到他想激活的输入时“自己说话”。我们避免平均化是为了看到不同的视觉模式和深入观察单元计算出来的不变性。

We visualize units from layer pool5, which is the maxpooled output of the network’s fifth and final convolutional layer. The pool5 feature map is 6 6 256 = 9216-dimensional. Ignoring boundary effects, each pool5 unit has a receptive field of 195195 pixels in the original 227227 pixel input. A central pool5 unit has a nearly global view, while one near the edge has a smaller, clipped support.

我们可视化了第五层的池化层pool5,是卷积网络的最后一层,feature_map(卷积核和特征数的总称)的大小是6 x 6 x 256 = 9216维。忽略边界效应,每个pool5单元拥有195×195的感受野,输入是227×227。pool5中间的单元,几乎是一个全局视角,而边缘的单元有较小的带裁切的支持。

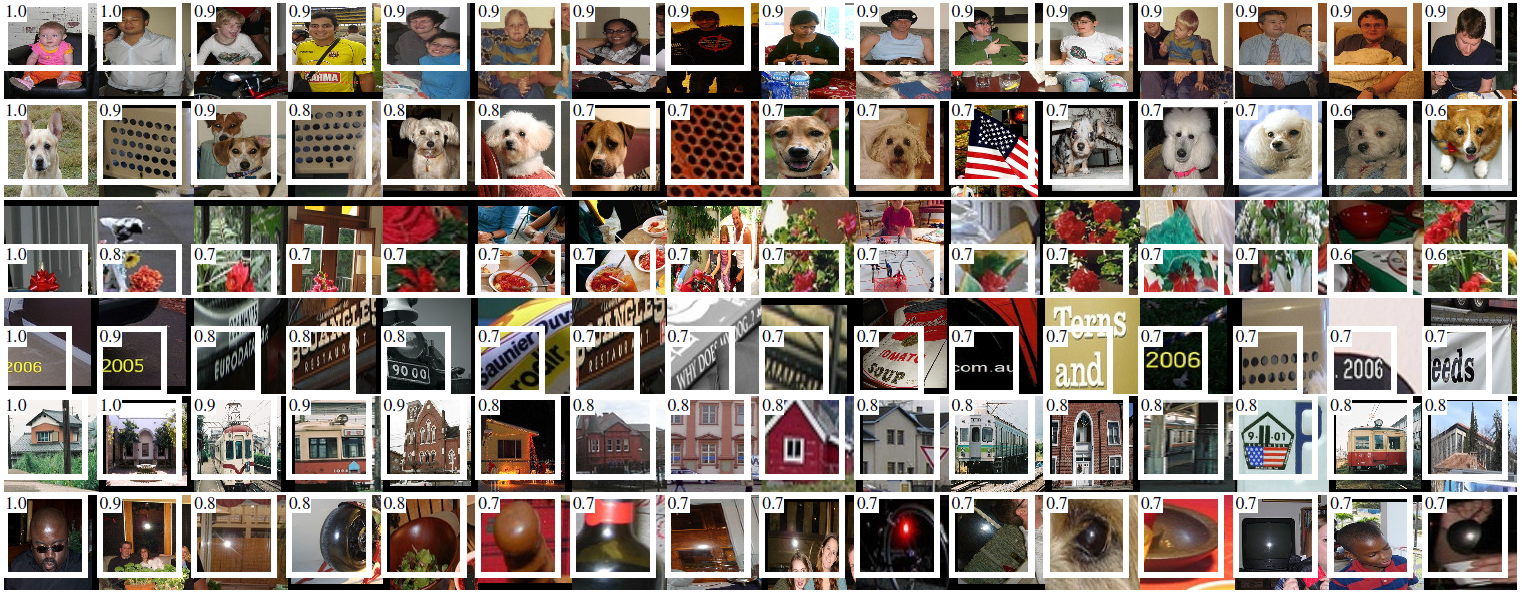

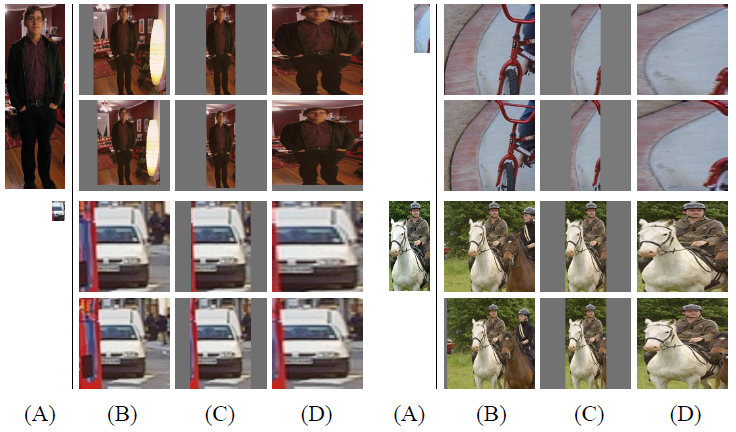

Each row in Figure 4 displays the top 16 activations for a pool5 unit from a CNN that we fine-tuned on VOC 2007 trainval. Six of the 256 functionally unique units are visualized (Appendix D includes more). These units were selected to show a representative sample of what the network learns. In the second row, we see a unit that fires on dog faces and dot arrays. The unit corresponding to the third row is a red blob detector. There are also detectors for human faces and more abstract patterns such as text and triangular structures with windows. The network appears to learn a representation that combines a small number of class-tuned features together with a distributed representation of shape, texture, color, and material properties. The subsequent fully connected layer fc6 has the ability to model a large set of compositions of these rich features.

Figure 4: Top regions for six pool5 units. Receptive fields and activation values are drawn in white. Some units are aligned to concepts, such as people (row 1) or text (4). Other units capture texture and material properties, such as dot arrays (2) and specular reflections (6).

图4的每一行显示了对于一个pool5单元的最高16个激活区域情况,这个实例来自于VOC 2007上我们调优的CNN,这里只展示了256个单元中的6个(附录D包含更多)。我们看看这些单元都学到了什么。第二行,有一个单元看到狗和斑点的时候就会激活,第三行对应红斑点,还有人脸,当然还有一些抽象的模式,比如文字和带窗户的三角结构。这个网络似乎学到了一些类别调优相关的特征,这些特征都是形状、纹理、颜色和材质特性的分布式表示。而后续的fc6层则对这些丰富的特征建立大量的组合来表达各种不同的事物。

图4:六个pool5神经元的顶部区域。感受野和激活值以白线绘制。有些神经元与概念保持一致,例如人(第1行)或文本(第4行)。 其他单元捕获纹理和材料属性,例如点阵列(第2行)和镜面反射(第6行)。

3.2. Ablation studies

Performance layer-by-layer, without fine-tuning. To understand which layers are critical for detection performance, we analyzed results on the VOC 2007 dataset for each of the CNN’s last three layers. Layer pool5 was briefly described in Section 3.1. The final two layers are summarized below.

3.2. 消融研究

没有调优的各层性能。为了理解哪一层对于检测的性能十分重要,我们分析了CNN最后三层的每一层在VOC2007上面的结果。Pool5在3.1中做过剪短的表述。最后两层下面来总结一下。

Layer fc6 is fully connected to pool5. To compute features, it multiplies a 40969216 weight matrix by the pool5 feature map (reshaped as a 9216-dimensional vector) and then adds a vector of biases. This intermediate vector is component-wise half-wave rectified (x max(0; x)).

fc6是一个与pool5连接的全连接层。为了计算特征,它和pool5的feature map(reshape成一个9216维度的向量)做了一个4096×9216的矩阵乘法,并添加了一个bias向量。中间的向量是逐个组件的半波整流(component-wise half-wave rectified)ReLU(x <– max(0,="" x))。<="" p="">

Layer fc7 is the final layer of the network. It is implemented by multiplying the features computed by fc6 by a 4096 4096 weight matrix, and similarly adding a vector of biases and applying half-wave rectification.

fc7是网络的最后一层。跟fc6之间通过一个4096×4096的矩阵相乘。也是添加了bias向量和应用了ReLU。

We start by looking at results from the CNN without fine-tuning on PASCAL, i.e. all CNN parameters were pre-trained on ILSVRC 2012 only. Analyzing performance layer-by-layer (Table 2 rows 1-3) reveals that features from fc7 generalize worse than features from fc6. This means that 29%, or about 16.8 million, of the CNN’s parameters can be removed without degrading mAP. More surprising is that removing both fc7 and fc6 produces quite good results even though pool5 features are computed using only 6% of the CNN’s parameters. Much of the CNN’s representational power comes from its convolutional layers, rather than from the much larger densely connected layers. This finding suggests potential utility in computing a dense feature map, in the sense of HOG, of an arbitrary-sized image by using only the convolutional layers of the CNN. This representation would enable experimentation with sliding-window detectors, including DPM, on top of pool5 features.

我们先来看看没有调优的CNN在PASCAL上的表现,没有调优是指所有的CNN参数就是在ILSVRC2012上训练后的状态。分析每一层的性能显示来自fc7的特征泛化能力不如fc6的特征。这意味29%的CNN参数,也就是1680万的参数可以移除掉,而且不影响mAP。更多的惊喜是即使同时移除fc6和fc7,仅仅使用pool5的特征,只使用CNN参数的6%也能有非常好的结果。可见CNN的主要表达力来自于卷积层,而不是全连接层。这个发现提醒我们也许可以在计算一个任意尺寸的图片的稠密特征图(dense feature map)时使仅仅使用CNN的卷积层。这种表示可以直接在pool5的特征上进行滑动窗口检测的实验。

Performance layer-by-layer, with fine-tuning. We now look at results from our CNN after having fine-tuned its parameters on VOC 2007 trainval. The improvement is striking (Table 2 rows 4-6): fine-tuning increases mAP by 8.0 percentage points to 54.2%. The boost from fine-tuning is much larger for fc6 and fc7 than for pool5, which suggests that the pool5 features learned from ImageNet are general and that most of the improvement is gained from learning domain-specific non-linear classifiers on top of them.

调优后的各层性能。我们来看看调优后在VOC2007上的结果表现。提升非常明显,mAP提升了8个百分点,达到了54.2%。fc6和fc7的提升明显优于pool5,这说明pool5从ImageNet学习的特征通用性很强,在它之上层的大部分提升主要是在学习领域相关的非线性分类器。

Comparison to recent feature learning methods. Relatively few feature learning methods have been tried on PASCAL VOC detection. We look at two recent approaches that build on deformable part models. For reference, we also include results for the standard HOG-based DPM [20].

对比其他特征学习方法。相当少的特征学习方法应用与VOC数据集。我们找到的两个最近的方法都是基于固定探测模型。为了参照的需要,我们也将基于基本HOG的DFM方法的结果加入比较。

The first DPM feature learning method, DPM ST [28], augments HOG features with histograms of “sketch token” probabilities. Intuitively, a sketch token is a tight distribution of contours passing through the center of an image patch. Sketch token probabilities are computed at each pixel by a random forest that was trained to classify 3535 pixel patches into one of 150 sketch tokens or background.

第一个DPM的特征学习方法,DPM ST,将HOG中加入略图表征的概率直方图。直观的,一个略图就是通过图片中心轮廓的狭小分布。略图表征概率通过一个被训练出来的分类35*35像素路径为一个150略图表征的的随机森林方法计算。

The second method, DPM HSC [31], replaces HOG with histograms of sparse codes (HSC). To compute an HSC, sparse code activations are solved for at each pixel using a learned dictionary of 100 7 7 pixel (grayscale) atoms. The resulting activations are rectified in three ways (full and both half-waves), spatially pooled, unit `2 normalized, and then power transformed (x sign(x)jxj).

第二个方法,DPM HSC,将HOG特征替换成一个稀疏编码的直方图。为了计算HSC(HSC的介绍略)

All R-CNN variants strongly outperform the three DPM baselines (Table 2 rows 8-10), including the two that use feature learning. Compared to the latest version of DPM, which uses only HOG features, our mAP is more than 20 percentage points higher: 54.2% vs. 33.7%—a 61% relative improvement. The combination of HOG and sketch tokens yields 2.5 mAP points over HOG alone, while HSC improves over HOG by 4 mAP points (when compared internally to their private DPM baselines—both use nonpublic implementations of DPM that underperform the open source version [20]). These methods achieve mAPs of 29.1% and 34.3%, respectively.

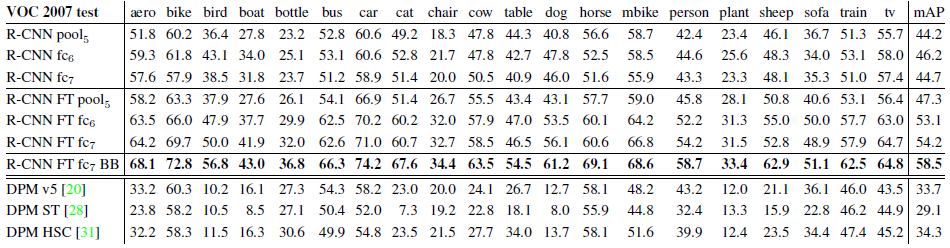

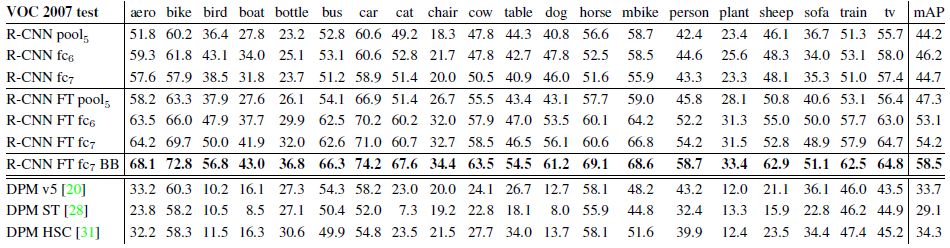

Table 2: Detection average precision (%) on VOC 2007 test. Rows 1-3 show R-CNN performance without fine-tuning. Rows 4-6 show results for the CNN pre-trained on ILSVRC 2012 and then fine-tuned (FT) on VOC 2007 trainval. Row 7 includes a simple bounding-box regression (BB) stage that reduces localization errors (Section C). Rows 8-10 present DPM methods as a strong baseline. The first uses only HOG, while the next two use different feature learning approaches to augment or replace HOG.

所有的RCNN变种算法都要强于这三个DPM方法(表2 8-10行),包括两种特征学习的方法(特征学习不同于普通的HOG方法?)与最新版本的DPM方法比较,我们的mAP要多大约20个百分点,61%的相对提升。略图表征与HOG现结合的方法比单纯HOG的性能高出2.5%,而HSC的方法相对于HOG提升四个百分点(当内在的与他们自己的DPM基准比价,全都是用的非公共DPM执行,这低于开源版本)。这些方法分别达到了29.1%和34.3%。

表2:VOC 2007测试集上的检测平均精度(%)。第1-3行显示了没有进行fine-tuning的R-CNN性能。第4-6行显示了在ILSVRC 2012上进行了预训练并在VOC 2007上进行了fine-tuning(FT)的CNN的结果。第7行包括一个简单的bounding-box回归(BB)阶段,可减少定位错误(详见C节)。第8-10行显示将DPM方法作为强baseline的结果。前一种仅使用HOG,而后两种使用不同的特征学习方法来增强或替代HOG。

3.3. Network architectures

Most results in this paper use the network architecture from Krizhevsky et al. [25]. However, we have found that the choice of architecture has a large effect on R-CNN detection performance. In Table 3 we show results on VOC 2007 test using the 16-layer deep network recently proposed by Simonyan and Zisserman [43]. This network was one of the top performers in the recent ILSVRC 2014 classification challenge. The network has a homogeneous structure consisting of 13 layers of 3 3 convolution kernels, with five max pooling layers interspersed, and topped with three fully-connected layers. We refer to this network as “O-Net” for OxfordNet and the baseline as “T-Net” for TorontoNet.

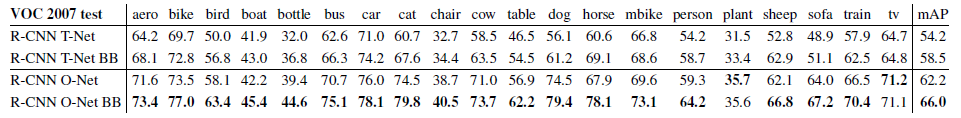

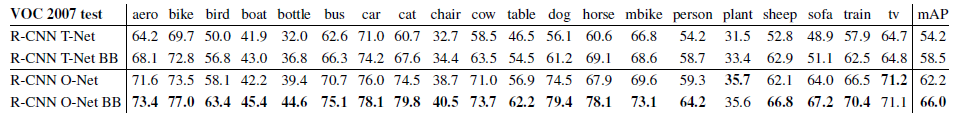

Table 3: Detection average precision (%) on VOC 2007 test for two different CNN architectures. The first two rows are results from Table 2 using Krizhevsky et al.’s architecture (T-Net). Rows three and four use the recently proposed 16-layer architecture from Simonyan and Zisserman (O-Net) [43].

3.3. 网络架构

本文中的大部分结果所采用的架构都来自于Krizhevsky等人的工作[25]。然后我们也发现架构的选择对于R-CNN的检测性能会有很大的影响。表3中我们展示了VOC2007测试时采用了16层的深度网络,由Simonyan和Zisserman[43]刚刚提出来。这个网络在ILSVRC2014分类挑战上是最佳表现。这个网络采用了完全同构的13层3×3卷积核,中间穿插了5个最大池化层,顶部有三个全连接层。我们称这个网络为O-Net表示OxfordNet,将我们的基准网络称为T-Net表示TorontoNet。

表3:两种不同CNN架构在VOC 2007测试集上的检测平均精度(%)。前两行源于表2中使用Krizhevsky等人架构(T-Net)的结果。第三和第四行使用Simonyan和Zisserman(O-Net)[43]最近提出的16层架构。

To use O-Net in R-CNN, we downloaded the publicly available pre-trained network weights for the VGG ILSVRC 16 layers model from the Caffe Model Zoo.1 We then fine-tuned the network using the same protocol as we used for T-Net. The only difference was to use smaller minibatches (24 examples) as required in order to fit within GPU memory. The results in Table 3 show that RCNN with O-Net substantially outperforms R-CNN with TNet, increasing mAP from 58.5% to 66.0%. However there is a considerable drawback in terms of compute time, with the forward pass of O-Net taking roughly 7 times longer than T-Net.

为了使用O-Net,我们从Caffe模型库中下载了他们训练好的权重VGG_ILSVRC_16_layers。然后使用和T-Net上一样的操作过程进行调优。唯一的不同是使用了更小的Batch Size(24),主要是为了适应GPU的内存。表3中的结果显示使用O-Net的R-CNN表现优越,将mAP从58.5%提升到了66.0%。然后它有个明显的缺陷就是计算耗时。O-Net的前向传播耗时大概是T-Net的7倍。

3.4. Detection error analysis

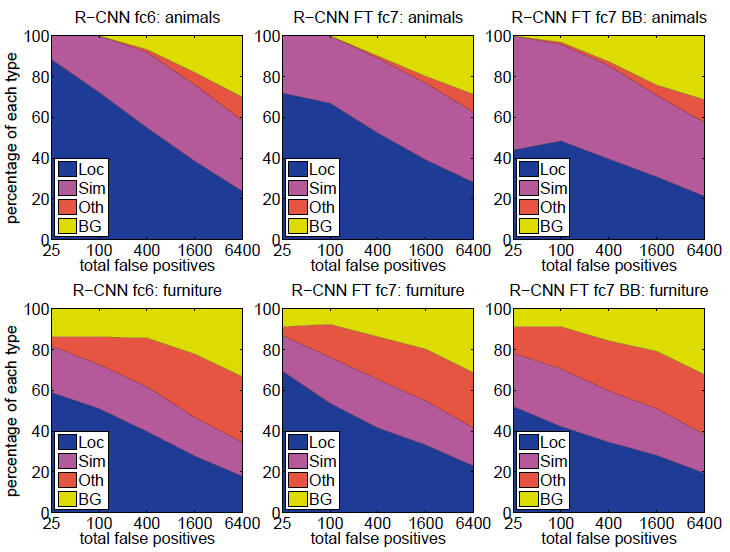

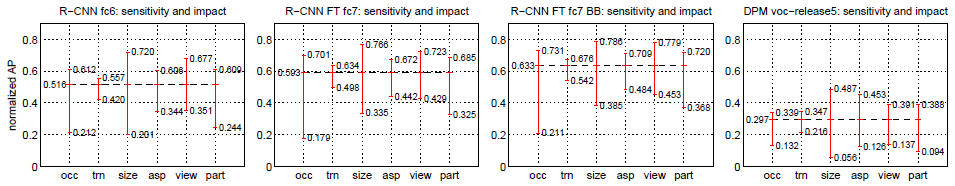

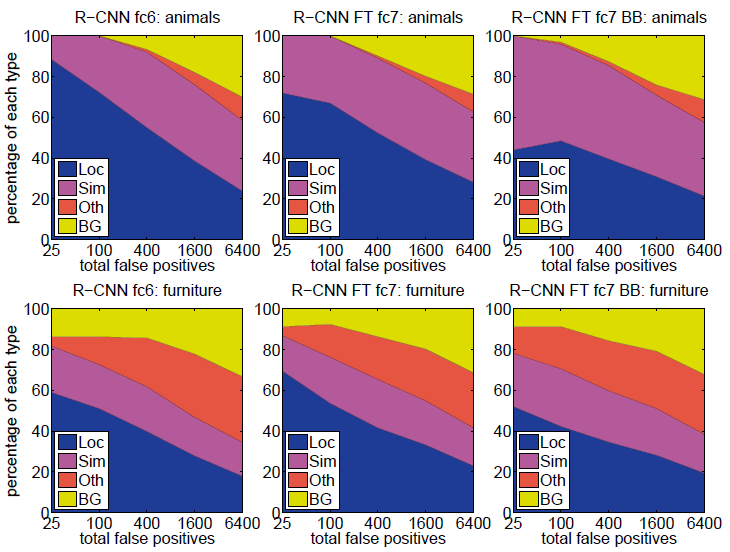

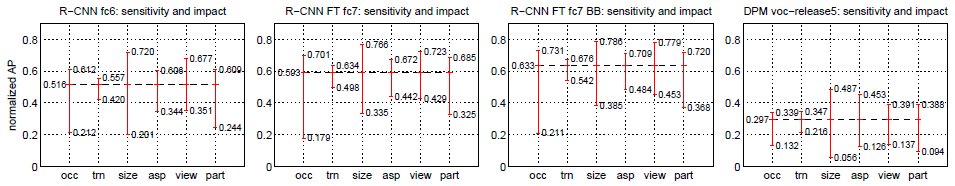

We applied the excellent detection analysis tool from Hoiem et al. [23] in order to reveal our method’s error modes, understand how fine-tuning changes them, and to see how our error types compare with DPM. A full summary of the analysis tool is beyond the scope of this paper and we encourage readers to consult [23] to understand some finer details (such as “normalized AP”). Since the analysis is best absorbed in the context of the associated plots, we present the discussion within the captions of Figure 5 and Figure 6.

Figure 5: Distribution of top-ranked false positive (FP) types. Each plot shows the evolving distribution of FP types as more FPs are considered in order of decreasing score. Each FP is categorized into 1 of 4 types: Loc—poor localization (a detection with an IoU overlap with the correct class between 0.1 and 0.5, or a duplicate); Sim—confusion with a similar category; Oth—confusion with a dissimilar object category; BG—a FP that fired on background. Compared with DPM (see [23]), significantly more of our errors result from poor localization, rather than confusion with background or other object classes, indicating that the CNN features are much more discriminative than HOG. Loose localization likely results from our use of bottom-up region proposals and the positional invariance learned from pre-training the CNN for whole-image classification. Column three shows how our simple bounding-box regression method fixes many localization errors.

Figure 6: Sensitivity to object characteristics. Each plot shows the mean (over classes) normalized AP (see [23]) for the highest and lowest performing subsets within six different object characteristics (occlusion, truncation, bounding-box area, aspect ratio, viewpoint, part visibility). We show plots for our method (R-CNN) with and without fine-tuning (FT) and bounding-box regression (BB) as well as for DPM voc-release5. Overall, fine-tuning does not reduce sensitivity (the difference between max and min), but does substantially improve both the highest and lowest performing subsets for nearly all characteristics. This indicates that fine-tuning does more than simply improve the lowest performing subsets for aspect ratio and bounding-box area, as one might conjecture based on how we warp network inputs. Instead, fine-tuning improves robustness for all characteristics including occlusion, truncation, viewpoint, and part visibility.

3.4. 检测误差分析

为了揭示出我们方法的错误之处,我们使用Hoiem提出的优秀的检测分析工具,来理解fine-tune是怎样改变他们,并且观察相对于DPM方法,我们的错误形式(译者注:即错误的各种来源)。这个分析方法全部的介绍超出了本篇文章的范围,我们建议读者查阅文献23来了解更加详细的介绍(例如归一化AP的介绍),由于这些分析是不太有关联性,所以我们放在图4和图5的题注中讨论。

图5:排名最高的假阳性(FP)类型分布。每个图都显示了FP类型的演变分布,因为按照得分递减的顺序考虑了更多FP。每个FP分为以下4种类型:Loc——定位较差(检测结果与正确类别的IoU重叠在0.1和0.5之间,或重复);Sim——与相似类别混淆;Oth——与非相似类别混淆;BG——将背景当作检测目标的假阳性。与DPM相比(参见[23]),我们的错误明显更多是由于错误定位所致,而不是与背景或其他对象类别造成的混淆,这表明CNN特征比HOG具有更大的判别力。宽松的定位很可能是由于我们使用了自下而上的region proposals,以及从对神经网络进行整个图像分类的预训练中学到的位置不变性。第三列显示了我们的简单bounding-box回归方法如何解决许多定位错误。

图6:目标特性的敏感性。每个图都显示了六个不同目标特征(遮挡、截断、bounding-box区域、长宽比、viewpoint、部分可见性)子集性能的最高和最低均值(基于每个类别)标准化AP(详见[23])。我们展示了进行和没有进行fine-tuning(FT)、bounding-box回归(BB)以及DPM voc-release5的方法(R-CNN)的结果图。总体而言,fine-tuning没有降低灵敏度(最大值和最小值之间的差异),但会实质上改善几乎所有特性子集的最高和最低性能。这表明,fine-tuning不仅可以改善长宽比和bounding-box区域的性能最低的子集,还可以根据我们扭曲网络输入的方式来推测。相反,fine-tuning可以提高所有特性的鲁棒性,包括遮挡、截断、viewpoint,和部分可见性。

3.5. Bounding-box regression

Based on the error analysis, we implemented a simple method to reduce localization errors. Inspired by the bounding-box regression employed in DPM [17], we train a linear regression model to predict a new detection window given the pool5 features for a selective search region proposal. Full details are given in Appendix C. Results in Table 1, Table 2, and Figure 5 show that this simple approach fixes a large number of mislocalized detections, boosting mAP by 3 to 4 points.

3.5. Bounding-box回归

基于对误差的分析,我们使用了一种简单的方法减小定位误差。受到DPM[17]中使用的bounding-box回归的启发,我们训练了一个线性回归模型在给定一个选择区域的pool5特征时去预测一个新的检测窗口。详细的细节参考附录C。表1、表2和图5的结果说明这个简单的方法,改善了大量的错误定位检测结果,mAP提升了3-4个百分点。

3.6. Qualitative results

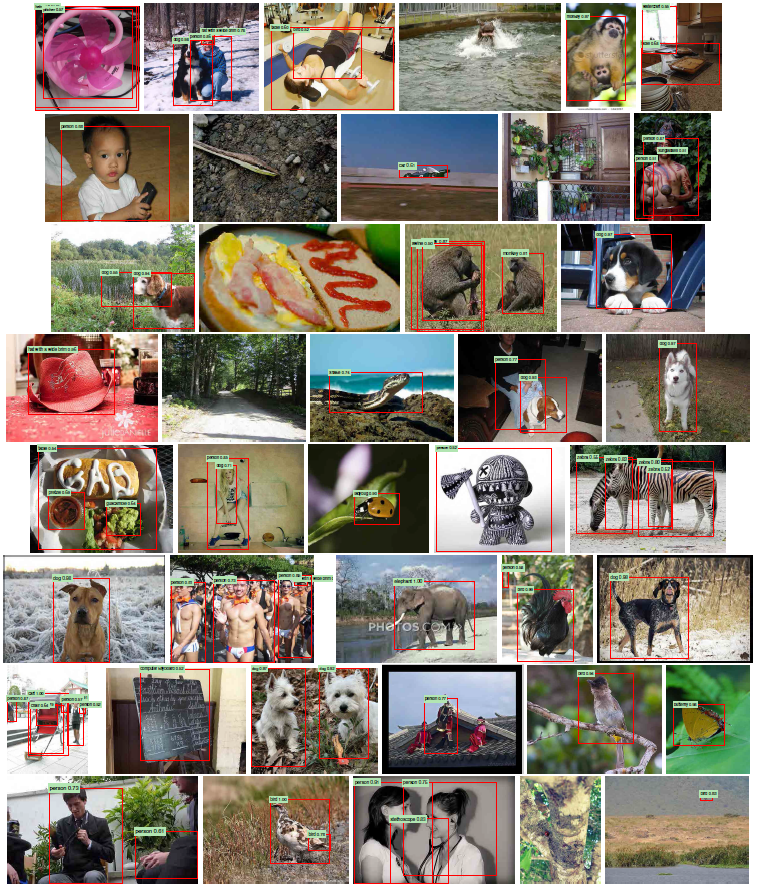

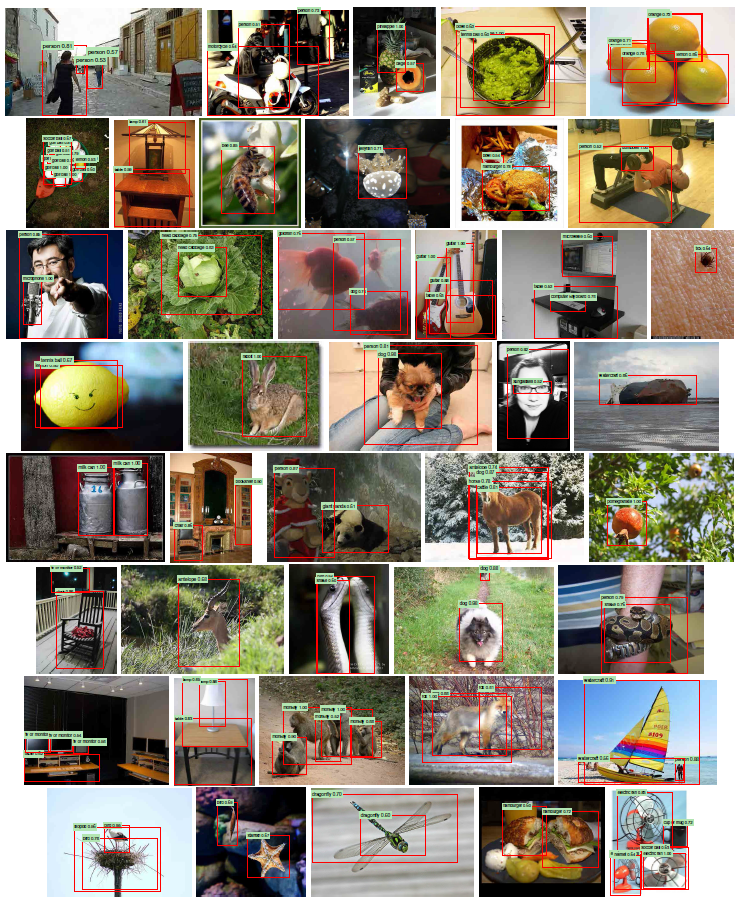

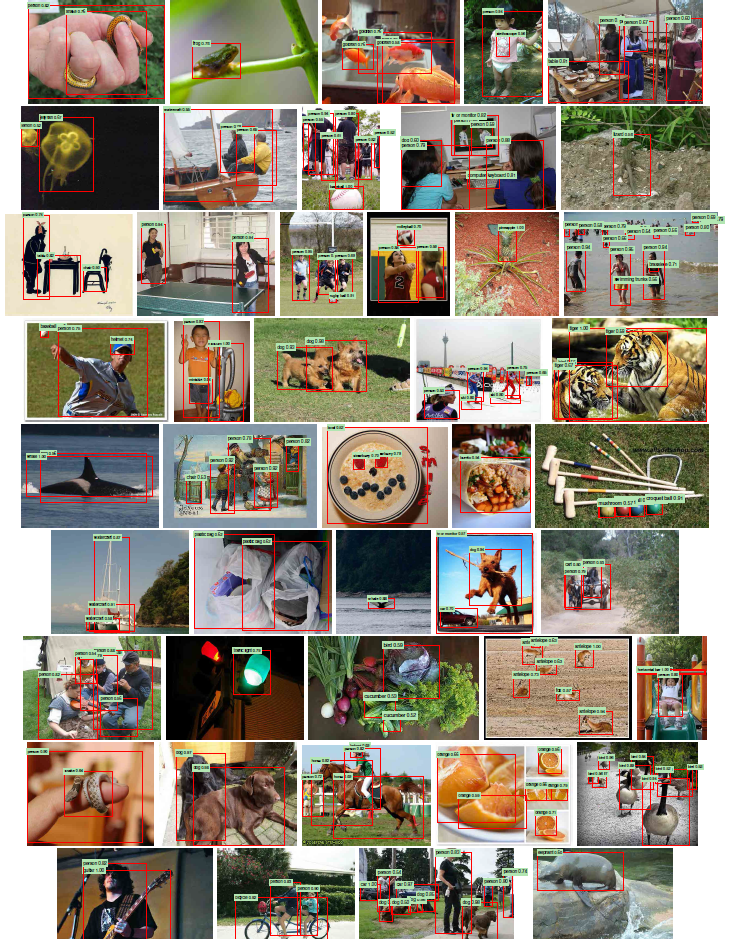

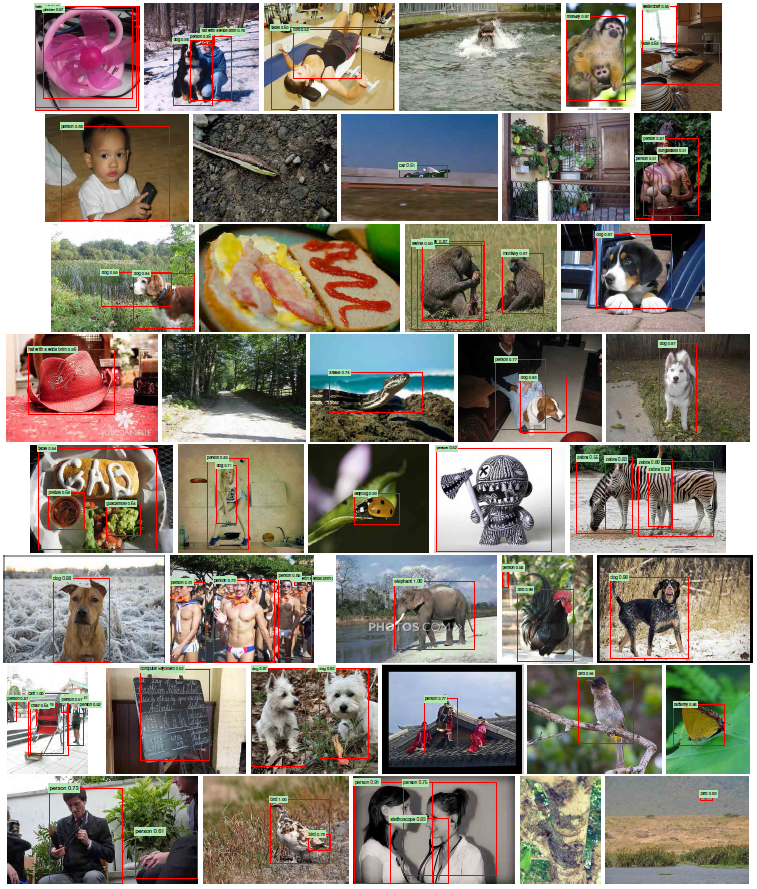

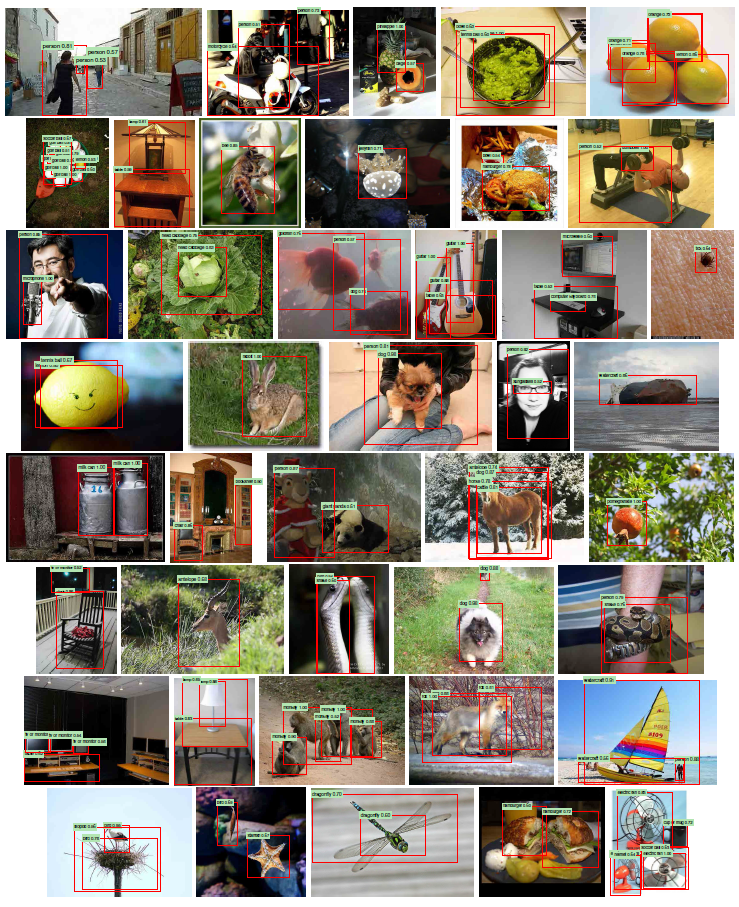

Qualitative detection results on ILSVRC2013 are presented in Figure 8 and Figure 9 at the end of the paper. Each image was sampled randomly from the val2 set and all detections from all detectors with a precision greater than 0.5 are shown. Note that these are not curated and give a realistic impression of the detectors in action. More qualitative results are presented in Figure 10 and Figure 11, but these have been curated. We selected each image because it contained interesting, surprising, or amusing results. Here, also, all detections at precision greater than 0.5 are shown.

Figure 8: Example detections on the val2 set from the configuration that achieved 31.0% mAP on val2. Each image was sampled randomly (these are not curated). All detections at precision greater than 0.5 are shown. Each detection is labeled with the predicted class and the precision value of that detection from the detector’s precision-recall curve. Viewing digitally with zoom is recommended.

Figure 9: More randomly selected examples. See Figure 8 caption for details. Viewing digitally with zoom is recommended.

Figure 10: Curated examples. Each image was selected because we found it impressive, surprising, interesting, or amusing. Viewing digitally with zoom is recommended.

Figure 11: More curated examples. See Figure 10 caption for details. Viewing digitally with zoom is recommended.

3.6. 定性结果

ILSVRC2013数据集上的定性检测结果如文章末尾图8和图9所示。从val2集中随机采样每个图像,并显示所有检测器的所有检测结果,其精度均大于0.5。请注意这些不是经过精心策划的,它们会给实际使用中的检测器以真实的印象。图10和图11给出了更多的定性结果,但是这些结果已经得到确认。我们选择每张图像是因为它包含有趣,令人惊讶或有趣的结果。此处,还显示了所有精度大于0.5的检测。

图8:通过在val2上达到31.0% mAP的配置对val2数据子集进行检测的结果。每个图像都是随机采样的(这些图像不是精心挑选的)。显示了所有精度大于0.5的检测结果。每个检测器都标记有预测类别和检测器的precision-recall曲线中的检测值的精确度。建议数字化放大观看。

图9:更多随机选择的示例。详细介绍信息见图8标题。建议数字化放大观看。

图10:精心挑选的结果示例。选择每张图片是因为我们发现它令人印象深刻、令人惊讶、有趣。建议数字化放大观看。

Figure 11: 更多精心挑选的例子。详细介绍见图10标题。建议数字化放大观看。

4. The ILSVRC2013 detection dataset

In Section 2 we presented results on the ILSVRC2013 detection dataset. This dataset is less homogeneous than PASCAL VOC, requiring choices about how to use it. Since these decisions are non-trivial, we cover them in this section.

4. ILSVRC2013检测数据集

在第2节中,我们展示了ILSVRC2013检测数据集上的结果。该数据集的同质性不如PASCAL VOC数据集,需要选择使用方式。由于这些决定是重要的,因此在本节中将对它们进行介绍。

4.1. Dataset overview

The ILSVRC2013 detection dataset is split into three sets: train (395,918), val (20,121), and test (40,152), where the number of images in each set is in parentheses. The val and test splits are drawn from the same image distribution. These images are scene-like and similar in complexity (number of objects, amount of clutter, pose variability, etc.) to PASCAL VOC images. The val and test splits are exhaustively annotated, meaning that in each image all instances from all 200 classes are labeled with bounding boxes. The train set, in contrast, is drawn from the ILSVRC2013 classification image distribution. These images have more variable complexity with a skew towards images of a single centered object. Unlike val and test, the train images (due to their large number) are not exhaustively annotated. In any given train image, instances from the 200 classes may or may not be labeled. In addition to these image sets, each class has an extra set of negative images. Negative images are manually checked to validate that they do not contain any instances of their associated class. The negative image sets were not used in this work. More information on how ILSVRC was collected and annotated can be found in [11, 36].

4.1. 数据集概述

ILSVRC2013检测数据集分为三个子集:训练集(395,918)、验证集(20,121)和测试集(40,152),括号中数字表示每个子集中的图像个数。验证集和测试集源于相同的图像分布。这些图像类似于场景,并且在复杂性(检测对象的数量、混杂程度、姿势多样性等)方面与PASCAL VOC数据集中的图像相似。验证集和测试集拆分均进行了详尽注释,即在每个图像中所有200类的所有实例都用边框标出。相反,训练集是源于ILSVRC 2013分类图像分布。这些图像具有更多的可变复杂性,并且大部分检测对象位于图像中央。与验证集和测试集不同,训练集图像(由于数量众多)未得到详尽注释。任何训练集中的图像可能标记了200个类别的实例,也有可能没有被标记。除了这些图像集之外,每个类别还具有一组负样本图像。手动检查负样本图像以确认它们不包含其关联类的任何检测对象。负样本图像集未在这项工作中使用。有关如何收集和注释ILSVRC的更多信息,请参见论文[11,36]。

The nature of these splits presents a number of choices for training R-CNN. The train images cannot be used for hard negative mining, because annotations are not exhaustive. Where should negative examples come from? Also, the train images have different statistics than val and test. Should the train images be used at all, and if so, to what extent? While we have not thoroughly evaluated a large number of choices, we present what seemed like the most obvious path based on previous experience.

这些拆分的性质为训练R-CNN提供了许多选择。训练集图像不能用于硬负样本挖掘,因为注释并不详尽。负样本应该从哪里来?而且,训练集图像的统计信息不同于验证集和测试集(译者注:也就是说训练集与验证集和测试集源于不同的样本分布)。是否应该完全使用训练集图像,如果可以,使用程度如何?尽管我们尚未彻底评估大量选择,但根据以前的经验,我们提出了最合适的选择。

Our general strategy is to rely heavily on the val set and use some of the train images as an auxiliary source of positive examples. To use val for both training and validation, we split it into roughly equally sized “val1” and “val2” sets. Since some classes have very few examples in val (the

smallest has only 31 and half have fewer than 110), it is important to produce an approximately class-balanced partition. To do this, a large number of candidate splits were generated and the one with the smallest maximum relative class imbalance was selected. Each candidate split was generated by clustering val images using their class counts as features, followed by a randomized local search that may improve the split balance. The particular split used here has a maximum relative imbalance of about 11% and a median relative imbalance of 4%. The val1/val2 split and code used to produce them will be publicly available to allow other researchers to compare their methods on the val splits used in this report.

我们的总体策略是严重依赖验证集,并使用一些训练集图像作为正样本的辅助来源。为了将验证集用于训练和验证,我们将验证集粗略分为大致相等的“val1”和“val2”子集。由于某些类别在验证集中的样本很少(最小的实例只有31个,而一半的实例少于110个),因此产生一个近似类别平衡的拆分方法是很重要的。为此,生成了大量候选拆分方法,并选择了最小最大相对类别不平衡的拆分方法。每个候选拆分方法都是通过将验证集图像以其类别计数为特征进行聚类而成的,然后进行随机局部搜索以使改善拆分平衡性。此处使用的特定拆分方法的最大相对不平衡约为11%,中值相对不平衡为4%。val1/val2拆分方法和用于生成它们的代码将公开可用,以便其他研究人员将他们的方法和本报告中使用的验证集拆分方法进行比较。

4.2. Region proposals

We followed the same region proposal approach that was used for detection on PASCAL. Selective search [39] was run in “fast mode” on each image in val1, val2, and test (but not on images in train). One minor modification was required to deal with the fact that selective search is not scale invariant and so the number of regions produced depends on the image resolution. ILSVRC image sizes range from very small to a few that are several mega-pixels, and so we resized each image to a fixed width (500 pixels) before running selective search. On val, selective search resulted in an average of 2403 region proposals per image with a 91.6% recall of all ground-truth bounding boxes (at 0.5 IoU threshold). This recall is notably lower than in PASCAL, where it is approximately 98%, indicating significant room for improvement in the region proposal stage.

4.2. Region proposals

我们关注了用于PASCAL目标检测的类似region proposal的方法。论文[39]中对val1、val2和test中的每个图像上以“快速模式”运行了Selective search的方法(但在训练集图像上没有)。需要进行一次较小的修改来处理selective search不是尺度不变的事实,因此产生的区域数量取决于图像分辨率。ILSVRC图像的大小从很小到几百万像素不等,因此我们在运行selective search之前将每个图像的大小调整为固定宽度(500像素)。在训练集上,selective search平均每幅图像产生2403个region proposals,所有真值bounding boxes的召回率达到91.6%(阈值为0.5 IoU)。这个召回率明显低于在PASCAL数据集,后者约为98%,表明在region proposal阶段仍有很大的改进空间。

4.3. Training data

For training data, we formed a set of images and boxes that includes all selective search and ground-truth boxes from val1 together with up to N ground-truth boxes per class from train (if a class has fewer than N ground-truth boxes in train, then we take all of them). We’ll call this dataset of images and boxes val1+trainN. In an ablation study, we show mAP on val2 for N ∈{0, 500, 1000} (Section 4.5).

4.3. 训练数据

对于训练数据,我们形成了图像和框的集合,其中包括val1中的所有selective search和真值bounding boxes,以及训练集上每个类别最多N个真值bounding boxes(如果训练集类别中少于N个真值bounding boxes,我们全部放到上面的集合中)。我们将此图像和框的数据集称为val1 + trainN。在消融研究中,我们展示了当N属于{0,500,1000}时val2上的mAP值(第4.5节)。

Training data is required for three procedures in R-CNN: (1) CNN fine-tuning, (2) detector SVM training, and (3) bounding-box regressor training. CNN fine-tuning was run for 50k SGD iteration on val1+trainN using the exact same settings as were used for PASCAL. Fine-tuning on a single NVIDIA Tesla K20 took 13 hours using Caffe. For SVM training, all ground-truth boxes from val1+trainN were used as positive examples for their respective classes. Hard negative mining was performed on a randomly selected subset of 5000 images from val1. An initial experiment indicated that mining negatives from all of val1, versus a 5000 image subset (roughly half of it), resulted in only a 0.5 percentage point drop in mAP, while cutting SVM training time in half. No negative examples were taken from train because the annotations are not exhaustive. The extra sets of verified negative images were not used. The bounding-box regressors were trained on val1.

R-CNN中的三个过程需要训练数据:(1)CNN fine-tuning,(2)检测器SVM训练,(3)bounding-box回归器训练。CNN fine-tuning使用与PASCAL完全相同的设置,对val1+trainN进行5万轮SGD迭代。使用Caffe在单个NVIDIA Tesla K20上进行fine-tuning需要13个小时。对于SVM训练,来自val1+trainN的所有真值bounding-box均用作各自类别的正样本。对val1中5000个图像随机选择的子集进行了负样本挖掘。最初的实验表明,从val1的所有样本中提取负样本,而不是5000个图像子集(大约占一半),最终结果mAP仅下降0.5个百分点,然而将SVM训练时间缩短了一半。由于注释不够详尽,因此没有从训练集中产生任何负样本。未使用额外的验证负样本图像。bounding-box回归在val1上进行训练。

4.4. Validation and evaluation

Before submitting results to the evaluation server, we validated data usage choices and the effect of fine-tuning and bounding-box regression on the val2 set using the training data described above. All system hyperparameters (e.g., SVM C hyperparameters, padding used in region warping, NMS thresholds, bounding-box regression hyperparameters) were fixed at the same values used for PASCAL. Undoubtedly some of these hyperparameter choices are slightly suboptimal for ILSVRC, however the goal of this work was to produce a preliminary R-CNN result on ILSVRC without extensive dataset tuning. After selecting the best choices on val2, we submitted exactly two result files to the ILSVRC2013 evaluation server. The first submission was without bounding-box regression and the second submission was with bounding-box regression. For these submissions, we expanded the SVM and bounding-box regressor training sets to use val+train1k and val, respectively. We used the CNN that was fine-tuned on val1+train1k to avoid re-running fine-tuning and feature computation.

4.4. 验证与评估

在将结果提交给评估服务器之前,我们根据上述训练数据的介绍评估了数据使用选择以及在val2子集上的fine-tune和bounding-box回归的效果。所有系统超参数(例如SVM C超参数,区域变形中使用的填充,NMS阈值,bounding-box回归超参数)都固定为与PASCAL相同的值。毫无疑问,对于ILSVRC这些超参数选择中有些是次优化的,但是这项工作的目标是在ILSVRC上产生初步的R-CNN结果,而无需进行大量的数据集调整。在val2上选择最佳选择之后,我们将两个结果文件提交给ILSVRC2013评估服务器。第一个提交没有bounding-box回归,第二个提交有bounding-box回归。对于这些提交,我们将SVM和bounding-box回归训练集扩展为分别使用val + train1k和val。我们使用已经在val1 + train1k上fine-tuning的CNN来避免重新运行fine-tuning和特征计算。

4.5. Ablation study

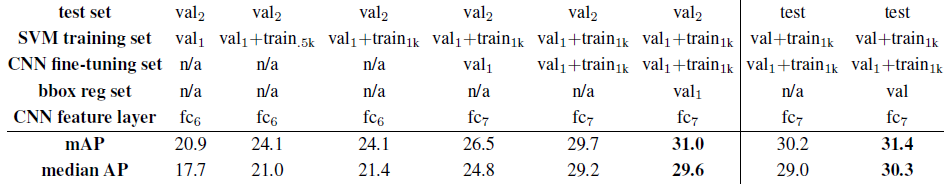

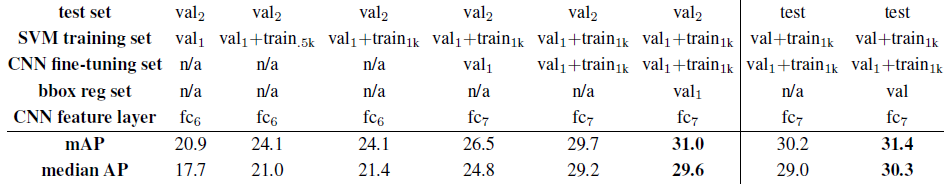

Table 4 shows an ablation study of the effects of different amounts of training data, fine-tuning, and boundingbox regression. A first observation is that mAP on val2 matches mAP on test very closely. This gives us confidence that mAP on val2 is a good indicator of test set performance. The first result, 20.9%, is what R-CNN achieves using a CNN pre-trained on the ILSVRC2012 classification dataset (no fine-tuning) and given access to the small amount of training data in val1 (recall that half of the classes in val1 have between 15 and 55 examples). Expanding the training set to val1+trainN improves performance to 24.1%, with essentially no difference between N = 500 and N = 1000. Fine-tuning the CNN using examples from just val1 gives a modest improvement to 26.5%, however there is likely significant overfitting due to the small number of positive training examples. Expanding the fine-tuning set to val1+train1k, which adds up to 1000 positive examples per class from the train set, helps significantly, boosting mAP to 29.7%. Bounding-box regression improves results to 31.0%, which is a smaller relative gain that what was observed in PASCAL.

Table 4: ILSVRC2013 ablation study of data usage choices, fine-tuning, and bounding-box regression.

4.5. 消融研究

表4显示了对不同数量的训练数据、fine-tune和边界框回归的影响的消融研究。第一个观察结果是val2上的mAP与测试中的mAP非常接近。这使我们相信val2上的mAP是测试集性能的良好指标。第一个结果为20.9%,是R-CNN使用在ILSVRC2012分类数据集上进行预训练的CNN所实现的结果(无微调),并且可以访问val1中的少量训练数据(回想一下,val1中一半类别只有15到55个样本)。将训练集扩展为val1 + trainN可以将性能提高到24.1%,而N = 500和N = 1000之间基本上没有差异。仅使用val1的样本对CNN进行微调就可以将其略微提高到26.5%,但是可能会存在严重的过拟合,因为训练数据正样本太少。将fine-tuning数据集扩展为val1 + train1k,即从训练集中获取样本将每个类别增加至1000个正样本,这将有明显的帮助作用,可以将mAP提升至29.7%。Bounding-box回归将结果提高到31.0%,这是一个较小的相对提升,比在PASCAL中观察到的要小。

表4:ILSVRC2013消融研究:数据使用选择,fine-tuning和bounding-box回归的。

4.6. Relationship to OverFeat

There is an interesting relationship between R-CNN and OverFeat: OverFeat can be seen (roughly) as a special case of R-CNN. If one were to replace selective search region proposals with a multi-scale pyramid of regular square regions and change the per-class bounding-box regressors to a single bounding-box regressor, then the systems would be very similar (modulo some potentially significant differences in how they are trained: CNN detection fine-tuning, using SVMs, etc.). It is worth noting that OverFeat has a significant speed advantage over R-CNN: it is about 9x faster, based on a figure of 2 seconds per image quoted from [34]. This speed comes from the fact that OverFeat’s sliding windows (i.e., region proposals) are not warped at the image level and therefore computation can be easily shared between overlapping windows. Sharing is implemented by running the entire network in a convolutional fashion over arbitrary-sized inputs. Speeding up R-CNN should be possible in a variety of ways and remains as future work.

4.6. 与OverFeat的关系

R-CNN与OverFeat之间存在有趣的关系:OverFeat可以(大致)视为R-CNN的特例。如果要用规则正方形区域的多尺度金字塔替换selective search region proposals,并将每类bounding-box回归更改为单个bounding-box回归,则这两个系统将非常相似(在训练方式上取一些潜在的显着差异取模:CNN检测fine-tuning,使用SVM等)。值得注意的是,OverFeat相对于R-CNN具有显着的速度优势:基于[34]引用的每幅图像2秒的数据,它的速度快约9倍。这种速度的提高是因为OverFeat的滑动窗口(例如region proposals)不会在图片级别发生扭曲,因此可以轻松地在重叠的窗口之间共享计算。通过在任意大小的输入上以卷积方式运行整个网络来实现共享。可以通过多种方式加快R-CNN,这将是未来研究的内容。

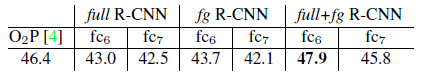

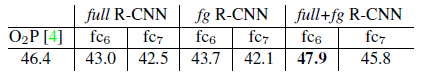

5. Semantic segmentation

Region classification is a standard technique for semantic segmentation, allowing us to easily apply R-CNN to the PASCAL VOC segmentation challenge. To facilitate a direct comparison with the current leading semantic segmentation system (called O2P for “second-order pooling”) [4], we work within their open source framework. O2P uses CPMC to generate 150 region proposals per image and then predicts the quality of each region, for each class, using support vector regression (SVR). The high performance of their approach is due to the quality of the CPMC regions and the powerful second-order pooling of multiple feature types (enriched variants of SIFT and LBP). We also note that Farabet et al. [16] recently demonstrated good results on several dense scene labeling datasets (not including PASCAL) using a CNN as a multi-scale per-pixel classifier.

5.语义分割

区域分类是语义分割的一种标准技术,使我们能够轻松地将R-CNN应用于PASCAL VOC分割挑战赛。为了便于与当前领先的语义分割系统(称为“二阶池化”的O2P)[4]进行直接的比较,我们在其开源框架开展研究工作。O2P使用CPMC为每个图像生成150个region proposals,然后使用支持向量机回归(SVR)预测每个类别的每个区域的性质。其方法的高性能归因于CPMC区域的质量以及强大的多种特征类型(SIFT和LBP的多种变体)的二阶池化。我们还注意到,Farabet等[16]最近在使用CNN作为多尺度逐像素分类器的几个密集场景标记数据集(不包括PASCAL)上得到了良好的结果。

We follow [2, 4] and extend the PASCAL segmentation training set to include the extra annotations made available by Hariharan et al. [22]. Design decisions and hyperparameters were cross-validated on the VOC 2011 validation set. Final test results were evaluated only once.

我们根据[2,4]并扩展了PASCAL分隔训练集,以包括Hariharan等人[22]提供的额外注释。设计决策和超参数在VOC 2011验证集中进行了交叉验证。最终测试结果仅评估一次。

CNN features for segmentation. We evaluate three strategies for computing features on CPMC regions, all of which begin by warping the rectangular window around the region to 227*227. The first strategy (full) ignores the region’s shape and computes CNN features directly on the warped window, exactly as we did for detection. However, these features ignore the non-rectangular shape of the region. Two regions might have very similar bounding boxes while having very little overlap. Therefore, the second strategy (fg) computes CNN features only on a region’s foreground mask. We replace the background with the mean input so that background regions are zero after mean subtraction. The third strategy (full+fg) simply concatenates the full and fg features; our experiments validate their complementarity.

使用CNN进行语义分隔特征提取。我们评估了三种用于在CPMC区域上计算特征的策略,所有策略均从将区域周围的矩形窗口变形为227*227开始。第一个策略(full)忽略区域的形状并直接在变形窗口上计算CNN特征,这与我们做检测时完全一样。但是,这些特征忽略了该区域的非矩形形状。两个区域可能具有非常相似的边界框,而几乎没有重叠。因此,第二种策略(fg)仅在区域的前景蒙版上计算CNN特征。我们用均值输入替换背景,以便减去均值后背景区域为零。第三种策略(full + fg)简单地将full和fg特征串联起来;我们的实验证明了它们的互补性。

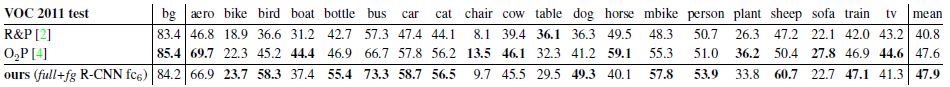

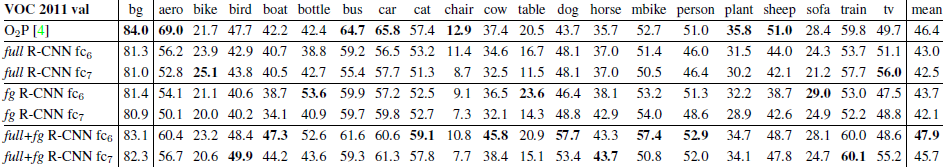

Results on VOC 2011. Table 5 shows a summary of our results on the VOC 2011 validation set compared with O2P. (See Appendix E for complete per-category results.) Within each feature computation strategy, layer fc6 always outperforms fc7 and the following discussion refers to the fc6 features. The fg strategy slightly outperforms full, indicating that the masked region shape provides a stronger signal, matching our intuition. However, full+fg achieves an average accuracy of 47.9%, our best result by a margin of 4.2% (also modestly outperforming O2P), indicating that the context provided by the full features is highly informative even given the fg features. Notably, training the 20 SVRs on our full+fg features takes an hour on a single core, compared to 10+ hours for training on O2P features.

Table 5: Segmentation mean accuracy (%) on VOC 2011 validation. Column 1 presents O2P; 2-7 use our CNN pre-trained on ILSVRC 2012.

VOC 2011上的结果。表5总结了我们与O2P相比在VOC 2011验证集中的结果(有关完整的逐类别的结果请参阅附录E)。在每种特征计算策略中,fc6层始终胜过fc7,下面的讨论涉及fc6特征。fg策略略胜于full策略,表明被遮罩的区域形状提供了更强的信号,与我们的直觉相符。但是,full + fg策略的平均准确度达到47.9%,我们的最佳结果与其相差4.2%(也略微胜过O2P),这表明即使使用fg特征,full特征所提供的上下文信息也非常有用。值得注意的是,在我们的full + fg特征上训练20个SVR在单核CPU上花费一个小时,而在O2P特征上训练则需要10多个小时。

表5:VOC 2011验证集上的(语义)分隔平均准确度(%)。 第1列表示O2P(模型);2-7列(模型)使用我们在ILSVRC 2012上进行预训练的CNN。

In Table 6 we present results on the VOC 2011 test set, comparing our best-performing method, fc6 (full+fg), against two strong baselines. Our method achieves the highest segmentation accuracy for 11 out of 21 categories, and the highest overall segmentation accuracy of 47.9%, averaged across categories (but likely ties with the O2P result under any reasonable margin of error). Still better performance could likely be achieved by fine-tuning.

Table 6: Segmentation accuracy (%) on VOC 2011 test. We compare against two strong baselines: the “Regions and Parts” (R&P) method of [2] and the second-order pooling (O2P) method of [4]. Without any fine-tuning, our CNN achieves top segmentation performance, outperforming R&P and roughly matching O2P.

在表6中,我们给出了VOC 2011测试集的结果,并将我们表现最佳的方法fc6(full + fg)与两个性能较强baseline进行了比较。我们的方法在21个类别中的11个类别中实现了最高的分隔准确度,在各个类别之间平均获得了最高的整体分隔准确度47.9%(但在任何合理的误差范围内,可能与O2P结果相关)。通过fine-tuning仍可能获得更好的性能。

表6:VOC 2011测试集上的(语义)分隔准确度(%)。我们对比了两个强大的baseline模型:论文[2]中提到的“Regions and Parts”(R&P)方法和论文[4]中提到的second-order pooling(O2P)方法。无需进行任何fine-tuning,我们的CNN就能达到最佳的语义分隔性能,胜过R&P,与O2P基本一致。

6. Conclusion

In recent years, object detection performance had stagnated. The best performing systems were complex ensembles combining multiple low-level image features with high-level context from object detectors and scene classifiers. This paper presents a simple and scalable object detection algorithm that gives a 30% relative improvement over the best previous results on PASCAL VOC 2012.

6. 结论

近年来,目标检测性能停滞不前。表现最佳的系统是复杂的集成系统,将来自目标检测器和场景分类器的多个低级图像特征与高级上下文结合在一起。本文提出了一种简单且可扩展的目标检测算法,与PASCAL VOC 2012上的最佳往期结果相比,性能相对提升了30%。

We achieved this performance through two insights. The first is to apply high-capacity convolutional neural networks to bottom-up region proposals in order to localize and segment objects. The second is a paradigm for training large CNNs when labeled training data is scarce. We show that it is highly effective to pre-train the network—with supervision—for a auxiliary task with abundant data (image classification) and then to fine-tune the network for the target task where data is scarce (detection). We conjecture that the “supervised pre-training/domain-specific fine-tuning” paradigm will be highly effective for a variety of data-scarce vision problems.

我们通过两个思路达到了这一性能。首先是将大容量卷积神经网络应用于自下而上的region proposals,主要为了定位和分割目标。其次是在缺少标签的训练数据上训练大型CNN的方法。我们的研究表明,通过有监督的方式使用具有大量数据的辅助任务(图像分类)进行网络预训练,然后针对数据稀缺的目标任务(目标检测)fine-tune网络是非常有效的。我们猜想“有监督的预训练/特定领域的fine-tune”方法将对多种数据稀缺的视觉问题都将非常有效。

We conclude by noting that it is significant that we achieved these results by using a combination of classical tools from computer vision and deep learning (bottomup region proposals and convolutional neural networks). Rather than opposing lines of scientific inquiry, the two are natural and inevitable partners.

最后,我们通过将计算机视觉和深度学习(自底向上的region proposals和卷积神经网络)中经典工具结合而获得这些结果具有非常重要的意义。这两个部分是自然而且必然的结合,并没有违背科学探索的主线。

Acknowledgments. This research was supported in part by DARPA Mind’s Eye and MSEE programs, by NSF awards IIS-0905647, IIS-1134072, and IIS-1212798, MURI N000014-10-1-0933, and by support from Toyota. The GPUs used in this research were generously donated by the NVIDIA Corporation.

附录

Appendix

A. Object proposal transformations

The convolutional neural network used in this work requires a fixed-size input of 227 227 pixels. For detection, we consider object proposals that are arbitrary image rectangles. We evaluated two approaches for transforming object proposals into valid CNN inputs.

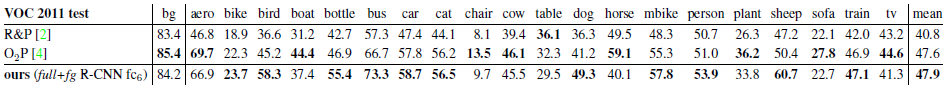

The first method (“tightest square with context”) encloses each object proposal inside the tightest square and then scales (isotropically) the image contained in that square to the CNN input size. Figure 7 column (B) shows this transformation. A variant on this method (“tightest square without context”) excludes the image content that surrounds the original object proposal. Figure 7 column (C) shows this transformation. The second method (“warp”) anisotropically scales each object proposal to the CNN input size. Figure 7 column (D) shows the warp transformation.

Figure 7: Different object proposal transformations. (A) the original object proposal at its actual scale relative to the transformed CNN inputs; (B) tightest square with context; (C) tightest square without context; (D) warp. Within each column and example proposal, the top row corresponds to p = 0 pixels of context padding while the bottom row has p = 16 pixels of context padding.

For each of these transformations, we also consider including additional image context around the original object proposal. The amount of context padding (p) is defined as a border size around the original object proposal in the transformed input coordinate frame. Figure 7 shows p = 0 pixels in the top row of each example and p = 16 pixels in the bottom row. In all methods, if the source rectangle extends beyond the image, the missing data is replaced with the image mean (which is then subtracted before inputing the image into the CNN). A pilot set of experiments showed that warping with context padding (p = 16 pixels) outperformed the alternatives by a large margin (3-5 mAP points). Obviously more alternatives are possible, including using replication instead of mean padding. Exhaustive evaluation of these alternatives is left as future work.

B. Positive vs. negative examples and softmax

Two design choices warrant further discussion. The first is: Why are positive and negative examples defined differently for fine-tuning the CNN versus training the object detection SVMs? To review the definitions briefly, for finetuning we map each object proposal to the ground-truth instance with which it has maximum IoU overlap (if any) and label it as a positive for the matched ground-truth class if the IoU is at least 0.5. All other proposals are labeled “background” (i.e., negative examples for all classes). For training SVMs, in contrast, we take only the ground-truth boxes as positive examples for their respective classes and label proposals with less than 0.3 IoU overlap with all instances of a class as a negative for that class. Proposals that fall into the grey zone (more than 0.3 IoU overlap, but are not ground truth) are ignored.

Historically speaking, we arrived at these definitions because we started by training SVMs on features computed by the ImageNet pre-trained CNN, and so fine-tuning was not a consideration at that point in time. In that setup, we found that our particular label definition for training SVMs was optimal within the set of options we evaluated (which included the setting we now use for fine-tuning). When we started using fine-tuning, we initially used the same positive and negative example definition as we were using for SVM training. However, we found that results were much worse than those obtained using our current definition of positives and negatives.

Our hypothesis is that this difference in how positives and negatives are defined is not fundamentally important and arises from the fact that fine-tuning data is limited. Our current scheme introduces many “jittered” examples (those proposals with overlap between 0.5 and 1, but not ground truth), which expands the number of positive examples by approximately 30x. We conjecture that this large set is needed when fine-tuning the entire network to avoid overfitting. However, we also note that using these jittered examples is likely suboptimal because the network is not being fine-tuned for precise localization.

This leads to the second issue: Why, after fine-tuning, train SVMs at all? It would be cleaner to simply apply the last layer of the fine-tuned network, which is a 21-way softmax regression classifier, as the object detector. We tried this and found that performance on VOC 2007 dropped from 54.2% to 50.9% mAP. This performance drop likely arises from a combination of several factors including that the definition of positive examples used in fine-tuning does not emphasize precise localization and the softmax classifier was trained on randomly sampled negative examples rather than on the subset of “hard negatives” used for SVM training.

This result shows that it’s possible to obtain close to the same level of performance without training SVMs after fine-tuning. We conjecture that with some additional tweaks to fine-tuning the remaining performance gap may be closed. If true, this would simplify and speed up R-CNN training with no loss in detection performance.

C. Bounding-box regression

We use a simple bounding-box regression stage to improve localization performance. After scoring each selective search proposal with a class-specific detection SVM, we predict a new bounding box for the detection using a class-specific bounding-box regressor. This is similar in spirit to the bounding-box regression used in deformable part models [17]. The primary difference between the two approaches is that here we regress from features computed by the CNN, rather than from geometric features computed on the inferred DPM part locations.

The input to our training algorithm is a set of N training pairs f(Pi;Gi)gi=1;:::;N , where Pi = (Pix ; Pi y; Piw ; Pih ) specifies the pixel coordinates of the center of proposal Pi’s bounding box together with Pi’s width and height in pixels. Hence forth, we drop the superscript i unless it is needed. Each ground-truth bounding box G is specified in the same way: G = (Gx;Gy;Gw;Gh). Our goal is to learn a transformation that maps a proposed box P to a ground-truth box G.

We parameterize the transformation in terms of four functions dx(P), dy(P), dw(P), and dh(P). The first two specify a scale-invariant translation of the center of P’s bounding box, while the second two specify log-space translations of the width and height of P’s bounding box. After learning these functions, we can transform an input proposal P into a predicted ground-truth box ^G by applying the transformation

^G x = Pwdx(P) + Px (1)

^G y = Phdy(P) + Py (2)

^G w = Pw exp(dw(P)) (3)

^G h = Ph exp(dh(P)): (4)

Each function d?(P) (where ? is one of x; y; h;w) is modeled as a linear function of the pool5 features of proposal P, denoted by ‑5(P). (The dependence of ‑5(P) on the image data is implicitly assumed.) Thus we have d?(P) = wT ?‑5(P), where w? is a vector of learnable model parameters. We learn w? by optimizing the regularized least squares objective (ridge regression):

w? = argmin ^w? XN I (ti ? ???? ^wT?‑5(Pi))2 + k ^w?k2 : (5)12

The regression targets t? for the training pair (P;G) are defined as

tx = (Gx ???? Px)=Pw (6)

ty = (Gy ???? Py)=Ph (7)

tw = log(Gw=Pw) (8)

th = log(Gh=Ph): (9)

As a standard regularized least squares problem, this can be solved efficiently in closed form.

We found two subtle issues while implementing bounding-box regression. The first is that regularization is important: we set = 1000 based on a validation set. The second issue is that care must be taken when selecting which training pairs (P;G) to use. Intuitively, if P is far from all ground-truth boxes, then the task of transforming P to a ground-truth box G does not make sense. Using examples like P would lead to a hopeless learning problem. Therefore, we only learn from a proposal P if it is nearby at least one ground-truth box. We implement “nearness” by assigning P to the ground-truth box G with which it has maximum IoU overlap (in case it overlaps more than one) if and only if the overlap is greater than a threshold (which we set to 0.6 using a validation set). All unassigned proposals are discarded. We do this once for each object class in order to learn a set of class-specific bounding-box regressors.

At test time, we score each proposal and predict its new detection window only once. In principle, we could iterate this procedure (i.e., re-score the newly predicted bounding box, and then predict a new bounding box from it, and so on). However, we found that iterating does not improve results.

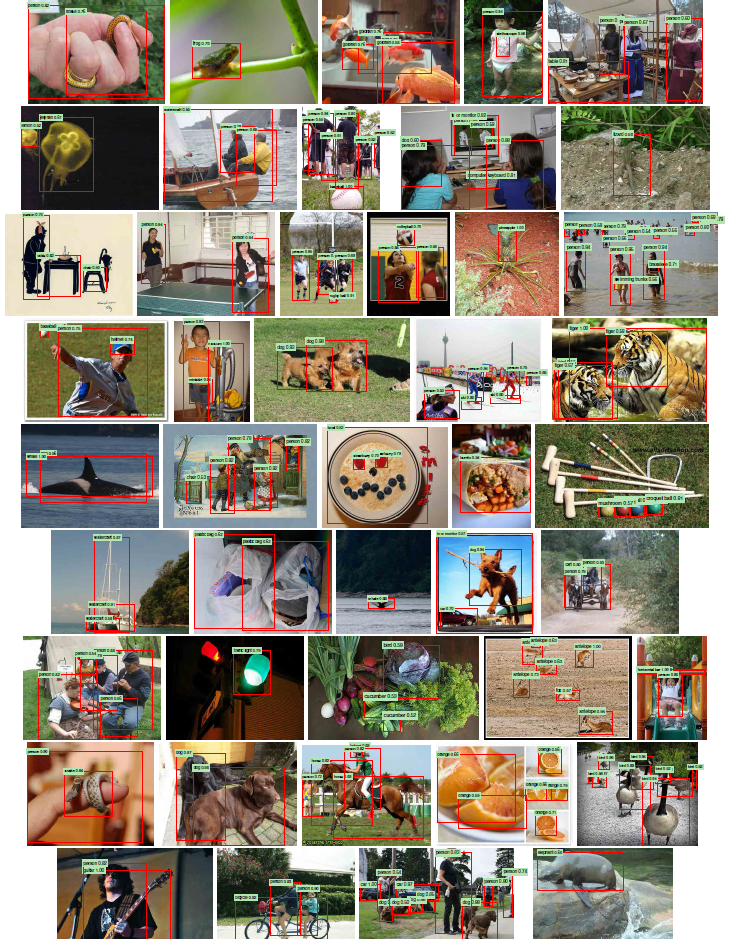

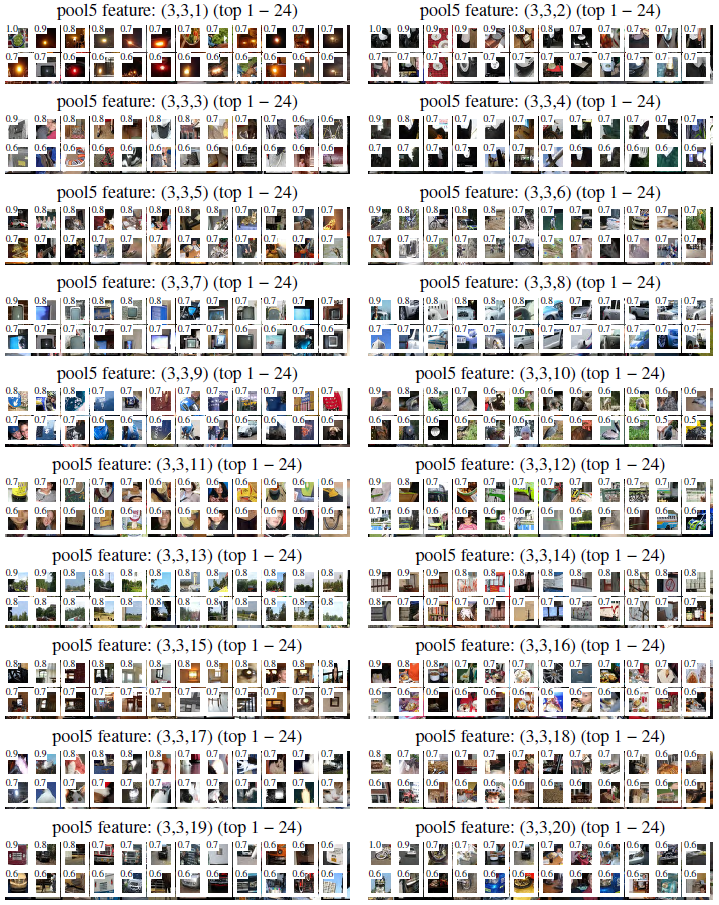

D. Additional feature visualizations

Figure 12 shows additional visualizations for 20 pool5 units. For each unit, we show the 24 region proposals that maximally activate that unit out of the full set of approximately 10 million regions in all of VOC 2007 test.

Figure 12: We show the 24 region proposals, out of the approximately 10 million regions in VOC 2007 test, that most strongly activate each of 20 units. Each montage is labeled by the unit’s (y, x, channel) position in the 6[1]6[1]256 dimensional pool5 feature map. Each image region is drawn with an overlay of the unit’s receptive field in white. The activation value (which we normalize by dividing by the max activation value over all units in a channel) is shown in the receptive field’s upper-left corner. Best viewed digitally with zoom.

We label each unit by its (y, x, channel) position in the 6 6 256 dimensional pool5 feature map. Within each channel, the CNN computes exactly the same function of the input region, with the (y, x) position changing only the receptive field.

E. Per-category segmentation results

In Table 7 we show the per-category segmentation accuracy on VOC 2011 val for each of our six segmentation methods in addition to the O2P method [4]. These results show which methods are strongest across each of the 20 PASCAL classes, plus the background class.

Table 7: Per-category segmentation accuracy (%) on the VOC 2011 validation set.

F. Analysis of cross-dataset redundancy

One concern when training on an auxiliary dataset is that there might be redundancy between it and the test set. Even though the tasks of object detection and whole-image classification are substantially different, making such cross-set redundancy much less worrisome, we still conducted a thorough investigation that quantifies the extent to which PASCAL test images are contained within the ILSVRC 2012 training and validation sets. Our findings may be useful to researchers who are interested in using ILSVRC 2012 as training data for the PASCAL image classification task.

We performed two checks for duplicate (and nearduplicate) images. The first test is based on exact matches of flickr image IDs, which are included in the VOC 2007 test annotations (these IDs are intentionally kept secret for subsequent PASCAL test sets). All PASCAL images, and about half of ILSVRC, were collected from flickr.com. This check turned up 31 matches out of 4952 (0.63%).

The second check uses GIST [30] descriptor matching, which was shown in [13] to have excellent performance at near-duplicate image detection in large (> 1 million) image collections. Following [13], we computed GIST descriptors on warped 32 32 pixel versions of all ILSVRC 2012 trainval and PASCAL 2007 test images.

Euclidean distance nearest-neighbor matching of GIST descriptors revealed 38 near-duplicate images (including all 31 found by flickr ID matching). The matches tend to vary slightly in JPEG compression level and resolution, and to a lesser extent cropping. These findings show that the overlap is small, less than 1%. For VOC 2012, because flickr IDs are not available, we used the GIST matching method only. Based on GIST matches, 1.5% of VOC 2012 test images are in ILSVRC 2012 trainval. The slightly higher rate for VOC 2012 is likely due to the fact that the two datasets were collected closer together in time than VOC 2007 and ILSVRC 2012 were.

G. Document changelog

This document tracks the progress of R-CNN. To help readers understand how it has changed over time, here’s a brief changelog describing the revisions.

v1 Initial version.

v2 CVPR 2014 camera-ready revision. Includes substantial improvements in detection performance brought about by (1) starting fine-tuning from a higher learning rate (0.001 instead of 0.0001), (2) using context padding when preparing CNN inputs, and (3) bounding-box regression to fix localization errors.

v3 Results on the ILSVRC2013 detection dataset and comparison with OverFeat were integrated into several sections (primarily Section 2 and Section 4).

v4 The softmax vs. SVM results in Appendix B contained an error, which has been fixed. We thank Sergio Guadarrama for helping to identify this issue.