I. System Environment

1. Servers

| Server | Services | Server IP |
|---|---|---|
| master | apiserver, controller-manager, scheduler, etcd | 10.39.7.69 |
| node | flannel, docker, kubelet, kube-proxy | 10.39.7.31 |

Add the following name resolution entry to /etc/hosts:

10.39.7.69 ETCDServer K8smaster
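The hosts entry can also be added from a script so that repeated runs do not duplicate it. This is a minimal sketch, not part of the original procedure; the helper name `add_host_entry` is hypothetical, and the demo writes to a scratch file instead of /etc/hosts.

```shell
# add_host_entry FILE IP NAME...: append "IP NAME..." unless FILE already has a line for IP
add_host_entry() {
  hosts_file=$1; ip=$2; shift 2
  # awk compares the first field literally, so the dots in the IP are not treated as wildcards
  if awk -v ip="$ip" '$1 == ip { found = 1 } END { exit !found }' "$hosts_file" 2>/dev/null; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$*" >> "$hosts_file"
}

# Demonstrated on a scratch file; pass /etc/hosts (as root) on a real machine.
demo_hosts=$(mktemp)
add_host_entry "$demo_hosts" 10.39.7.69 ETCDServer K8smaster
add_host_entry "$demo_hosts" 10.39.7.69 ETCDServer K8smaster   # second call is a no-op
cat "$demo_hosts"
```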

Download the installation files

wget https://github.com/kubernetes/kubernetes/releases/download/v1.13.1/kubernetes.tar.gz

Extract the archive:

tar -xvf kubernetes.tar.gz

[root@i-8vcbbz8d k8sinst]# cd kubernetes

[root@i-8vcbbz8d kubernetes]# ls -l

total 6156

drwxr-xr-x 2 root root 4096 Dec 13 19:18 client

drwxr-xr-x 13 root root 4096 Dec 13 19:18 cluster

drwxr-xr-x 7 root root 4096 Dec 13 19:18 docs

drwxr-xr-x 3 root root 4096 Dec 13 19:18 hack

-rw-r--r-- 1 root root 6273808 Dec 13 19:18 LICENSES

-rw-r--r-- 1 root root 3179 Dec 13 19:18 README.md

drwxr-xr-x 2 root root 4096 Dec 13 19:18 server

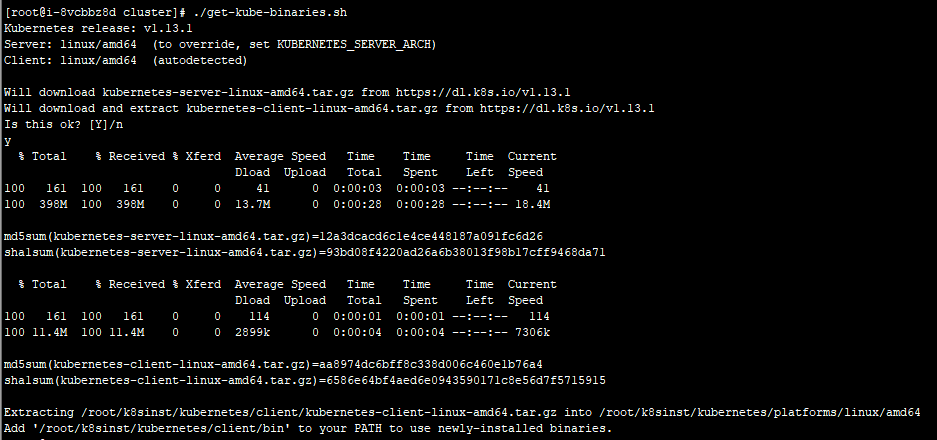

-rw-r--r-- 1 root root 8 Dec 13 19:18 version执行脚本kubernetes/cluster/get-kube-binaries.sh,下载

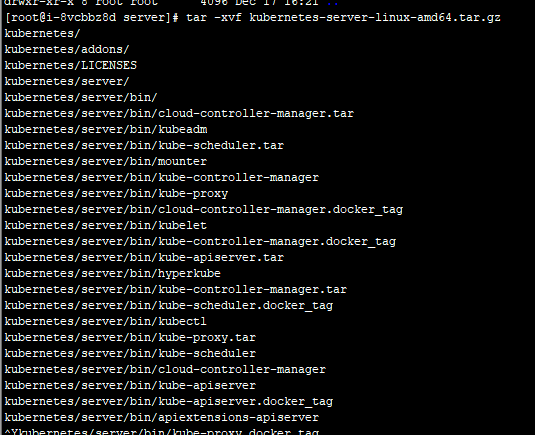

Extract kubernetes-server-linux-amd64.tar.gz.

Download etcd

https://github.com/etcd-io/etcd/releases

wget https://github.com/etcd-io/etcd/releases/download/v3.3.10/etcd-v3.3.10-linux-amd64.tar.gz

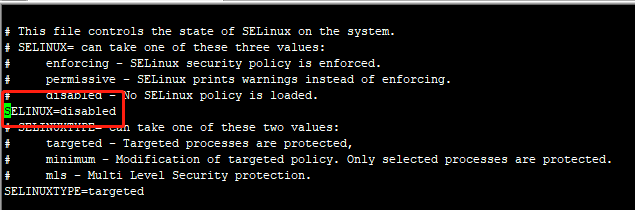

Permanently disable SELinux

Edit /etc/selinux/config and set SELINUX=disabled

Disable swap

swapoff -a && sysctl -w vm.swappiness=0
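The two persistent changes in this step (SELINUX=disabled, and commenting out the swap entry in /etc/fstab as described next) can also be scripted. This is a minimal sketch that assumes GNU sed, as shipped with CentOS; the function names are hypothetical, and the demo runs on scratch copies, not on the real system files.

```shell
# disable_selinux_config FILE: set SELINUX=disabled in an SELinux config file
disable_selinux_config() {
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$1"
}

# comment_out_swap FILE: prefix every active swap entry in an fstab file with '#'
comment_out_swap() {
  sed -i '/^[^#].*[[:space:]]swap[[:space:]]/s/^/#/' "$1"
}

# Demo on scratch copies; use /etc/selinux/config and /etc/fstab on a real host.
sys_demo=$(mktemp -d)
printf 'SELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$sys_demo/config"
printf 'UUID=7bff6243-324c-4587-b550-55dc34018ebf swap swap defaults 0 0\n/dev/vda1 / ext4 defaults 1 1\n' > "$sys_demo/fstab"
disable_selinux_config "$sys_demo/config"
comment_out_swap "$sys_demo/fstab"
cat "$sys_demo/config" "$sys_demo/fstab"
```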

vi /etc/fstab

#UUID=7bff6243-324c-4587-b550-55dc34018ebf swap swap defaults 0 0

Create the installation directories for etcd and Kubernetes

mkdir /k8s/etcd/{bin,cfg,ssl} -p

mkdir /k8s/kubernetes/{bin,cfg,ssl} -p

II. Installation

1. Create the certificates

Install and configure cfssl

[root@i-8vcbbz8d ssl]# curl -s -L -o /bin/cfssl https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@i-8vcbbz8d ssl]# curl -s -L -o /bin/cfssljson https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@i-8vcbbz8d ssl]# curl -s -L -o /bin/cfssl-certinfo https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

[root@i-8vcbbz8d ssl]# chmod +x /bin/cfssl*

Create the etcd certificates

cat << EOF | tee ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

Create the etcd CA configuration file

cat << EOF | tee ca-csr.json

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Hebei",

"ST": "Langfang"

}

]

}

EOF

Create the etcd server certificate

cat << EOF | tee server-csr.json

{

"CN": "etcd",

"hosts": [

"10.39.7.69",

"10.39.7.12"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Hebei",

"ST": "Langfang"

}

]

}

EOF

Generate the etcd CA certificate and private key

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

Create the Kubernetes CA certificate
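The Kubernetes certificates reuse the file names ca-config.json, ca.pem, and server.pem, so generating them in the same directory would overwrite the etcd files. A minimal sketch of copying the etcd certificates out first, assuming they were generated in the current directory and belong under /k8s/etcd/ssl (the path the etcd unit file references); the helper name `install_pems` is hypothetical and the demo uses placeholder files.

```shell
# install_pems SRC DEST: copy every *.pem from SRC into DEST and restrict the private keys
install_pems() {
  src=$1; dest=$2
  mkdir -p "$dest" || return 1
  cp "$src"/*.pem "$dest"/ || return 1
  chmod 600 "$dest"/*-key.pem
}

# Demo with empty placeholder files in scratch directories.
pem_src=$(mktemp -d)
pem_dest=$(mktemp -d)/ssl
touch "$pem_src/ca.pem" "$pem_src/ca-key.pem" "$pem_src/server.pem" "$pem_src/server-key.pem"
install_pems "$pem_src" "$pem_dest"
ls "$pem_dest"
```

On the master this would be called as `install_pems . /k8s/etcd/ssl` before the Kubernetes files are generated.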

cat << EOF | tee ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat << EOF | tee ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Hebei",

"ST": "Langfang",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

Generate the API server certificate

cat << EOF | tee server-csr.json

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"10.39.7.69",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Hebei",

"ST": "Langfang",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

Create the Kubernetes proxy certificate

cat << EOF | tee kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Hebei",

"ST": "Langfang",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy
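The later configuration files reference these certificates under /k8s/kubernetes/ssl, but the copy step is not shown. A minimal sketch of a check that could run before the services are started, assuming the files were copied there; the helper name `require_files` is hypothetical, and the demo uses a scratch directory in which one file is deliberately missing.

```shell
# require_files DIR NAME...: report each missing file on stderr; return non-zero if any is absent
require_files() {
  dir=$1; shift
  missing=0
  for name in "$@"; do
    if [ ! -f "$dir/$name" ]; then
      echo "missing: $dir/$name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Demo: kube-proxy-key.pem is deliberately absent from the scratch directory.
ssl_demo=$(mktemp -d)
touch "$ssl_demo/ca.pem" "$ssl_demo/ca-key.pem" "$ssl_demo/server.pem" \
      "$ssl_demo/server-key.pem" "$ssl_demo/kube-proxy.pem"
require_files "$ssl_demo" ca.pem ca-key.pem server.pem server-key.pem \
  kube-proxy.pem kube-proxy-key.pem || echo "certificate set incomplete"
```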

1.8. SSH key authentication

# ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:FQjjiRDp8IKGT+UDM+GbQLBzF3DqDJ+pKnMIcHGyO/o root@qas-k8s-master01

The key's randomart image is:

+---[RSA 2048]----+

|o.==o o. .. |

|ooB+o+ o. . |

|B++@o o . |

|=X**o . |

|o=O. . S |

|..+ |

|oo . |

|* . |

|o+E |

+----[SHA256]-----+

2. Install etcd

Extract the etcd archive

tar -xvf etcd-v3.3.10-linux-amd64.tar.gz

cd etcd-v3.3.10-linux-amd64

cp etcd etcdctl /k8s/etcd/bin/

cp etcd /usr/bin/

cp etcdctl /usr/bin/

Create the configuration file

vim /k8s/etcd/cfg/etcd

ETCD_NAME=ETCDServer

ETCD_DATA_DIR="/k8s/etcd/data/"

ETCD_LISTEN_CLIENT_URLS="https://0.0.0.0:2379"

ETCD_ADVERTISE_CLIENT_URLS="https://10.39.7.69:2379"

Create etcd.service in /usr/lib/systemd/system/

vim /usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/k8s/etcd/cfg/etcd

ExecStart=/k8s/etcd/bin/etcd \
  --cert-file=/k8s/etcd/ssl/server.pem \
  --key-file=/k8s/etcd/ssl/server-key.pem \
  --peer-cert-file=/k8s/etcd/ssl/server.pem \
  --peer-key-file=/k8s/etcd/ssl/server-key.pem \
  --trusted-ca-file=/k8s/etcd/ssl/ca.pem \
  --peer-trusted-ca-file=/k8s/etcd/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

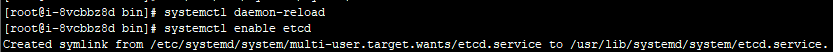

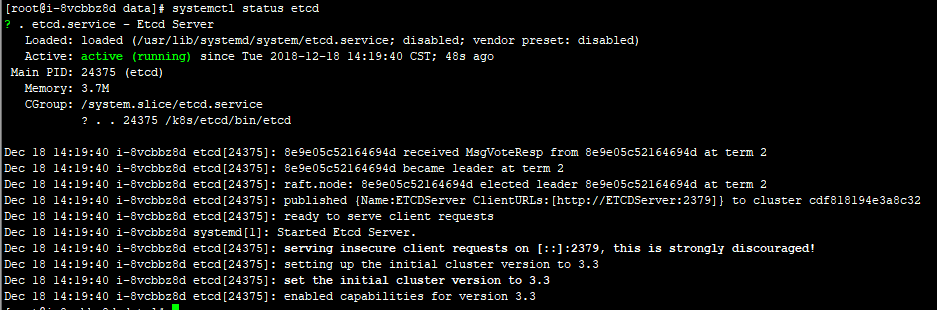

Start the etcd service

systemctl daemon-reload

systemctl enable etcd

systemctl start etcd

Check the etcd status

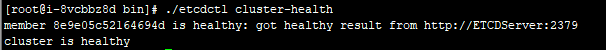
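Because etcd listens on HTTPS only, a status check has to present the certificates. The command behind the screenshot is not shown in the text; the sketch below is one way to do it, assuming the v2 etcdctl API (the default in etcd 3.3) and the certificate paths used in the unit file. The function name `etcd_health` is hypothetical.

```shell
# etcd_health: query cluster health over TLS with the v2 etcdctl API
etcd_health() {
  ETCDCTL_API=2 etcdctl \
    --ca-file=/k8s/etcd/ssl/ca.pem \
    --cert-file=/k8s/etcd/ssl/server.pem \
    --key-file=/k8s/etcd/ssl/server-key.pem \
    --endpoints=https://10.39.7.69:2379 \
    cluster-health
}

# Only run the check where etcdctl is installed.
if command -v etcdctl >/dev/null 2>&1; then
  etcd_health || echo "etcd did not report healthy"
else
  echo "etcdctl not found in PATH; skipping"
fi
```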

2. Install Docker

Install Docker

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

yum list docker-ce --showduplicates | sort -r

yum install docker-ce -y

systemctl start docker && systemctl enable docker

Set the kernel parameters Docker needs

cat << EOF | tee /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

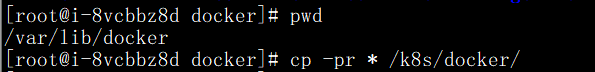

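sysctl -p /etc/sysctl.d/k8s.conf

Change Docker's default data directory

Create the target directory

mkdir /k8s/docker

Stop the docker service and copy the data from the original Docker directory to the target directory

Rename /var/lib/docker to /var/lib/docker.bak

Create the symbolic link

ln -s /k8s/docker /var/lib/docker

Restart docker and check the Docker data directory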

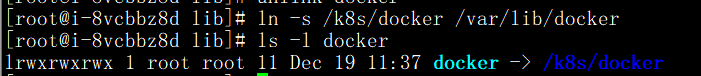
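The relocation steps above are described without their commands. They can be sketched as a single helper; the name `relocate_dir` is hypothetical, and the demo works on scratch directories that mimic the real layout.

```shell
# relocate_dir OLD NEW: copy OLD into NEW, keep OLD as OLD.bak, leave a symlink OLD -> NEW
relocate_dir() {
  old=$1; new=$2
  mkdir -p "$new" || return 1
  cp -a "$old"/. "$new"/ || return 1
  mv "$old" "$old.bak" || return 1
  ln -s "$new" "$old"
}

# Demo on scratch directories standing in for /var/lib/docker and /k8s/docker.
scratch=$(mktemp -d)
mkdir -p "$scratch/var/lib/docker/image"
echo data > "$scratch/var/lib/docker/image/layer"
relocate_dir "$scratch/var/lib/docker" "$scratch/k8s/docker"
ls -l "$scratch/var/lib"
```

On the host the call would be `relocate_dir /var/lib/docker /k8s/docker`, run between `systemctl stop docker` and `systemctl start docker`.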

3. Deploy the Flannel network

Write the cluster's Pod network range to etcd

etcdctl set /coreos.com/network/config '{ "Network": "172.18.0.0/16", "Backend": {"Type": "vxlan"}}'


The Pod network written here (${CLUSTER_CIDR}) must be a /16 network and must match the value of kube-controller-manager's --cluster-cidr parameter.
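The /16 requirement can be checked before the value is written to etcd. This is a minimal sketch; the function name `is_slash16` is hypothetical, and it checks only the textual form a.b.0.0/16, not whether each octet is at most 255.

```shell
# is_slash16 CIDR: succeed only for an IPv4 network written as a.b.0.0/16
is_slash16() {
  printf '%s\n' "$1" | grep -Eq '^[0-9]{1,3}\.[0-9]{1,3}\.0\.0/16$'
}

CLUSTER_CIDR=172.18.0.0/16
if is_slash16 "$CLUSTER_CIDR"; then
  echo "$CLUSTER_CIDR looks like a /16 network"
else
  echo "$CLUSTER_CIDR is not a /16 network" >&2
fi
```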

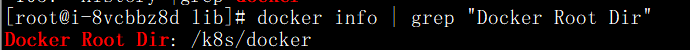

Download flannel

https://github.com/coreos/flannel/releases/download/v0.10.0/flannel-v0.10.0-linux-amd64.tar.gz

Extract the archive:

tar -xvf flannel-v0.10.0-linux-amd64.tar.gz

cp flanneld mk-docker-opts.sh /k8s/kubernetes/bin/


Configure Flannel

vim /k8s/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=https://10.39.7.69:2379 -etcd-cafile=/k8s/etcd/ssl/ca.pem -etcd-certfile=/k8s/etcd/ssl/server.pem -etcd-keyfile=/k8s/etcd/ssl/server-key.pem"

Create the systemd unit file for flanneld

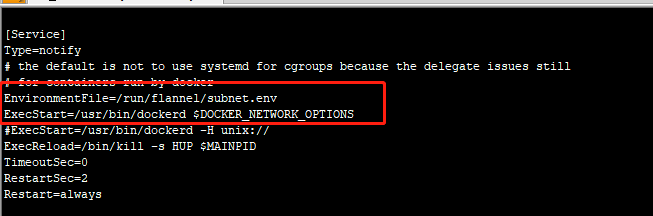
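The unit file is available only as a screenshot. The sketch below writes one commonly used layout and is an assumption, not a transcription of the screenshot: the `--ip-masq` flag, the use of mk-docker-opts.sh with `-k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env`, and the helper name `write_flanneld_unit` are all illustrative choices. The demo writes to a scratch file.

```shell
# write_flanneld_unit PATH: write an assumed flanneld systemd unit to PATH
write_flanneld_unit() {
  cat > "$1" << 'EOF'
[Unit]
Description=Flanneld overlay address etcd agent
After=network-online.target network.target
Before=docker.service

[Service]
Type=notify
EnvironmentFile=/k8s/kubernetes/cfg/flanneld
ExecStart=/k8s/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS
ExecStartPost=/k8s/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF
}

# Demo on a scratch file; the real target would be /usr/lib/systemd/system/flanneld.service.
unit_demo=$(mktemp)
write_flanneld_unit "$unit_demo"
grep '^ExecStart' "$unit_demo"
```

With this layout, docker.service would typically load /run/flannel/subnet.env through an EnvironmentFile line and pass $DOCKER_NETWORK_OPTIONS to dockerd, so that containers get addresses from the flannel subnet.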

Configure Docker to start on the specified subnet

vim /usr/lib/systemd/system/docker.service

Start the services

systemctl start flanneld

systemctl enable flanneld

systemctl start docker

Copy the flanneld files and systemd unit files to all nodes

cd /k8s/kubernetes/bin

scp flanneld mk-docker-opts.sh 10.39.7.12:/k8s/kubernetes/bin

scp /k8s/kubernetes/cfg/flanneld 10.39.7.12:/k8s/kubernetes/cfg/flanneld

scp /usr/lib/systemd/system/docker.service 10.39.7.12:/usr/lib/systemd/system/docker.service

scp /usr/lib/systemd/system/flanneld.service 10.39.7.12:/usr/lib/systemd/system/flanneld.service

cd /k8s/etcd

scp -r ssl 10.39.7.12:/k8s/etcd

Start the services

systemctl daemon-reload

systemctl start flanneld

systemctl enable flanneld

systemctl restart docker

4. Install the K8s master

The Kubernetes master node runs the following components:

- kube-apiserver

- kube-scheduler

- kube-controller-manager

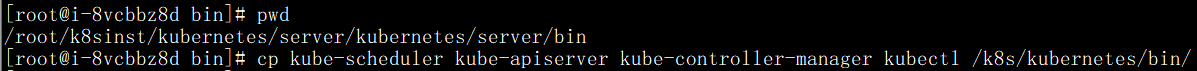

Copy these three components, together with kubectl, to /k8s/kubernetes/bin/

cp kube-scheduler kube-apiserver kube-controller-manager kubectl /k8s/kubernetes/bin/

Deploy the kube-apiserver component

Create the TLS bootstrapping token

# head -c 16 /dev/urandom | od -An -t x | tr -d ' '

13589f773408da6c4d883a00b03e9f8a
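The token and the token.csv line described next can also be produced in one step, which avoids copying the value by hand. A minimal sketch; the helper name `make_token_csv` is hypothetical, and the demo writes to a scratch file instead of /k8s/kubernetes/cfg/token.csv.

```shell
# make_token_csv FILE: generate a 32-hex-character token, write the token.csv line to FILE,
# and print the token so the caller can reuse it
make_token_csv() {
  token=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' \n')
  printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$token" > "$1"
  echo "$token"
}

# Demo on a scratch file.
token_demo=$(mktemp)
BOOTSTRAP_TOKEN=$(make_token_csv "$token_demo")
cat "$token_demo"
```

The same BOOTSTRAP_TOKEN value has to be used later when the kubelet bootstrap kubeconfig is created.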

vim /k8s/kubernetes/cfg/token.csv

13589f773408da6c4d883a00b03e9f8a,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

Create the apiserver configuration file

vim /k8s/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \
--v=4 \
--etcd-servers=https://10.39.7.69:2379 \
--bind-address=10.39.7.69 \
--secure-port=6443 \
--advertise-address=10.39.7.69 \
--allow-privileged=true \
--service-cluster-ip-range=10.0.0.0/24 \
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction \
--authorization-mode=RBAC,Node \
--enable-bootstrap-token-auth \
--token-auth-file=/k8s/kubernetes/cfg/token.csv \
--service-node-port-range=30000-50000 \
--tls-cert-file=/k8s/kubernetes/ssl/server.pem \
--tls-private-key-file=/k8s/kubernetes/ssl/server-key.pem \
--client-ca-file=/k8s/kubernetes/ssl/ca.pem \
--service-account-key-file=/k8s/kubernetes/ssl/ca-key.pem \
--etcd-cafile=/k8s/etcd/ssl/ca.pem \
--etcd-certfile=/k8s/etcd/ssl/server.pem \
--etcd-keyfile=/k8s/etcd/ssl/server-key.pem"

Create the kube-apiserver systemd unit file

vim /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/k8s/kubernetes/cfg/kube-apiserver

ExecStart=/k8s/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start the service

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl start kube-apiserver

Check that the apiserver is running

ps -ef |grep kube-apiserver

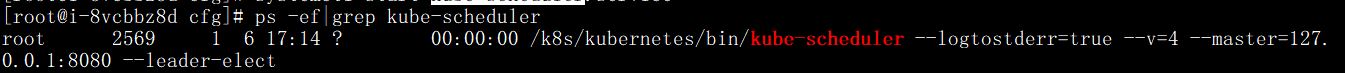
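A service can take a few seconds to come up, so a one-off `ps` may run too early. The sketch below is a generic retry helper that could wrap a probe such as `systemctl is-active --quiet kube-apiserver`; the helper name `retry` and the chosen counts are assumptions, and the demo uses `true` as a stand-in probe.

```shell
# retry TRIES DELAY CMD...: run CMD until it succeeds, at most TRIES times,
# sleeping DELAY seconds after each failed attempt
retry() {
  tries=$1; delay=$2; shift 2
  attempt=1
  while [ "$attempt" -le "$tries" ]; do
    if "$@"; then
      return 0
    fi
    attempt=$((attempt + 1))
    sleep "$delay"
  done
  return 1
}

# On the master this could be: retry 10 1 systemctl is-active --quiet kube-apiserver
retry 3 0 true && echo "probe succeeded"
```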

Deploy kube-scheduler

Create the kube-scheduler configuration file

vim /k8s/kubernetes/cfg/kube-scheduler

KUBE_SCHEDULER_OPTS="--logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect"

- --address: accepts HTTP /metrics requests on 127.0.0.1:10251; kube-scheduler does not yet support HTTPS requests;
- --kubeconfig: path to the kubeconfig file that kube-scheduler uses to connect to and authenticate with kube-apiserver;
- --leader-elect=true: cluster mode with leader election enabled; the node elected leader does the work while the others stay blocked;

Create the kube-scheduler systemd unit file

vim /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/k8s/kubernetes/cfg/kube-scheduler

ExecStart=/k8s/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start the service

systemctl daemon-reload

systemctl enable kube-scheduler.service

systemctl start kube-scheduler.service

Check that kube-scheduler is running

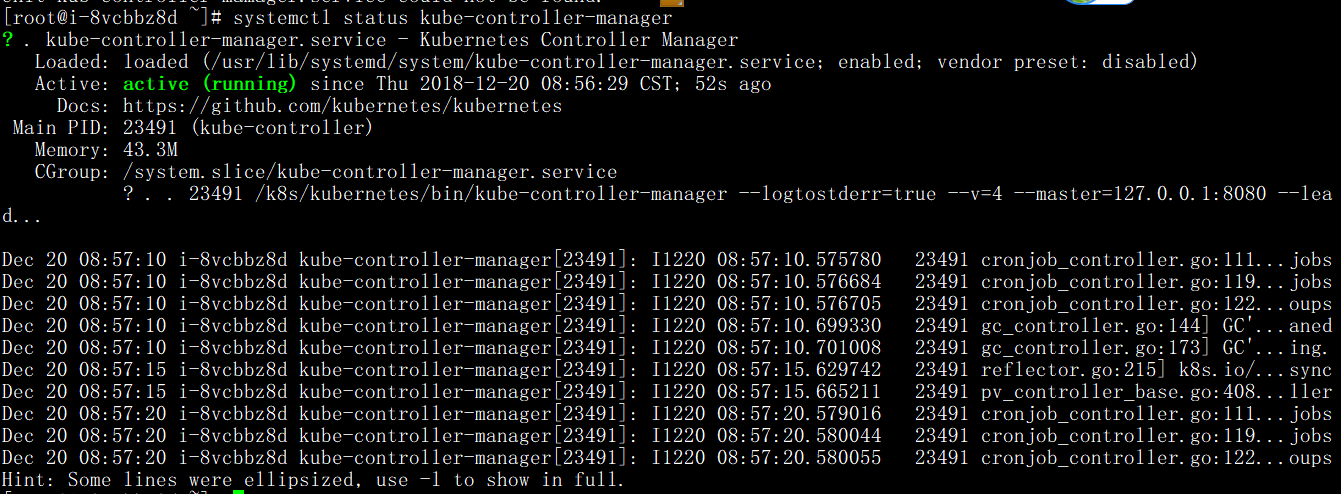

Deploy kube-controller-manager

Create the kube-controller-manager configuration file

vim /k8s/kubernetes/cfg/kube-controller-manager

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \
--v=4 \
--master=127.0.0.1:8080 \
--leader-elect=true \
--address=127.0.0.1 \
--service-cluster-ip-range=10.0.0.0/24 \
--cluster-name=kubernetes \
--cluster-signing-cert-file=/k8s/kubernetes/ssl/ca.pem \
--cluster-signing-key-file=/k8s/kubernetes/ssl/ca-key.pem \
--root-ca-file=/k8s/kubernetes/ssl/ca.pem \
--service-account-private-key-file=/k8s/kubernetes/ssl/ca-key.pem"

Create the kube-controller-manager systemd unit file

vim /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/k8s/kubernetes/cfg/kube-controller-manager

ExecStart=/k8s/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start the service

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl start kube-controller-manager

Check that kube-controller-manager is running

Add the binary directory /k8s/kubernetes/bin to the PATH variable

vim /etc/profile

PATH=/k8s/kubernetes/bin:$PATH:$HOME/bin

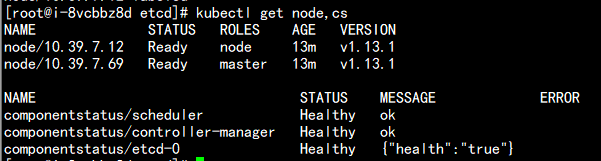

source /etc/profile

Check the master cluster status

# kubectl get cs,nodes

5. Deploy the node

A Kubernetes worker node runs the following components:

- docker (already deployed above)

- kubelet

- kube-proxy

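Deploy the kubelet component

- kubelet runs on every worker node; it receives requests from kube-apiserver, manages Pod containers, and executes interactive commands such as exec, run, and logs;

- kubelet registers the node with kube-apiserver automatically at startup, and its built-in cAdvisor collects and monitors the node's resource usage;

Copy kubelet and kube-proxy from the installation package to the binary directory on both the node and the master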

cp kubelet kube-proxy /k8s/kubernetes/bin/

Create the kubelet bootstrap kubeconfig file

vim environment.sh

# 创建kubelet bootstrapping kubeconfig

BOOTSTRAP_TOKEN=13589f773408da6c4d883a00b03e9f8a

KUBE_APISERVER="https:/10.39.7.69:6443"

# 设置集群参数

kubectl config set-cluster kubernetes

--certificate-authority=./ca.pem

--embed-certs=true

--server=${KUBE_APISERVER}

--kubeconfig=bootstrap.kubeconfig

# 设置客户端认证参数

kubectl config set-credentials kubelet-bootstrap

--token=${BOOTSTRAP_TOKEN}

--kubeconfig=bootstrap.kubeconfig

# 设置上下文参数

kubectl config set-context default

--cluster=kubernetes

--user=kubelet-bootstrap

--kubeconfig=bootstrap.kubeconfig

# 设置默认上下文

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# 创建kube-proxy kubeconfig文件

kubectl config set-cluster kubernetes

--certificate-authority=./ca.pem

--embed-certs=true

--server=${KUBE_APISERVER}

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy

--client-certificate=./kube-proxy.pem

--client-key=./kube-proxy-key.pem

--embed-certs=true

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default

--cluster=kubernetes

--user=kube-proxy

--kubeconfig=kube-proxy.kubeconfig

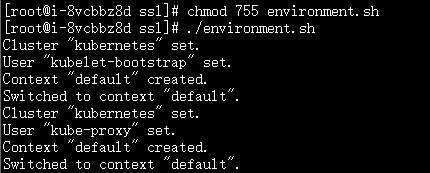

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig修改脚本权限并执行

Copy bootstrap.kubeconfig and kube-proxy.kubeconfig to the cfg directory on every node

cp bootstrap.kubeconfig kube-proxy.kubeconfig /k8s/kubernetes/cfg/

Create the kubelet parameter file and copy it to every node

Create the kubelet parameter template file:

vim /k8s/kubernetes/cfg/kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 10.39.7.12

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["10.0.0.2"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:
  anonymous:
    enabled: true

Create the kubelet options file

vim /k8s/kubernetes/cfg/kubelet

KUBELET_OPTS="--logtostderr=true \
--v=4 \
--hostname-override=10.39.7.12 \
--kubeconfig=/k8s/kubernetes/cfg/kubelet.kubeconfig \
--bootstrap-kubeconfig=/k8s/kubernetes/cfg/bootstrap.kubeconfig \
--config=/k8s/kubernetes/cfg/kubelet.config \
--cert-dir=/k8s/kubernetes/ssl \
--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

Create the kubelet systemd unit file

vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/k8s/kubernetes/cfg/kubelet

ExecStart=/k8s/kubernetes/bin/kubelet $KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

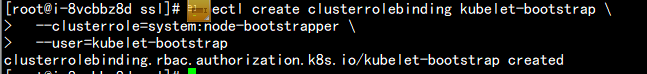

WantedBy=multi-user.target将kubelet-bootstrap用户绑定到系统集群角色(在master节点上执行)

kubectl create clusterrolebinding kubelet-bootstrap

--clusterrole=system:node-bootstrapper

--user=kubelet-bootstrap

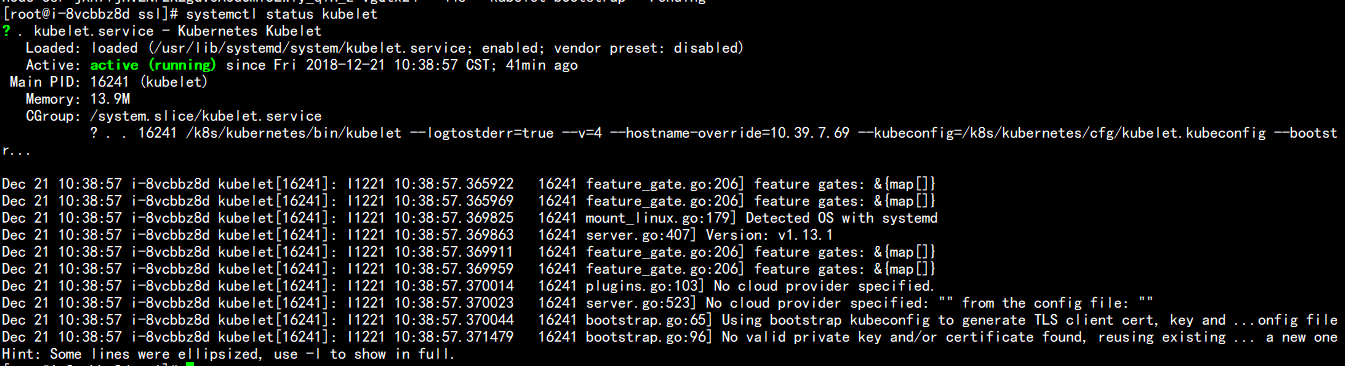

Start the service

systemctl daemon-reload

systemctl enable kubelet

systemctl start kubelet

Check the service status

Approve the kubelet CSR requests

kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-4TUZLMzoQkAtSWfZ5NsCzka-Zt9ZphFa9_CayLMNaC0 22m kubelet-bootstrap Pending

node-csr-jXn7fjHvENr2KEgGV3A3dOmi5LWly_qlH_z-vgQtx24 3h20m kubelet-bootstrap Pending

kubectl certificate approve node-csr-4TUZLMzoQkAtSWfZ5NsCzka-Zt9ZphFa9_CayLMNaC0

certificatesigningrequest.certificates.k8s.io/node-csr-4TUZLMzoQkAtSWfZ5NsCzka-Zt9ZphFa9_CayLMNaC0 approved

kubectl certificate approve node-csr-jXn7fjHvENr2KEgGV3A3dOmi5LWly_qlH_z-vgQtx24

certificatesigningrequest.certificates.k8s.io/node-csr-jXn7fjHvENr2KEgGV3A3dOmi5LWly_qlH_z-vgQtx24 approved


kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-4TUZLMzoQkAtSWfZ5NsCzka-Zt9ZphFa9_CayLMNaC0 25m kubelet-bootstrap Approved,Issued

node-csr-jXn7fjHvENr2KEgGV3A3dOmi5LWly_qlH_z-vgQtx24 3h22m kubelet-bootstrap Approved,Issued
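With more than a couple of nodes, approving each request by name becomes tedious. A minimal sketch of filtering the pending names out of the `kubectl get csr` output; the function name `pending_csrs` is hypothetical, and the sample input is adapted from the listing above with one request already approved.

```shell
# pending_csrs: read `kubectl get csr` output on stdin and print the names still Pending
pending_csrs() {
  awk '$NF == "Pending" { print $1 }'
}

# Sample input adapted from the listing above.
csr_sample='NAME AGE REQUESTOR CONDITION
node-csr-4TUZLMzoQkAtSWfZ5NsCzka-Zt9ZphFa9_CayLMNaC0 22m kubelet-bootstrap Pending
node-csr-jXn7fjHvENr2KEgGV3A3dOmi5LWly_qlH_z-vgQtx24 3h20m kubelet-bootstrap Approved,Issued'
printf '%s\n' "$csr_sample" | pending_csrs

# On the master (GNU xargs assumed for -r):
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```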

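Deploy the kube-proxy component

kube-proxy runs on every node. It watches the apiserver for changes to Services and Endpoints and creates routing rules to load-balance traffic to services.

Create the kube-proxy configuration file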

vim /k8s/kubernetes/cfg/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=true \
--v=4 \
--hostname-override=10.39.7.12 \
--cluster-cidr=10.0.0.0/24 \
--kubeconfig=/k8s/kubernetes/cfg/kube-proxy.kubeconfig"

- bindAddress: the listen address;
- clientConnection.kubeconfig: the kubeconfig file used to connect to the apiserver;
- clusterCIDR: kube-proxy uses --cluster-cidr to tell in-cluster traffic from external traffic; it applies SNAT to requests for Service IPs only when --cluster-cidr or --masquerade-all is set;
- hostnameOverride: must match the kubelet's value, otherwise kube-proxy cannot find the Node after startup and creates no ipvs rules;
- mode: use ipvs mode
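Since a mismatched hostname override fails silently, it may be worth comparing the two option files before starting kube-proxy. A minimal sketch; the function names are hypothetical, and the demo uses scratch files in place of /k8s/kubernetes/cfg/kubelet and /k8s/kubernetes/cfg/kube-proxy.

```shell
# hostname_override FILE: print the value of --hostname-override found in an options file
hostname_override() {
  sed -n 's/.*--hostname-override=\([^ "\\]*\).*/\1/p' "$1" | head -n 1
}

# same_override FILE_A FILE_B: succeed when both files carry the same non-empty value
same_override() {
  a=$(hostname_override "$1")
  b=$(hostname_override "$2")
  [ -n "$a" ] && [ "$a" = "$b" ]
}

# Demo on scratch files.
opt_dir=$(mktemp -d)
printf '%s\n' 'KUBELET_OPTS="--v=4 --hostname-override=10.39.7.12"' > "$opt_dir/kubelet"
printf '%s\n' 'KUBE_PROXY_OPTS="--v=4 --hostname-override=10.39.7.12"' > "$opt_dir/kube-proxy"
same_override "$opt_dir/kubelet" "$opt_dir/kube-proxy" && echo "hostname-override values match"
```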

Create the kube-proxy systemd unit file

vim /usr/lib/systemd/system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/k8s/kubernetes/cfg/kube-proxy

ExecStart=/k8s/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

Start the service

systemctl daemon-reload

systemctl enable kube-proxy

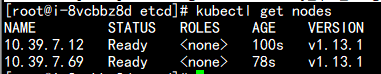

systemctl start kube-proxy

# systemctl status kube-proxy

Label the nodes

kubectl label node 10.39.7.69 node-role.kubernetes.io/master='master'

kubectl label node 10.39.7.12 node-role.kubernetes.io/node='node'

Check the cluster status

Closing

This concludes the notes on installing Kubernetes compiled by 天真蓝天.
