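Contents

- I. ZooKeeper Overview and Installation
- II. ZooKeeper Kerberos Authentication
- 1) Installing Kerberos
- 2) Creating principals and generating keytab files (preparation)
- 3) Standalone ZooKeeper configuration
- 1. Configure zoo.cfg
- 2. Configure jaas.conf
- 3. Configure java.env
- 4. Copy the configuration to the other nodes
- 5. Start the service
- 6. Verify by logging in with the client
- 4) Configuring the ZooKeeper bundled with Kafka
- 1. Move the Kerberos files into place
- 2. Create the JAAS configuration file (server side)
- 3. Modify the service startup configuration
- 4. Modify the service startup script
- 5. Create the JAAS configuration file (client side)
- 6. Modify the client script
- 7. Copy the configuration to the other nodes and adjust it
- 8. Start the service
- 9. Test and verify
- III. ZooKeeper Username/Password Authentication
- 1) Standalone ZooKeeper configuration
- 1. Create the storage directory
- 2. Configure zoo.cfg
- 3. Configure JAAS
- 4. Configure the java.env environment file
- 5. Copy the configuration to the other nodes
- 6. Start the ZooKeeper service
- 7. Verify with the ZooKeeper client
- 2) Configuring the ZooKeeper bundled with Kafka
- 1. Create the storage directory
- 2. Configure zookeeper.properties
- 3. Configure JAAS
- 4. Configure the server environment in zookeeper-server-start.sh
- 5. Configure the client environment in zookeeper-shell.sh
- 6. Copy the configuration to the other nodes
- 7. Start the ZooKeeper service
- 8. Verify with the ZooKeeper client
- IV. ZooKeeper + Kafka Authentication
- 1) Kerberos authentication on both Kafka and ZooKeeper
- 1. Enable ZooKeeper Kerberos authentication
- 2. Configure server.properties
- 3. Configure JAAS
- 4. Modify the Kafka environment variables
- 5. Copy the configuration to the other nodes
- 6. Adjust the configuration on the other nodes
- 7. Start the Kafka service
- 8. Test and verify with the Kafka client
- 2) Username/password authentication on both Kafka and ZooKeeper
- 1. Enable ZooKeeper username/password authentication
- 2. Configure server.properties
- 3. Configure JAAS
- 4. Modify the Kafka environment variables
- 5. Copy the configuration to the other nodes
- 6. Adjust the configuration on the other nodes
- 7. Start the Kafka service
- 8. Test and verify with the Kafka client
- 3) ZooKeeper username/password authentication + Kafka Kerberos authentication
- 1. Enable ZooKeeper username/password authentication
- 2. Configure server.properties
- 3. Configure JAAS
- 4. Modify the Kafka environment variables
- 5. Copy the configuration to the other nodes
- 6. Adjust the configuration on the other nodes
- 7. Start the Kafka service
- 8. Test and verify with the Kafka client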

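I. ZooKeeper Overview and Installation

For an overview of ZooKeeper and installation instructions, see my earlier article: "Distributed Open-Source Coordination Service: ZooKeeper".

ZooKeeper can be deployed in two ways: standalone, or as the instance bundled with Kafka. Both are covered here. The configuration is largely the same, with only a few minor differences. For Kafka authentication, see my earlier article: "Big Data Hadoop: Kerberos in Practice (Kafka Kerberos Authentication, Username/Password Authentication, and CDH Kerberos Authentication)".

II. ZooKeeper Kerberos Authentication

1) Installing Kerberos

For Kerberos installation, see my earlier article: "Kerberos Authentication Principles and Environment Deployment".

2) Creating principals and generating keytab files (preparation)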

# Server principals

kadmin.local -q "addprinc -randkey zookeeper/hadoop-node1@HADOOP.COM"

kadmin.local -q "addprinc -randkey zookeeper/hadoop-node2@HADOOP.COM"

kadmin.local -q "addprinc -randkey zookeeper/hadoop-node3@HADOOP.COM"

# Export the keytab files

kadmin.local -q "xst -k /root/zookeeper.keytab zookeeper/hadoop-node1@HADOOP.COM"

# Use temporary file names for now; each file must be renamed to zookeeper.keytab on its own node before use

kadmin.local -q "xst -k /root/zookeeper-node2.keytab zookeeper/hadoop-node2@HADOOP.COM"

kadmin.local -q "xst -k /root/zookeeper-node3.keytab zookeeper/hadoop-node3@HADOOP.COM"

# Client principal

kadmin.local -q "addprinc -randkey zkcli@HADOOP.COM"

# Export the keytab file

kadmin.local -q "xst -k /root/zkcli.keytab zkcli@HADOOP.COM"
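The per-host commands above all follow one pattern, so they can be generated in a loop. The following is a minimal sketch and not part of the original setup: the function name gen_zk_principal_cmds and the zookeeper-<host>.keytab file naming are assumptions made for illustration, and the script only prints the commands (a dry run) instead of running kadmin.local.

```shell
#!/usr/bin/env bash
# Print the kadmin.local commands that create and export one ZooKeeper
# server principal per host. Nothing is executed against the KDC.
# Arguments: <realm> <keytab_dir> <host>...
gen_zk_principal_cmds() {
  local realm="$1" keytab_dir="$2"
  shift 2
  local host
  for host in "$@"; do
    printf 'kadmin.local -q "addprinc -randkey zookeeper/%s@%s"\n' "$host" "$realm"
    # Hypothetical naming: one keytab per host, named after the host.
    printf 'kadmin.local -q "xst -k %s/zookeeper-%s.keytab zookeeper/%s@%s"\n' \
      "$keytab_dir" "$host" "$host" "$realm"
  done
}

# Dry run with the realm and hosts used in this article.
gen_zk_principal_cmds HADOOP.COM /root hadoop-node1 hadoop-node2 hadoop-node3
```

After reviewing the printed commands, they can be run on the KDC host by piping the output to sh.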

3) Standalone ZooKeeper configuration

1. Configure zoo.cfg

$ cd $ZOOKEEPER_HOME

# Move the keytab files generated above into the ZooKeeper directory

$ mkdir conf/kerberos

$ mv /root/zookeeper.keytab /root/zookeeper-node2.keytab /root/zookeeper-node3.keytab /root/zkcli.keytab conf/kerberos/

$ vi conf/zoo.cfg

# Add the following to conf/zoo.cfg:

authProvider.1=org.apache.zookeeper.server.auth.SASLAuthenticationProvider

jaasLoginRenew=3600000

# Strip the host part from the principal so that services such as HBase can access ZooKeeper without errors; a "GSS initiate failed" error may mean this setting is missing

kerberos.removeHostFromPrincipal=true

kerberos.removeRealmFromPrincipal=true

2. Configure jaas.conf

Put the server and client configuration in the same file:

$ cat > $ZOOKEEPER_HOME/conf/kerberos/jaas.conf <<EOF

Server {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

keyTab="/opt/bigdata/hadoop/server/apache-zookeeper-3.8.0-bin/conf/kerberos/zookeeper.keytab"

storeKey=true

useTicketCache=false

principal="zookeeper/hadoop-node1@HADOOP.COM";

};

Client {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

keyTab="/opt/bigdata/hadoop/server/apache-zookeeper-3.8.0-bin/conf/kerberos/zkcli.keytab"

storeKey=true

useTicketCache=false

principal="zkcli@HADOOP.COM";

};

EOF

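The JAAS configuration file defines the properties used for authentication, such as the service principal and the location of the keytab file. The properties mean the following:

- useKeyTab: boolean; whether to use a keytab file (true in this case).
- keyTab: location and name of the principal's keytab file. The path should be enclosed in double quotes.
- storeKey: boolean; allows the key to be stored in the user's private credentials.
- useTicketCache: boolean; allows the ticket to be obtained from the ticket cache.
- debug: boolean; prints debug messages to help with troubleshooting.
- principal: name of the service principal to use.

3. Configure java.env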

$ cat > $ZOOKEEPER_HOME/conf/java.env <<EOF

export JVMFLAGS="-Djava.security.auth.login.config=/opt/bigdata/hadoop/server/apache-zookeeper-3.8.0-bin/conf/kerberos/jaas.conf"

EOF

4. Copy the configuration to the other nodes

# Copy the Kerberos authentication files

$ scp -r $ZOOKEEPER_HOME/conf/kerberos hadoop-node2:$ZOOKEEPER_HOME/conf/

$ scp -r $ZOOKEEPER_HOME/conf/kerberos hadoop-node3:$ZOOKEEPER_HOME/conf/

# Copy zoo.cfg

$ scp $ZOOKEEPER_HOME/conf/zoo.cfg hadoop-node2:$ZOOKEEPER_HOME/conf/

$ scp $ZOOKEEPER_HOME/conf/zoo.cfg hadoop-node3:$ZOOKEEPER_HOME/conf/

# Copy java.env

$ scp $ZOOKEEPER_HOME/conf/java.env hadoop-node2:$ZOOKEEPER_HOME/conf/

$ scp $ZOOKEEPER_HOME/conf/java.env hadoop-node3:$ZOOKEEPER_HOME/conf/

[Tip] Remember to rename zookeeper-node2.keytab and zookeeper-node3.keytab back to zookeeper.keytab on their respective nodes, and change the hostname in the jaas.conf principal to match each node.

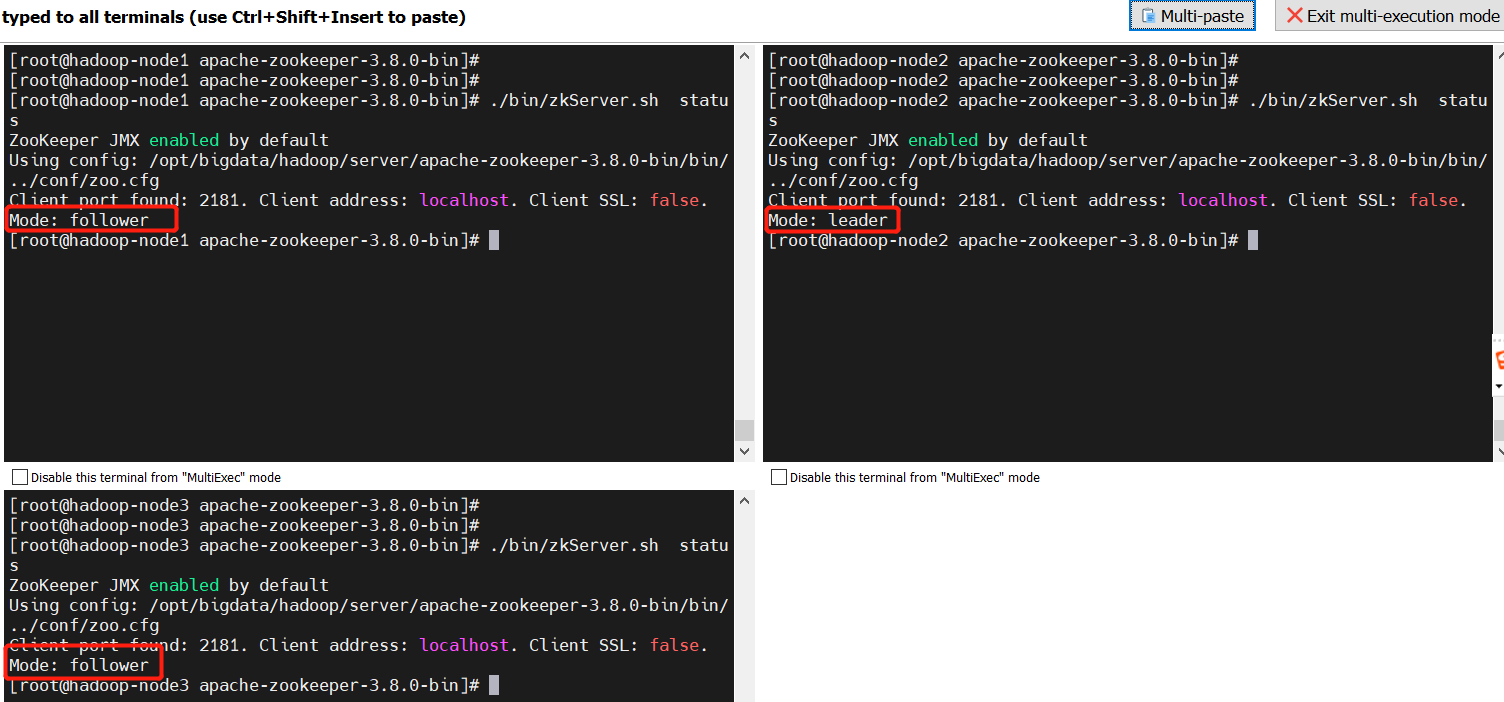
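Because only the principal differs between nodes, the Server section can be rendered per host instead of being edited by hand. This is a minimal sketch under that assumption: render_server_jaas is a hypothetical helper, and it prints only the Server section, so the Client section from the jaas.conf above would still have to be appended.

```shell
#!/usr/bin/env bash
# Print a JAAS "Server" section for one ZooKeeper node.
# Arguments: <host> <realm> <absolute path to keytab>
render_server_jaas() {
  local host="$1" realm="$2" keytab="$3"
  cat <<EOF
Server {
  com.sun.security.auth.module.Krb5LoginModule required
  useKeyTab=true
  keyTab="${keytab}"
  storeKey=true
  useTicketCache=false
  principal="zookeeper/${host}@${realm}";
};
EOF
}

# Example for hadoop-node2, using the keytab path from this article.
render_server_jaas hadoop-node2 HADOOP.COM \
  /opt/bigdata/hadoop/server/apache-zookeeper-3.8.0-bin/conf/kerberos/zookeeper.keytab
```

On each node the output would be redirected into that node's own jaas.conf, with the first argument replaced by the local hostname.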

5. Start the service

$ cd $ZOOKEEPER_HOME

$ ./bin/zkServer.sh start

# Check the status

$ ./bin/zkServer.sh status

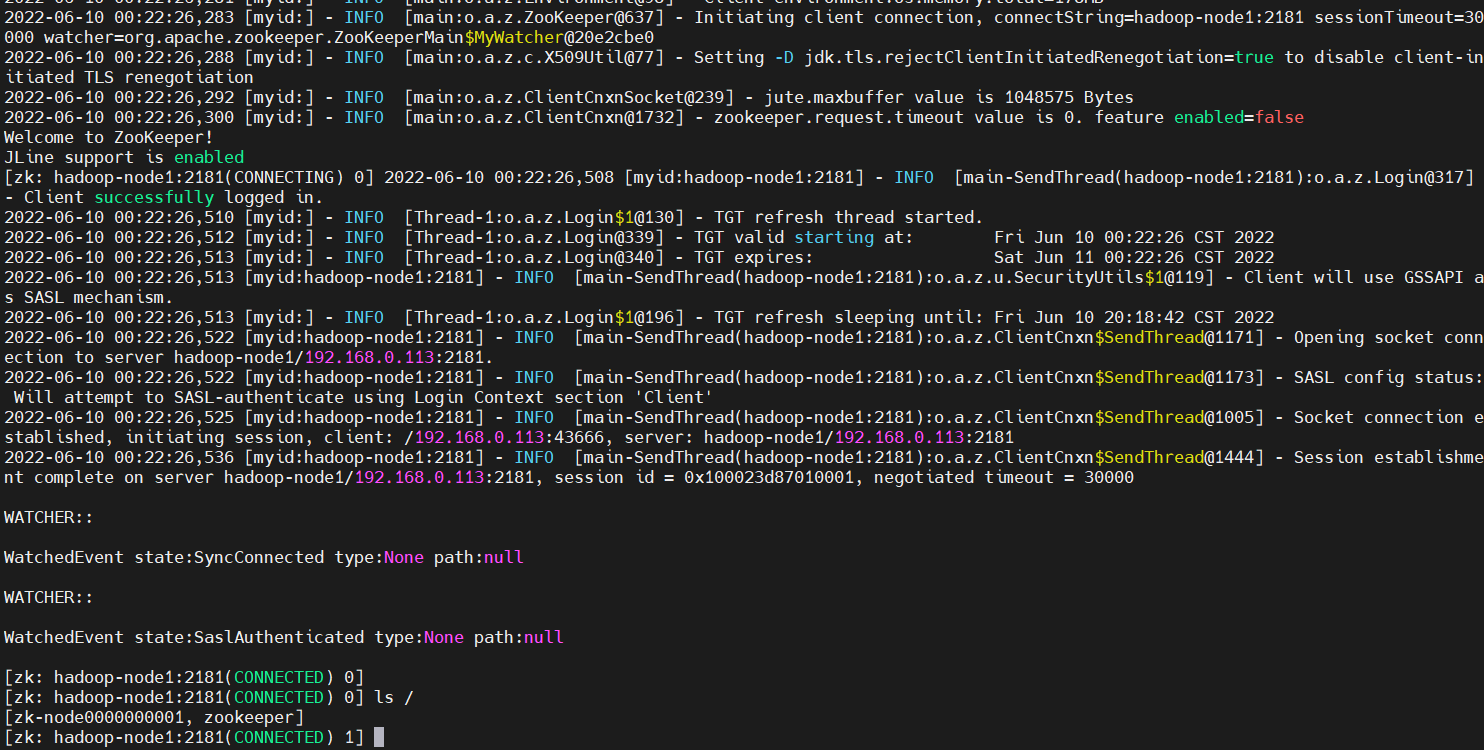

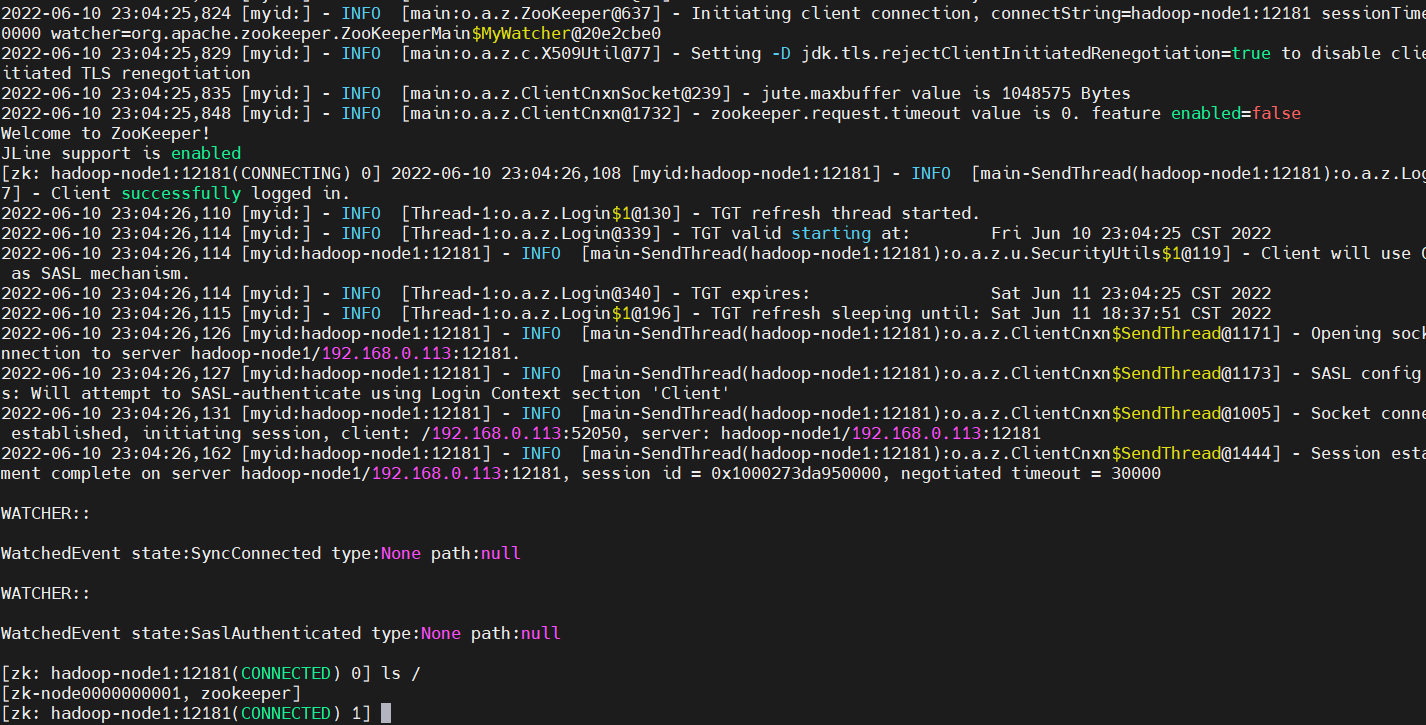

6. Verify by logging in with the client

$ cd $ZOOKEEPER_HOME

$ ./bin/zkCli.sh -server hadoop-node1:2181

ls /

4) Configuring the ZooKeeper bundled with Kafka

1. Move the Kerberos files into place

$ mkdir $KAFKA_HOME/config/zk-kerberos/

$ mv /root/zkcli.keytab /root/zookeeper.keytab /root/zookeeper-node2.keytab /root/zookeeper-node3.keytab $KAFKA_HOME/config/zk-kerberos/

$ ll $KAFKA_HOME/config/zk-kerberos/

# Copy krb5.conf into $KAFKA_HOME/config/zk-kerberos/

$ cp /etc/krb5.conf $KAFKA_HOME/config/zk-kerberos/

2. Create the JAAS configuration file (server side)

Create the jaas.conf file in the $KAFKA_HOME/config/zk-kerberos/ configuration directory with the following content:

$ cat > $KAFKA_HOME/config/zk-kerberos/jaas.conf<<EOF

Server {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

keyTab="/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-kerberos/zookeeper.keytab"

storeKey=true

useTicketCache=false

principal="zookeeper/hadoop-node1@HADOOP.COM";

};

EOF

3. Modify the service startup configuration

Here too, copy the configuration file and edit the copy, which makes it easy to switch back and forth.

$ cp $KAFKA_HOME/config/zookeeper.properties $KAFKA_HOME/config/zookeeper-kerberos.properties

Modify or add the following settings:

authProvider.1=org.apache.zookeeper.server.auth.SASLAuthenticationProvider

jaasLoginRenew=3600000

sessionRequireClientSASLAuth=true

4. Modify the service startup script

$ cp $KAFKA_HOME/bin/zookeeper-server-start.sh $KAFKA_HOME/bin/zookeeper-server-start-kerberos.sh

Add the following on the second-to-last line of bin/zookeeper-server-start-kerberos.sh:

export KAFKA_OPTS="-Djava.security.krb5.conf=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-kerberos/krb5.conf -Djava.security.auth.login.config=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-kerberos/jaas.conf"

5. Create the JAAS configuration file (client side)

$ cat > $KAFKA_HOME/config/zk-kerberos/zookeeper-client-jaas.conf<<EOF

// Client configuration

Client {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

keyTab="/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-kerberos/zkcli.keytab"

storeKey=true

useTicketCache=false

principal="zkcli@HADOOP.COM";

};

EOF

6. Modify the client script

$ cp $KAFKA_HOME/bin/zookeeper-shell.sh $KAFKA_HOME/bin/zookeeper-shell-kerberos.sh

Add the following on the second-to-last line of bin/zookeeper-shell-kerberos.sh:

export KAFKA_OPTS="-Djava.security.krb5.conf=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-kerberos/krb5.conf -Djava.security.auth.login.config=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-kerberos/zookeeper-client-jaas.conf"

7. Copy the configuration to the other nodes and adjust it

# Kerberos authentication files

$ scp -r $KAFKA_HOME/config/zk-kerberos hadoop-node2:$KAFKA_HOME/config/

$ scp -r $KAFKA_HOME/config/zk-kerberos hadoop-node3:$KAFKA_HOME/config/

# Service startup configuration

$ scp $KAFKA_HOME/config/zookeeper-kerberos.properties hadoop-node2:$KAFKA_HOME/config/

$ scp $KAFKA_HOME/config/zookeeper-kerberos.properties hadoop-node3:$KAFKA_HOME/config/

# Service startup script

$ scp $KAFKA_HOME/bin/zookeeper-server-start-kerberos.sh hadoop-node2:$KAFKA_HOME/bin/

$ scp $KAFKA_HOME/bin/zookeeper-server-start-kerberos.sh hadoop-node3:$KAFKA_HOME/bin/

[Tip] Two things need to be changed on each node:

- Rename the keytab file back to zookeeper.keytab.
- Change the hostname in the jaas.conf configuration file to the current machine's hostname.

On hadoop-node2, run:

$ mv $KAFKA_HOME/config/zk-kerberos/zookeeper-node2.keytab $KAFKA_HOME/config/zk-kerberos/zookeeper.keytab

$ vi $KAFKA_HOME/config/zk-kerberos/jaas.conf

On hadoop-node3, run:

$ mv $KAFKA_HOME/config/zk-kerberos/zookeeper-node3.keytab $KAFKA_HOME/config/zk-kerberos/zookeeper.keytab

$ vi $KAFKA_HOME/config/zk-kerberos/jaas.conf
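The manual vi step only changes the host part of the principal, so it can also be scripted. The sketch below assumes the principal has the form zookeeper/<host>@REALM, as in this article; set_principal_host is a hypothetical helper, and it rewrites the file in place, so keeping a backup first is advisable.

```shell
#!/usr/bin/env bash
# Replace the host part of principal="zookeeper/<host>@REALM" in a JAAS file.
# Arguments: <jaas file> <new hostname>
set_principal_host() {
  local file="$1" new_host="$2" tmp
  tmp="$(mktemp)" || return 1
  # \1 keeps the text before the host, \2 keeps the "@" that starts the realm.
  sed -E "s#(principal=\"zookeeper/)[^@\"]+(@)#\1${new_host}\2#" "$file" > "$tmp" \
    && cat "$tmp" > "$file"   # overwrite in place so file permissions are kept
  local rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Example (run on the node itself):
#   set_principal_host "$KAFKA_HOME/config/zk-kerberos/jaas.conf" "$(hostname)"
```

The example relies on hostname returning the same name that was used when the principal was created (hadoop-node2, hadoop-node3, and so on).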

8. Start the service

$ cd $KAFKA_HOME

$ ./bin/zookeeper-server-start-kerberos.sh -daemon ./config/zookeeper-kerberos.properties

9. Test and verify

$ cd $KAFKA_HOME

$ ./bin/zookeeper-shell-kerberos.sh hadoop-node1:2181

III. ZooKeeper Username/Password Authentication

1) Standalone ZooKeeper configuration

1. Create the storage directory

$ mkdir $ZOOKEEPER_HOME/conf/userpwd

2. Configure zoo.cfg

$ vi $ZOOKEEPER_HOME/conf/zoo.cfg

# Add the following settings:

authProvider.1=org.apache.zookeeper.server.auth.SASLAuthenticationProvider

jaasLoginRenew=3600000

sessionRequireClientSASLAuth=true

3. Configure JAAS

For convenience, the server and client configuration both go in a single file:

$ cat >$ZOOKEEPER_HOME/conf/userpwd/jaas.conf <<EOF

Server {

org.apache.zookeeper.server.auth.DigestLoginModule required

user_admin="123456"

user_kafka="123456";

};

Client {

org.apache.zookeeper.server.auth.DigestLoginModule required

username="kafka"

password="123456";

};

EOF
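JAAS files are sensitive to quoting: a typographic quote (“ or ”) pasted in place of a straight double quote can keep the login configuration from parsing. The check below is a small sketch, using a hypothetical helper named find_non_ascii, that lists any lines containing bytes outside printable ASCII and whitespace.

```shell
#!/usr/bin/env bash
# Print (with line numbers) every line of a file that contains a byte outside
# printable ASCII and whitespace. Exit status 0 means such lines were found.
# Arguments: <file>
find_non_ascii() {
  # LC_ALL=C makes grep work byte by byte; -a treats the file as text.
  LC_ALL=C grep -an '[^[:print:][:space:]]' "$1"
}

# Example:
#   if find_non_ascii "$ZOOKEEPER_HOME/conf/userpwd/jaas.conf"; then
#     echo "jaas.conf contains non-ASCII characters; check the quotes" >&2
#   fi
```

Running this before starting ZooKeeper turns a hard-to-read startup failure into an explicit list of suspicious lines.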

4. Configure the java.env environment file

$ cat >$ZOOKEEPER_HOME/conf/java.env<<EOF

JVMFLAGS="-Djava.security.auth.login.config=/opt/bigdata/hadoop/server/apache-zookeeper-3.8.0-bin/conf/userpwd/jaas.conf"

EOF

5. Copy the configuration to the other nodes

# JAAS files

$ scp -r $ZOOKEEPER_HOME/conf/userpwd hadoop-node2:$ZOOKEEPER_HOME/conf/

$ scp -r $ZOOKEEPER_HOME/conf/userpwd hadoop-node3:$ZOOKEEPER_HOME/conf/

# zoo.cfg

$ scp $ZOOKEEPER_HOME/conf/zoo.cfg hadoop-node2:$ZOOKEEPER_HOME/conf/

$ scp $ZOOKEEPER_HOME/conf/zoo.cfg hadoop-node3:$ZOOKEEPER_HOME/conf/

# java.env

$ scp $ZOOKEEPER_HOME/conf/java.env hadoop-node2:$ZOOKEEPER_HOME/conf/

$ scp $ZOOKEEPER_HOME/conf/java.env hadoop-node3:$ZOOKEEPER_HOME/conf/

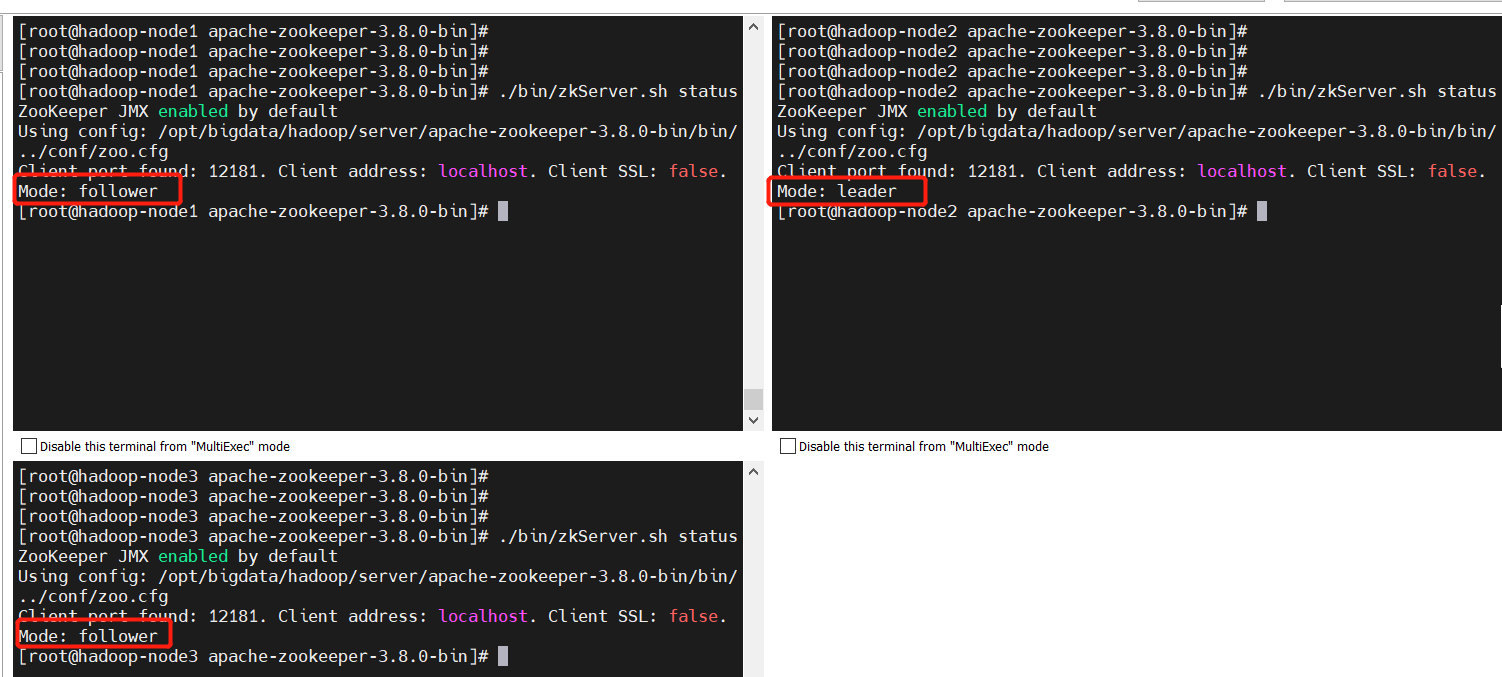

6. Start the ZooKeeper service

$ cd $ZOOKEEPER_HOME

$ ./bin/zkServer.sh start

$ ./bin/zkServer.sh status

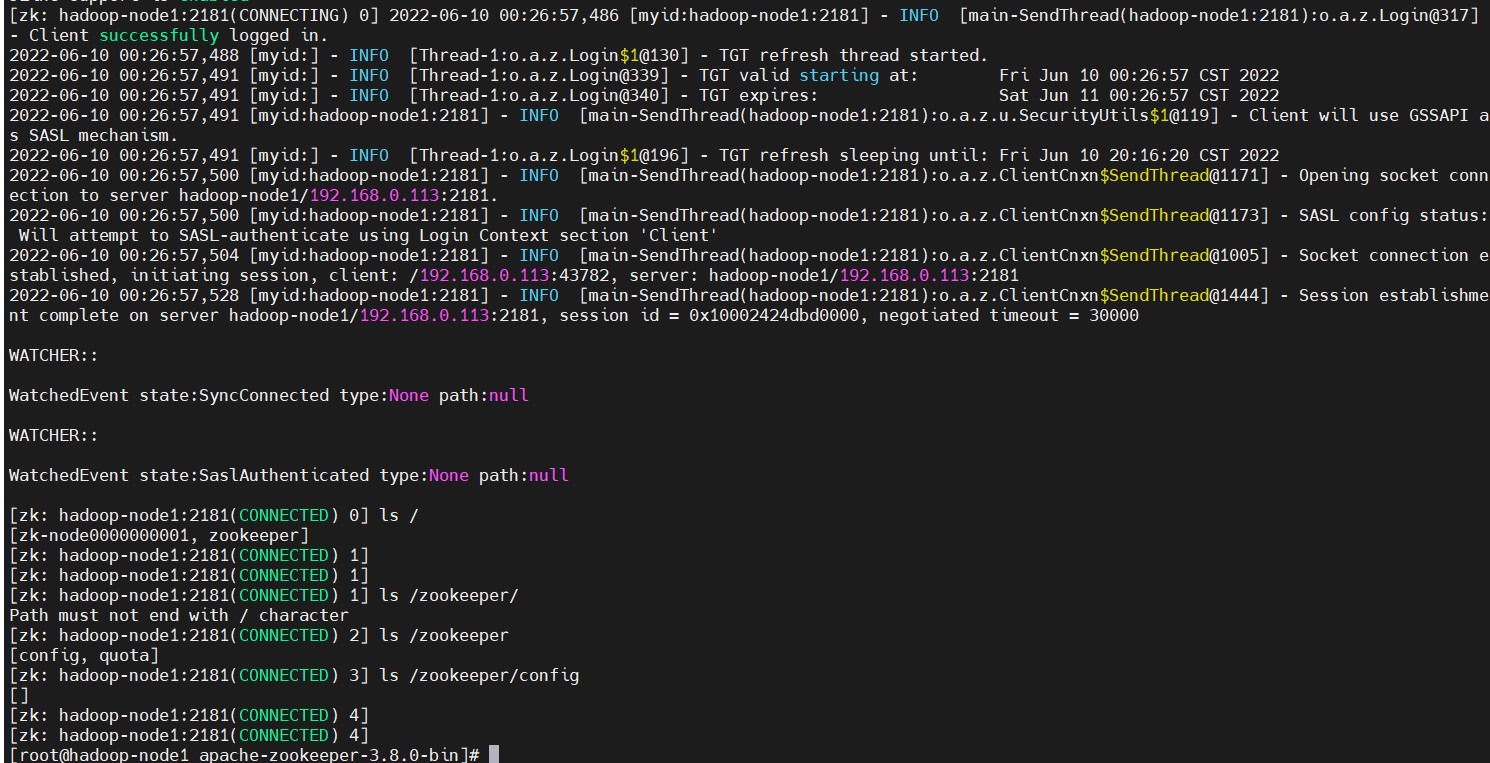

7. Verify with the ZooKeeper client

[Tip] Note that the port has been changed to 12181 here.

$ cd $ZOOKEEPER_HOME

$ ./bin/zkCli.sh -server hadoop-node1:12181

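2) Configuring the ZooKeeper bundled with Kafka

1. Create the storage directory

$ mkdir $KAFKA_HOME/config/zk-userpwd

2. Configure zookeeper.properties

$ cp $KAFKA_HOME/config/zookeeper.properties $KAFKA_HOME/config/zookeeper-userpwd.properties

# Add the following settings to zookeeper-userpwd.properties: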

authProvider.1=org.apache.zookeeper.server.auth.SASLAuthenticationProvider

jaasLoginRenew=3600000

sessionRequireClientSASLAuth=true

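3. Configure JAAS

[Tip] Here the server and client configuration must go in separate files, because there is no equivalent of the java.env configuration file.

The server configuration is as follows: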

$ cat >$KAFKA_HOME/config/zk-userpwd/zk-server-jaas.conf <<EOF

Server {

org.apache.zookeeper.server.auth.DigestLoginModule required

user_admin="123456"

user_kafka="123456";

};

EOF

The client configuration is as follows:

$ cat >$KAFKA_HOME/config/zk-userpwd/zk-client-jaas.conf <<EOF

Client {

org.apache.zookeeper.server.auth.DigestLoginModule required

username="kafka"

password="123456";

};

EOF

4. Configure the server environment in zookeeper-server-start.sh

$ cp $KAFKA_HOME/bin/zookeeper-server-start.sh $KAFKA_HOME/bin/zookeeper-server-start-userpwd.sh

$ vi $KAFKA_HOME/bin/zookeeper-server-start-userpwd.sh

# Add the following:

export KAFKA_OPTS="-Djava.security.auth.login.config=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-userpwd/zk-server-jaas.conf"

5. Configure the client environment in zookeeper-shell.sh

$ cp $KAFKA_HOME/bin/zookeeper-shell.sh $KAFKA_HOME/bin/zookeeper-shell-userpwd.sh

$ vi $KAFKA_HOME/bin/zookeeper-shell-userpwd.sh

# Add the following:

export KAFKA_OPTS="-Djava.security.auth.login.config=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-userpwd/zk-client-jaas.conf"

6. Copy the configuration to the other nodes

# JAAS configuration files

$ scp -r $KAFKA_HOME/config/zk-userpwd hadoop-node2:$KAFKA_HOME/config/

$ scp -r $KAFKA_HOME/config/zk-userpwd hadoop-node3:$KAFKA_HOME/config/

# zookeeper-userpwd.properties

$ scp $KAFKA_HOME/config/zookeeper-userpwd.properties hadoop-node2:$KAFKA_HOME/config/

$ scp $KAFKA_HOME/config/zookeeper-userpwd.properties hadoop-node3:$KAFKA_HOME/config/

# zookeeper-server-start-userpwd.sh

$ scp $KAFKA_HOME/bin/zookeeper-server-start-userpwd.sh hadoop-node2:$KAFKA_HOME/bin/

$ scp $KAFKA_HOME/bin/zookeeper-server-start-userpwd.sh hadoop-node3:$KAFKA_HOME/bin/

# zookeeper-shell-userpwd.sh

$ scp $KAFKA_HOME/bin/zookeeper-shell-userpwd.sh hadoop-node2:$KAFKA_HOME/bin/

$ scp $KAFKA_HOME/bin/zookeeper-shell-userpwd.sh hadoop-node3:$KAFKA_HOME/bin/

7. Start the ZooKeeper service

$ cd $KAFKA_HOME

$ ./bin/zookeeper-server-start-userpwd.sh ./config/zookeeper-userpwd.properties

# Or run in the background

$ ./bin/zookeeper-server-start-userpwd.sh -daemon ./config/zookeeper-userpwd.properties

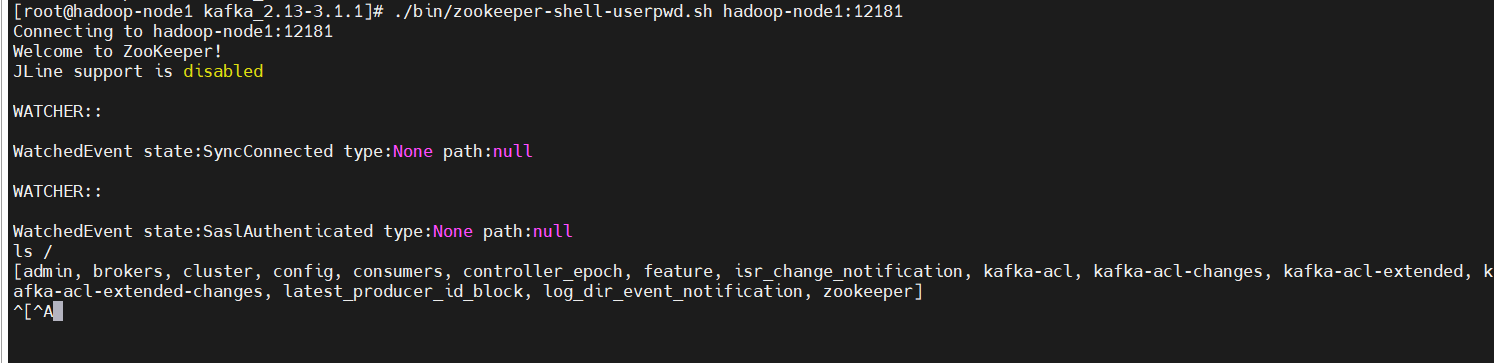

8. Verify with the ZooKeeper client

$ cd $KAFKA_HOME

$ ./bin/zookeeper-shell-userpwd.sh hadoop-node1:12181

ls /

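IV. ZooKeeper + Kafka Authentication

For Kafka Kerberos authentication, see my earlier article: "Big Data Hadoop: Kerberos in Practice (Kafka Kerberos Authentication, Username/Password Authentication, and CDH Kerberos Authentication)".

1) Kerberos authentication on both Kafka and ZooKeeper

1. Enable ZooKeeper Kerberos authentication

The configuration is covered in detail above; only the startup commands are given here.

$ cd $KAFKA_HOME

$ ./bin/zookeeper-server-start-kerberos.sh ./config/zookeeper-kerberos.properties

# Or start in the background

$ ./bin/zookeeper-server-start-kerberos.sh -daemon ./config/zookeeper-kerberos.properties

2. Configure server.properties

$ cp $KAFKA_HOME/config/server.properties $KAFKA_HOME/config/server-zkcli-kerberos.properties

$ vi $KAFKA_HOME/config/server-zkcli-kerberos.properties

# Change the configuration as follows:

listeners=SASL_PLAINTEXT://0.0.0.0:19092

# Listeners advertised to clients. If unset, the value of listeners is used. This setting publishes the broker's listener information to ZooKeeper. On the other nodes, change it to that node's own hostname or IP; 0.0.0.0 is not supported.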

advertised.listeners=SASL_PLAINTEXT://hadoop-node1:19092

security.inter.broker.protocol=SASL_PLAINTEXT

sasl.mechanism.inter.broker.protocol=GSSAPI

sasl.enabled.mechanisms=GSSAPI

sasl.kerberos.service.name=kafka-server

3. Configure JAAS

$ cp $KAFKA_HOME/config/kerberos/kafka-server-jaas.conf $KAFKA_HOME/config/kerberos/kafka-server-zkcli-jaas.conf

$ vi $KAFKA_HOME/config/kerberos/kafka-server-zkcli-jaas.conf

# Add the following section:

// Zookeeper client authentication

Client {

com.sun.security.auth.module.Krb5LoginModule required

useKeyTab=true

storeKey=true

keyTab="/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/zk-kerberos/zkcli.keytab"

principal="zkcli@HADOOP.COM";

};

4. Modify the Kafka environment variables

$ cp $KAFKA_HOME/bin/kafka-server-start.sh $KAFKA_HOME/bin/kafka-server-start-zkcli-kerberos.sh

# Add or change the following in the copied script:

export KAFKA_OPTS="-Dzookeeper.sasl.client=true -Dzookeeper.sasl.client.username=zookeeper -Djava.security.krb5.conf=/etc/krb5.conf -Djava.security.auth.login.config=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/kerberos/kafka-server-zkcli-jaas.conf"

5. Copy the configuration to the other nodes

# server-zkcli-kerberos.properties

$ scp $KAFKA_HOME/config/server-zkcli-kerberos.properties hadoop-node2:$KAFKA_HOME/config/

$ scp $KAFKA_HOME/config/server-zkcli-kerberos.properties hadoop-node3:$KAFKA_HOME/config/

# kafka-server-zkcli-jaas.conf

$ scp $KAFKA_HOME/config/kerberos/kafka-server-zkcli-jaas.conf hadoop-node2:$KAFKA_HOME/config/kerberos/

$ scp $KAFKA_HOME/config/kerberos/kafka-server-zkcli-jaas.conf hadoop-node3:$KAFKA_HOME/config/kerberos/

# kafka-server-start-zkcli-kerberos.sh

$ scp $KAFKA_HOME/bin/kafka-server-start-zkcli-kerberos.sh hadoop-node2:$KAFKA_HOME/bin/

$ scp $KAFKA_HOME/bin/kafka-server-start-zkcli-kerberos.sh hadoop-node3:$KAFKA_HOME/bin/

6. Adjust the configuration on the other nodes

# Change broker.id and the hostname in advertised.listeners

$ vi $KAFKA_HOME/config/server-zkcli-kerberos.properties

# Change the hostname

$ vi $KAFKA_HOME/config/kerberos/kafka-server-zkcli-jaas.conf
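The per-node edits to the properties file are plain key=value changes, so they can be applied without an editor. This is a minimal sketch: set_prop is a hypothetical helper, and the broker.id value in the example is an assumption, since the article does not list the IDs used on each node.

```shell
#!/usr/bin/env bash
# Set key=value in a Java-style properties file: replace the line if the key
# already exists at the start of a line, otherwise append it.
# Arguments: <file> <key> <value>
set_prop() {
  local file="$1" key="$2" value="$3" tmp
  tmp="$(mktemp)" || return 1
  awk -v k="$key" -v v="$value" '
    index($0, k "=") == 1 { print k "=" v; found = 1; next }  # existing key
    { print }                                                 # everything else
    END { if (!found) print k "=" v }                         # key was missing
  ' "$file" > "$tmp" && cat "$tmp" > "$file"
  local rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Example for hadoop-node2 (the broker.id value is an assumption):
#   set_prop "$KAFKA_HOME/config/server-zkcli-kerberos.properties" broker.id 1
#   set_prop "$KAFKA_HOME/config/server-zkcli-kerberos.properties" \
#     advertised.listeners SASL_PLAINTEXT://hadoop-node2:19092
```

Commented-out lines such as #advertised.listeners=... are left untouched because the match is anchored at the start of the line.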

7、启动kafka服务

$ cd $KAFKA_HOME

$ ./bin/kafka-server-start-zkcli-kerberos.sh ./config/server-zkcli-kerberos.properties

# 后台执行

$ ./bin/kafka-server-start-zkcli-kerberos.sh -daemon ./config/server-zkcli-kerberos.properties

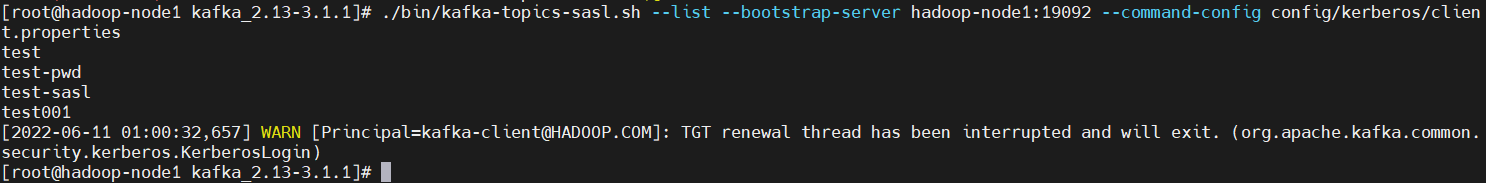

8、kafka客户端测试验证

$ cd $KAFKA_HOME

# 查看topic列表

$ ./bin/kafka-topics-sasl.sh --list --bootstrap-server hadoop-node1:19092 --command-config config/kerberos/client.properties

2) Username/password authentication on both Kafka and ZooKeeper

1. Enable ZooKeeper username/password authentication

$ cd $KAFKA_HOME

$ ./bin/zookeeper-server-start-userpwd.sh ./config/zookeeper-userpwd.properties

# Or run in the background

$ ./bin/zookeeper-server-start-userpwd.sh -daemon ./config/zookeeper-userpwd.properties

2. Configure server.properties

$ cp $KAFKA_HOME/config/server.properties $KAFKA_HOME/config/server-zkcli-userpwd.properties

$ vi $KAFKA_HOME/config/server-zkcli-userpwd.properties

# Configure as follows:

listeners=SASL_PLAINTEXT://0.0.0.0:19092

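# Listeners advertised to clients. If unset, the value of listeners is used. This setting publishes the broker's listener information to ZooKeeper. On the other nodes, change it to that node's own hostname or IP; 0.0.0.0 is not supported.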

advertised.listeners=SASL_PLAINTEXT://hadoop-node1:19092

security.inter.broker.protocol=SASL_PLAINTEXT

sasl.enabled.mechanisms=PLAIN

sasl.mechanism.inter.broker.protocol=PLAIN

authorizer.class.name=kafka.security.authorizer.AclAuthorizer

allow.everyone.if.no.acl.found=true

3. Configure JAAS

$ cp $KAFKA_HOME/config/userpwd/kafka_server_jaas.conf $KAFKA_HOME/config/userpwd/kafka-server-zkcli-jaas.conf

$ vi $KAFKA_HOME/config/userpwd/kafka-server-zkcli-jaas.conf

# Add the following section:

Client {

org.apache.zookeeper.server.auth.DigestLoginModule required

username="kafka"

password="123456";

};

4. Modify the Kafka environment variables

$ cp $KAFKA_HOME/bin/kafka-server-start-pwd.sh $KAFKA_HOME/bin/kafka-server-start-zkcli-userpwd.sh

# Set the following in the copied script:

export KAFKA_OPTS="-Dzookeeper.sasl.client=true -Dzookeeper.sasl.client.username=zookeeper -Djava.security.auth.login.config=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/userpwd/kafka-server-zkcli-jaas.conf"

5. Copy the configuration to the other nodes

# server-zkcli-userpwd.properties

$ scp $KAFKA_HOME/config/server-zkcli-userpwd.properties hadoop-node2:$KAFKA_HOME/config/

$ scp $KAFKA_HOME/config/server-zkcli-userpwd.properties hadoop-node3:$KAFKA_HOME/config/

# kafka-server-zkcli-jaas.conf

$ scp $KAFKA_HOME/config/userpwd/kafka-server-zkcli-jaas.conf hadoop-node2:$KAFKA_HOME/config/userpwd

$ scp $KAFKA_HOME/config/userpwd/kafka-server-zkcli-jaas.conf hadoop-node3:$KAFKA_HOME/config/userpwd

# kafka-server-start-zkcli-userpwd.sh

$ scp $KAFKA_HOME/bin/kafka-server-start-zkcli-userpwd.sh hadoop-node2:$KAFKA_HOME/bin/

$ scp $KAFKA_HOME/bin/kafka-server-start-zkcli-userpwd.sh hadoop-node3:$KAFKA_HOME/bin/

6. Adjust the configuration on the other nodes

# Change broker.id and the hostname in advertised.listeners

$ vi $KAFKA_HOME/config/server-zkcli-userpwd.properties

7、启动kafka服务

$ cd $KAFKA_HOME

$ ./bin/kafka-server-start-zkcli-userpwd.sh ./config/server-zkcli-userpwd.properties

# 后台执行

$ ./bin/kafka-server-start-zkcli-userpwd.sh -daemon ./config/server-zkcli-userpwd.properties

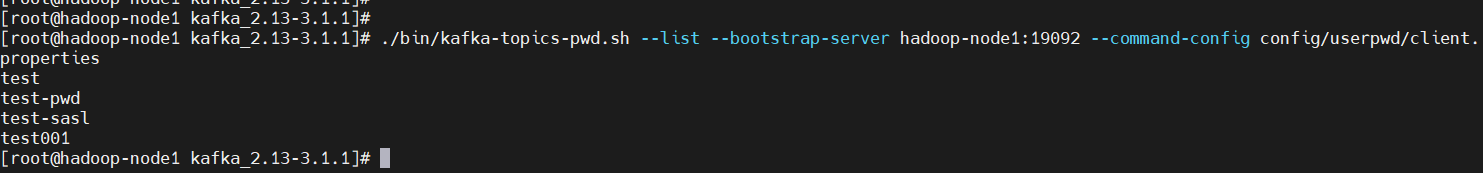

8、kafka客户端测试验证

$ cd $KAFKA_HOME

# 查看topic列表

$ ./bin/kafka-topics-pwd.sh --list --bootstrap-server hadoop-node1:19092 --command-config config/userpwd/client.properties

3) ZooKeeper username/password authentication + Kafka Kerberos authentication

1. Enable ZooKeeper username/password authentication

$ cd $KAFKA_HOME

$ ./bin/zookeeper-server-start-userpwd.sh ./config/zookeeper-userpwd.properties

# Or run in the background

$ ./bin/zookeeper-server-start-userpwd.sh -daemon ./config/zookeeper-userpwd.properties

2. Configure server.properties

$ cp $KAFKA_HOME/config/server.properties $KAFKA_HOME/config/server-kerberos-zkcli-userpwd.properties

$ vi $KAFKA_HOME/config/server-kerberos-zkcli-userpwd.properties

# Change the configuration as follows:

listeners=SASL_PLAINTEXT://0.0.0.0:19092

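# Listeners advertised to clients. If unset, the value of listeners is used. This setting publishes the broker's listener information to ZooKeeper. On the other nodes, change it to that node's own hostname or IP; 0.0.0.0 is not supported.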

advertised.listeners=SASL_PLAINTEXT://hadoop-node1:19092

security.inter.broker.protocol=SASL_PLAINTEXT

sasl.mechanism.inter.broker.protocol=GSSAPI

sasl.enabled.mechanisms=GSSAPI

sasl.kerberos.service.name=kafka-server

3. Configure JAAS

$ cp $KAFKA_HOME/config/kerberos/kafka-server-jaas.conf $KAFKA_HOME/config/kerberos/kafka-server-kerberos-zkcli-userpwd-jaas.conf

$ vi $KAFKA_HOME/config/kerberos/kafka-server-kerberos-zkcli-userpwd-jaas.conf

# Add the following section:

// Zookeeper client authentication

Client {

org.apache.zookeeper.server.auth.DigestLoginModule required

username="kafka"

password="123456";

};

4. Modify the Kafka environment variables

$ cp $KAFKA_HOME/bin/kafka-server-start.sh $KAFKA_HOME/bin/kafka-server-kerberos-zkcli-userpwd-start.sh

$ vi $KAFKA_HOME/bin/kafka-server-kerberos-zkcli-userpwd-start.sh

# Add or change the following:

export KAFKA_OPTS="-Dzookeeper.sasl.client=true -Dzookeeper.sasl.client.username=zookeeper -Djava.security.krb5.conf=/etc/krb5.conf -Djava.security.auth.login.config=/opt/bigdata/hadoop/server/kafka_2.13-3.1.1/config/kerberos/kafka-server-kerberos-zkcli-userpwd-jaas.conf"

5. Copy the configuration to the other nodes

# server-kerberos-zkcli-userpwd.properties

$ scp $KAFKA_HOME/config/server-kerberos-zkcli-userpwd.properties hadoop-node2:$KAFKA_HOME/config/

$ scp $KAFKA_HOME/config/server-kerberos-zkcli-userpwd.properties hadoop-node3:$KAFKA_HOME/config/

# kafka-server-kerberos-zkcli-userpwd-jaas.conf

$ scp $KAFKA_HOME/config/kerberos/kafka-server-kerberos-zkcli-userpwd-jaas.conf hadoop-node2:$KAFKA_HOME/config/kerberos/

$ scp $KAFKA_HOME/config/kerberos/kafka-server-kerberos-zkcli-userpwd-jaas.conf hadoop-node3:$KAFKA_HOME/config/kerberos/

# kafka-server-kerberos-zkcli-userpwd-start.sh

$ scp $KAFKA_HOME/bin/kafka-server-kerberos-zkcli-userpwd-start.sh hadoop-node2:$KAFKA_HOME/bin/

$ scp $KAFKA_HOME/bin/kafka-server-kerberos-zkcli-userpwd-start.sh hadoop-node3:$KAFKA_HOME/bin/

6. Adjust the configuration on the other nodes

# Change broker.id and the hostname in advertised.listeners

$ vi $KAFKA_HOME/config/server-kerberos-zkcli-userpwd.properties

# Change the hostname

$ vi $KAFKA_HOME/config/kerberos/kafka-server-kerberos-zkcli-userpwd-jaas.conf

7. Start the Kafka service

$ cd $KAFKA_HOME

$ ./bin/kafka-server-kerberos-zkcli-userpwd-start.sh ./config/server-kerberos-zkcli-userpwd.properties

# Or run in the background

$ ./bin/kafka-server-kerberos-zkcli-userpwd-start.sh -daemon ./config/server-kerberos-zkcli-userpwd.properties

8. Test and verify with the Kafka client

$ cd $KAFKA_HOME

# List the topics

$ ./bin/kafka-topics-sasl.sh --list --bootstrap-server hadoop-node1:19092 --command-config config/kerberos/client.properties

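That is all for ZooKeeper authentication combined with Kafka authentication. If you have questions, feel free to leave me a comment. I will keep publishing articles on big data, so stay tuned.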
