How to fix a ClusterIP Service that cannot reach its backend Pods when Kubernetes (K8S) uses IPVS proxy mode

Background

With Kubernetes (K8S) running kube-proxy in IPVS mode, requests to a Service of type ClusterIP never reach the backend Pods.

Host plan

| Hostname | OS version | Spec (CPU/RAM/disk) | Internal IP | External IP (simulated) |
|---|---|---|---|---|
| k8s-master | CentOS 7.7 | 2C/4G/20G | 172.16.1.110 | 10.0.0.110 |
| k8s-node01 | CentOS 7.7 | 2C/4G/20G | 172.16.1.111 | 10.0.0.111 |
| k8s-node02 | CentOS 7.7 | 2C/4G/20G | 172.16.1.112 | 10.0.0.112 |

Reproducing the issue

Deployment YAML

```
[root@k8s-master service]# pwd
/root/k8s_practice/service
[root@k8s-master service]# cat myapp-deploy.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: myapp-deploy
  namespace: default
spec:
  replicas: 3
  selector:
    matchLabels:
      app: myapp
      release: v1
  template:
    metadata:
      labels:
        app: myapp
        release: v1
        env: test
    spec:
      containers:
      - name: myapp
        image: registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1
        imagePullPolicy: IfNotPresent
        ports:
        - name: http
          containerPort: 80
```

Create the Deployment and check its status

```
[root@k8s-master service]# kubectl apply -f myapp-deploy.yaml
deployment.apps/myapp-deploy created
[root@k8s-master service]#
[root@k8s-master service]# kubectl get deploy -o wide
NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR
myapp-deploy 3/3 3 3 14s myapp registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1 app=myapp,release=v1
[root@k8s-master service]# kubectl get rs -o wide
NAME DESIRED CURRENT READY AGE CONTAINERS IMAGES SELECTOR
myapp-deploy-5695bb5658 3 3 3 21s myapp registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1 app=myapp,pod-template-hash=5695bb5658,release=v1
[root@k8s-master service]#
[root@k8s-master service]# kubectl get pod -o wide --show-labels
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES LABELS
myapp-deploy-5695bb5658-7tgfx 1/1 Running 0 39s 10.244.2.111 k8s-node02 <none> <none> app=myapp,env=test,pod-template-hash=5695bb5658,release=v1
myapp-deploy-5695bb5658-95zxm 1/1 Running 0 39s 10.244.3.165 k8s-node01 <none> <none> app=myapp,env=test,pod-template-hash=5695bb5658,release=v1
myapp-deploy-5695bb5658-xtxbp 1/1 Running 0 39s 10.244.3.164 k8s-node01 <none> <none> app=myapp,env=test,pod-template-hash=5695bb5658,release=v1
```

Access the Pods with curl

```
[root@k8s-master service]# curl 10.244.2.111/hostname.html
myapp-deploy-5695bb5658-7tgfx
[root@k8s-master service]#
[root@k8s-master service]# curl 10.244.3.165/hostname.html
myapp-deploy-5695bb5658-95zxm
[root@k8s-master service]#
[root@k8s-master service]# curl 10.244.3.164/hostname.html
myapp-deploy-5695bb5658-xtxbp
```
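The three per-Pod checks above can also be run in a loop. This is a minimal sketch, assuming bash, `curl`, and `kubectl` access from the node; `curl_each_pod` is a hypothetical helper name, and the label selector in the usage line is taken from the Deployment above.

```shell
# curl_each_pod (hypothetical helper): request /hostname.html from every Pod IP
# given as an argument and print "<ip> <response>", or "<ip> FAILED" when curl
# cannot complete the request.
curl_each_pod() {
  local ip body
  for ip in "$@"; do
    # --max-time stops an unreachable Pod from hanging the loop
    if body=$(curl -s --max-time 5 "http://${ip}/hostname.html"); then
      echo "${ip} ${body}"
    else
      echo "${ip} FAILED"
    fi
  done
}

# Usage (Pod IPs looked up through the Deployment's labels):
# curl_each_pod $(kubectl get pod -l app=myapp,release=v1 \
#   -o jsonpath='{.items[*].status.podIP}')
```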

ClusterIP Service YAML

```
[root@k8s-master service]# pwd
/root/k8s_practice/service
[root@k8s-master service]# cat myapp-svc-ClusterIP.yaml
apiVersion: v1
kind: Service
metadata:
  name: myapp-clusterip
  namespace: default
spec:
  type: ClusterIP   # optional; ClusterIP is the default Service type
  selector:
    app: myapp
    release: v1
  ports:
  - name: http
    port: 8080      # port the Service exposes on its ClusterIP
    targetPort: 80  # port on the backend Pods that traffic is forwarded to
```
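With this definition, a connection to ClusterIP:`port` (8080) is forwarded to PodIP:`targetPort` (80) on each Pod the selector matches. The matched Pod IPs are recorded in the Service's Endpoints object, which kube-proxy watches. A quick check that the selector really matches ready Pods might look like this sketch; `check_endpoints` is a hypothetical helper and it assumes `kubectl` access.

```shell
# check_endpoints (hypothetical helper): print the ready endpoint IPs of a
# Service, or fail if its selector currently matches no ready Pods.
check_endpoints() {
  local svc="$1" ns="${2:-default}" ips
  ips=$(kubectl get endpoints "$svc" -n "$ns" \
    -o jsonpath='{.subsets[*].addresses[*].ip}')
  if [ -z "$ips" ]; then
    echo "no ready endpoints for ${ns}/${svc}" >&2
    return 1
  fi
  echo "$ips"
}

# Usage: check_endpoints myapp-clusterip
```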

Create the Service and check its status

```
[root@k8s-master service]# kubectl apply -f myapp-svc-ClusterIP.yaml
service/myapp-clusterip created
[root@k8s-master service]#
[root@k8s-master service]# kubectl get svc -o wide
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR
kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 16d <none>
myapp-clusterip ClusterIP 10.102.246.104 <none> 8080/TCP 6s app=myapp,release=v1
```

Check the IPVS rules

```
[root@k8s-master service]# ipvsadm -Ln
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
………………
TCP 10.102.246.104:8080 rr
  -> 10.244.2.111:80 Masq 1 0 0
  -> 10.244.3.164:80 Masq 1 0 0
  -> 10.244.3.165:80 Masq 1 0 0
```

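The IPVS table maps the Service address 10.102.246.104:8080, with round-robin (`rr`) scheduling and masquerade (NAT) forwarding, to the three Pod IPs on port 80. So in the normal case, a request to the Service should be forwarded to a backend Pod and the response returned.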
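To pull the real servers of one virtual service out of a long `ipvsadm -Ln` listing, a small filter helps. This is a sketch that assumes the layout shown above (one `TCP`/`UDP` line per virtual service, followed by `->` lines for its real servers); `ipvs_backends` is a hypothetical name. `ipvsadm -Ln -t 10.102.246.104:8080` should also list a single TCP service directly.

```shell
# ipvs_backends (hypothetical helper): read `ipvsadm -Ln` output on stdin and
# print the real servers (RemoteAddress:Port) behind one virtual service.
ipvs_backends() {
  local vip="$1"   # e.g. 10.102.246.104:8080
  awk -v vip="$vip" '
    /^(TCP|UDP|SCTP)/ { in_svc = ($2 == vip); next }  # a new virtual service starts
    in_svc && $1 == "->" { print $2 }                   # real-server line under it
  '
}

# Usage: ipvsadm -Ln | ipvs_backends 10.102.246.104:8080
```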

curl results

Accessing the Pods directly works, as shown below:

```
[root@k8s-master service]# curl 10.244.2.111/hostname.html
myapp-deploy-5695bb5658-7tgfx
[root@k8s-master service]#
[root@k8s-master service]# curl 10.244.3.165/hostname.html
myapp-deploy-5695bb5658-95zxm
[root@k8s-master service]#
[root@k8s-master service]# curl 10.244.3.164/hostname.html
myapp-deploy-5695bb5658-xtxbp
```

Access through the Service, however, fails:

```
[root@k8s-master service]# curl 10.102.246.104:8080
curl: (7) Failed connect to 10.102.246.104:8080; Connection timed out
```
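Comparing a direct Pod request with a request through the Service, each with a short timeout, makes the difference quicker to see. A sketch, assuming bash and `curl`; `probe` is a hypothetical helper that prints the HTTP status (`000` when no response arrives) and curl's exit code (non-zero on failure).

```shell
# probe (hypothetical helper): request a URL with a 3-second connect timeout and
# print the HTTP status ("000" if nothing came back) plus curl's exit code.
probe() {
  local url="$1" code rc=0
  code=$(curl -s -o /dev/null -w '%{http_code}' --connect-timeout 3 "$url") || rc=$?
  echo "${url} http=${code} curl_exit=${rc}"
  return "$rc"
}

# Usage:
# probe http://10.244.2.111/hostname.html          # Pod directly
# probe http://10.102.246.104:8080/hostname.html   # through the ClusterIP Service
```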

Troubleshooting

Verify with a packet capture

Capture packets with the following command and analyze the file in Wireshark:

```
tcpdump -i any -n -nn port 80 -w ./$(date +%Y%m%d%H%M%S).pcap
```

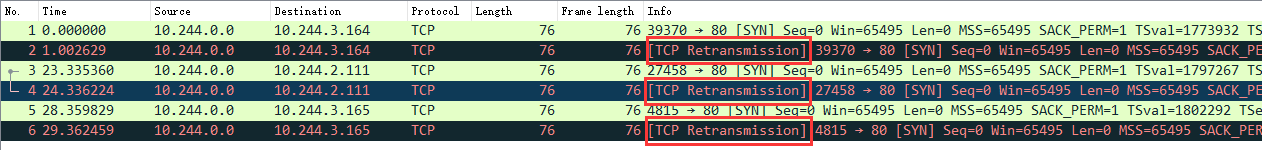

结果如下图:

可见,已经向Pod发了请求,但是没有得到回复。结果TCP又重传了【TCP Retransmission】。
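With flannel's VXLAN backend, cross-node Pod traffic leaves the node as UDP-encapsulated packets, so it can help to capture the inner HTTP traffic and the outer VXLAN packets in one file. This sketch assumes flannel uses the Linux kernel's default VXLAN port, UDP 8472 (flannel's default unless its VXLAN `Port` option is set); `capture_overlay` is a hypothetical helper. Wireshark may need "Decode As" to dissect UDP 8472 as VXLAN.

```shell
# capture_overlay (hypothetical helper): capture Pod HTTP traffic and flannel's
# VXLAN-encapsulated packets (assumed UDP 8472) on all interfaces into one
# timestamped pcap file. Stop with Ctrl-C.
capture_overlay() {
  local file
  file="./$(date +%Y%m%d%H%M%S)-overlay.pcap"
  echo "writing ${file}" >&2
  tcpdump -i any -nn -w "$file" 'tcp port 80 or udp port 8472'
}

# Usage (as root on the node): capture_overlay
```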

Check the kube-proxy logs

```
[root@k8s-master service]# kubectl get pod -A | grep 'kube-proxy'
kube-system kube-proxy-6bfh7 1/1 Running 1 3h52m
kube-system kube-proxy-6vfkf 1/1 Running 1 3h52m
kube-system kube-proxy-bvl9n 1/1 Running 1 3h52m
[root@k8s-master service]#
[root@k8s-master service]# kubectl logs -n kube-system kube-proxy-6bfh7
W0601 13:01:13.170506 1 feature_gate.go:235] Setting GA feature gate SupportIPVSProxyMode=true. It will be removed in a future release.
I0601 13:01:13.338922 1 node.go:135] Successfully retrieved node IP: 172.16.1.112
I0601 13:01:13.338960 1 server_others.go:172] Using ipvs Proxier. ##### ipvs mode is in use
W0601 13:01:13.339400 1 proxier.go:420] IPVS scheduler not specified, use rr by default
I0601 13:01:13.339638 1 server.go:571] Version: v1.17.4
I0601 13:01:13.340126 1 conntrack.go:100] Set sysctl 'net/netfilter/nf_conntrack_max' to 131072
I0601 13:01:13.340159 1 conntrack.go:52] Setting nf_conntrack_max to 131072
I0601 13:01:13.340500 1 conntrack.go:83] Setting conntrack hashsize to 32768
I0601 13:01:13.346991 1 conntrack.go:100] Set sysctl 'net/netfilter/nf_conntrack_tcp_timeout_established' to 86400
I0601 13:01:13.347035 1 conntrack.go:100] Set sysctl 'net/netfilter/nf_conntrack_tcp_timeout_close_wait' to 3600
I0601 13:01:13.347703 1 config.go:313] Starting service config controller
I0601 13:01:13.347718 1 shared_informer.go:197] Waiting for caches to sync for service config
I0601 13:01:13.347736 1 config.go:131] Starting endpoints config controller
I0601 13:01:13.347743 1 shared_informer.go:197] Waiting for caches to sync for endpoints config
I0601 13:01:13.448223 1 shared_informer.go:204] Caches are synced for endpoints config
I0601 13:01:13.448236 1 shared_informer.go:204] Caches are synced for service config
```

The kube-proxy log shows nothing abnormal.
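With several kube-proxy Pods, a one-line summary per log can replace reading each log in full. This sketch assumes the klog line format shown above (a severity letter `I`/`W`/`E`/`F` followed by the month and day) and the `Using ipvs Proxier` message; `kube_proxy_summary` is a hypothetical helper.

```shell
# kube_proxy_summary (hypothetical helper): read kube-proxy log text on stdin and
# print the proxier mode it reports plus the number of error/fatal klog lines.
kube_proxy_summary() {
  local log mode errors
  log=$(cat)
  # e.g. "Using ipvs Proxier." -> ipvs
  mode=$(printf '%s\n' "$log" | sed -n 's/.*Using \([a-z]*\) Proxier.*/\1/p' | head -n 1)
  # klog lines start with a severity letter (I, W, E or F) followed by MMDD
  errors=$(printf '%s\n' "$log" | grep -cE '^[EF][0-9]{4} ' || true)
  echo "mode=${mode:-unknown} errors=${errors}"
}

# Usage: kubectl logs -n kube-system kube-proxy-6bfh7 | kube_proxy_summary
```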

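Inspect and change the NIC settings

Note: the following was done on the k8s-master node.

Further searching suggested that the problem may be caused by checksum offloading. The current settings are: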

```
[root@k8s-master service]# ethtool -k flannel.1 | grep checksum
rx-checksumming: on
tx-checksumming: on ##### currently on
tx-checksum-ipv4: off [fixed]
tx-checksum-ip-generic: on ##### currently on
tx-checksum-ipv6: off [fixed]
tx-checksum-fcoe-crc: off [fixed]
tx-checksum-sctp: off [fixed]
```
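To check one offload flag from a script, the `ethtool -k` output can be filtered by feature name. A minimal sketch; `offload_state` is a hypothetical helper that prints the feature's state and returns non-zero when the feature is not listed.

```shell
# offload_state (hypothetical helper): read `ethtool -k <iface>` output on stdin
# and print the state ("on"/"off") of one feature; return 1 if it is not listed.
offload_state() {
  local feature="$1"
  awk -v f="${feature}:" '
    $1 == f { print $2; found = 1; exit }
    END { exit !found }
  '
}

# Usage: ethtool -k flannel.1 | offload_state tx-checksum-ip-generic
```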

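flannel's network setup leaves transmit checksum offload enabled on flannel.1. It should be turned off, so that the checksum is calculated in software before the packet is encapsulated instead of being left to offload. Do it as follows: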

```
# Turn it off temporarily
[root@k8s-master service]# ethtool -K flannel.1 tx-checksum-ip-generic off
Actual changes:
tx-checksumming: off
tx-checksum-ip-generic: off
tcp-segmentation-offload: off
tx-tcp-segmentation: off [requested on]
tx-tcp-ecn-segmentation: off [requested on]
tx-tcp6-segmentation: off [requested on]
tx-tcp-mangleid-segmentation: off [requested on]
udp-fragmentation-offload: off [requested on]
[root@k8s-master service]#
# Check again
[root@k8s-master service]# ethtool -k flannel.1 | grep checksum
rx-checksumming: on
tx-checksumming: off ##### now off
tx-checksum-ipv4: off [fixed]
tx-checksum-ip-generic: off ##### now off
tx-checksum-ipv6: off [fixed]
tx-checksum-fcoe-crc: off [fixed]
tx-checksum-sctp: off [fixed]
```

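This change is only temporary: after a reboot (or whenever the flannel.1 interface is recreated), checksum offload is enabled on the flannel virtual interface again.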
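A slightly safer form of the temporary fix only changes the setting while it is still on, so it can be re-run harmlessly. A sketch, assuming bash, `ethtool`, and the interface name `flannel.1`; `disable_tx_csum` is a hypothetical helper.

```shell
# disable_tx_csum (hypothetical helper): turn off tx-checksum-ip-generic on an
# interface only if it is currently on; fail if the state cannot be read.
disable_tx_csum() {
  local iface="${1:-flannel.1}" state
  state=$(ethtool -k "$iface" | awk '$1 == "tx-checksum-ip-generic:" { print $2; exit }')
  case "$state" in
    off) echo "${iface}: tx-checksum-ip-generic already off" ;;
    on)  ethtool -K "$iface" tx-checksum-ip-generic off ;;
    *)   echo "${iface}: could not read tx-checksum-ip-generic" >&2; return 1 ;;
  esac
}

# Usage (as root): disable_tx_csum flannel.1
```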

Then try curl again:

```
[root@k8s-master ~]# curl 10.102.246.104:8080
Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>
[root@k8s-master ~]#
[root@k8s-master ~]# curl 10.102.246.104:8080/hostname.html
myapp-deploy-5695bb5658-7tgfx
[root@k8s-master ~]#
[root@k8s-master ~]# curl 10.102.246.104:8080/hostname.html
myapp-deploy-5695bb5658-95zxm
[root@k8s-master ~]#
[root@k8s-master ~]# curl 10.102.246.104:8080/hostname.html
myapp-deploy-5695bb5658-xtxbp
[root@k8s-master ~]#
[root@k8s-master ~]# curl 10.102.246.104:8080/hostname.html
myapp-deploy-5695bb5658-7tgfx
```

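The Service is now reachable, and successive requests rotate through the three Pods (7tgfx, 95zxm, xtxbp, then 7tgfx again), matching the `rr` scheduler in the IPVS table.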
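Instead of repeating curl by hand, a short loop can count how often each Pod answers; with the `rr` scheduler the counts should come out roughly equal. A sketch, assuming bash and `curl`; `rr_check` is a hypothetical helper, and the default of 6 requests is arbitrary.

```shell
# rr_check (hypothetical helper): send N requests to a URL and count how many
# times each distinct response body (here, a Pod name) came back.
rr_check() {
  local url="$1" n="${2:-6}" i body
  for ((i = 0; i < n; i++)); do
    body=$(curl -s --max-time 3 "$url") || body="FAILED"
    printf '%s\n' "$body"
  done | sort | uniq -c
}

# Usage: rr_check http://10.102.246.104:8080/hostname.html 9
```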

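Permanently disable transmit checksum offload on the flannel interface

Note: do this on every node.

Create a systemd service with the following unit file: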

```
[root@k8s-node02 ~]# cat /etc/systemd/system/k8s-flannel-tx-checksum-off.service
[Unit]
Description=Turn off checksum offload on flannel.1
After=sys-devices-virtual-net-flannel.1.device

[Install]
WantedBy=sys-devices-virtual-net-flannel.1.device

[Service]
Type=oneshot
ExecStart=/sbin/ethtool -K flannel.1 tx-checksum-ip-generic off
```
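Because the unit is `WantedBy` the flannel.1 device unit, enabling it makes systemd run the one-shot `ethtool` command whenever that device appears, including after a reboot. Writing the file from a script gives every node an identical copy. A sketch; `install_csum_unit` is a hypothetical helper, and it assumes the interface is named `flannel.1` (systemd escapes some characters, such as `-`, in device unit names, so other interface names may need a different unit name).

```shell
# install_csum_unit (hypothetical helper): write the unit file shown above into a
# directory (default /etc/systemd/system) and print the path it wrote.
install_csum_unit() {
  local dir="${1:-/etc/systemd/system}"
  local unit="${dir}/k8s-flannel-tx-checksum-off.service"
  cat > "$unit" <<'EOF'
[Unit]
Description=Turn off checksum offload on flannel.1
After=sys-devices-virtual-net-flannel.1.device

[Install]
WantedBy=sys-devices-virtual-net-flannel.1.device

[Service]
Type=oneshot
ExecStart=/sbin/ethtool -K flannel.1 tx-checksum-ip-generic off
EOF
  echo "$unit"
}

# Usage (as root): install_csum_unit && systemctl daemon-reload
```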

Enable the service at boot and start it now:

```
systemctl enable k8s-flannel-tx-checksum-off
systemctl start k8s-flannel-tx-checksum-off
```
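After enabling the unit everywhere, a loop can confirm the setting on each node. A sketch that assumes passwordless root SSH to the hostnames from the host table; `verify_nodes` is a hypothetical helper that returns non-zero if any node does not report the feature as off.

```shell
# verify_nodes (hypothetical helper): report tx-checksum-ip-generic on flannel.1
# for each node over SSH; return 1 if any node is not "off".
verify_nodes() {
  local node state rc=0
  for node in "$@"; do
    state=$(ssh "root@${node}" 'ethtool -k flannel.1' \
      | awk '$1 == "tx-checksum-ip-generic:" { print $2; exit }')
    echo "${node}: tx-checksum-ip-generic=${state:-unknown}"
    [ "$state" = "off" ] || rc=1
  done
  return "$rc"
}

# Usage: verify_nodes k8s-master k8s-node01 k8s-node02
```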

Related reading

1. 关于k8s的ipvs转发svc服务访问慢的问题分析(一) (Analysis of slow Service access with IPVS forwarding in k8s, Part 1)
2. Kubernetes + Flannel: UDP packets dropped for wrong checksum – Workaround

Finally

That is everything 唠叨冬日 has recently collected on handling ClusterIP Service access failures with Kubernetes (K8S) in IPVS proxy mode. For more Kubernetes articles, search 靠谱客.
