Part one (single-master deployment): https://blog.csdn.net/Yplayer001/article/details/104234807

Start from the working single-master (master01) deployment built in part one.

III. Deploying master02

First, stop the firewall and disable SELinux on master02.

On master01:

//Copy the kubernetes directory to master02

[root@localhost k8s]# scp -r /opt/kubernetes/ root@192.168.50.150:/opt

The authenticity of host '192.168.50.150 (192.168.50.150)' can't be established.

ECDSA key fingerprint is SHA256:APJMEiNcVfKUcen7B87XvVOjtToM3/xc7CbAgZamvKQ.

ECDSA key fingerprint is MD5:24:0b:7c:57:7d:b7:51:70:7a:f5:b9:2a:32:fc:f7:ea.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '192.168.50.150' (ECDSA) to the list of known hosts.

root@192.168.50.150's password:

token.csv 100% 84 32.6KB/s 00:00

kube-apiserver 100% 934 115.9KB/s 00:00

kube-scheduler 100% 94 20.4KB/s 00:00

kube-controller-manager 100% 483 127.8KB/s 00:00

kube-apiserver 100% 184MB 45.9MB/s 00:04

kubectl 100% 55MB 54.7MB/s 00:01

kube-controller-manager 100% 155MB 54.2MB/s 00:02

kube-scheduler 100% 55MB 57.0MB/s 00:00

ca-key.pem 100% 1679 1.4MB/s 00:00

ca.pem 100% 1359 975.3KB/s 00:00

server-key.pem 100% 1679 491.8KB/s 00:00

server.pem 100% 1643 455.7KB/s 00:00

//Copy the systemd unit files of the three master components (kube-apiserver.service, kube-controller-manager.service, kube-scheduler.service)

[root@localhost k8s]# scp /usr/lib/systemd/system/{kube-apiserver,kube-controller-manager,kube-scheduler}.service root@192.168.50.150:/usr/lib/systemd/system/

root@192.168.50.150's password:

kube-apiserver.service 100% 282 253.0KB/s 00:00

kube-controller-manager.service 100% 317 257.6KB/s 00:00

kube-scheduler.service 100% 281 112.3KB/s 00:00

On master02:

Edit the kube-apiserver configuration file and change the IP addresses to master02's own address:

--bind-address=192.168.50.150

--advertise-address=192.168.50.150

[root@localhost ~]# cd /opt/kubernetes/cfg/

[root@localhost cfg]# vim kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \
--v=4 \
--etcd-servers=https://192.168.195.149:2379,https://192.168.195.150:2379,https://192.168.195.151:2379 \
--bind-address=192.168.50.150 \
--secure-port=6443 \
--advertise-address=192.168.50.150 \
--allow-privileged=true \
--service-cluster-ip-range=10.0.0.0/24 \
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \
--authorization-mode=RBAC,Node \
--kubelet-https=true \
--enable-bootstrap-token-auth \
--token-auth-file=/opt/kubernetes/cfg/token.csv \
--service-node-port-range=30000-50000 \
--tls-cert-file=/opt/kubernetes/ssl/server.pem \
--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \
--client-ca-file=/opt/kubernetes/ssl/ca.pem \
--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \
--etcd-cafile=/opt/etcd/ssl/ca.pem \
--etcd-certfile=/opt/etcd/ssl/server.pem \
--etcd-keyfile=/opt/etcd/ssl/server-key.pem"
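Instead of editing the two address flags in vim, a `sed` substitution can rewrite them. The sketch below is an illustration, not the author's method: the function name `set_apiserver_ip` is hypothetical, and it assumes the options file uses the `--flag=value` form shown above and GNU sed. It deliberately touches only `--bind-address` and `--advertise-address`, leaving other IPs such as those in `--etcd-servers` alone.

```shell
# Sketch (hypothetical helper): point the address flags of a copied
# kube-apiserver options file at this host. Requires GNU sed (-i, -E).
set_apiserver_ip() {
  local cfg="$1" ip="$2"
  # Rewrite only the values of --bind-address and --advertise-address;
  # other addresses in the file, such as --etcd-servers, are left as-is.
  sed -i -E \
    -e "s#(--bind-address=)[0-9.]+#\1${ip}#" \
    -e "s#(--advertise-address=)[0-9.]+#\1${ip}#" \
    "$cfg"
}

# Usage on master02 (path taken from the article):
#   set_apiserver_ip /opt/kubernetes/cfg/kube-apiserver 192.168.50.150
```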

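Important: master02 must have the etcd certificates, otherwise the kube-apiserver service will fail to start.

Copy the etcd certificates that already exist on master01 to master02 (run on master01):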

[root@localhost k8s]# scp -r /opt/etcd/ root@192.168.50.150:/opt/

root@192.168.50.150's password:

etcd 100% 516 16.3KB/s 00:00

etcd 100% 18MB 56.0MB/s 00:00

etcdctl 100% 15MB 45.6MB/s 00:00

ca-key.pem 100% 1675 480.5KB/s 00:00

ca.pem 100% 1265 523.0KB/s 00:00

server-key.pem 100% 1679 1.6MB/s 00:00

server.pem 100% 1338 1.0MB/s 00:00
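A missing certificate only shows up as a failed `kube-apiserver` start, so it can help to check the paths first. This hypothetical helper (`check_etcd_certs` is not part of Kubernetes) extracts the `--etcd-cafile`, `--etcd-certfile` and `--etcd-keyfile` values from the options file and reports any file that does not exist; it assumes each flag is written as `--flag=/path`, as in the config above.

```shell
# Sketch (hypothetical helper): report etcd certificate files that are
# referenced by the kube-apiserver options file but missing on this host.
# Returns 0 only if at least one flag was found and every file exists.
check_etcd_certs() {
  local cfg="$1" missing=0 found=0 path
  while read -r path; do
    found=$((found + 1))
    if [ ! -f "$path" ]; then
      echo "missing: $path"
      missing=1
    fi
  done < <(grep -oE -- '--etcd-(cafile|certfile|keyfile)=[^ "\\]+' "$cfg" | cut -d= -f2-)
  if [ "$found" -eq 0 ]; then
    echo "no --etcd-*file flags found in $cfg"
    return 1
  fi
  return "$missing"
}

# Usage on master02, before starting kube-apiserver:
#   check_etcd_certs /opt/kubernetes/cfg/kube-apiserver && echo "etcd certs present"
```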

Start the three component services on master02

[root@localhost cfg]# systemctl start kube-apiserver.service

[root@localhost cfg]# systemctl start kube-controller-manager.service

[root@localhost cfg]# systemctl start kube-scheduler.service

Add the Kubernetes binaries to PATH

[root@localhost cfg]# vim /etc/profile

#Append at the end:

export PATH=$PATH:/opt/kubernetes/bin/

[root@localhost cfg]# source /etc/profile
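Repeating the PATH step appends the same line again. A minimal idempotent variant, assuming GNU `grep`; `add_k8s_path` is a hypothetical name, and the profile path is a parameter so the function can be tried on a scratch file first:

```shell
# Sketch (hypothetical helper): append the PATH export only if the exact
# line is not already present, so re-running the step does not duplicate it.
add_k8s_path() {
  local profile="${1:-/etc/profile}"
  # Single quotes keep $PATH literal, so it is expanded at login time.
  local line='export PATH=$PATH:/opt/kubernetes/bin/'
  grep -qxF "$line" "$profile" 2>/dev/null || echo "$line" >> "$profile"
}

# Usage on master02:
#   add_k8s_path /etc/profile && source /etc/profile
```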

On master02, list the nodes in the cluster:

[root@localhost cfg]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.50.140 Ready <none> 2d12h v1.12.3

192.168.50.141 Ready <none> 38h v1.12.3

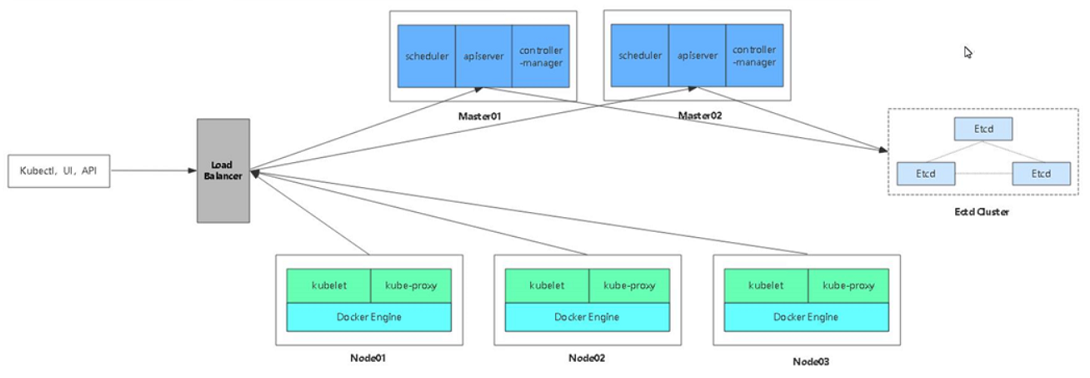

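IV. Load balancer deployment

| Host | Address |
|---|---|
| lb01 | 192.168.50.142/24 |
| lb02 | 192.168.50.143/24 |
| VIP | 192.168.50.100/24 |

On both lb01 and lb02:

Install nginx, and copy the nginx.sh and keepalived.conf scripts to the home directory.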

[root@localhost ~]# systemctl stop firewalld.service

[root@localhost ~]# setenforce 0

[root@localhost ~]# vim /etc/yum.repos.d/nginx.repo

[nginx]

name=nginx repo

baseurl=http://nginx.org/packages/centos/7/$basearch/

gpgcheck=0

[root@localhost ~]# yum install nginx -y

//Add layer-4 (TCP) forwarding

[root@localhost ~]# vim /etc/nginx/nginx.conf

events {
    worker_connections 1024;
}

# Add the stream block at the top level, between the events and http blocks
stream {
    log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';
    access_log /var/log/nginx/k8s-access.log main;

    upstream k8s-apiserver {
        server 192.168.50.139:6443;
        server 192.168.50.150:6443;
    }

    server {
        listen 6443;
        proxy_pass k8s-apiserver;
    }
}

http {
    # ... the existing http block continues unchanged

[root@localhost ~]# systemctl start nginx
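Only the upstream list in the stream block changes as masters are added. This sketch renders the same block from a port and a list of apiserver addresses; `render_stream_block` is a hypothetical helper, and its output still has to be placed at the top level of `/etc/nginx/nginx.conf` (outside `http`) and validated with `nginx -t` before nginx is restarted.

```shell
# Sketch (hypothetical helper): print the nginx stream block used above.
# Arguments: listen/upstream port, then one or more apiserver IPs.
render_stream_block() {
  local port="$1" ip
  shift
  echo 'stream {'
  # \$ keeps the nginx variables literal in the generated config.
  echo "    log_format main '\$remote_addr \$upstream_addr - [\$time_local] \$status \$upstream_bytes_sent';"
  echo '    access_log /var/log/nginx/k8s-access.log main;'
  echo '    upstream k8s-apiserver {'
  for ip in "$@"; do
    echo "        server ${ip}:${port};"
  done
  echo '    }'
  echo '    server {'
  echo "        listen ${port};"
  echo '        proxy_pass k8s-apiserver;'
  echo '    }'
  echo '}'
}

# Usage:
#   render_stream_block 6443 192.168.50.139 192.168.50.150
```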

Deploy keepalived

[root@localhost ~]# yum install keepalived -y

//Edit the configuration file

[root@localhost ~]# cp keepalived.conf /etc/keepalived/keepalived.conf

cp: overwrite '/etc/keepalived/keepalived.conf'? yes

//Note: lb01 is the MASTER; its configuration is:

! Configuration File for keepalived
global_defs {
    # Recipient addresses for notification mail
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    # Sender address for notification mail
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id NGINX_MASTER
}

vrrp_script check_nginx {
    script "/etc/nginx/check_nginx.sh"
}

vrrp_instance VI_1 {
    state MASTER
    interface ens33
    virtual_router_id 51 # VRRP router ID; identical on MASTER and BACKUP, unique per instance
    priority 100 # priority; the backup server uses 90
    advert_int 1 # VRRP advertisement (heartbeat) interval, default 1 second
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        192.168.50.100/24
    }
    track_script {
        check_nginx
    }
}

//Note: lb02 is the BACKUP; its configuration is:

! Configuration File for keepalived
global_defs {
    # Recipient addresses for notification mail
    notification_email {
        acassen@firewall.loc
        failover@firewall.loc
        sysadmin@firewall.loc
    }
    # Sender address for notification mail
    notification_email_from Alexandre.Cassen@firewall.loc
    smtp_server 127.0.0.1
    smtp_connect_timeout 30
    router_id NGINX_BACKUP
}

vrrp_script check_nginx {
    script "/etc/nginx/check_nginx.sh"
}

vrrp_instance VI_1 {
    state BACKUP
    interface ens33
    virtual_router_id 51 # VRRP router ID; identical on MASTER and BACKUP, unique per instance
    priority 90 # priority; lower than the MASTER's 100
    advert_int 1 # VRRP advertisement (heartbeat) interval, default 1 second
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        192.168.50.100/24
    }
    track_script {
        check_nginx
    }
}
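The two keepalived files above differ mainly in `state` and `priority`. A sketch that renders either one from a role name, assuming the `ens33` interface and the 192.168.50.100/24 VIP used in this article; `render_keepalived_conf` is hypothetical, it also gives each balancer its own `router_id`, and it omits the optional notification-mail settings.

```shell
# Sketch (hypothetical helper): print a keepalived.conf for lb01 ("master")
# or lb02 ("backup"). Optional args: interface, VIP with prefix length.
render_keepalived_conf() {
  local role="$1" iface="${2:-ens33}" vip="${3:-192.168.50.100/24}"
  local state priority
  case "$role" in
    master) state=MASTER; priority=100 ;;
    backup) state=BACKUP; priority=90 ;;
    *) echo "role must be master or backup" >&2; return 1 ;;
  esac
  # Unquoted EOF so ${state}, ${iface}, ${priority} and ${vip} expand.
  cat <<EOF
! Configuration File for keepalived
global_defs {
    router_id NGINX_${state}
}
vrrp_script check_nginx {
    script "/etc/nginx/check_nginx.sh"
}
vrrp_instance VI_1 {
    state ${state}
    interface ${iface}
    virtual_router_id 51
    priority ${priority}
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        ${vip}
    }
    track_script {
        check_nginx
    }
}
EOF
}
```

For example, `render_keepalived_conf backup > /etc/keepalived/keepalived.conf` on lb02.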

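[root@localhost ~]# vim /etc/nginx/check_nginx.sh

#!/bin/bash
# Stop keepalived when no nginx process is left, so the VIP fails over to the other balancer.
# "$$" (this script's own PID) is excluded so the check does not count itself.
count=$(ps -ef |grep nginx |egrep -cv "grep|$$")
if [ "$count" -eq 0 ];then
    systemctl stop keepalived
fi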

[root@localhost ~]# chmod +x /etc/nginx/check_nginx.sh

[root@localhost ~]# systemctl start keepalived

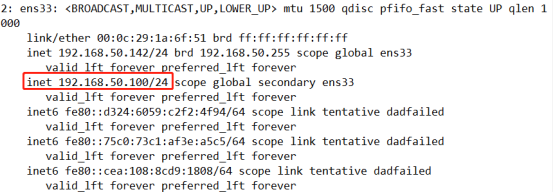

Check the addresses on lb01 (the VIP 192.168.50.100 is bound to ens33 as a secondary address):

[root@localhost ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:1a:6f:51 brd ff:ff:ff:ff:ff:ff

inet 192.168.50.142/24 brd 192.168.50.255 scope global ens33

valid_lft forever preferred_lft forever

inet 192.168.50.100/24 scope global secondary ens33

valid_lft forever preferred_lft forever

inet6 fe80::d324:6059:c2f2:4f94/64 scope link tentative dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::75c0:73c1:af3e:a5c5/64 scope link tentative dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::cea:108:8cd9:1808/64 scope link tentative dadfailed

valid_lft forever preferred_lft forever

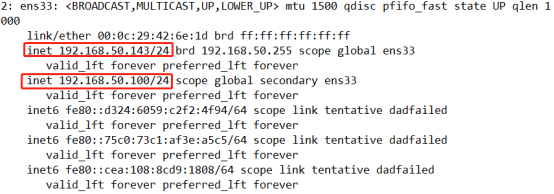

Check the addresses on lb02 (no VIP while lb01 is healthy):

[root@localhost nginx]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN qlen 1

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:42:6e:1d brd ff:ff:ff:ff:ff:ff

inet 192.168.50.143/24 brd 192.168.50.255 scope global ens33

valid_lft forever preferred_lft forever

inet6 fe80::d324:6059:c2f2:4f94/64 scope link tentative dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::75c0:73c1:af3e:a5c5/64 scope link tentative dadfailed

valid_lft forever preferred_lft forever

inet6 fe80::cea:108:8cd9:1808/64 scope link tentative dadfailed

valid_lft forever preferred_lft forever

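//Verify VIP failover: run pkill nginx on lb01, then run ip a on lb02 and check that the VIP has moved there

//To restore: on lb01, start nginx first and then keepalived (the check script stops keepalived again if nginx is not running)

//nginx web root: /usr/share/nginx/html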
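The failover check comes down to whether the VIP appears in the `ip a` output of the right balancer. A small hypothetical helper (`holds_vip`) that reads `ip -4 addr show` output on stdin and succeeds only if the given address is bound; matching on `inet <vip>/` keeps 192.168.50.10 from matching 192.168.50.100.

```shell
# Sketch (hypothetical helper): succeed if the VIP given as $1 appears as
# an IPv4 address in the `ip addr` output read from stdin.
holds_vip() {
  local vip="$1"
  # Fixed-string match including the trailing "/" of the prefix length.
  grep -qF "inet ${vip}/"
}

# Usage on lb02 after running `pkill nginx` on lb01:
#   ip -4 addr show ens33 | holds_vip 192.168.50.100 && echo "lb02 holds the VIP"
```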


Next, on each node, point the kubeconfig files (bootstrap.kubeconfig, kubelet.kubeconfig, kube-proxy.kubeconfig) at the VIP:

[root@localhost cfg]# vim /opt/kubernetes/cfg/bootstrap.kubeconfig

[root@localhost cfg]# vim /opt/kubernetes/cfg/kubelet.kubeconfig

[root@localhost cfg]# vim /opt/kubernetes/cfg/kube-proxy.kubeconfig

//In all three files, change the server address to the VIP

server: https://192.168.50.100:6443

[root@localhost cfg]# systemctl restart kubelet.service

[root@localhost cfg]# systemctl restart kube-proxy.service

//After editing, check the result

[root@localhost cfg]# grep 100 *

bootstrap.kubeconfig: server: https://192.168.50.100:6443

kubelet.kubeconfig: server: https://192.168.50.100:6443

kube-proxy.kubeconfig: server: https://192.168.50.100:6443
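Editing three files by hand on every node is error-prone. A sketch that rewrites the `server:` line in all three with GNU `sed -i`; it assumes each file contains a `server: https://<ip>:6443` entry as shown above, and `point_kubeconfigs_at_vip` is a hypothetical name. kubelet and kube-proxy still need the restarts shown above afterwards.

```shell
# Sketch (hypothetical helper): point the node kubeconfig files in $1 at
# the VIP $2 by rewriting their "server: https://<ip>:6443" lines.
point_kubeconfigs_at_vip() {
  local dir="$1" vip="$2" f
  for f in bootstrap.kubeconfig kubelet.kubeconfig kube-proxy.kubeconfig; do
    # \1 keeps "server: https://", \2 keeps ":6443"; only the IP changes.
    sed -i -E "s#(server: https://)[0-9.]+(:6443)#\1${vip}\2#" "${dir}/${f}"
  done
}

# Usage on each node:
#   point_kubeconfigs_at_vip /opt/kubernetes/cfg 192.168.50.100
```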

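On lb01, check nginx's k8s access log; requests from the nodes are forwarded to both apiservers (192.168.50.139 and 192.168.50.150):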

[root@localhost ~]# tail /var/log/nginx/k8s-access.log

192.168.50.141 192.168.50.139:6443 - [09/Feb/2020:11:18:41 +0800] 200 1120

192.168.50.141 192.168.50.150:6443 - [09/Feb/2020:11:18:41 +0800] 200 1120

192.168.50.140 192.168.50.139:6443 - [09/Feb/2020:11:18:56 +0800] 200 1120

192.168.50.140 192.168.50.139:6443 - [09/Feb/2020:11:18:56 +0800] 200 1120
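To see how nginx spreads the requests, the log can be tallied per upstream. With the `log_format` defined earlier, field 2 of each line is `$upstream_addr`; the hypothetical helper `count_by_upstream` counts lines per upstream. One caveat: if nginx tried more than one upstream for a connection, `$upstream_addr` lists them separated by commas, which this simple whitespace split does not handle.

```shell
# Sketch (hypothetical helper): count k8s-access.log lines per upstream.
# Reads the files given as arguments, or stdin if none are given.
count_by_upstream() {
  # Field 2 is $upstream_addr with the log_format used in this article.
  awk '{ hits[$2]++ } END { for (u in hits) print u, hits[u] }' "$@" | sort
}

# Usage on lb01:
#   count_by_upstream /var/log/nginx/k8s-access.log
```

For the four log lines shown above it prints `192.168.50.139:6443 3` and `192.168.50.150:6443 1`.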

On master01:

//Create a test pod

[root@localhost ~]# kubectl run nginx --image=nginx

kubectl run --generator=deployment/apps.v1beta1 is DEPRECATED and will be removed in a future version. Use kubectl create instead.

deployment.apps/nginx created

//Check its status

[root@localhost ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-dbddb74b8-nf9sk 0/1 ContainerCreating 0 33s //still being created

[root@localhost ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-dbddb74b8-hhkfm 1/1 Running 0 28s //created and running

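//Note the log permission issue: kubectl logs can be refused for the system:anonymous user; the binding below grants that user the cluster-admin role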

[root@localhost ~]# kubectl logs nginx-dbddb74b8-hhkfm

[root@localhost ~]# kubectl create clusterrolebinding cluster-system-anonymous --clusterrole=cluster-admin --user=system:anonymous

clusterrolebinding.rbac.authorization.k8s.io/cluster-system-anonymous created

Check the pod network

[root@localhost ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

nginx-dbddb74b8-hhkfm 1/1 Running 0 2m36s 172.17.0.2 192.168.50.141 <none>

On the node whose network segment the pod IP belongs to (192.168.50.141), the pod can be reached directly:

[root@localhost cfg]# curl 172.17.0.2

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

Back on master01, view the logs; the request from curl now shows up:

[root@localhost ~]# kubectl logs nginx-dbddb74b8-hhkfm

172.17.0.1 - - [09/Feb/2020:03:29:10 +0000] "GET / HTTP/1.1" 200 612 "-" "curl/7.29.0" "-"

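Finally

That is all for Kubernetes Cluster Deployment ------ Binary Deployment (Part 2), collected and organized by 美好八宝粥.

This content was provided by users for learning and reference or collected from the web; copyright belongs to the original author.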
