使用了数据集Oxford-IIIT Pet中的三类猫和狗的数据,猫和狗的数据分别为570多张。

Oxford-IIIT Pet包含 37 种宠物类别的图像数据集,每个类别约有 200 张图像。这些图像在比例、姿势以及光照方面有着丰富的变化。本数据集也可以用于目标检测定位。

#! /usr/bin/python

# -*- coding:utf-8 -*-

#alexnet

import os

import time

import glob

import tensorflow as tf

import numpy as np

from skimage import io,transform

#数据集地址

image_path = '/home/muyangren/projectdir/tensorflow/PetTf/pet_photos_cd/'

#模型保存地址

model_path = '/home/muyangren/projectdir/tensorflow/PetTf/pet_models'

#TensorBorad log

log_dir = '/home/muyangren/projectdir/tensorflow/PetTf/log'

#将所有图片resize成227*227

w = 227

h = 227

c = 3

#读取图片

def read_image(image_path):

cate = [image_path + x for x in os.listdir(image_path) if os.path.isdir(image_path + x)]

imgs = []

labels = []

for idx, folder in enumerate(cate):

for im in glob.glob(folder + '/*.jpg'):

print('reading the image:%s' %(im))

img = io.imread(im)

img = transform.resize(img, (w, h))

imgs.append(img)

labels.append(idx)

return np.asarray(imgs, np.float32), np.asarray(labels, np.int32)

data, label=read_image(image_path)

#打乱顺序

num_example = data.shape[0]

arr = np.arange(num_example)

np.random.shuffle(arr)

data = data[arr]

label = label[arr]

#将所有数据分为训练集和验证集

ratio = 0.8

s = np.int(num_example * ratio)

x_train = data[:s]

y_train = label[:s]

x_val = data[s:]

y_val = label[s:]

############构建网络##########

#占位符

x = tf.placeholder(tf.float32, shape = [None, w, h, c], name = 'x')

y_ = tf.placeholder(tf.int32, shape = [None,], name = 'y_')

def inference(input_tensor, train, regularizer):

with tf.variable_scope("layer1-conv1"):

conv1_weights = tf.get_variable("weight", [11, 11, 3, 96], initializer = tf.truncated_normal_initializer(stddev = 0.1))

conv1_biases = tf.get_variable("biases", [96], initializer = tf.constant_initializer(0.0))

conv1 = tf.nn.conv2d(input_tensor, conv1_weights, strides = [1, 4, 4, 1], padding = "SAME")

relu1 = tf.nn.relu(tf.nn.bias_add(conv1, conv1_biases))

with tf.name_scope("layer2-pool1"):

pool1 = tf.nn.max_pool(relu1, ksize = [1, 3, 3, 1], strides = [1, 2, 2, 1], padding = "VALID")

with tf.variable_scope("layer3-conv2"):

conv2_weights = tf.get_variable("weight", [5, 5, 96, 256], initializer = tf.truncated_normal_initializer(stddev = 0.1))

conv2_biases = tf.get_variable("biases", [256], initializer = tf.constant_initializer(0.0))

conv2 = tf.nn.conv2d(pool1, conv2_weights, strides = [1, 1, 1, 1], padding = "SAME")

relu2 = tf.nn.relu(tf.nn.bias_add(conv2, conv2_biases))

with tf.name_scope("layer4-pool2"):

pool2 = tf.nn.max_pool(relu2, ksize = [1, 3, 3, 1], strides = [1, 2, 2, 1], padding = "VALID")

with tf.variable_scope("layer5-conv3"):

conv3_weights = tf.get_variable("weight", [3, 3, 256, 384], initializer = tf.truncated_normal_initializer(stddev = 0.1))

conv3_biases = tf.get_variable("biases", [384], initializer = tf.constant_initializer(0.0))

conv3 = tf.nn.conv2d(pool2, conv3_weights, strides = [1, 1, 1, 1], padding = "SAME")

relu3 = tf.nn.relu(tf.nn.bias_add(conv3, conv3_biases))

with tf.variable_scope("layer6-conv4"):

conv4_weights = tf.get_variable("weight", [3, 3, 384, 384], initializer = tf.truncated_normal_initializer(stddev = 0.1))

conv4_biases = tf.get_variable("biases", [384], initializer = tf.constant_initializer(0.0))

conv4 = tf.nn.conv2d(conv3, conv4_weights, strides = [1, 1, 1, 1], padding = "SAME")

relu4 = tf.nn.relu(tf.nn.bias_add(conv4, conv4_biases))

with tf.variable_scope("layer7-conv5"):

conv5_weights = tf.get_variable("weight", [3, 3, 384, 256], initializer = tf.truncated_normal_initializer(stddev = 0.1))

conv5_biases = tf.get_variable("biases", [256], initializer = tf.constant_initializer(0.0))

conv5 = tf.nn.conv2d(conv4, conv5_weights, strides = [1, 1, 1, 1], padding = "SAME")

relu5 = tf.nn.relu(tf.nn.bias_add(conv5, conv5_biases))

with tf.name_scope("layer8-pool3"):

pool3 = tf.nn.max_pool(relu5, ksize = [1, 3, 3, 1], strides = [1, 2, 2, 1], padding = "VALID")

nodes = 6 * 6 * 256

reshaped = tf.reshape(pool3, [-1, nodes])

with tf.variable_scope("layer9-fc1"):

fc1_weights = tf.get_variable("weight", [nodes, 4096], initializer = tf.truncated_normal_initializer(stddev = 0.1))

if regularizer != None:

tf.add_to_collection("losses", regularizer(fc1_weights))

fc1_biases = tf.get_variable("bias", [4096], initializer = tf.constant_initializer(0.1))

fc1 = tf.nn.relu(tf.matmul(reshaped, fc1_weights) + fc1_biases)

if train:

fc1 = tf.nn.dropout(fc1, 0.5)

with tf.variable_scope("layer10-fc2"):

fc2_weights = tf.get_variable("weight", [4096, 4096], initializer = tf.truncated_normal_initializer(stddev = 0.1))

if regularizer != None:

tf.add_to_collection("losses", regularizer(fc2_weights))

fc2_biases = tf.get_variable("bias", [4096], initializer = tf.constant_initializer(0.1))

fc2 = tf.nn.relu(tf.matmul(fc1, fc2_weights) + fc2_biases)

if train:

fc2 = tf.nn.dropout(fc2, 0.5)

with tf.variable_scope("layer11-fc3"):

fc3_weights = tf.get_variable("weight", [4096, 2], initializer = tf.truncated_normal_initializer(stddev = 0.1))

if regularizer != None:

tf.add_to_collection("losses", regularizer(fc3_weights))

fc3_biases = tf.get_variable("bias", [2], initializer = tf.constant_initializer(0.1))

logit = tf.matmul(fc2, fc3_weights) + fc3_biases

return logit

regularizer = tf.contrib.layers.l2_regularizer(0.0001)

logits = inference(x, False, regularizer)

#将logits乘以1赋值给logits_eval, 定义name

b = tf.constant(value = 1, dtype = tf.float32)

logits_eval = tf.multiply(logits, b, name = "logits_eval")

loss = tf.nn.sparse_softmax_cross_entropy_with_logits(logits = logits, labels = y_)

train_op = tf.train.AdamOptimizer(learning_rate = 0.001).minimize(loss)

correct_prediction = tf.equal(tf.cast(tf.argmax(logits, 1), tf.int32), y_)

acc = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

train_acc_graph = tf.summary.scalar('train_acc_graph', acc)

#train_loss_graph = tf.summary.scalar('train_loss_graph', loss)

#按批次取数据

def minibatches(inputs = None, targets = None, batch_size = None, shuffle = False):

assert len(inputs) == len(targets)

if shuffle:

indices = np.arange(len(inputs))

np.random.shuffle(indices)

for start_idx in range(0, len(inputs) - batch_size + 1, batch_size):

if shuffle:

excerpt = indices[start_idx:start_idx + batch_size]

else:

excerpt = slice(start_idx, start_idx + batch_size)

yield inputs[excerpt], targets[excerpt]

def variable_summaries(var):

with tf.name_scope('summaries'):

mean = tf.reduce_mean(var)

tf.summary.scalar('mean', mean)

with tf.name_scope('stddev'):

stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))

tf.summary.scalar('stddev', stddev)

tf.summary.scalar('max', tf.reduce_max(var))

tf.summary.scalar('min', tf.reduce_min(var))

tf.summary.histogram('histogram', var)

#训练和测试数据

n_epoch = 50

batch_size = 16

saver = tf.train.Saver()

sess = tf.Session()

sess.run(tf.global_variables_initializer())

train_writer = tf.summary.FileWriter(log_dir, sess.graph)

for epoch in range(n_epoch):

start_time = time.time()

#training

train_loss, train_acc, n_batch = 0, 0, 0

for x_train_a, y_train_a in minibatches(x_train, y_train, batch_size, shuffle = True):

_, err, ac, acc_graph = sess.run([train_op, loss, acc, train_acc_graph], feed_dict = {x: x_train_a, y_: y_train_a})

train_loss += err; train_acc += ac; n_batch +=1

print("the epoch value is {0}, train loss: {1}".format(epoch, (np.sum(train_loss) / n_batch)))

print("the epoch value is {0}, train acc: {1}".format(epoch, (np.sum(train_acc) / n_batch)))

train_writer.add_summary(acc_graph, epoch)

#validation

val_loss, val_acc, n_batch = 0, 0, 0

for x_val_a, y_val_a in minibatches(x_val, y_val, batch_size, shuffle = False):

err, ac, acc_graph = sess.run([loss, acc, train_acc_graph], feed_dict = {x: x_val_a, y_: y_val_a})

val_loss += err; val_acc += ac; n_batch += 1

print("the epoch value is {0}, validation loss: {1}".format(epoch, (np.sum(val_loss) / n_batch)))

print("the epoch value is {0}, validation acc: {1}".format(epoch, (np.sum(val_acc) / n_batch)))

train_writer.add_summary(acc_graph, epoch)

saver.save(sess, model_path, )

sess.close()

#! /usr/bin/python

# -*- coding:utf-8 -*-

from skimage import io,transform

import tensorflow as tf

import numpy as np

path1 = "/home/muyangren/projectdir/tensorflow/PetTf/test_model_photos/Abyssinian_220.jpg"

path2 = "/home/muyangren/projectdir/tensorflow/PetTf/test_model_photos/american_bulldog_217.jpg"

path3 = "/home/muyangren/projectdir/tensorflow/PetTf/test_model_photos/basset_hound_194.jpg"

path4 = "/home/muyangren/projectdir/tensorflow/PetTf/test_model_photos/beagle_195.jpg"

path5 = "/home/muyangren/projectdir/tensorflow/PetTf/test_model_photos/Bengal_194.jpg"

path6 = "/home/muyangren/projectdir/tensorflow/PetTf/test_model_photos/Birman_197.jpg"

#pet_dict = {0:'Abyssinian',1:'american_bulldog',2:'basset_hound',3:'beagle',4:'Bengal',5:'Birman'}

pet_dict = {0:'dog',1:'cat'}

w=227

h=227

c=3

def read_one_image(path):

img = io.imread(path)

img = transform.resize(img,(w,h))

return np.asarray(img)

with tf.Session() as sess:

data = []

data1 = read_one_image(path1)

print(data1)

data2 = read_one_image(path2)

data3 = read_one_image(path3)

data4 = read_one_image(path4)

data5 = read_one_image(path5)

data6 = read_one_image(path6)

data.append(data1)

data.append(data2)

data.append(data3)

data.append(data4)

data.append(data5)

data.append(data6)

saver = tf.train.import_meta_graph('/home/muyangren/projectdir/tensorflow/PetTf/pet_models.meta')

saver.restore(sess,tf.train.latest_checkpoint('/home/muyangren/projectdir/tensorflow/PetTf/'))

graph = tf.get_default_graph()

x = graph.get_tensor_by_name("x:0")

feed_dict = {x:data}

logits = graph.get_tensor_by_name("logits_eval:0")

classification_result = sess.run(logits,feed_dict)

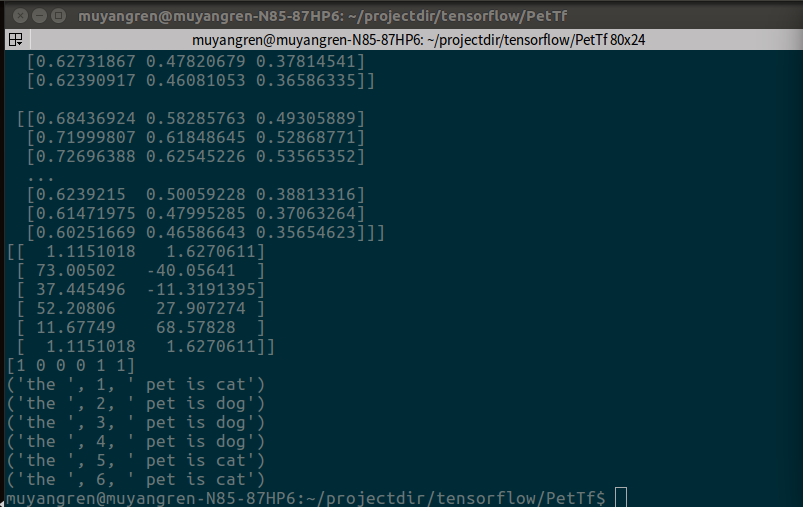

#打印出预测矩阵

print(classification_result)

#打印出预测矩阵每一行最大值的索引

print(tf.argmax(classification_result,1).eval())

#根据索引通过字典对应分类

output = []

output = tf.argmax(classification_result,1).eval()

for i in range(len(output)):

print("the ",i+1," pet is "+pet_dict[output[i]])

最后

以上就是淡然溪流最近收集整理的关于TensorFlow--AlexNet实现的全部内容,更多相关TensorFlow--AlexNet实现内容请搜索靠谱客的其他文章。

本图文内容来源于网友提供,作为学习参考使用,或来自网络收集整理,版权属于原作者所有。

发表评论 取消回复