golang有自己的内存管理机制,自主的完成内存分配、垃圾回收、内存管理等,避免频繁的向操作系统申请、释放内存,有效的提升go语言的性能。

tcmalloc内存分配器

具体策略:全局缓存堆和进程的私有缓存

- 对小容量的内存申请进程的私有缓存,私有缓存不足的时候可以再从全局缓存申请一部分作为私有缓存

- 对大容量的内存申请则需要从全局缓存中进行申请。而容量界定是32k,缓存的组织方式是一个单链表数组,数组的每个元素是一个单链表,链表中的每个元素具有相同的大小。

1. Memory Allocator

Go 内存分配器基于 tcmalloc 模型,这在 malloc.go 头部注释中有明确说明。

based on tcmalloc http://goog-perftools.sourceforge.net/doc/tcmalloc.html

分配器主要是基于页分配,< 32KB的大约有70类,使用bitmap管理

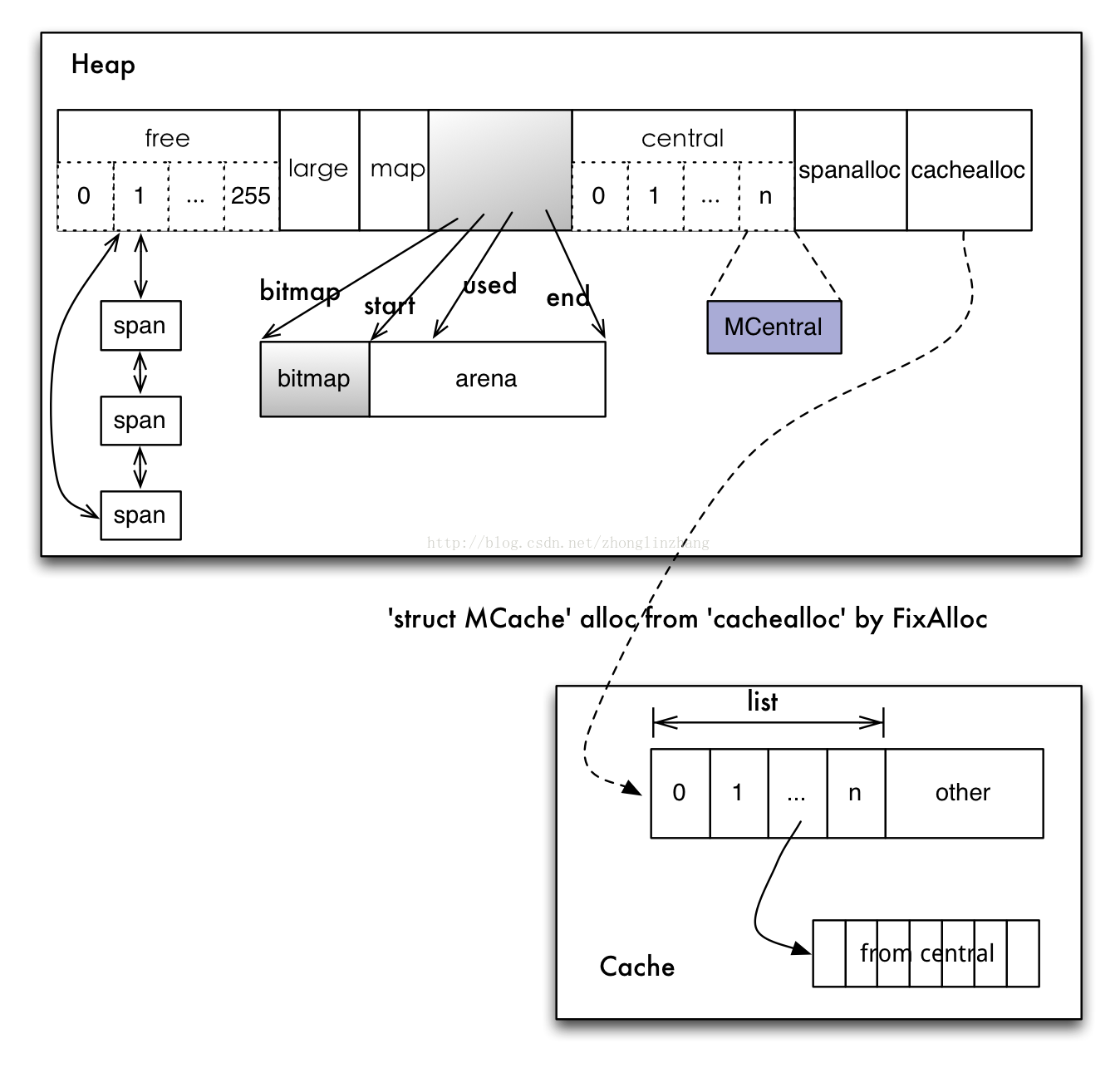

Cache、Central、Heap是三个核心组件

一个线程有一个cache对应,这个cache用来存放小对象。所有线程共享Central和Heap。

1.1 分配器的数据结构:

- fixalloc: 固定尺寸的堆对象空闲列表分配器,用来管理分配器的存储

- mheap: 堆分配器,以8192byte页进行管理

- mspan: 由mheap管理的页面

- mcentral: 所有给定大小类的mspan集合,Central组件其实也是一个缓存,但它缓存的不是小对象内存块,而是一组一组的内存page(一个page占4k大小)

- mstats: 分配统计

1.2 小对象内存分配

- a. 直接访问本线程的cache,浏览mspan的空闲bitmap,如果有空闲的slot则分配,无需加锁,mache是线程所拥有

- b. mspan没有空闲的slot,将从mcentral列表获得需要尺寸的类,这需要加锁

- c. mcentral的mspan列表空的话,就要从mheap获取页

- d. 如果mheap空或者没有足够的页大小可用,就要从操作系统分配页,至少需要1MB

1.3 大对象内存分配

直接从mheap分配大对象

为了管理好内存,分配器使用用三级组件来完成不同操作。

• heap: 全局根对象。负责向操作系统申请内存,管理由垃圾回收器收回的空闲 span 内存块。

• central: 从 heap 获取空闲 span,并按需要将其切分成 object 块。heap 管理着多个central 对象,每个 central 负责处理一一种等级的内存分配需求。

• cache: 运行行期,每个 cache 都与某个具体线程相绑定,实现无无锁内存分配操作。其内部有个以等级为序号的数组,持有多个切分好的 span 对象。缺少空间时,向等级对应的 central 获取新的 span 即可。

2. 关键数据结构

2.1 mheap

主要是负责向系统申请大块的内存,为下层MCentral和MCache提供内存服务。他管理的基本单位是MSpan(连续内存页的数据结构)

free和busy数组以span为序号管理多个链表。当central需要时,只需从free找到页数合适的链表

large链表用于保存所有超出free和busy页数限制的MSpan

type mheap struct {

lock mutex

free [_MaxMHeapList]mSpanList // free lists of given length

freelarge mSpanList // free lists length >= _MaxMHeapList

busy [_MaxMHeapList]mSpanList // busy lists of large objects of given length

busylarge mSpanList // busy lists of large objects length >= _MaxMHeapList

allspans **mspan // all spans out there

gcspans **mspan // copy of allspans referenced by gc marker or sweeper

nspan uint32

sweepgen uint32 // sweep generation, see comment in mspan

sweepdone uint32 // all spans are swept

// span lookup

spans **mspan

spans_mapped uintptr

// Proportional sweep

pagesInUse uint64 // pages of spans in stats _MSpanInUse; R/W with mheap.lock

spanBytesAlloc uint64 // bytes of spans allocated this cycle; updated atomically

pagesSwept uint64 // pages swept this cycle; updated atomically

sweepPagesPerByte float64 // proportional sweep ratio; written with lock, read without

// TODO(austin): pagesInUse should be a uintptr, but the 386

// compiler can't 8-byte align fields.

// Malloc stats.

largefree uint64 // bytes freed for large objects (>maxsmallsize)

nlargefree uint64 // number of frees for large objects (>maxsmallsize)

nsmallfree [_NumSizeClasses]uint64 // number of frees for small objects (<=maxsmallsize)

// range of addresses we might see in the heap

bitmap uintptr // Points to one byte past the end of the bitmap

bitmap_mapped uintptr

arena_start uintptr

arena_used uintptr // always mHeap_Map{Bits,Spans} before updating

arena_end uintptr

arena_reserved bool

// central free lists for small size classes.

// the padding makes sure that the MCentrals are

// spaced CacheLineSize bytes apart, so that each MCentral.lock

// gets its own cache line.

central [_NumSizeClasses]struct {

mcentral mcentral

pad [sys.CacheLineSize]byte

}

spanalloc fixalloc // allocator for span*

cachealloc fixalloc // allocator for mcache*

specialfinalizeralloc fixalloc // allocator for specialfinalizer*

specialprofilealloc fixalloc // allocator for specialprofile*

speciallock mutex // lock for special record allocators.

}2.2 mspan

内存管理的基本单位,每个span用于管理特定的class对象, 跟据对象大小,span将一个或多个页拆分成多个块进行管理

startAddr: 起始地址

npages: 管理多少个页数

type mspan struct {

next *mspan // next span in list, or nil if none

prev **mspan // previous span's next field, or list head's first field if none

startAddr uintptr // address of first byte of span aka s.base()

npages uintptr // number of pages in span

stackfreelist gclinkptr // list of free stacks, avoids overloading freelist

freeindex uintptr

// TODO: Look up nelems from sizeclass and remove this field if it

// helps performance.

nelems uintptr // number of object in the span.

allocCache uint64

allocBits *uint8

gcmarkBits *uint8

// sweep generation:

// if sweepgen == h->sweepgen - 2, the span needs sweeping

// if sweepgen == h->sweepgen - 1, the span is currently being swept

// if sweepgen == h->sweepgen, the span is swept and ready to use

// h->sweepgen is incremented by 2 after every GC

sweepgen uint32

divMul uint32 // for divide by elemsize - divMagic.mul

allocCount uint16 // capacity - number of objects in freelist

sizeclass uint8 // size class

incache bool // being used by an mcache

state uint8 // mspaninuse etc

needzero uint8 // needs to be zeroed before allocation

divShift uint8 // for divide by elemsize - divMagic.shift

divShift2 uint8 // for divide by elemsize - divMagic.shift2

elemsize uintptr // computed from sizeclass or from npages

unusedsince int64 // first time spotted by gc in mspanfree state

npreleased uintptr // number of pages released to the os

limit uintptr // end of data in span

speciallock mutex // guards specials list

specials *special // linked list of special records sorted by offset.

baseMask uintptr // if non-0, elemsize is a power of 2, & this will get object allocation base

}2.3 mcentral

作为mheap和mcache的承上启下,承上从mheap申请mSpan;启下将mSpan划分为各种尺寸的对象给MCache使用

// Central list of free objects of a given size.

//

//go:notinheap

type mcentral struct {

lock mutex

spanclass spanClass

nonempty mSpanList // list of spans with a free object, ie a nonempty free list

empty mSpanList // list of spans with no free objects (or cached in an mcache)

// nmalloc is the cumulative count of objects allocated from

// this mcentral, assuming all spans in mcaches are

// fully-allocated. Written atomically, read under STW.

nmalloc uint64

}2.4 mcache

运行时分配池,每个线程都有自己的局部内存缓存mCache,实现goroutine高并发的重要因素(分配小对象可直接从mCache中分配,不用加锁)

type mcache struct {

// The following members are accessed on every malloc,

// so they are grouped here for better caching.

next_sample int32 // trigger heap sample after allocating this many bytes

local_scan uintptr // bytes of scannable heap allocated

tiny uintptr

tinyoffset uintptr

local_tinyallocs uintptr // number of tiny allocs not counted in other stats

// The rest is not accessed on every malloc.

alloc [numSpanClasses]*mspan // spans to allocate from, indexed by spanClass

stackcache [_NumStackOrders]stackfreelist

// Local allocator stats, flushed during GC.

local_nlookup uintptr // number of pointer lookups

local_largefree uintptr // bytes freed for large objects (>maxsmallsize)

local_nlargefree uintptr // number of frees for large objects (>maxsmallsize)

local_nsmallfree [_NumSizeClasses]uintptr // number of frees for small objects (<=maxsmallsize)

}3. 代码分析-malloc.go->mallocInit

分配器管理算法依赖连续内存地址,在初始化时,分配器会预留一块巨大的虚拟地址空间。该空间被成三个部分:

- arena: 用户内存实际分配范围。

- bitmap: 为每个地址提供 4bit 标记位,用于垃圾回收操作。

- spans: 记录每个页所对应 span 地址,用于反查和合并操作。

分配器heap的初始化工作,主要是几个span管理连标和central数组的创建

关键代码:

initSizes()初始化 size class 表

if sys.PtrSize == 8 && (limit == 0 || limit > 1<<30) {

arenaSize := round(_MaxMem, _PageSize)

bitmapSize = arenaSize / (sys.PtrSize * 8 / 2)

spansSize = arenaSize / _PageSize * sys.PtrSize

spansSize = round(spansSize, _PageSize)mheap_.spans = (**mspan)(unsafe.Pointer(p1))

mheap_.bitmap = p1 + spansSize + bitmapSize

if sys.PtrSize == 4 {

// Set arena_start such that we can accept memory

// reservations located anywhere in the 4GB virtual space.

mheap_.arena_start = 0

} else {

mheap_.arena_start = p1 + (spansSize + bitmapSize)

}

mheap_.arena_end = p + pSize

mheap_.arena_used = p1 + (spansSize + bitmapSize)

mheap_.arena_reserved = reserved初始化好heap的arena以及bitmap

4. 代码分析-mheap.go->init

func (h *mheap) init(spans_size uintptr) {

h.spanalloc.init(unsafe.Sizeof(mspan{}), recordspan, unsafe.Pointer(h), &memstats.mspan_sys)

h.cachealloc.init(unsafe.Sizeof(mcache{}), nil, nil, &memstats.mcache_sys)

h.specialfinalizeralloc.init(unsafe.Sizeof(specialfinalizer{}), nil, nil, &memstats.other_sys)

h.specialprofilealloc.init(unsafe.Sizeof(specialprofile{}), nil, nil, &memstats.other_sys)

// h->mapcache needs no init

for i := range h.free {

h.free[i].init()

h.busy[i].init()

}

h.freelarge.init()

h.busylarge.init()

for i := range h.central {

h.central[i].mcentral.init(int32(i))

}

sp := (*slice)(unsafe.Pointer(&h_spans))

sp.array = unsafe.Pointer(h.spans)

sp.len = int(spans_size / sys.PtrSize)

sp.cap = int(spans_size / sys.PtrSize)

}- 初始化管理类型的固定分配器

- 初始化 free / busy 数组

- 初始化 large 链表

- 初始化所有等级的 central

4. 代码分析-malloc.go->newobject

func newobject(typ *_type) unsafe.Pointer {

return mallocgc(typ.size, typ, true)

}函数mallocgc主要工作总结:

- 大对象直接从 heap 获取 span。

- 小对象从 cache.alloc[sizeclass].freelist 获取 object

- 微小对象组合使用 cache.tiny object。

大对象分配调用函数:largeAlloc

func largeAlloc(size uintptr, needzero bool) *mspan {

if size+_PageSize < size {

throw("out of memory")

}

npages := size >> _PageShift

if size&_PageMask != 0 {

npages++

}

deductSweepCredit(npages*_PageSize, npages)

s := mheap_.alloc(npages, 0, true, needzero)

if s == nil {

throw("out of memory")

}

s.limit = s.base() + size

heapBitsForSpan(s.base()).initSpan(s)

return s

}分配内存流程总结:

size > 32K,则使用 mheap 分配

size < 16 byte,使用 mcache 的小对象分配器 tiny 直接分配(tiny 就是一个指针)

size > 16 byte && size <=32K byte 时,先使用 mcache 中对应的 size class 分配。

如果 mcache 对应的 size class 的 span 已经没有可用的块,则向 mcentral 请求

如果 mcentral 也没有可用的块,则向 mheap 申请,并切分

如果 mheap 也没有合适的 span,则向操作系统申请

最后

以上就是多情老虎最近收集整理的关于【golang 源码分析】内存分配与管理tcmalloc内存分配器1. Memory Allocator2. 关键数据结构3. 代码分析-malloc.go->mallocInit分配内存流程总结:的全部内容,更多相关【golang内容请搜索靠谱客的其他文章。

发表评论 取消回复