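1. Preface

This article studies the code architecture of the Camera driver and HAL layer on the Qualcomm platform, with the goal of becoming familiar with the Qualcomm Camera control flow.

Platform: Qcom (Qualcomm)

HAL version: HAL1

Topics covered:

From the HAL layer down to the driver layer, the following Camera flows are studied:

1. The open flow

2. The preview flow

3. The takePicture flow

2. Camera software architecture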

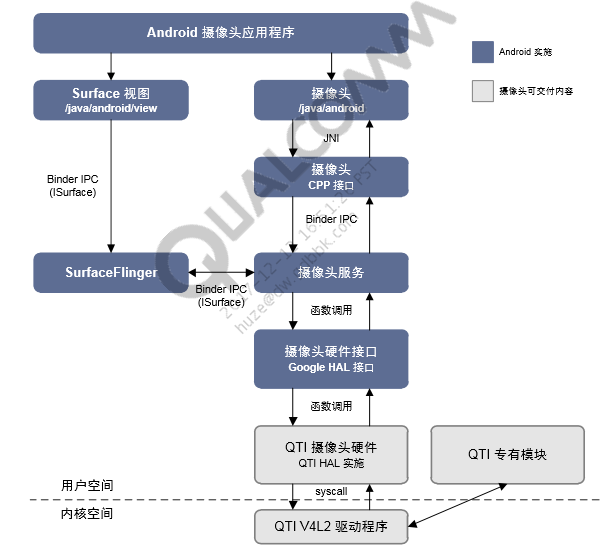

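As the figure above shows, the Android Camera framework uses a client/service architecture.

1. There are two processes:

**Client process:** can be regarded as the AP side; it mainly consists of Java code and some native C/C++ code.

**Service process:** the server side, written in native C/C++. It is mainly responsible for interacting with the camera driver in the Linux kernel, collecting the data that the camera driver passes up, and handing it to the display system (SurfaceFlinger) for display.

The client process and the service process communicate through the Binder mechanism; the client implements each feature by calling the interfaces exposed by the service.

2. At the bottom is the kernel-layer driver. The camera sensor and related drivers are implemented on the V4L2 framework and expose device nodes such as /dev/video0 to user space; these device node files make the interfaces for operating the device available to user space.

3. One level up is the HAL layer, where the Qualcomm code implements the basic operations on /dev/video0 and hooks into Android's camera interfaces.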

2.1 The Camera open flow

2.1.1 HAL layer

In Android, the Camera call flow is basically Java -> JNI -> Service -> HAL -> driver layer.

frameworks/av/services/camera/libcameraservice/device1/CameraHardwareInterface.h

status_t initialize(CameraModule *module) {

···

rc = module->open(mName.string(), (hw_device_t **)&mDevice);

···

}

The call to module->open is where execution enters the HAL layer. Which function does it actually invoke?

Let's keep reading:

hardware/qcom/camera/QCamera2/HAL/wrapper/QualcommCamera.cpp

static hw_module_methods_t camera_module_methods = {

open: camera_device_open,

};实际上是调用了camera_device_open函数,为了对调用流程更加清晰的认识,

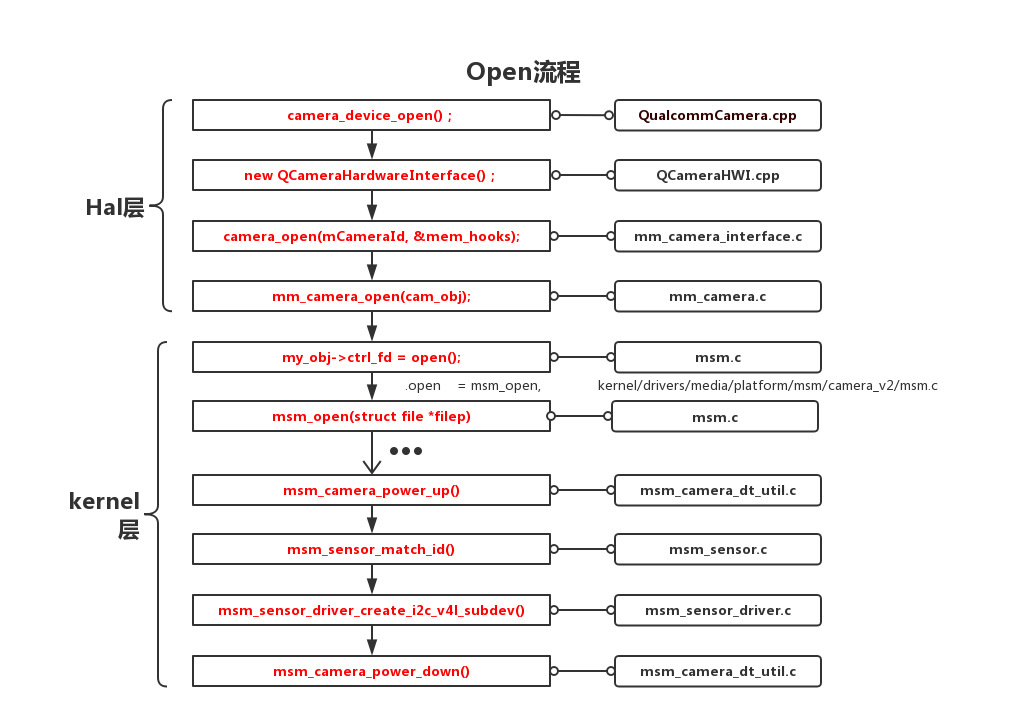

我画了一张流程图(画图工具:processon):
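
The dispatch through the method table can be sketched in plain C as follows. This is only a minimal illustration, not the AOSP or Qualcomm code: demo_device_t, demo_module_methods_t, demo_camera_device_open and demo_initialize are hypothetical stand-ins for hw_device_t, hw_module_methods_t, camera_device_open and initialize().

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical, simplified stand-ins for hw_device_t / hw_module_methods_t. */
typedef struct demo_device {
    int camera_id;
    int opened;
} demo_device_t;

typedef struct demo_module_methods {
    int (*open)(const char *id, demo_device_t **device);
} demo_module_methods_t;

/* Plays the role of camera_device_open: parse the id, hand back a device. */
static int demo_camera_device_open(const char *id, demo_device_t **device)
{
    static demo_device_t dev; /* one static instance is enough for a sketch */

    if (id == NULL || device == NULL)
        return -1;
    dev.camera_id = atoi(id);
    dev.opened = 1;
    *device = &dev;
    return 0;
}

/* The method table: the caller only ever sees the open pointer. */
static demo_module_methods_t demo_camera_module_methods = {
    .open = demo_camera_device_open,
};

/* Plays the role of initialize(): dispatch through the table. */
static int demo_initialize(const char *name, demo_device_t **out)
{
    assert(demo_camera_module_methods.open != NULL);
    return demo_camera_module_methods.open(name, out);
}
```

The point of the table is that the framework side never names camera_device_open directly; it only calls whatever the HAL module registered under open.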

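The open flowchart is fairly self-explanatory, so let's focus on a few key functions:

In the HAL layer, module->open(mName.string(), (hw_device_t **)&mDevice) goes through several layers of calls and eventually reaches mm_camera_open(cam_obj);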

hardware/qcom/camera/QCamera2/HAL/core/src/QCameraHWI.cpp

QCameraHardwareInterface::QCameraHardwareInterface(int cameraId, int mode)

{

···

/* Open camera stack! */

mCameraHandle=camera_open(mCameraId, &mem_hooks);

//Preview

result = createPreview();

//Record

result = createRecord();

//Snapshot

result = createSnapshot();

/* launch jpeg notify thread and raw data proc thread */

mNotifyTh = new QCameraCmdThread();

mDataProcTh = new QCameraCmdThread();

···

}

Analysis: new QCameraHardwareInterface() performs initialization, which mainly does the following:

1. Opens the camera

2. Creates the preview stream, record stream, and snapshot stream

3. Creates two threads (the jpeg notify thread and the raw data proc thread)

hardware/qcom/camera/QCamera2/stack/mm-camera-interface/src/mm_camera.c

int32_t mm_camera_open(mm_camera_obj_t *my_obj)

{

···

my_obj->ctrl_fd = open(dev_name, O_RDWR | O_NONBLOCK);

···

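}

In the V4L2 framework, the camera is treated as a video device and is opened with the open function. Note the O_NONBLOCK flag: here the camera is opened in non-blocking mode.

1. Opening the camera device in non-blocking mode:

cameraFd = open("/dev/video0", O_RDWR | O_NONBLOCK);

2. To open the camera device in blocking mode, the code above becomes:

cameraFd = open("/dev/video0", O_RDWR);

PS: about blocking and non-blocking mode

An application can open a video device in either blocking or non-blocking mode. In non-blocking mode, a dequeue request (VIDIOC_DQBUF) returns to the application immediately even if no frame has been captured yet,

failing with EAGAIN instead of putting the caller to sleep; in blocking mode the same call waits until a filled buffer is available.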
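
The difference between the two open calls comes down to one flag, which can be checked on any descriptor. The sketch below is an illustration under assumptions: demo_open_node and demo_is_nonblocking are hypothetical helper names, and the path is a parameter so the helpers can be tried on any node, not only /dev/video0.

```c
#include <assert.h>
#include <fcntl.h>
#include <unistd.h>

/* Open a device node; nonblock != 0 adds O_NONBLOCK to O_RDWR. */
static int demo_open_node(const char *path, int nonblock)
{
    int flags = O_RDWR;

    assert(path != NULL);
    if (nonblock)
        flags |= O_NONBLOCK;
    return open(path, flags); /* -1 on failure, errno set by open() */
}

/* Report whether an already-open descriptor is in non-blocking mode:
 * 1 = non-blocking, 0 = blocking, -1 = fcntl failed. */
static int demo_is_nonblocking(int fd)
{
    int flags = fcntl(fd, F_GETFL);

    if (flags < 0)
        return -1;
    return (flags & O_NONBLOCK) ? 1 : 0;
}
```

On a real capture node, the flag decides whether VIDIOC_DQBUF sleeps until a frame is ready or returns at once with EAGAIN, which is why non-blocking users normally pair it with poll() or select().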

Next, the call reaches the kernel-layer code.

2.1.2 Kernel layer

kernel/drivers/media/platform/msm/camera_v2/msm.c

static struct v4l2_file_operations msm_fops = {

.owner = THIS_MODULE,

.open = msm_open,

.poll = msm_poll,

.release = msm_close,

.ioctl = video_ioctl2,

#ifdef CONFIG_COMPAT

.compat_ioctl32 = video_ioctl2,

#endif

};

What is actually called is msm_open; let's step into it:

static int msm_open(struct file *filep)

{

···

/* !!! only ONE open is allowed !!! */

if (atomic_cmpxchg(&pvdev->opened, 0, 1))

return -EBUSY;

spin_lock_irqsave(&msm_pid_lock, flags);

msm_pid = get_pid(task_pid(current));

spin_unlock_irqrestore(&msm_pid_lock, flags);

/* create event queue */

rc = v4l2_fh_open(filep);

if (rc < 0)

return rc;

spin_lock_irqsave(&msm_eventq_lock, flags);

msm_eventq = filep->private_data;

spin_unlock_irqrestore(&msm_eventq_lock, flags);

/* register msm_v4l2_pm_qos_request */

msm_pm_qos_add_request();

···

}

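Analysis:

The camera is opened through v4l2_fh_open, which creates the event queue and performs some other setup. Before that, atomic_cmpxchg(&pvdev->opened, 0, 1) ensures the node is opened only once at a time; a second open returns -EBUSY.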

Next, let's follow the log:

camera open log

<3>[ 12.526811] msm_camera_power_up type 1

<3>[ 12.526818] msm_camera_power_up:1303 gpio set val 33

<3>[ 12.528873] msm_camera_power_up index 6

<3>[ 12.528885] msm_camera_power_up type 1

<3>[ 12.528893] msm_camera_power_up:1303 gpio set val 33

<3>[ 12.534954] msm_camera_power_up index 7

<3>[ 12.534969] msm_camera_power_up type 1

<3>[ 12.534977] msm_camera_power_up:1303 gpio set val 28

<3>[ 12.540162] msm_camera_power_up index 8

<3>[ 12.540177] msm_camera_power_up type 1

<3>[ 12.562753] msm_sensor_match_id: read id: 0x5675 expected id 0x5675:

<3>[ 12.562763] ov5675_back probe succeeded

<3>[ 12.562771] msm_sensor_driver_create_i2c_v4l_subdev camera I2c probe succeeded

<3>[ 12.564930] msm_sensor_driver_create_i2c_v4l_subdev rc 0 session_id 1

<3>[ 12.565495] msm_sensor_driver_create_i2c_v4l_subdev:120

<3>[ 12.565507] msm_camera_power_down:1455

<3>[ 12.565514] msm_camera_power_down index 0

Analysis:

In the end, msm_camera_power_up powers the sensor on, msm_sensor_match_id checks the sensor id, the ov5675_back probe function matches the device with its driver, and msm_camera_power_down powers the sensor off.

That concludes the open flow.

2.2 The Camera preview flow

2.2.1 HAL layer

hardware/qcom/camera/QCamera2/HAL/QCamera2HWI.cpp

int QCamera2HardwareInterface::startPreview()

{

···

int32_t rc = NO_ERROR;

···

rc = startChannel(QCAMERA_CH_TYPE_PREVIEW);

···

}这里调用startChannel(QCAMERA_CH_TYPE_PREVIEW),开启preview流。

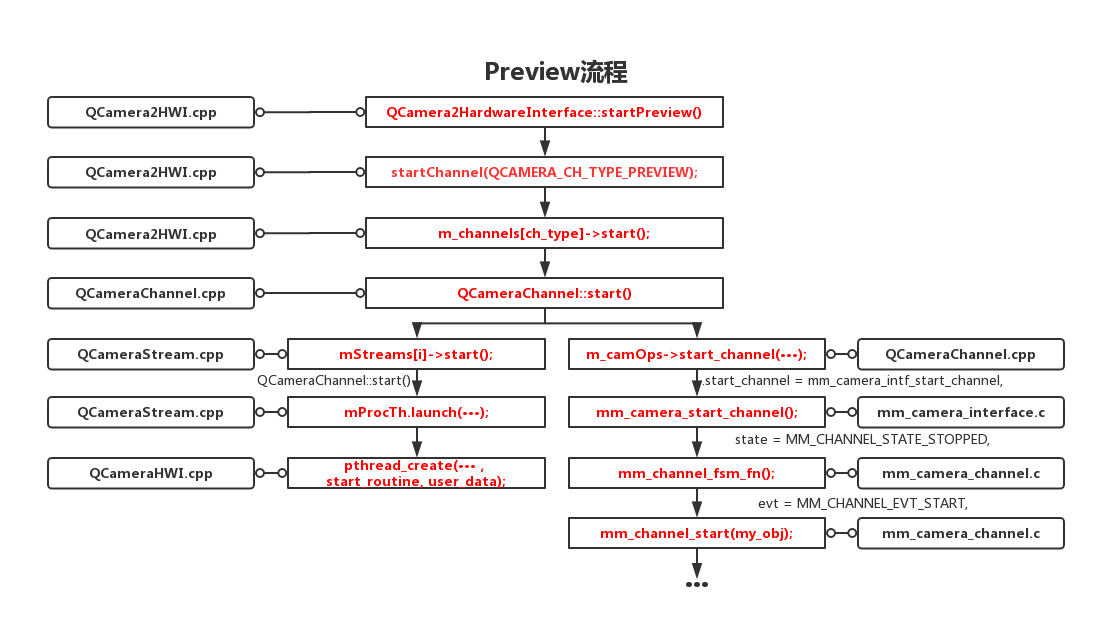

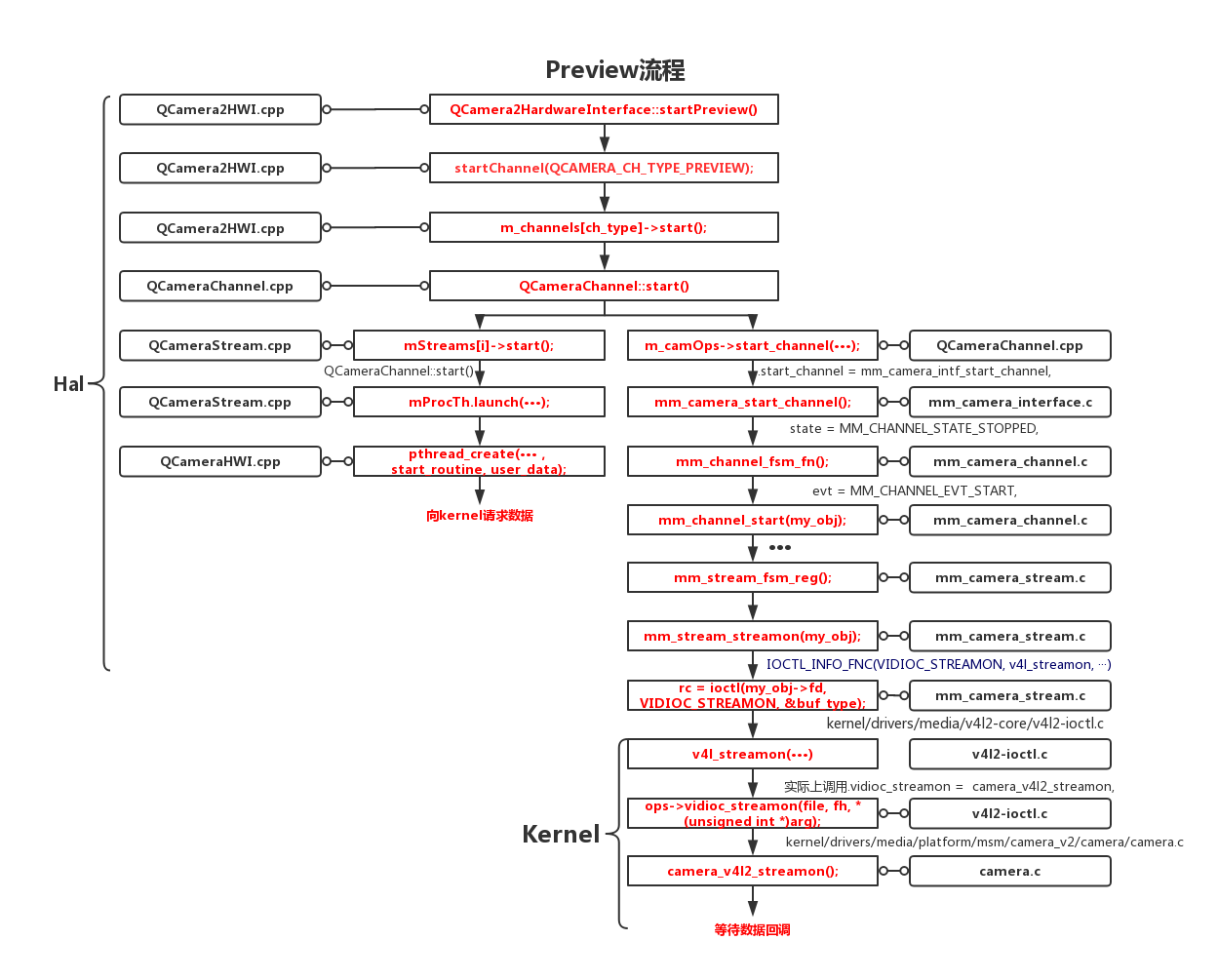

接来下看我画的一张流程图:(Hal层)

关注一些重点函数:

hardware/qcom/camera/QCamera2/HAL/QCameraChannel.cpp

int32_t QCameraChannel::start()

{

···

mStreams[i]->start(); // flow 1

···

rc = m_camOps->start_channel(m_camHandle, m_handle); // flow 2

···

}

On entering QCameraChannel::start(), two flows are executed:

mStreams[i]->start()

m_camOps->start_channel(m_camHandle, m_handle);

Flow 1: mStreams[i]->start()

1. A new thread is started via mProcTh.launch(dataProcRoutine, this)

2. The CAMERA_CMD_TYPE_DO_NEXT_JOB branch is executed

3. Data is taken from the mDataQ queue and handed to mDataCB, so that it is returned to the corresponding stream callback

4. Finally, data is requested from the kernel

Flow 2: m_camOps->start_channel(m_camHandle, m_handle);

As the flowchart shows, after a long chain of calls, mm_channel_start(mm_channel_t *my_obj) in mm_camera_channel.c is finally called. Let's see what mm_channel_start does:

hardware/qcom/camera/QCamera2/stack/mm-camera-interface/src/mm_camera_channel.c

int32_t mm_channel_start(mm_channel_t *my_obj)

{

···

/* Callbacks need to be sent, so start the threads */

/* Initialize the superbuf queue */

mm_channel_superbuf_queue_init(&my_obj->bundle.superbuf_queue);

/* Start the cb thread, which dispatches superbufs through the callback */

snprintf(my_obj->cb_thread.threadName, THREAD_NAME_SIZE, "CAM_SuperBuf");

mm_camera_cmd_thread_launch(&my_obj->cb_thread,

mm_channel_dispatch_super_buf,

(void*)my_obj);

/* Start the cmd thread, whose callback receives stream data for the superbuf */

snprintf(my_obj->cmd_thread.threadName, THREAD_NAME_SIZE, "CAM_SuperBufCB");

mm_camera_cmd_thread_launch(&my_obj->cmd_thread,

mm_channel_process_stream_buf,

(void*)my_obj);

/* Allocate buf for each stream */

/*allocate buf*/

rc = mm_stream_fsm_fn(s_objs[i],

MM_STREAM_EVT_GET_BUF,

NULL,

NULL);

/* reg buf */

rc = mm_stream_fsm_fn(s_objs[i],

MM_STREAM_EVT_REG_BUF,

NULL,

NULL);

/* Start the stream */

rc = mm_stream_fsm_fn(s_objs[i],

MM_STREAM_EVT_START,

NULL,

NULL);

···

}

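The process includes:

1. Creating the cb thread and the cmd thread

2. Allocating buf for each stream

3. Starting the stream

Let's continue with what happens after the stream is started: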

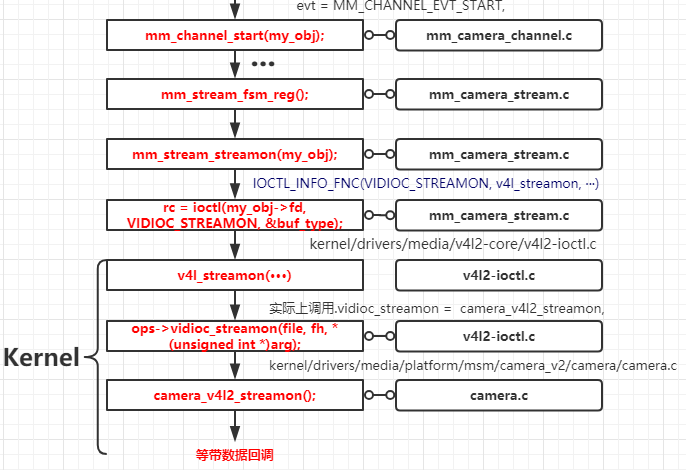

rc = mm_stream_fsm_fn(s_objs[i],MM_STREAM_EVT_START,NULL,NULL);

calls into

rc = mm_stream_fsm_reg(my_obj, evt, in_val, out_val)

hardware/qcom/camera/QCamera2/stack/mm-camera-interface/src/mm_camera_stream.c

int32_t mm_stream_fsm_reg(···)

{

···

case MM_STREAM_EVT_START:

rc = mm_stream_streamon(my_obj);

···

}

In mm_camera_stream.c, mm_stream_streamon(mm_stream_t *my_obj) is then called.

It sends a V4L2 request to the kernel and waits for the data callbacks:

int32_t mm_stream_streamon(mm_stream_t *my_obj)

{

···

enum v4l2_buf_type buf_type = V4L2_BUF_TYPE_VIDEO_CAPTURE_MPLANE;

···

rc = ioctl(my_obj->fd, VIDIOC_STREAMON, &buf_type);

···

}

2.2.2 Kernel layer

kernel/drivers/media/platform/msm/camera_v2/camera/camera.c

Through ioctl, after several layers of calls, camera_v4l2_streamon() is finally reached:

static int camera_v4l2_streamon(struct file *filep, void *fh,

enum v4l2_buf_type buf_type)

{

struct v4l2_event event;

int rc;

struct camera_v4l2_private *sp = fh_to_private(fh);

rc = vb2_streamon(&sp->vb2_q, buf_type);

camera_pack_event(filep, MSM_CAMERA_SET_PARM,

MSM_CAMERA_PRIV_STREAM_ON, -1, &event);

rc = msm_post_event(&event, MSM_POST_EVT_TIMEOUT);

···

rc = camera_check_event_status(&event);

return rc;

}

Analysis: msm_post_event sends the data request and then waits for the data callback.

Complete preview flowchart

That concludes the preview flow.

2.3 The Camera takePicture flow

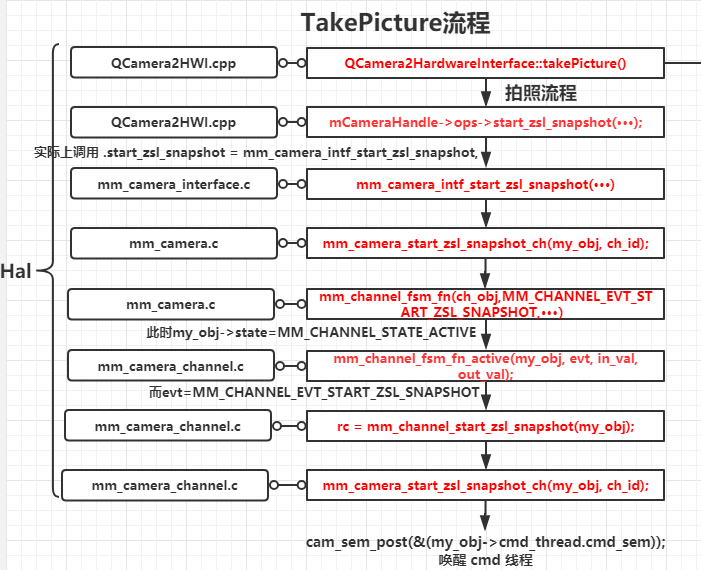

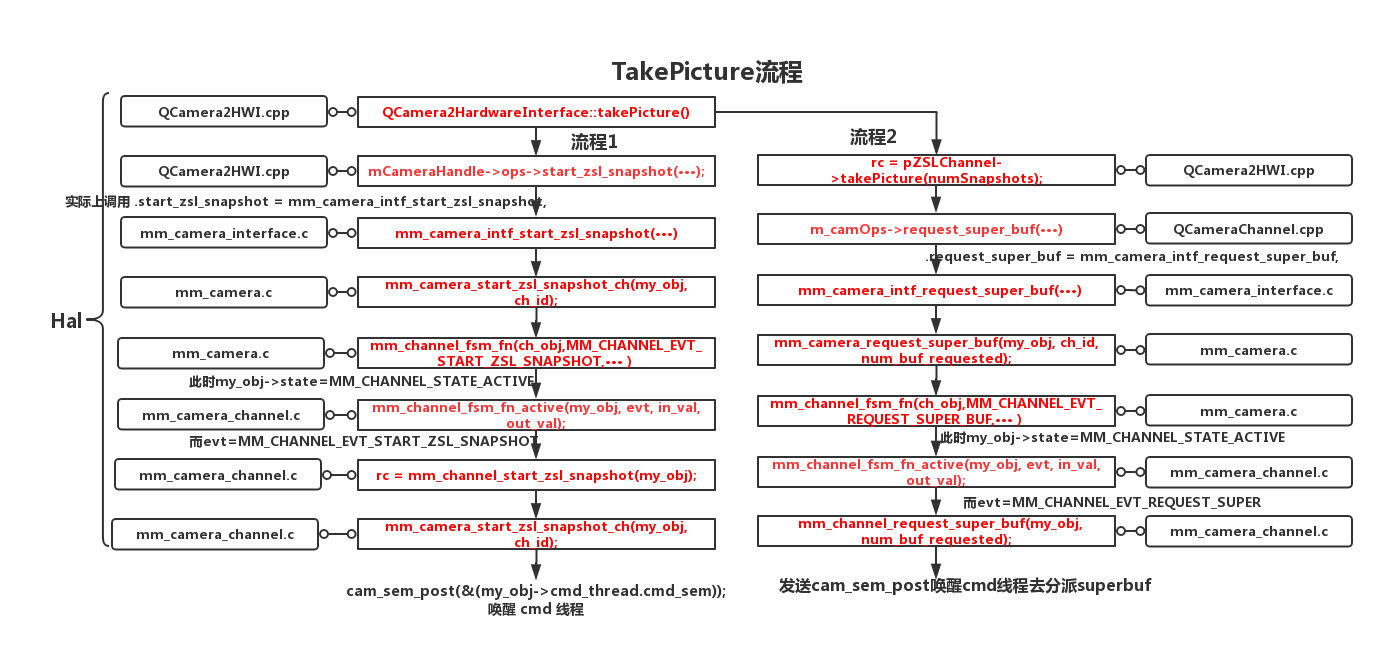

In fact, the takePicture flow is very similar to the preview flow.

We use ZSL (zero shutter lag) mode as the entry point:

2.3.1 HAL layer

hardware/qcom/camera/QCamera2/HAL/QCamera2HWI.cpp

int QCamera2HardwareInterface::takePicture()

{

···

// flow 1

mCameraHandle->ops->start_zsl_snapshot(mCameraHandle->camera_handle,

pZSLChannel->getMyHandle());

···

// flow 2

rc = pZSLChannel->takePicture(numSnapshots);

···

}

After entering QCamera2HardwareInterface::takePicture, two flows are executed:

1. mCameraHandle->ops->start_zsl_snapshot(···);

2. pZSLChannel->takePicture(numSnapshots);

Flow 1:

After several layers of calls, mm_channel_start_zsl_snapshot is finally reached:

hardware/qcom/camera/QCamera2/stack/mm-camera-interface/src/mm_camera_channel.c

int32_t mm_channel_start_zsl_snapshot(mm_channel_t *my_obj)

{

int32_t rc = 0;

mm_camera_cmdcb_t* node = NULL;

node = (mm_camera_cmdcb_t *)malloc(sizeof(mm_camera_cmdcb_t));

if (NULL != node) {

memset(node, 0, sizeof(mm_camera_cmdcb_t));

node->cmd_type = MM_CAMERA_CMD_TYPE_START_ZSL;

/* enqueue to cmd thread */

cam_queue_enq(&(my_obj->cmd_thread.cmd_queue), node);

/* wake up cmd thread */

cam_sem_post(&(my_obj->cmd_thread.cmd_sem));

} else {

CDBG_ERROR("%s: No memory for mm_camera_node_t", __func__);

rc = -1;

}

return rc;

}

Analysis:

This function mainly does two things:

1. Enqueues the node with cam_queue_enq(&(my_obj->cmd_thread.cmd_queue), node);

2. Wakes up the cmd thread with cam_sem_post(&(my_obj->cmd_thread.cmd_sem));

Here node->cmd_type = MM_CAMERA_CMD_TYPE_START_ZSL.

hardware/qcom/camera/QCamera2/stack/mm-camera-interface/src/mm_camera_thread.c

static void *mm_camera_cmd_thread(void *data)

{

···

case MM_CAMERA_CMD_TYPE_START_ZSL:

cmd_thread->cb(node, cmd_thread->user_data);

···

}

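Here cmd_thread->cb is a callback function:

cmd_thread->cb = mm_channel_process_stream_buf. After several layers of callbacks,

it eventually reaches:

mm_channel_superbuf_skip(ch_obj, &ch_obj->bundle.superbuf_queue);

super_buf = (mm_channel_queue_node_t*)node->data;

The buffer is taken out and its node in the list is released; finally the buffer is queued back to the kernel for the next fill.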
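
The enqueue-then-post handshake between the caller and the cmd thread can be sketched with POSIX threads and a semaphore. This is a simplified model, not the mm-camera-interface code: all demo_* names are hypothetical, DEMO_CMD_EXIT is an invented command used to stop the thread, and the queue is a plain mutex-protected linked list.

```c
#include <assert.h>
#include <pthread.h>
#include <semaphore.h>
#include <stdlib.h>

typedef enum { DEMO_CMD_START_ZSL, DEMO_CMD_REQ_DATA_CB, DEMO_CMD_EXIT } demo_cmd_type_t;

typedef struct demo_node {
    demo_cmd_type_t cmd_type;
    struct demo_node *next;
} demo_node_t;

typedef struct demo_cmd_thread {
    pthread_t tid;
    pthread_mutex_t lock;       /* protects head/tail */
    sem_t cmd_sem;              /* counts queued commands */
    demo_node_t *head, *tail;
    void (*cb)(demo_node_t *node, void *user_data);
    void *user_data;
} demo_cmd_thread_t;

/* Remove the oldest node, or return NULL if the queue is empty. */
static demo_node_t *demo_deq(demo_cmd_thread_t *t)
{
    pthread_mutex_lock(&t->lock);
    demo_node_t *n = t->head;
    if (n != NULL) {
        t->head = n->next;
        if (t->head == NULL)
            t->tail = NULL;
    }
    pthread_mutex_unlock(&t->lock);
    return n;
}

/* Thread body: sleep on the semaphore, then handle one command. */
static void *demo_thread_main(void *data)
{
    demo_cmd_thread_t *t = data;
    int running = 1;

    while (running) {
        sem_wait(&t->cmd_sem);
        demo_node_t *n = demo_deq(t);
        if (n == NULL)
            continue; /* spurious wake-up, nothing queued */
        switch (n->cmd_type) {
        case DEMO_CMD_START_ZSL:
        case DEMO_CMD_REQ_DATA_CB:
            t->cb(n, t->user_data);
            break;
        case DEMO_CMD_EXIT:
            running = 0;
            break;
        }
        free(n);
    }
    return NULL;
}

/* Caller side: allocate a node, enqueue it, then wake the thread. */
static int demo_post(demo_cmd_thread_t *t, demo_cmd_type_t type)
{
    demo_node_t *n = malloc(sizeof(*n));

    if (n == NULL)
        return -1;
    n->cmd_type = type;
    n->next = NULL;
    pthread_mutex_lock(&t->lock);
    if (t->tail != NULL)
        t->tail->next = n;
    else
        t->head = n;
    t->tail = n;
    pthread_mutex_unlock(&t->lock);
    sem_post(&t->cmd_sem);
    return 0;
}

/* Initialize the queue and semaphore, then start the thread. */
static int demo_launch(demo_cmd_thread_t *t,
                       void (*cb)(demo_node_t *, void *), void *user_data)
{
    assert(t != NULL && cb != NULL);
    t->head = t->tail = NULL;
    t->cb = cb;
    t->user_data = user_data;
    pthread_mutex_init(&t->lock, NULL);
    sem_init(&t->cmd_sem, 0, 0);
    return pthread_create(&t->tid, NULL, demo_thread_main, t);
}

/* Example callback: counts how many commands were handled. */
static void demo_count_cb(demo_node_t *node, void *user_data)
{
    (void)node;
    (*(int *)user_data)++;
}
```

Posting the semaphore only after the node is in the queue is what guarantees that a woken thread finds work; on older toolchains the sketch may need to be built with -pthread.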

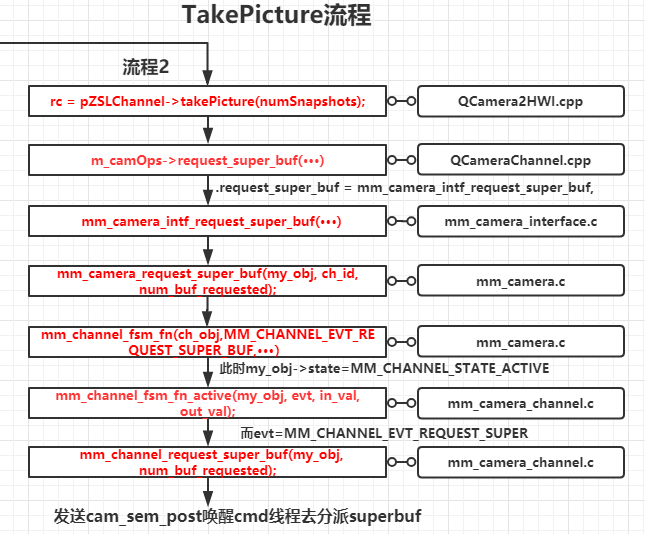

Flow 2:

Likewise, after several layers of calls, mm_channel_request_super_buf is finally reached:

hardware/qcom/camera/QCamera2/stack/mm-camera-interface/src/mm_camera_channel.c

int32_t mm_channel_request_super_buf(mm_channel_t *my_obj, uint32_t num_buf_requested)

{

/* set pending_cnt

* will trigger dispatching super frames if pending_cnt > 0 */

/* send cam_sem_post to wake up cmd thread to dispatch super buffer */

node = (mm_camera_cmdcb_t *)malloc(sizeof(mm_camera_cmdcb_t));

if (NULL != node) {

memset(node, 0, sizeof(mm_camera_cmdcb_t));

node->cmd_type = MM_CAMERA_CMD_TYPE_REQ_DATA_CB;

node->u.req_buf.num_buf_requested = num_buf_requested;

/* enqueue to cmd thread */

cam_queue_enq(&(my_obj->cmd_thread.cmd_queue), node);

/* wake up cmd thread */

cam_sem_post(&(my_obj->cmd_thread.cmd_sem));

} else {

CDBG_ERROR("%s: No memory for mm_camera_node_t", __func__);

rc = -1;

}

return rc;

}

Analysis: this function follows the same pattern as flow 1:

1. Enqueues the node with cam_queue_enq(&(my_obj->cmd_thread.cmd_queue), node);

2. Wakes up the cmd thread with cam_sem_post(&(my_obj->cmd_thread.cmd_sem));

static void *mm_camera_cmd_thread(void *data)

{

···

case MM_CAMERA_CMD_TYPE_START_ZSL:

case MM_CAMERA_CMD_TYPE_REQ_DATA_CB:

cmd_thread->cb(node, cmd_thread->user_data);

···

}

This is the same as flow 1, so it is not repeated here.

2.3.2 Kernel layer

int32_t mm_camera_start_zsl_snapshot(mm_camera_obj_t *my_obj)

{

···

rc = mm_camera_util_s_ctrl(my_obj->ctrl_fd,

CAM_PRIV_START_ZSL_SNAPSHOT, &value);

···

}

int32_t mm_camera_util_s_ctrl(int32_t fd, uint32_t id, int32_t *value)

{

···

rc = ioctl(fd, VIDIOC_S_CTRL, &control);

···

}

kernel/drivers/media/v4l2-core/v4l2-subdev.c

static long subdev_do_ioctl(struct file *file, unsigned int cmd, void *arg)

{

···

case VIDIOC_S_CTRL:

return v4l2_s_ctrl(vfh, vfh->ctrl_handler, arg);

···

}

Through ioctl(fd, VIDIOC_S_CTRL, &control), the call passes through the V4L2 framework into the kernel layer,

and finally the buffer is queued back to the kernel for the next fill.

Complete takePicture flowchart

Stay hungry,Stay foolish!
