For this task, LightGBM is used for modeling and hyperparameter tuning.

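Contents

- 1. About LightGBM
- 2. Code implementation and parameter notes
- 2.1 Modeling and training
- 2.2 Main LightGBM parameters
- 2.3 Performance evaluation
- 2.4 Hyperparameter tuning
- 2.5 Output results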

1. About LightGBM

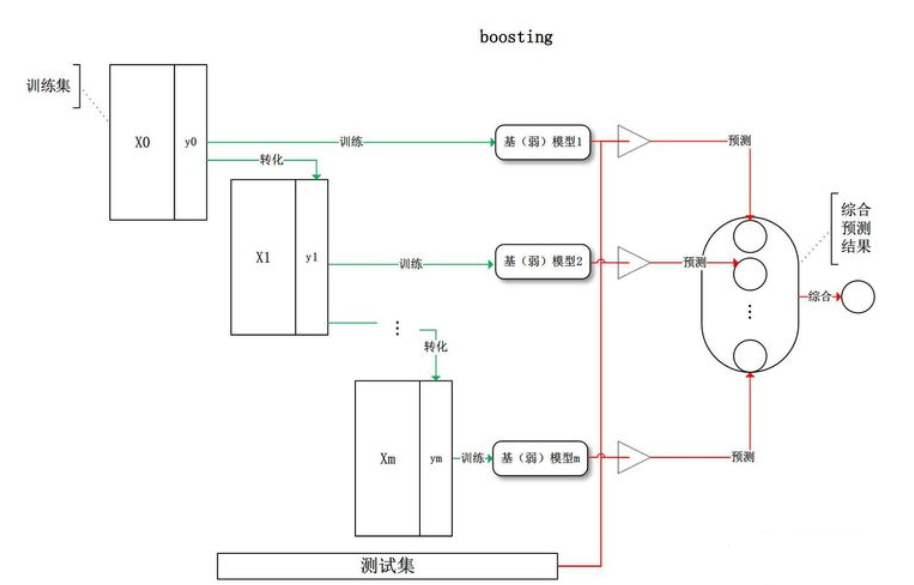

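LightGBM was proposed by Microsoft, mainly to address the problems that GBDT runs into on very large datasets, so that it can be applied faster and more effectively in industrial practice. Both LightGBM and GBDT are boosting methods: the base models are trained sequentially, and each round of training builds on the result of the previous round.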
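To make "each round builds on the previous round" concrete, here is a minimal sketch of boosting with squared-error loss and one-split trees (stumps). It is not LightGBM's implementation; the helper names (`fit_stump`, `boost`) and the toy data are made up for this illustration.

```python
# Minimal gradient-boosting sketch: squared-error loss, depth-1 trees (stumps).

def fit_stump(xs, residuals):
    """Return (threshold, left_value, right_value) with the lowest squared error."""
    best = None
    for t in sorted(set(xs))[:-1]:  # skip the largest value so the right side is never empty
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        lv = sum(left) / len(left)
        rv = sum(right) / len(right)
        err = sum((r - lv) ** 2 for r in left) + sum((r - rv) ** 2 for r in right)
        if best is None or err < best[0]:
            best = (err, t, lv, rv)
    return best[1:]


def predict_stump(stump, x):
    t, lv, rv = stump
    return lv if x <= t else rv


def boost(xs, ys, n_rounds=20, learning_rate=0.5):
    preds = [0.0] * len(xs)
    stumps, losses = [], []
    for _ in range(n_rounds):
        # For squared error, the negative gradient is simply the residual.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)  # this round's model fits what is still unexplained
        stumps.append(stump)
        preds = [p + learning_rate * predict_stump(stump, x) for p, x in zip(preds, xs)]
        losses.append(sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(xs))
    return stumps, losses


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
ys = [1.2, 0.9, 1.1, 3.0, 3.2, 2.9]
stumps, losses = boost(xs, ys)
print("MSE after round 1: ", losses[0])
print("MSE after round 20:", losses[-1])
```

The training loss never increases from one round to the next, because every new stump is fitted to the residuals left by the rounds before it.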

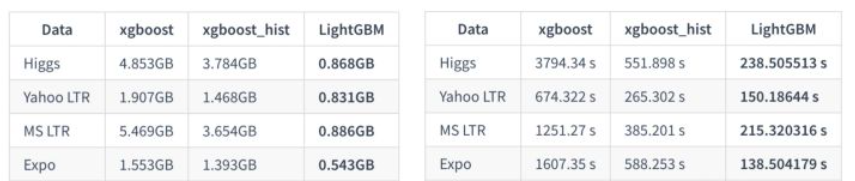

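LightGBM improves on XGBoost in the following respects:

1. Gradient-based One-Side Sampling (GOSS);

2. Histogram-based split finding;

3. Exclusive Feature Bundling (EFB);

4. Leaf-wise (vertical) tree growth with a maximum-depth limit;

5. Optimal splitting of categorical features;

6. Feature parallelism and data parallelism;

7. Cache optimization.

As a result, it uses less memory and has a lower computational cost.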
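As an illustration of the first item in the list above, the following is a minimal sketch of Gradient-based One-Side Sampling: keep every sample with a large gradient, randomly keep a small share of the rest, and up-weight those kept small-gradient samples by (1 - a) / b so the gradient statistics stay roughly unbiased. The function name and toy gradients are assumptions for this example; `top_rate` and `other_rate` only mirror the names of the corresponding LightGBM parameters, and the code is not LightGBM's internal implementation.

```python
import random


def goss_sample(gradients, top_rate=0.2, other_rate=0.1, seed=0):
    """Return (indices, weights) selected by a simple GOSS procedure."""
    rng = random.Random(seed)
    n = len(gradients)
    n_top = int(n * top_rate)
    n_other = int(n * other_rate)

    # Sort sample indices by absolute gradient, largest first.
    order = sorted(range(n), key=lambda i: abs(gradients[i]), reverse=True)
    top = order[:n_top]                  # always kept
    rest = order[n_top:]
    sampled = rng.sample(rest, n_other)  # random subset of the small-gradient samples

    # Small-gradient samples are amplified to compensate for the subsampling.
    amplify = (1.0 - top_rate) / other_rate
    indices = top + sampled
    weights = [1.0] * n_top + [amplify] * n_other
    return indices, weights


gradients = [0.05, -2.0, 0.1, 1.5, -0.02, 0.3, -0.07, 0.01, 0.9, -0.04]
indices, weights = goss_sample(gradients)
print(indices, weights)
```

With ten samples, the two largest-gradient samples are kept with weight 1 and one of the remaining eight is kept with weight 8, so only three samples are used to build the next tree.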

2. Code implementation and parameter notes

2.1 Modeling and training

import pandas as pd
import numpy as np
from sklearn.metrics import f1_score
import os
import seaborn as sns
import matplotlib.pyplot as plt
import warnings
warnings.filterwarnings("ignore")

# Reduce memory usage by downcasting each column to the smallest dtype that fits its range
def reduce_mem_usage(df):
    start_mem = df.memory_usage().sum() / 1024**2
    print('Memory usage of dataframe is {:.2f} MB'.format(start_mem))

    for col in df.columns:
        col_type = df[col].dtype

        if col_type != object:
            c_min = df[col].min()
            c_max = df[col].max()
            if str(col_type)[:3] == 'int':
                if c_min > np.iinfo(np.int8).min and c_max < np.iinfo(np.int8).max:
                    df[col] = df[col].astype(np.int8)
                elif c_min > np.iinfo(np.int16).min and c_max < np.iinfo(np.int16).max:
                    df[col] = df[col].astype(np.int16)
                elif c_min > np.iinfo(np.int32).min and c_max < np.iinfo(np.int32).max:
                    df[col] = df[col].astype(np.int32)
                elif c_min > np.iinfo(np.int64).min and c_max < np.iinfo(np.int64).max:
                    df[col] = df[col].astype(np.int64)
            else:
                # float16 keeps only about 3 significant decimal digits,
                # so this branch trades precision for memory
                if c_min > np.finfo(np.float16).min and c_max < np.finfo(np.float16).max:
                    df[col] = df[col].astype(np.float16)
                elif c_min > np.finfo(np.float32).min and c_max < np.finfo(np.float32).max:
                    df[col] = df[col].astype(np.float32)
                else:
                    df[col] = df[col].astype(np.float64)
        else:
            # Non-numeric columns are stored as pandas categoricals
            df[col] = df[col].astype('category')

    end_mem = df.memory_usage().sum() / 1024**2
    print('Memory usage after optimization is: {:.2f} MB'.format(end_mem))
    print('Decreased by {:.1f}%'.format(100 * (start_mem - end_mem) / start_mem))

    return df

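# Read the data
data = pd.read_csv('data/train.csv')

# Simple preprocessing: column 0 is the id, column 1 is a comma-separated signal string,
# column 2 is the label; expand the signal string into one float column per sample point
data_list = []
for items in data.values:
    data_list.append([items[0]] + [float(i) for i in items[1].split(',')] + [items[2]])

data = pd.DataFrame(np.array(data_list))
data.columns = ['id'] + ['s_' + str(i) for i in range(len(data_list[0]) - 2)] + ['label']
data = reduce_mem_usage(data)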
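The preprocessing step above can be followed on a tiny example without pandas. The two rows below are hypothetical stand-ins with the same layout as the training file (id, comma-separated signal string, label); real signals are much longer.

```python
# Hypothetical rows: [id, signal string, label]
rows = [
    [0, "0.99,0.94,0.76,0.0", 0.0],
    [1, "0.97,0.93,0.64,0.0", 2.0],
]


def expand_row(row):
    """Split the signal string into floats and keep id and label at the two ends."""
    sample_id, signal, label = row
    return [sample_id] + [float(v) for v in signal.split(",")] + [label]


table = [expand_row(r) for r in rows]

# Every row has two non-signal fields (id and label), hence the "- 2".
n_points = len(table[0]) - 2
columns = ["id"] + ["s_" + str(i) for i in range(n_points)] + ["label"]

print(columns)
for r in table:
    print(r)
```

Each signal point becomes its own feature column `s_0`, `s_1`, and so on, which is the tabular form LightGBM expects.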

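# Custom evaluation metric for lgb.train: macro-averaged F1 score
def f1_score_vali(preds, data_vali):
    labels = data_vali.get_label()
    # In LightGBM 3.x the multiclass predictions arrive as a flat array grouped by class,
    # so reshape to (num_class, n_samples) and take the argmax over the class axis
    preds = np.argmax(preds.reshape(4, -1), axis=0)
    score_vali = f1_score(y_true=labels, y_pred=preds, average='macro')
    # Return (metric name, value, is_higher_better)
    return 'f1_score', score_vali, True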
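The `reshape(4, -1)` in the metric above relies on the class-major layout that LightGBM 3.x uses for custom multiclass metrics, where the score of row i for class j sits at `preds[j * num_data + i]`. The sketch below reproduces that indexing and the macro F1 computation in plain Python; the helper names and the toy scores are made up for illustration.

```python
def argmax_class_major(flat_preds, num_class):
    """Predicted label per sample for a flat, class-major score array."""
    n = len(flat_preds) // num_class
    labels = []
    for i in range(n):
        scores = [flat_preds[j * n + i] for j in range(num_class)]
        labels.append(scores.index(max(scores)))
    return labels


def macro_f1(y_true, y_pred, num_class):
    """Unweighted mean of the per-class F1 scores."""
    f1s = []
    for c in range(num_class):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn
        f1s.append(2 * tp / denom if denom else 0.0)
    return sum(f1s) / num_class


# Three samples, four classes: all class-0 scores first, then class 1, and so on.
flat = [0.7, 0.1, 0.2,   # class 0
        0.1, 0.6, 0.2,   # class 1
        0.1, 0.2, 0.5,   # class 2
        0.1, 0.1, 0.1]   # class 3
y_true = [0, 1, 3]

y_pred = argmax_class_major(flat, num_class=4)
score = macro_f1(y_true, y_pred, num_class=4)
print(y_pred, score)
```

Here classes 0 and 1 are predicted perfectly while classes 2 and 3 each score 0, so the macro average is 0.5 even though two of three samples are correct.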

LightGBM modeling

from sklearn.model_selection import train_test_split
import lightgbm as lgb

# X_train and y_train are never defined in the original post; they are assumed here
# to be the signal columns and the label column of `data`
X_train = data.drop(['id', 'label'], axis=1)
y_train = data['label']

# Split into training and validation sets
X_train_split, X_val, y_train_split, y_val = train_test_split(X_train, y_train, test_size=0.4)
train_matrix = lgb.Dataset(X_train_split, label=y_train_split)
valid_matrix = lgb.Dataset(X_val, label=y_val)

params = {
    "learning_rate": 0.1,
    "boosting": 'gbdt',
    "lambda_l2": 0.1,
    "max_depth": 15,
    "num_leaves": 31,
    "bagging_fraction": 0.8,
    "feature_fraction": 0.8,
    "metric": None,
    "objective": "multiclass",
    "num_class": 4,
    "verbose": -1,
}

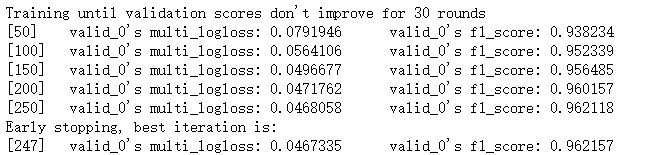

"""使用训练集数据进行模型训练"""

model = lgb.train(params,

train_set=train_matrix,

valid_sets=valid_matrix,

num_boost_round=1000,

verbose_eval=50,

early_stopping_rounds=30,

feval=f1_score_vali)

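2.2 Main LightGBM parameters

max_depth: maximum tree depth; lower it when the model overfits

feature_fraction: fraction of features randomly selected at each iteration

bagging_fraction: fraction of the data randomly sampled for each bagging round; it only takes effect when bagging_freq is set to a positive value (bagging is then redone every bagging_freq iterations)

early_stopping_round: training stops if a validation metric has not improved during the last early_stopping_round rounds

lambda_l1 / lambda_l2: L1 / L2 regularization coefficients (non-negative); for example, lambda_l2=0.1 applies L2 regularization with coefficient 0.1. The larger the coefficient, the stronger the regularization; used to prevent overfitting

num_boost_round: number of boosting iterations, usually 100 or more

learning_rate: learning rate; common values are 0.1, 0.001, 0.003…

num_leaves: number of leaves per tree, default 31; should be <= 2^(max_depth)

device: cpu or gpu

metric: evaluation metric; mae: mean absolute error, mse: mean squared error, binary_logloss: loss for binary classification, multi_logloss: loss for multi classification

task: what the run does, train or predict

application (an alias of objective): the learning task; regression, binary (binary classification), multiclass (multiclass classification)

boosting: the boosting algorithm to use; gbdt, rf: random forest, dart: Dropouts meet Multiple Additive Regression Trees, goss: Gradient-based One-Side Sampling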
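Two of the notes above can be checked with a few lines of arithmetic. In second-order gradient boosting with only L2 regularization, the optimal value of a leaf is -G / (H + lambda_l2), where G and H are the sums of the gradients and Hessians of the samples in that leaf, so a larger lambda_l2 pulls leaf values toward zero. The sketch below uses made-up G and H values and hypothetical helper names.

```python
def leaf_value(grad_sum, hess_sum, lambda_l2):
    """Optimal leaf output under L2 regularization: -G / (H + lambda)."""
    return -grad_sum / (hess_sum + lambda_l2)


def max_leaves_for_depth(max_depth):
    """A binary tree of depth d has at most 2**d leaves."""
    return 2 ** max_depth


G, H = -12.0, 8.0  # illustrative sums for one leaf
for lam in (0.0, 0.1, 1.0, 10.0):
    print("lambda_l2 =", lam, "-> leaf value", leaf_value(G, H, lam))

# The first parameter set in this post: max_depth=15, num_leaves=31
print(31 <= max_leaves_for_depth(15))
```

The leaf value shrinks as lambda_l2 grows, which is why a larger coefficient means stronger regularization. The second check shows that with max_depth=15 the depth limit is far from binding for num_leaves=31.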

2.3 Performance evaluation

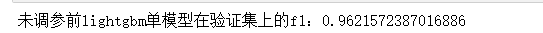

val_pre_lgb = model.predict(X_val, num_iteration=model.best_iteration)
preds = np.argmax(val_pre_lgb, axis=1)
score = f1_score(y_true=y_val, y_pred=preds, average='macro')
print('Macro F1 of the untuned single LightGBM model on the validation set: {}'.format(score))

# Model and predict with 5-fold cross-validation
from sklearn.model_selection import KFold

# kf is not defined in the original post; a shuffled 5-fold splitter is assumed here,
# and the random_state value is arbitrary
kf = KFold(n_splits=5, shuffle=True, random_state=2021)

cv_scores = []
for i, (train_index, valid_index) in enumerate(kf.split(X_train, y_train)):
    print('************************************ {} ************************************'.format(str(i+1)))
    X_train_split, y_train_split = X_train.iloc[train_index], y_train.iloc[train_index]
    X_val, y_val = X_train.iloc[valid_index], y_train.iloc[valid_index]

    train_matrix = lgb.Dataset(X_train_split, label=y_train_split)
    valid_matrix = lgb.Dataset(X_val, label=y_val)

    model = lgb.train(params,
                      train_set=train_matrix,
                      valid_sets=valid_matrix,
                      num_boost_round=1000,
                      verbose_eval=100,
                      early_stopping_rounds=20,
                      feval=f1_score_vali)

    val_pred = model.predict(X_val, num_iteration=model.best_iteration)
    val_pred = np.argmax(val_pred, axis=1)
    cv_scores.append(f1_score(y_true=y_val, y_pred=val_pred, average='macro'))
    print(cv_scores)

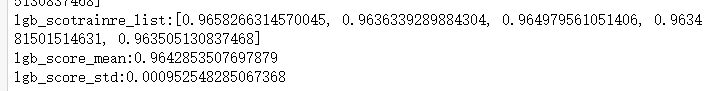

print("lgb_score_list:{}".format(cv_scores))
print("lgb_score_mean:{}".format(np.mean(cv_scores)))
print("lgb_score_std:{}".format(np.std(cv_scores)))
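For readers who want to see what the 5-fold loop above does with the row indices, here is a minimal standard-library sketch of a shuffled K-fold split followed by the mean and standard deviation summary. It is an illustration, not scikit-learn's `KFold`; the function name, the seed, and the five scores are made up.

```python
import random
from statistics import mean, pstdev


def kfold_indices(n_samples, n_splits=5, seed=2021):
    """Yield (train_indices, valid_indices) for a shuffled K-fold split."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # The first (n_samples % n_splits) folds get one extra sample.
    sizes = [n_samples // n_splits + (1 if i < n_samples % n_splits else 0)
             for i in range(n_splits)]
    start = 0
    for size in sizes:
        valid = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, valid
        start += size


folds = list(kfold_indices(12, n_splits=5))
for train, valid in folds:
    print("train:", sorted(train), "valid:", sorted(valid))

# Hypothetical per-fold scores, summarised the same way as cv_scores above.
scores = [0.95, 0.96, 0.94, 0.97, 0.95]
print("mean:", mean(scores), "std:", pstdev(scores))
```

Every sample lands in exactly one validation fold, so each fold's score is measured on data the model did not train on. `pstdev` is the population standard deviation, which matches the default behaviour of `np.std`.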

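2.4 Hyperparameter tuning

Bayesian optimization is used to tune the model.

The main idea of Bayesian optimization is as follows: given an objective function to optimize (a function in the broad sense, where only the inputs and outputs need to be specified and nothing about its internal structure or mathematical properties needs to be known), sample points are added one after another to update the posterior distribution of the objective function (a Gaussian process), until the posterior essentially fits the true function. Put simply, it takes the information from previously evaluated parameters into account in order to choose the next parameters better.

Main steps:

- Define the objective function (rf_cv_lgb below)
- Build the model
- Define the parameters to optimize
- Obtain the optimization result and return the score being optimized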
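The idea described above can be sketched in plain Python on a one-dimensional toy problem. This is only an illustration under stated assumptions: a zero-mean Gaussian process with an RBF kernel, an upper-confidence-bound acquisition rule, and a fixed grid of candidates. None of these choices is taken from the `bayes_opt` package, and `toy_score` merely stands in for an expensive cross-validation score.

```python
import math


def rbf(a, b, length_scale=1.0):
    return math.exp(-0.5 * ((a - b) / length_scale) ** 2)


def cholesky(A):
    """Lower-triangular L with L @ L.T == A, for a small positive definite matrix."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(A[i][i] - s) if i == j else (A[i][j] - s) / L[j][j]
    return L


def solve_lower(L, b):
    y = []
    for i in range(len(b)):
        y.append((b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i])
    return y


def solve_upper_t(L, y):
    """Solve L.T @ x = y."""
    n = len(y)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


def gp_posterior(xs, ys, x_new, noise=1e-6):
    """Posterior mean and standard deviation of the GP at x_new."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    alpha = solve_upper_t(L, solve_lower(L, ys))
    k_star = [rbf(a, x_new) for a in xs]
    mu = sum(k * a for k, a in zip(k_star, alpha))
    v = solve_lower(L, k_star)
    var = max(rbf(x_new, x_new) - sum(t * t for t in v), 0.0)
    return mu, math.sqrt(var)


def bayes_opt(f, candidates, init_points, n_iter=8, kappa=2.0):
    xs = list(init_points)
    ys = [f(x) for x in xs]
    for _ in range(n_iter):
        remaining = [c for c in candidates if c not in xs]

        def ucb(c):
            # Prefer points with a high predicted score or high uncertainty.
            mu, sigma = gp_posterior(xs, ys, c)
            return mu + kappa * sigma

        x_next = max(remaining, key=ucb)
        xs.append(x_next)
        ys.append(f(x_next))  # the only place the "expensive" function is called
    best = max(range(len(xs)), key=lambda i: ys[i])
    return xs[best], ys[best], xs


def toy_score(x):
    return -(x - 2.0) ** 2  # true maximum at x = 2


grid = [i / 10 for i in range(51)]
best_x, best_y, tried = bayes_opt(toy_score, grid, init_points=[0.0, 2.5, 5.0])
print("tried:", tried)
print("best x:", best_x, "best score:", best_y)
```

Each iteration refits the posterior on all points evaluated so far and uses it to decide where to evaluate next, which is the sense in which earlier parameter settings inform later ones.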

from sklearn.model_selection import cross_val_score
from sklearn.metrics import make_scorer

# Objective function for the optimizer
def rf_cv_lgb(num_leaves, max_depth, bagging_fraction, feature_fraction, min_data_in_leaf, lambda_l2):
    # Build the model; the optimizer proposes floats, so integer parameters are cast with int()
    model_lgb = lgb.LGBMClassifier(boosting_type='gbdt', objective='multiclass', num_class=4,
                                   learning_rate=0.1,
                                   num_leaves=int(num_leaves), max_depth=int(max_depth),
                                   bagging_fraction=round(bagging_fraction, 2),
                                   feature_fraction=round(feature_fraction, 2),
                                   min_data_in_leaf=int(min_data_in_leaf),
                                   lambda_l2=lambda_l2
                                   )

    # Note: micro-averaged F1 equals accuracy for single-label multiclass data,
    # whereas the evaluation in section 2.3 uses macro-averaged F1
    f1 = make_scorer(f1_score, average='micro')
    val = cross_val_score(model_lgb, X_train_split, y_train_split, cv=5, scoring=f1).mean()

    return val
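The objective above scores candidates with `average='micro'`, while the validation metric in section 2.3 uses `average='macro'`. The two can differ noticeably on imbalanced classes, as this small standard-library sketch with made-up labels shows (the helper names are hypothetical):

```python
def class_counts(y_true, y_pred, c):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
    return tp, fp, fn


def macro_f1(y_true, y_pred, labels):
    """Average the per-class F1 scores, so every class counts equally."""
    f1s = []
    for c in labels:
        tp, fp, fn = class_counts(y_true, y_pred, c)
        denom = 2 * tp + fp + fn
        f1s.append(2 * tp / denom if denom else 0.0)
    return sum(f1s) / len(labels)


def micro_f1(y_true, y_pred, labels):
    """Pool the counts over all classes first, so every sample counts equally."""
    tp = fp = fn = 0
    for c in labels:
        a, b, d = class_counts(y_true, y_pred, c)
        tp, fp, fn = tp + a, fp + b, fn + d
    return 2 * tp / (2 * tp + fp + fn)


# Imbalanced toy data: the model always predicts the majority class.
y_true = [0] * 8 + [1] * 2
y_pred = [0] * 10

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
macro = macro_f1(y_true, y_pred, labels=[0, 1])
micro = micro_f1(y_true, y_pred, labels=[0, 1])
print("accuracy:", accuracy, "micro F1:", micro, "macro F1:", macro)
```

Micro F1 coincides with accuracy here, while macro F1 is much lower because the minority class is never predicted. Parameters selected by the micro-averaged objective are therefore not guaranteed to maximize the macro F1 reported elsewhere in this post.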

from bayes_opt import BayesianOptimization

# Define the search range of each parameter to optimize
bayes_lgb = BayesianOptimization(
    rf_cv_lgb,
    {
        'num_leaves': (31, 200),
        'max_depth': (4, 20),
        'bagging_fraction': (0.5, 1),
        'feature_fraction': (0.5, 1),
        'min_data_in_leaf': (10, 100),
        'lambda_l2': (0.01, 0.5)
    }
)

# Run the optimization
bayes_lgb.maximize(n_iter=10)

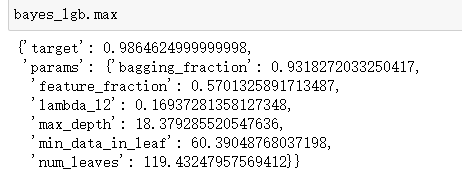

bayes_lgb.max
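`bayes_lgb.max` is a dictionary holding the best score under `'target'` and the corresponding parameter values, all floats, under `'params'`. A minimal sketch of turning such a result into a LightGBM parameter dictionary follows; the numbers in `best` are made up for this example and the helper `to_lgb_params` is hypothetical.

```python
# Illustrative stand-in for bayes_lgb.max; the values are invented.
best = {
    "target": 0.98,
    "params": {
        "num_leaves": 119.3,
        "max_depth": 18.6,
        "bagging_fraction": 0.9312,
        "feature_fraction": 0.5698,
        "min_data_in_leaf": 60.4,
        "lambda_l2": 0.1714,
    },
}

# Parameters that LightGBM expects as integers.
INT_PARAMS = {"num_leaves", "max_depth", "min_data_in_leaf"}

base_params = {
    "learning_rate": 0.1,
    "boosting": "gbdt",
    "objective": "multiclass",
    "num_class": 4,
    "verbose": -1,
}


def to_lgb_params(raw, base):
    """Merge tuned values into base params, truncating integer-valued ones."""
    params = dict(base)
    for name, value in raw.items():
        # int() truncates, matching the casts inside the objective function.
        params[name] = int(value) if name in INT_PARAMS else round(value, 2)
    return params


tuned = to_lgb_params(best["params"], base_params)
print(tuned)
```

Truncating with `int()` rather than rounding keeps the final parameters identical to what the objective function actually evaluated for that point.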

Train with the new parameters:

params = {
    "learning_rate": 0.1,
    "boosting": 'gbdt',
    "lambda_l2": 0.17,  # larger values mean stronger L2 regularization
    "max_depth": 18,
    "num_leaves": 119,
    "bagging_fraction": 0.93,
    "feature_fraction": 0.57,
    "metric": None,
    "objective": "multiclass",
    "num_class": 4,
    "verbose": -1,
    "min_data_in_leaf": 60
}

# Train the model on the training split

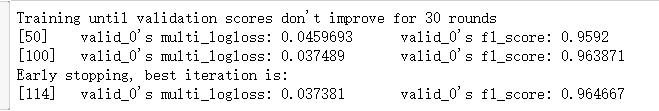

model = lgb.train(params,
                  train_set=train_matrix,
                  valid_sets=valid_matrix,
                  num_boost_round=1000,
                  verbose_eval=50,
                  early_stopping_rounds=30,
                  feval=f1_score_vali)

Compared with the untuned model, the F1 score improves only slightly, but the multiclass log loss drops noticeably.
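A lower log loss with an almost unchanged F1 score is not contradictory: F1 depends only on which class has the highest probability, while log loss also rewards putting more probability on the true class. The sketch below uses invented probabilities for four samples to show the effect.

```python
import math


def multi_logloss(y_true, probs):
    """Mean negative log-probability assigned to the true class."""
    return -sum(math.log(p[t]) for t, p in zip(y_true, probs)) / len(y_true)


def predicted_labels(probs):
    return [row.index(max(row)) for row in probs]


y_true = [0, 1, 2, 3]

# Made-up predictions from a less confident and a more confident model.
before = [
    [0.40, 0.30, 0.20, 0.10],
    [0.30, 0.40, 0.20, 0.10],
    [0.20, 0.20, 0.40, 0.20],
    [0.40, 0.30, 0.20, 0.10],  # wrong: true class 3 gets 0.10
]
after = [
    [0.85, 0.05, 0.05, 0.05],
    [0.05, 0.85, 0.05, 0.05],
    [0.05, 0.05, 0.85, 0.05],
    [0.50, 0.10, 0.10, 0.30],  # still wrong, but true class 3 now gets 0.30
]

print("labels before:", predicted_labels(before))
print("labels after: ", predicted_labels(after))
print("logloss before:", multi_logloss(y_true, before))
print("logloss after: ", multi_logloss(y_true, after))
```

The predicted labels, and therefore any F1 score computed from them, are identical for both sets of probabilities, yet the log loss of the second set is much lower.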

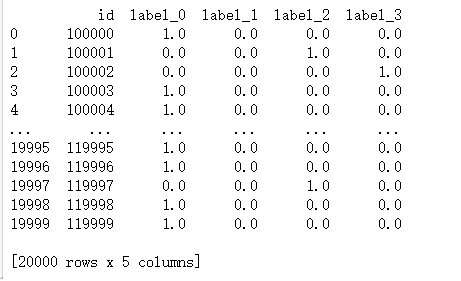

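2.5 Output results

# test_pred is not defined in the original post; it is assumed to hold the model's
# predicted class probabilities for the test set, one column per class
temp = pd.DataFrame(test_pred)
result = pd.read_csv('sample_submit.csv')

# Snap confident probabilities to 0 or 1; values between 0.3 and 0.7 are left unchanged
def ff(x):
    if x < 0.3:
        x = 0
    if x > 0.7:
        x = 1
    return x

result['label_0'] = temp[0].apply(ff)
result['label_1'] = temp[1].apply(ff)
result['label_2'] = temp[2].apply(ff)
result['label_3'] = temp[3].apply(ff)

print(result)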
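The effect of the thresholding function above is easiest to see on a couple of probability rows. The rows below are made up for illustration:

```python
def ff(x):
    """Snap values below 0.3 to 0 and above 0.7 to 1; leave the middle range as is."""
    if x < 0.3:
        return 0
    if x > 0.7:
        return 1
    return x


confident_row = [0.72, 0.20, 0.05, 0.03]
uncertain_row = [0.50, 0.40, 0.06, 0.04]

snapped_confident = [ff(p) for p in confident_row]
snapped_uncertain = [ff(p) for p in uncertain_row]
print(snapped_confident)
print(snapped_uncertain)
```

A confident row becomes a clean one-hot vector, while an uncertain row keeps its middle-range probabilities and no longer sums to 1.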

Final score

About 30 points lower than before tuning.
