Table of Contents

- Before You Start

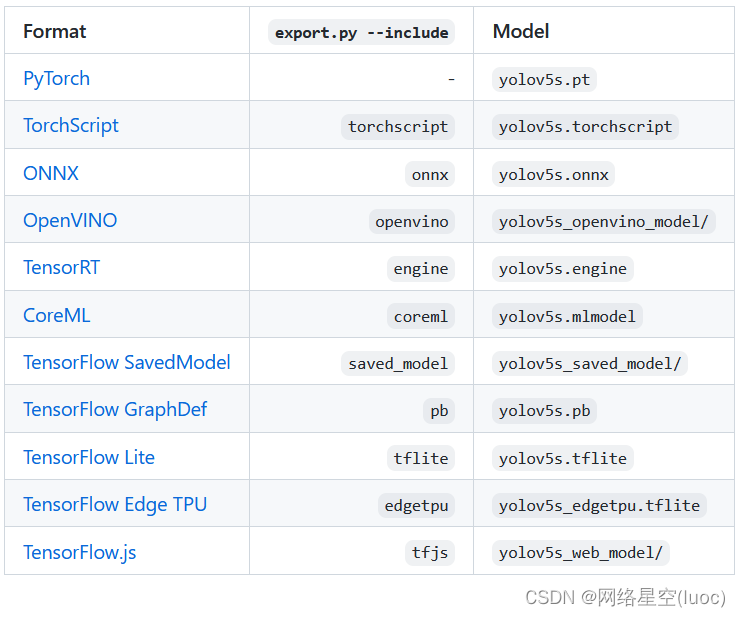

- Formats

- Export a Trained YOLOv5 Model

- Output:

- Example Usage of exported models

- OpenCV DNN C++

- Environments

- Status

This guide explains how to export a trained YOLOv5 model from PyTorch to ONNX and TorchScript formats. UPDATED 18 May 2022.

Before You Start

Clone repo and install requirements.txt in a Python>=3.7.0 environment, including PyTorch>=1.7. Models and datasets download automatically from the latest YOLOv5 release.

git clone https://github.com/ultralytics/yolov5 # clone

cd yolov5

pip install -r requirements.txt # install
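The version requirements above can be checked before exporting. The following is a minimal sketch, not part of the YOLOv5 repository: the helper names `version_tuple`, `meets_minimum` and `check_environment` are hypothetical, and only the minimum versions (Python 3.7, PyTorch 1.7) come from this guide. Comparing numeric tuples matters because a plain string comparison would rank "1.10" below "1.7".

```python
import re
import sys


def version_tuple(version: str) -> tuple:
    """Turn '1.10.0+cu113' into (1, 10, 0), ignoring any build suffix."""
    numeric = re.match(r"\d+(?:\.\d+)*", version)
    if numeric is None:
        raise ValueError(f"unrecognised version string: {version!r}")
    return tuple(int(part) for part in numeric.group().split("."))


def meets_minimum(current: str, minimum: str) -> bool:
    """Return True if `current` is at least `minimum`, compared numerically."""
    cur, req = version_tuple(current), version_tuple(minimum)
    width = max(len(cur), len(req))
    # Pad the shorter tuple with zeros so '1.7' compares like '1.7.0'.
    cur += (0,) * (width - len(cur))
    req += (0,) * (width - len(req))
    return cur >= req


def check_environment() -> dict:
    """Report whether Python and (if installed) PyTorch meet the guide's minimums."""
    report = {"python_ok": sys.version_info >= (3, 7)}
    try:
        import torch  # optional here: may not be installed yet
    except ImportError:
        report["torch_ok"] = None  # PyTorch missing, nothing to compare
    else:
        report["torch_ok"] = meets_minimum(torch.__version__, "1.7")
    return report


print(meets_minimum("1.10.0", "1.7"))  # True: 10 > 7 when compared as integers
```

Calling `check_environment()` after `pip install -r requirements.txt` should report `True` for both entries if the installation matches the requirements stated above.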

For a TensorRT export example (requires GPU), see the appendix section of our Colab notebook.

Formats

YOLOv5 inference is officially supported in 11 formats:

ProTip: TensorRT may be up to 2-5X faster than PyTorch on GPU

ProTip: ONNX and OpenVINO may be up to 2-3X faster than PyTorch on CPU

Export a Trained YOLOv5 Model

This command exports a pretrained YOLOv5s model to TorchScript and ONNX formats. yolov5s.pt is the 'small' model, the second smallest model available. Other options are yolov5n.pt, yolov5m.pt, yolov5l.pt and yolov5x.pt, along with their P6 counterparts, e.g. yolov5s6.pt, or your own custom training checkpoint, e.g. runs/exp/weights/best.pt. For details on all available models please see our README table.

python path/to/export.py --weights yolov5s.pt --include torchscript onnx
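When the export is driven from a script rather than typed by hand, the same command can be assembled in Python. This is a rough sketch under stated assumptions: `build_export_command` and `run_export` are hypothetical helpers, `path/to/export.py` is the placeholder path used above, and only the `--weights` and `--include` flags shown in this guide are used.

```python
import subprocess
import sys
from typing import List, Sequence


def build_export_command(
    weights: str,
    formats: Sequence[str],
    export_script: str = "path/to/export.py",  # placeholder path, as in the guide
) -> List[str]:
    """Assemble the export.py command line as an argument list."""
    if not formats:
        raise ValueError("at least one export format is required")
    # A list (not a single string) avoids shell quoting problems with odd paths.
    return [sys.executable, export_script, "--weights", weights, "--include", *formats]


def run_export(weights: str, formats: Sequence[str], export_script: str = "path/to/export.py") -> None:
    """Run the export and raise CalledProcessError if export.py exits with an error."""
    subprocess.run(build_export_command(weights, formats, export_script), check=True)


cmd = build_export_command("yolov5s.pt", ["torchscript", "onnx"])
print(" ".join(cmd[1:]))  # path/to/export.py --weights yolov5s.pt --include torchscript onnx
```

`run_export` is defined but not called here, since it needs a real path to export.py inside a cloned repository.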

Output:

export: data=data/coco128.yaml, weights=yolov5s.pt, imgsz=[640, 640], batch_size=1, device=cpu, half=False, inplace=False, train=False, optimize=False, int8=False, dynamic=False, simplify=False, opset=12, verbose=False, workspace=4, nms=False, agnostic_nms=False, topk_per_class=100, topk_all=100, iou_thres=0.45, conf_thres=0.25, include=['torchscript', 'onnx']

YOLOv5 v6.0-241-gb17c125 torch 1.10.0 CPU

Fusing layers...

Model Summary: 213 layers, 7225885 parameters, 0 gradients

PyTorch: starting from yolov5s.pt (14.7 MB)

TorchScript: starting export with torch 1.10.0...

TorchScript: export success, saved as yolov5s.torchscript (29.4 MB)

ONNX: starting export with onnx 1.10.2...

ONNX: export success, saved as yolov5s.onnx (29.3 MB)

Export complete (7.63s)

Results saved to /Users/glennjocher/PycharmProjects/yolov5

Detect: python detect.py --weights yolov5s.onnx

PyTorch Hub: model = torch.hub.load('ultralytics/yolov5', 'custom', 'yolov5s.onnx')

Validate: python val.py --weights yolov5s.onnx

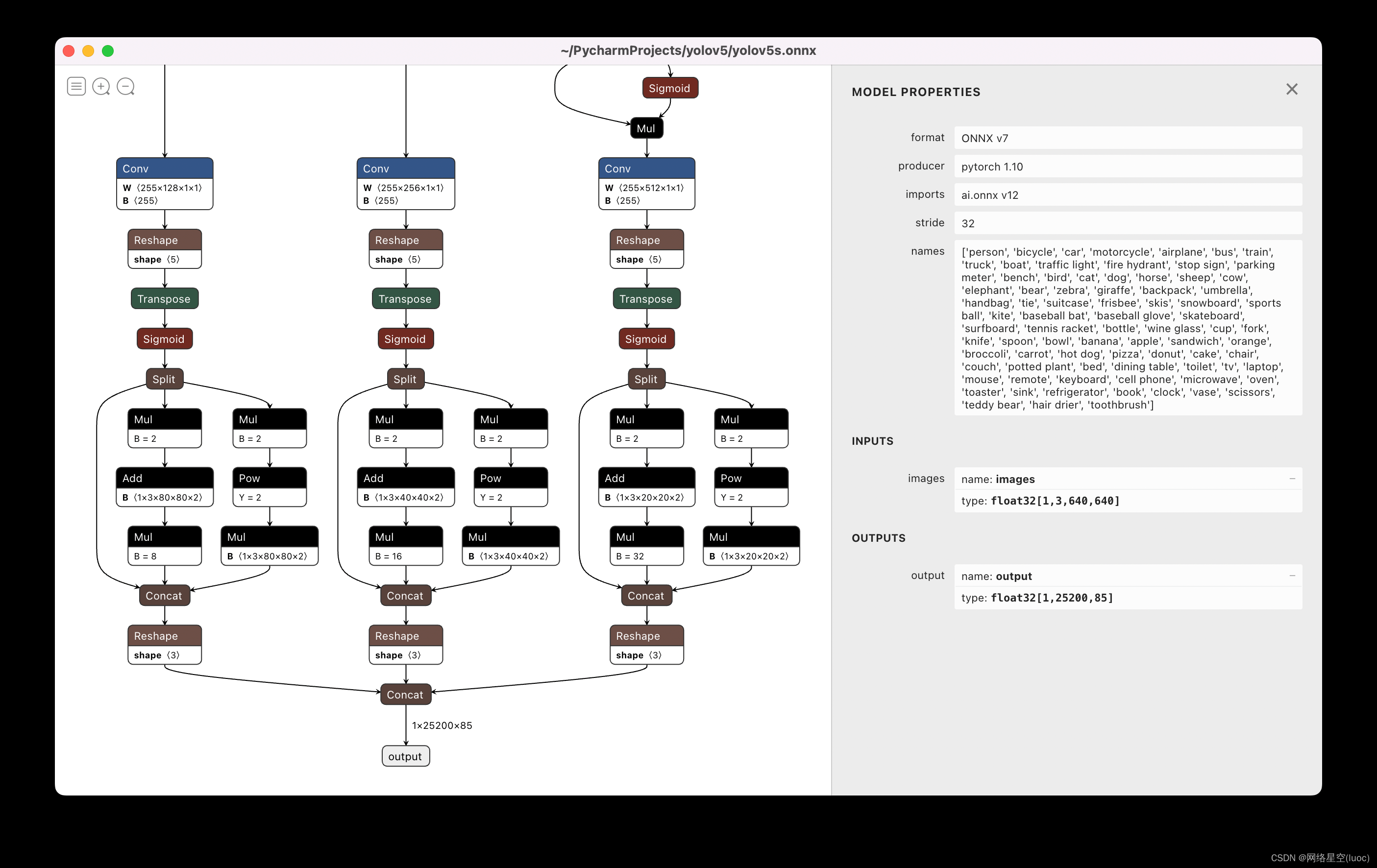

Visualize: https://netron.app

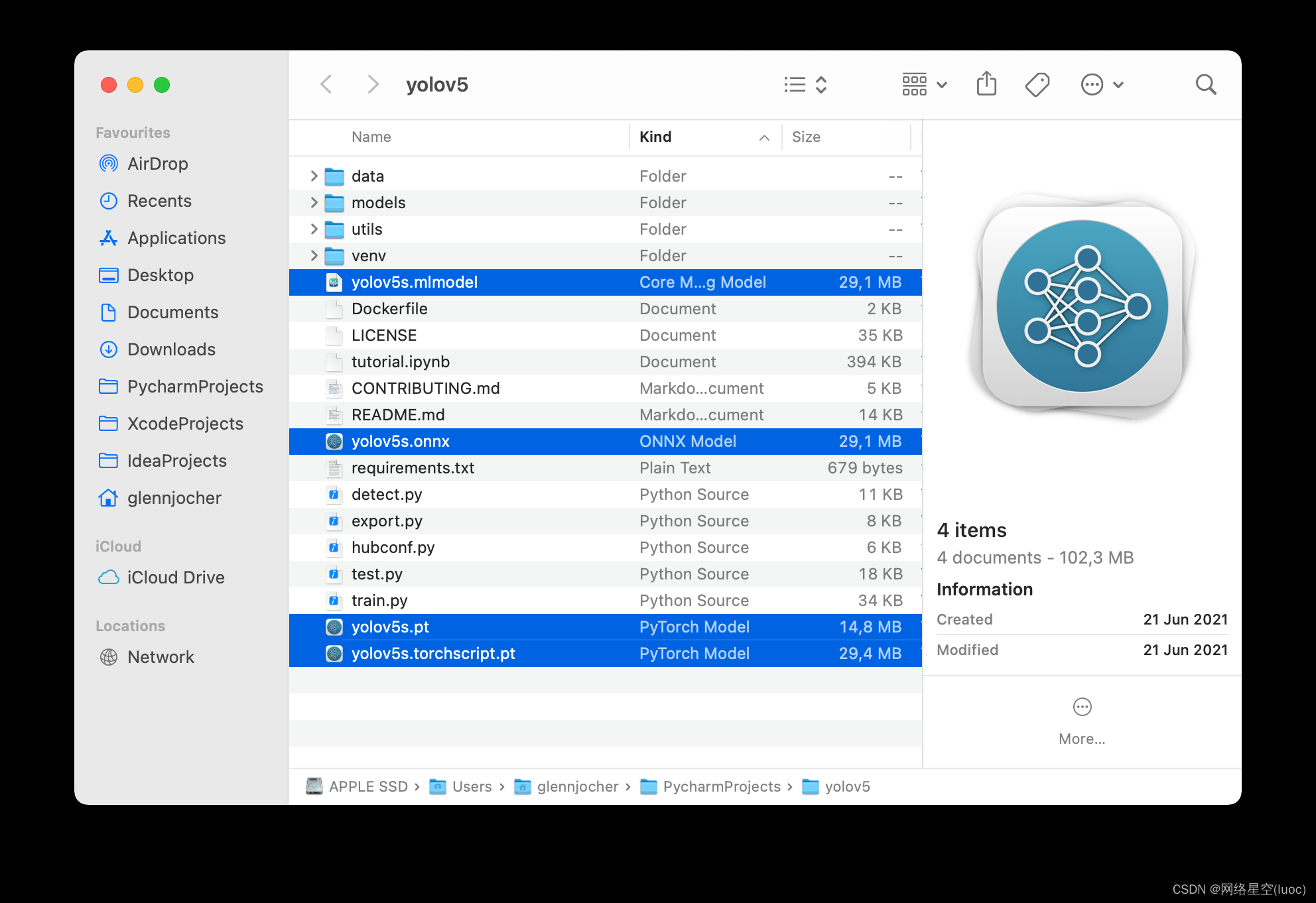

The two exported models (yolov5s.torchscript and yolov5s.onnx) are saved alongside the original PyTorch model.
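Because exports land next to the source weights, their expected locations can be derived from the weights path. The sketch below assumes only the two file suffixes visible in the export log in this guide (`.torchscript` and `.onnx`); the helper names are hypothetical and other formats are deliberately left out.

```python
from pathlib import Path
from typing import Dict, List, Sequence

# Suffixes taken from the export log in this guide; not an exhaustive list.
EXPORT_SUFFIXES: Dict[str, str] = {"torchscript": ".torchscript", "onnx": ".onnx"}


def expected_export_paths(weights: str, formats: Sequence[str]) -> List[Path]:
    """Return where each exported file should appear, next to the weights file."""
    source = Path(weights)
    paths = []
    for fmt in formats:
        if fmt not in EXPORT_SUFFIXES:
            raise ValueError(f"no suffix known in this sketch for format {fmt!r}")
        paths.append(source.with_suffix(EXPORT_SUFFIXES[fmt]))
    return paths


def missing_exports(weights: str, formats: Sequence[str]) -> List[Path]:
    """List expected export files that do not exist on disk."""
    return [p for p in expected_export_paths(weights, formats) if not p.exists()]


paths = expected_export_paths("runs/exp/weights/best.pt", ["torchscript", "onnx"])
print([p.name for p in paths])  # ['best.torchscript', 'best.onnx']
```

An empty list from `missing_exports` after running export.py suggests that every requested file was written.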

Netron Viewer is recommended for visualizing exported models:

Example Usage of exported models

export.py prints usage examples for the last export format indicated. For ONNX, these are the Detect, PyTorch Hub, Validate and Visualize lines at the end of the output above.
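The PyTorch Hub usage line printed by export.py can be wrapped in a small Python helper. This is a sketch rather than official usage: the `torch.hub.load` call is copied from the export output in this guide, while the function names and the image path are hypothetical. It also assumes that PyTorch and a runtime for the exported format (for ONNX, presumably ONNX Runtime) are installed and that the hub repository can be downloaded.

```python
def load_exported_model(weights: str = "yolov5s.onnx"):
    """Load exported weights through PyTorch Hub, as in the 'PyTorch Hub:' usage line."""
    # Imported lazily so this module can be imported without PyTorch installed.
    import torch

    return torch.hub.load("ultralytics/yolov5", "custom", weights)


def detect(image: str, weights: str = "yolov5s.onnx"):
    """Run inference on one image and print a short summary of the detections."""
    model = load_exported_model(weights)
    results = model(image)  # image may be a file path; "path/to/image.jpg" is a placeholder
    results.print()
    return results
```

A call such as `detect("path/to/image.jpg")` would download the hub code on first use, so it is left out of the block itself.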

detect.py runs inference on exported models:

python path/to/detect.py --weights yolov5s.pt # PyTorch

yolov5s.torchscript # TorchScript

yolov5s.onnx # ONNX Runtime or OpenCV DNN with --dnn

yolov5s.xml # OpenVINO

yolov5s.engine # TensorRT

yolov5s.mlmodel # CoreML (macOS only)

yolov5s_saved_model # TensorFlow SavedModel

yolov5s.pb # TensorFlow GraphDef

yolov5s.tflite # TensorFlow Lite

yolov5s_edgetpu.tflite # TensorFlow Edge TPU
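The list above implies that the inference backend is determined by how the weights file name ends. As an illustration only (this is not how detect.py is implemented), the hypothetical helper below maps a weights path to the backend named in the list. The order of checks matters, because `_edgetpu.tflite` also ends in `.tflite`.

```python
from pathlib import PurePath

# (filename ending, backend) pairs copied from the detect.py list in this guide.
# More specific endings come first so they are not shadowed by shorter ones.
_BACKENDS = [
    ("_edgetpu.tflite", "TensorFlow Edge TPU"),
    (".tflite", "TensorFlow Lite"),
    ("_saved_model", "TensorFlow SavedModel"),
    (".pb", "TensorFlow GraphDef"),
    (".mlmodel", "CoreML"),
    (".engine", "TensorRT"),
    (".xml", "OpenVINO"),
    (".onnx", "ONNX Runtime or OpenCV DNN"),
    (".torchscript", "TorchScript"),
    (".pt", "PyTorch"),
]


def backend_for_weights(weights: str) -> str:
    """Return the backend name that matches the ending of a weights path."""
    name = PurePath(weights).name.lower()  # .name also drops a trailing slash
    for ending, backend in _BACKENDS:
        if name.endswith(ending):
            return backend
    raise ValueError(f"unrecognised weights format: {weights!r}")


for example in ("yolov5s.onnx", "yolov5s_edgetpu.tflite", "yolov5s_saved_model/"):
    print(example, "->", backend_for_weights(example))
```

The SavedModel case is a directory rather than a file, which is why the check uses the end of the name instead of a file extension.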

val.py runs validation on exported models:

python path/to/val.py --weights yolov5s.pt # PyTorch

yolov5s.torchscript # TorchScript

yolov5s.onnx # ONNX Runtime or OpenCV DNN with --dnn

yolov5s.xml # OpenVINO

yolov5s.engine # TensorRT

yolov5s.mlmodel # CoreML (macOS only)

yolov5s_saved_model # TensorFlow SavedModel

yolov5s.pb # TensorFlow GraphDef

yolov5s.tflite # TensorFlow Lite

yolov5s_edgetpu.tflite # TensorFlow Edge TPU

OpenCV DNN C++

Examples of YOLOv5 OpenCV DNN C++ inference on exported ONNX models can be found at

https://github.com/Hexmagic/ONNX-yolov5/blob/master/src/test.cpp

https://github.com/doleron/yolov5-opencv-cpp-python

Environments

YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including CUDA/CUDNN, Python and PyTorch preinstalled):

- Google Colab and Kaggle notebooks with free GPU

- Google Cloud Deep Learning VM. See GCP Quickstart Guide

- Amazon Deep Learning AMI. See AWS Quickstart Guide

- Docker Image. See Docker Quickstart Guide

Status

If this badge is green, all YOLOv5 GitHub Actions Continuous Integration (CI) tests are currently passing. CI tests verify correct operation of YOLOv5 training (train.py), validation (val.py), inference (detect.py) and export (export.py) on MacOS, Windows, and Ubuntu every 24 hours and on every commit.
